What is Scrunch AI, and why does it matter for AI visibility?

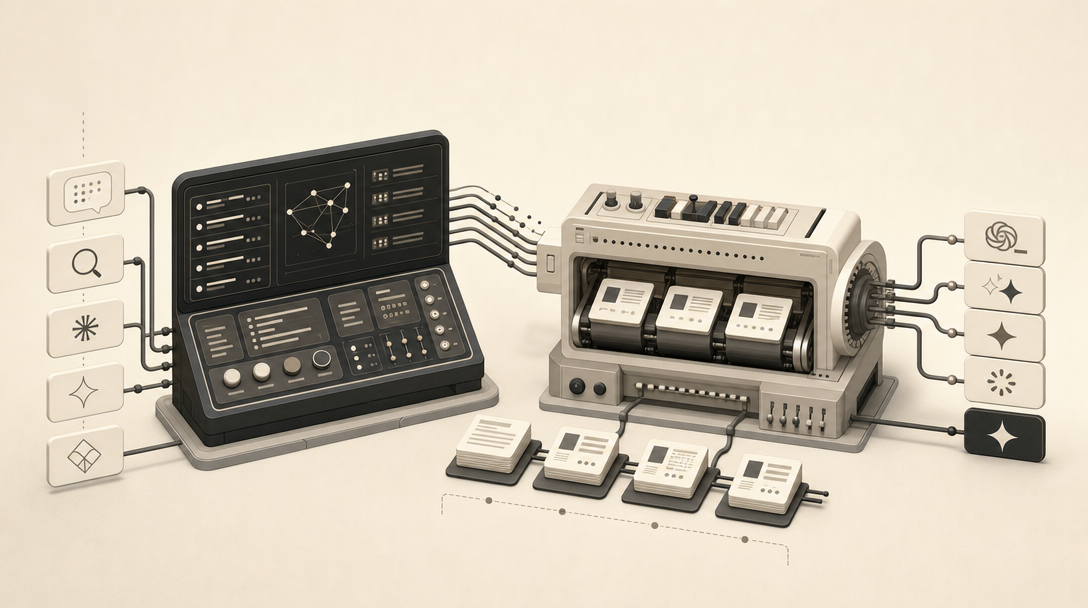

Scrunch AI is an AI Customer Experience Platform that monitors brand presence in AI search, audits and optimizes websites, and delivers content directly to AI agents. According to Scrunch, the product covers four jobs in one workspace: tracking how brands appear inside ChatGPT, ChatGPT Search, Perplexity, Google AI Overviews, Gemini, Claude, Grok, and Meta AI; auditing the technical signals AI crawlers see; recommending optimizations; and serving LLM-friendly content through the Agent Experience Platform.

It matters because the gap between "ranking in Google" and "getting cited by ChatGPT" is now a measurable category of marketing work. Before a content team commits budget to AEO, GEO, and LLMO execution, it needs to see which prompts trigger a brand mention, which competitors own the citations, and whether AI bots can even reach the site. Scrunch is built for that diagnostic layer.

Scrunch is the command center for understanding how a brand appears in AI answers; it is not where citation-ready articles get written and shipped. That distinction matters when you're choosing tools. Scrunch operated in beta through 2024 before announcing general availability in March 2025 (Source: Scrunch), which reconciles the two dates that appear in third-party coverage. The company says Fortune 500 enterprises including Dell, Lenovo, and Akamai use it to monitor millions of prompts and hundreds of thousands of pages (Source: Scrunch). What the platform does well is observation. What it leaves to the rest of your stack is execution — drafting, publishing, and refreshing the content the answer engines will actually quote.

What are Scrunch's four core products?

Scrunch's platform is organized around four products: monitoring, auditing, optimization, and content delivery through the Agent Experience Platform (Source: Scrunch FAQ). Each maps to a different question a marketing team asks once they realize ChatGPT and Perplexity are pulling traffic away from blue links.

| Product | What it does | Operator question it answers |
|---|---|---|
| Monitoring | Tracks prompts, responses, rankings, citations, and share of voice across AI engines | "Where do we appear, and against whom?" |
| Auditing | Surfaces site issues that block AI crawlers and degrade AI extractability | "Can GPTBot, ClaudeBot, and PerplexityBot actually read us?" |
| Optimization | Recommends content and structural changes based on monitoring and audit signals | "What should we change to earn more citations?" |
| Agent Experience Platform (AXP) | Auto-detects AI agent traffic and serves bots a clean, LLM-friendly version of pages | "How do we deliver content optimized for machine readers?" |
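
The auditing question in the table ("Can GPTBot, ClaudeBot, and PerplexityBot actually read us?") can be partly answered before buying any tool. The sketch below uses Python's standard `urllib.robotparser` to check whether a robots.txt file permits each bot to fetch a URL. It is not how Scrunch audits sites; the robots.txt body, the `example.com` URL, and the `bot_access` helper are made up for illustration, and a real audit would also cover timeouts, rendering, and server-side blocks.

```python
from urllib.robotparser import RobotFileParser

# Bot names taken from the article; everything else here is illustrative.
AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

# Hypothetical robots.txt content, inlined so the example needs no network.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""


def bot_access(robots_txt: str, url: str, bots=AI_BOTS) -> dict[str, bool]:
    """Return {bot_name: allowed} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in bots}


report = bot_access(SAMPLE_ROBOTS_TXT, "https://example.com/blog/post")
for bot, allowed in report.items():
    print(f"{bot}: {'allowed' if allowed else 'blocked'}")
```

A robots.txt pass only shows that a bot is permitted to crawl, not that it succeeds, which is why crawl-log and bot-traffic analysis still matter.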

The enterprise positioning around these products is concrete: SOC 2 Type II compliance, support for multiple brands, multiple domains, multiple regions, and multiple languages, plus a Data API for piping signals into internal systems and dashboards (Source: Scrunch Platform). Scrunch's enterprise page says many enterprise customers are deployed on day one, which fits the command-center framing — observation tooling tends to onboard faster than editorial systems.

How does Scrunch's Agent Experience Platform serve AI-optimized content directly to LLMs?

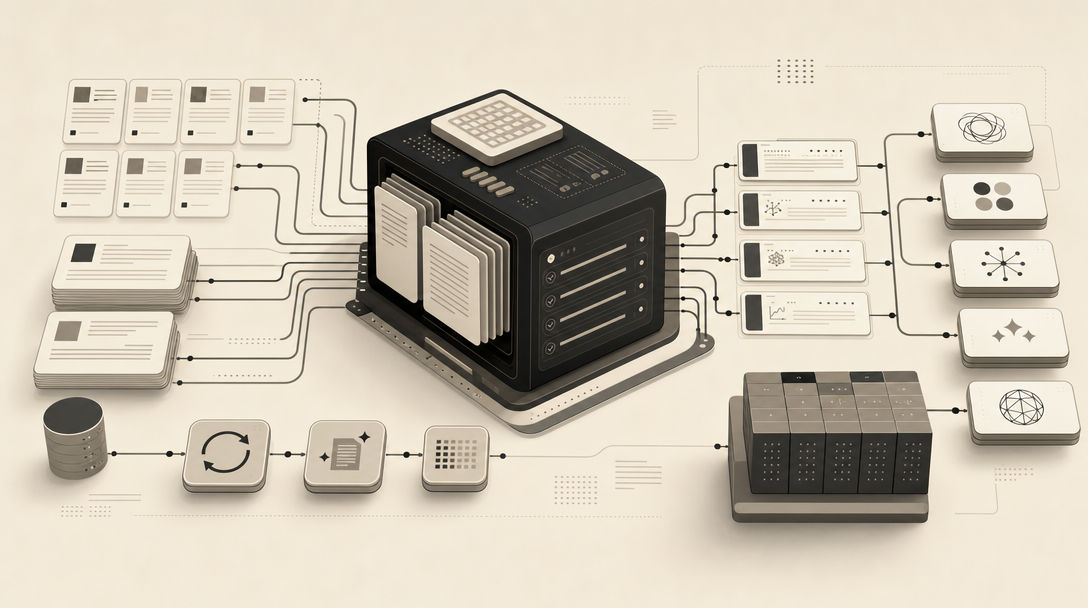

The Agent Experience Platform (AXP) is Scrunch's content delivery layer. It auto-detects AI agent traffic and serves bots a clean, structured, LLM-friendly version of a webpage without changing what human visitors see (Source: Scrunch). The mechanism is bot-aware delivery: when GPTBot, ClaudeBot, PerplexityBot, or another AI agent requests a URL, AXP returns a version optimized for machine reading.
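
To make "bot-aware delivery" concrete, here is a minimal sketch of the general idea: inspect the request's User-Agent header and choose which representation to return. This is an assumption about the pattern, not Scrunch's documented implementation; the token list, the `is_ai_agent` and `select_variant` helpers, and the sample user-agent strings are all hypothetical. User-Agent headers can be spoofed, so production systems typically add further verification.

```python
# Lowercased substrings to look for; the bot names come from the article.
AI_AGENT_TOKENS = ("gptbot", "claudebot", "perplexitybot")


def is_ai_agent(user_agent: str) -> bool:
    """True if the User-Agent string contains a known AI crawler token."""
    ua = user_agent.lower()
    return any(token in ua for token in AI_AGENT_TOKENS)


def select_variant(user_agent: str, human_html: str, llm_markdown: str) -> tuple[str, str]:
    """Return (content_type, body) for the request's User-Agent."""
    if is_ai_agent(user_agent):
        return "text/markdown; charset=utf-8", llm_markdown
    return "text/html; charset=utf-8", human_html


# Illustrative user-agent strings, not copied from any vendor's documentation.
bot_ua = "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"
browser_ua = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"

print(select_variant(bot_ua, "<h1>Pricing</h1>", "# Pricing")[0])
print(select_variant(browser_ua, "<h1>Pricing</h1>", "# Pricing")[0])
```

Human visitors keep receiving the unchanged HTML, which matches the "without changing what human visitors see" claim above.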

The documented workflow has four steps (Source: Scrunch How-Tos):

- Create the AI-optimized webpage content.

- Enter the URL path AXP should match.

- Upload markdown or HTML, or write the content directly.

- Deploy the configuration.

The appeal is obvious: you can ship structured, extractable content for AI crawlers without touching the human-facing CMS template. The trade-offs are less obvious and worth naming. The Scrunch sources reviewed here leave a few governance questions unresolved, and every editorial team will eventually ask them:

- Canonical parity. If the AXP version diverges from the human page, which is the source of truth for fact updates?

- CMS ownership. Does AXP content sit alongside your CMS, or replace parts of it?

- Refresh cadence. Who updates the AXP version when the canonical article changes?

- Cloaking risk. Serving different content to bots and users has historically been a search-policy red flag in classic SEO; the AI-search equivalent is still being defined.
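
One way a team might watch the canonical-parity and cloaking questions is to compare the text of the human page with the bot-facing version on a schedule. The sketch below is a rough illustration using Python's standard `difflib`; the `normalize` and `parity_ratio` helpers and the sample pages are hypothetical, the tag stripping is deliberately crude, and a similarity score is only a prompt for human review, not a policy verdict.

```python
import difflib
import re


def normalize(text: str) -> str:
    """Strip HTML tags and common markdown markers, collapse whitespace, lowercase."""
    text = re.sub(r"<[^>]+>", " ", text)    # crude tag removal, fine for a sketch
    text = re.sub(r"[#*_`>\-]", " ", text)  # drop markdown punctuation
    return re.sub(r"\s+", " ", text).strip().lower()


def parity_ratio(human_page: str, bot_page: str) -> float:
    """Similarity in [0, 1] between the two versions after normalization."""
    matcher = difflib.SequenceMatcher(None, normalize(human_page), normalize(bot_page))
    return matcher.ratio()


# Made-up example content.
human = "<h1>Pricing</h1><p>Plans start at 10 seats.</p>"
bot_in_sync = "# Pricing\n\nPlans start at 10 seats."
bot_stale = "# Pricing\n\nPlans start at 25 seats."

print(parity_ratio(human, bot_in_sync))
print(parity_ratio(human, bot_stale))
```

A score below whatever threshold the team chooses would flag the URL for a fact check against the canonical article.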

AXP is a delivery mechanism, not an editorial pipeline — it determines how content reaches LLMs but not how that content gets researched, written, or kept current. Teams adopting AXP usually still need a separate workflow for producing the underlying material.

Does Scrunch create citation-ready content, or mainly monitor, audit, optimize, and deliver it?

Scrunch's strength is visibility monitoring, audit signals, optimization guidance, competitor analysis, and AXP delivery. The Scrunch sources reviewed here say little about a full editorial pipeline for researching, drafting, and publishing citation-ready AEO, GEO, LLMO, and SEO articles. This isn't a criticism of the product; it's a category boundary.

Third-party coverage of Scrunch alternatives makes the same point. According to Orchly.ai, tracking AI visibility does not move the needle unless it connects to content creation, optimization, and updates. According to GetMint, Scrunch AI is strong for technical infrastructure and AXP, but often stops short of helping teams write the actual answers that win citations.

That gap is where the operating model matters. A monitoring platform tells you that a competitor owns the citation for "best [category] for enterprise" across ChatGPT, Perplexity, and Google AI Overviews. It can audit your page and tell you the schema is weak, the answer isn't extractable in the first paragraph, and GPTBot timed out twice last week. What it doesn't do is sit down and produce the next forty articles your taxonomy needs, each with a research-grounded direct answer, FAQ block, JSON-LD, and a markdown mirror — on a schedule, across one site or across a hundred client sites.
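
The "competitor owns the citation" signal described above can be approximated as a simple share-of-voice calculation over sampled AI responses. This is a sketch under stated assumptions: the sources do not define how Scrunch computes share of voice, so the definition here (fraction of sampled responses that cite a brand's domain), the `share_of_voice` helper, and the sample data are all hypothetical.

```python
from collections import Counter


def share_of_voice(responses: list[dict], brands: list[str]) -> dict[str, float]:
    """Fraction of sampled responses whose cited domains include each brand."""
    counts = Counter()
    for response in responses:
        cited = set(response["cited_domains"])
        for brand in brands:
            if brand in cited:
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Made-up sample: one prompt run four times across engines.
sampled = [
    {"engine": "ChatGPT", "cited_domains": ["ours.example", "rival.example"]},
    {"engine": "Perplexity", "cited_domains": ["rival.example"]},
    {"engine": "Google AI Overviews", "cited_domains": ["rival.example", "other.example"]},
    {"engine": "ChatGPT", "cited_domains": ["ours.example"]},
]

sov = share_of_voice(sampled, ["ours.example", "rival.example"])
print(sov)
```

The number says who is cited, not why; closing the gap still requires producing the articles.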

What is the practical difference between an AI visibility command center and an AI visibility engine?

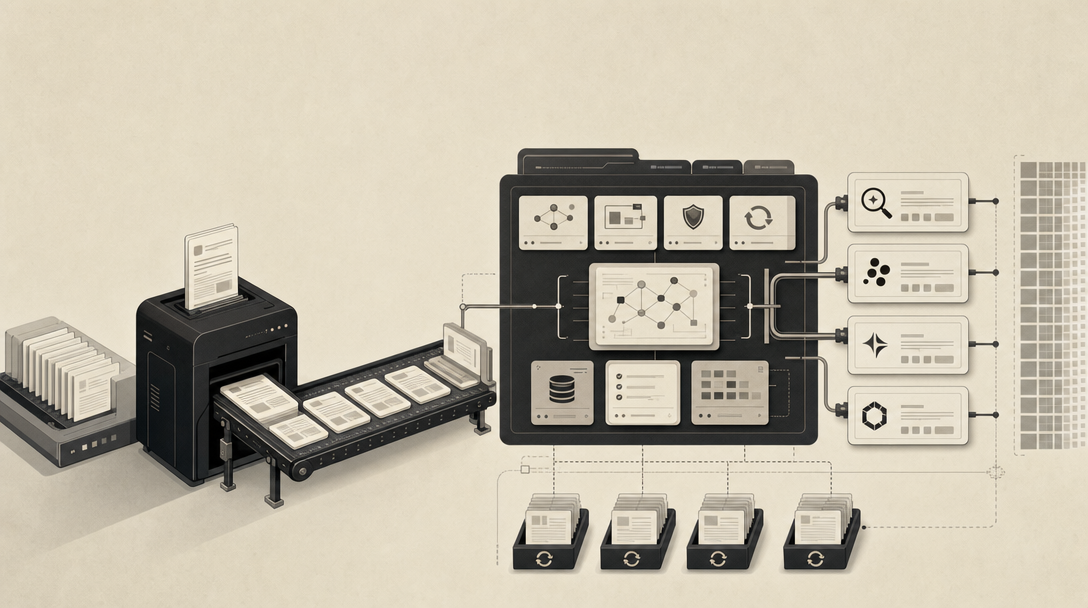

A command center observes; an engine produces. Scrunch is the command center: it tells you which prompts matter, which competitors get cited, where AI bots break, and how to deliver structured content through AXP. Mentionwell is the engine: it turns those signals into shipped, citation-shaped articles on a recurring cadence.

The two roles are complementary, not redundant. A typical operating model looks like this:

| Layer | Role | Example output |
|---|---|---|
| Visibility command center (Scrunch) | Monitor prompts, citations, competitors, bot access; recommend optimizations; deliver to bots via AXP | Weekly share-of-voice report; bot crawl errors flagged; AXP-served markdown for top URLs |
| Editorial execution engine (Mentionwell) | Scan domain, build site profile, run research → outline → draft → editorial critique → metadata → FAQ → embeddings → images → publish → refresh | New AEO/GEO/LLMO/SEO articles into WordPress, Webflow, Ghost, Shopify, Notion, or via public read-only API |

Mentionwell describes itself as a full content engine — not a wrapper — where every article runs through an 11-stage pipeline and every site receives its own brand profile, taxonomy, image style, and reader API (Source: Mentionwell Features). Every article ships with four optimization layers: AEO, GEO, LLMO, and classic SEO. Outputs include FAQPage and Article JSON-LD, RSS, JSON Feed, a sitemap, per-article markdown mirrors, and a site-wide llms.txt (Source: Mentionwell).
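
For readers unfamiliar with the FAQPage JSON-LD mentioned above, the sketch below builds one from question-and-answer pairs using the schema.org `FAQPage`, `Question`, and `Answer` types. It illustrates the markup shape only and is not Mentionwell's actual output; the `faq_jsonld` helper and the sample question are hypothetical.

```python
import json


def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


# Made-up example content.
markup = faq_jsonld([
    ("What is AXP?", "Scrunch's content delivery layer for AI agents."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The resulting script tag is embedded in the page HTML so that crawlers can read the question-and-answer structure without parsing the visible layout.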

Some context on the terminology itself: Generative Engine Optimization was coined in a 2023 Princeton paper (Source: Mentionwell). AEO, GEO, LLMO, and SEO are distinct optimization layers, not interchangeable labels for "AI SEO" — and a real publishing workflow has to account for all four.

What are the key differences between SEO vs. AEO, GEO, and LLMO workflows?

Each acronym targets a different retrieval surface, and the workflows differ accordingly:

- SEO still governs crawlability, indexation, and search demand — the foundation that everything else assumes is working.

- AEO (Answer Engine Optimization) shapes content so answer engines can extract a clean response. Direct-answer openings, FAQ blocks, and schema do the heavy lifting. See "What Is AEO in 2026?"

- GEO (Generative Engine Optimization) supports citation in generative answers from ChatGPT, Perplexity, Gemini, and Claude. Entity clarity, attributed statistics, and structured comparisons matter most. See "What Is GEO in 2026?"

- LLMO (Large Language Model Optimization) strengthens the entity and context signals that influence model-driven retrieval and recommendation. See "What Is LLMO in 2026?"

Practical comparison:

| Layer | Primary surface | Key page signals | Primary workflow |
|---|---|---|---|
| SEO | Google, Bing organic | Title, meta, internal links, backlinks, Core Web Vitals | Keyword research → page → indexation |
| AEO | Google AI Overviews, featured answers | Direct-answer openings, FAQ schema, extractable structure | Question research → answer-shaped page |
| GEO | ChatGPT, Perplexity, Claude, Gemini citations | Entity density, attributed stats, citable phrases | Topic synthesis → research-grounded article |
| LLMO | Model-internal recommendations and recall | Entity consistency, brand co-occurrence, llms.txt | Topical authority + retrieval signals |

For deeper dives, see "AEO vs GEO vs LLMO: Which Workflow Fits Your Team?" and "What Is LLMs.txt in 2026?" Platform-specific guides exist for ChatGPT, Claude, Gemini, Perplexity, Google AI Overviews, and Microsoft Copilot.
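
Since llms.txt appears among the LLMO signals, here is a minimal sketch of generating one. It assumes the layout described in the public llms.txt proposal (an H1 title, a blockquote summary, then H2 sections containing markdown link lists); the `build_llms_txt` helper, the site name, and the URLs are hypothetical, and how much weight any given model gives the file is not established by the sources here.

```python
def build_llms_txt(site_name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, H2 link sections."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Made-up site data.
llms_txt = build_llms_txt(
    "Example Co",
    "Example Co sells scheduling software for clinics.",
    {
        "Docs": [
            ("Pricing", "https://example.com/pricing.md", "Plans and limits"),
            ("API", "https://example.com/api.md", "REST endpoints"),
        ],
    },
)
print(llms_txt)
```

The file is typically served from the site root alongside per-article markdown mirrors, so the links can point at the machine-readable versions.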

How should you choose between Scrunch, Mentionwell, or both?

Choose based on which bottleneck is binding. If the bottleneck is seeing what's happening in AI search, you need a command center. If the bottleneck is producing the content that feeds it, you need an engine.

Choose Scrunch when:

- The team needs prompt tracking, citation analysis, and competitor visibility across ChatGPT, Perplexity, Gemini, Claude, Grok, and Meta AI.

- AI bot traffic analysis and crawl audits are central — GPTBot, ClaudeBot, PerplexityBot access matters operationally.

- Enterprise reporting, persona segmentation, and geography breakdowns are required.

- The content delivery model fits AXP — serving bot-specific LLM-friendly versions of pages.

Choose Mentionwell when:

- The team needs a repeatable, citation-shaped publishing workflow into WordPress, Webflow, Ghost, Shopify, Notion, or via public API.

- Outputs need to include FAQPage and Article JSON-LD, RSS, JSON Feed, sitemap, markdown mirrors, and llms.txt.

- The operating model spans one site or hundreds — agencies, multi-brand portfolios, programmatic SEO at scale with editorial controls.

- Archive refreshes and brand-consistent voice across domains are required.

Use both when the team needs signal discovery and ongoing execution — Scrunch surfaces the prompt gaps, citation losses, and bot issues; Mentionwell ships the articles, schema, and llms.txt that close them.

A note on evidence boundaries. Scrunch's enterprise adoption claims are the vendor's own: Fortune 500 customers including Dell, Lenovo, and Akamai, monitoring millions of prompts and hundreds of thousands of pages (Source: Scrunch). Current official Scrunch pricing and any quantified lift in citations, traffic, conversions, or revenue are not established in the publicly available sources reviewed here. Confirm both directly with the vendor before committing.

If your next move is shipping the content layer that turns visibility insights into citations, Mentionwell ships research-grounded articles with AEO, GEO, LLMO, and SEO built into every draft, into your existing CMS or headless stack.

Sources

- Scrunch AI Review: Can it compete with serious AI visibility tools? (www.tryprofound.com)

- FAQs - Scrunch AI (scrunch.com)

- Scrunch AI Review: Is It Worth the Investment? | Rankability Blog (www.rankability.com)

- My Scrunch AI Visibility Review (SaaS and B2B Tech Focus) (generatemore.ai)