What Is AEO, and Why Does It Matter in 2026?

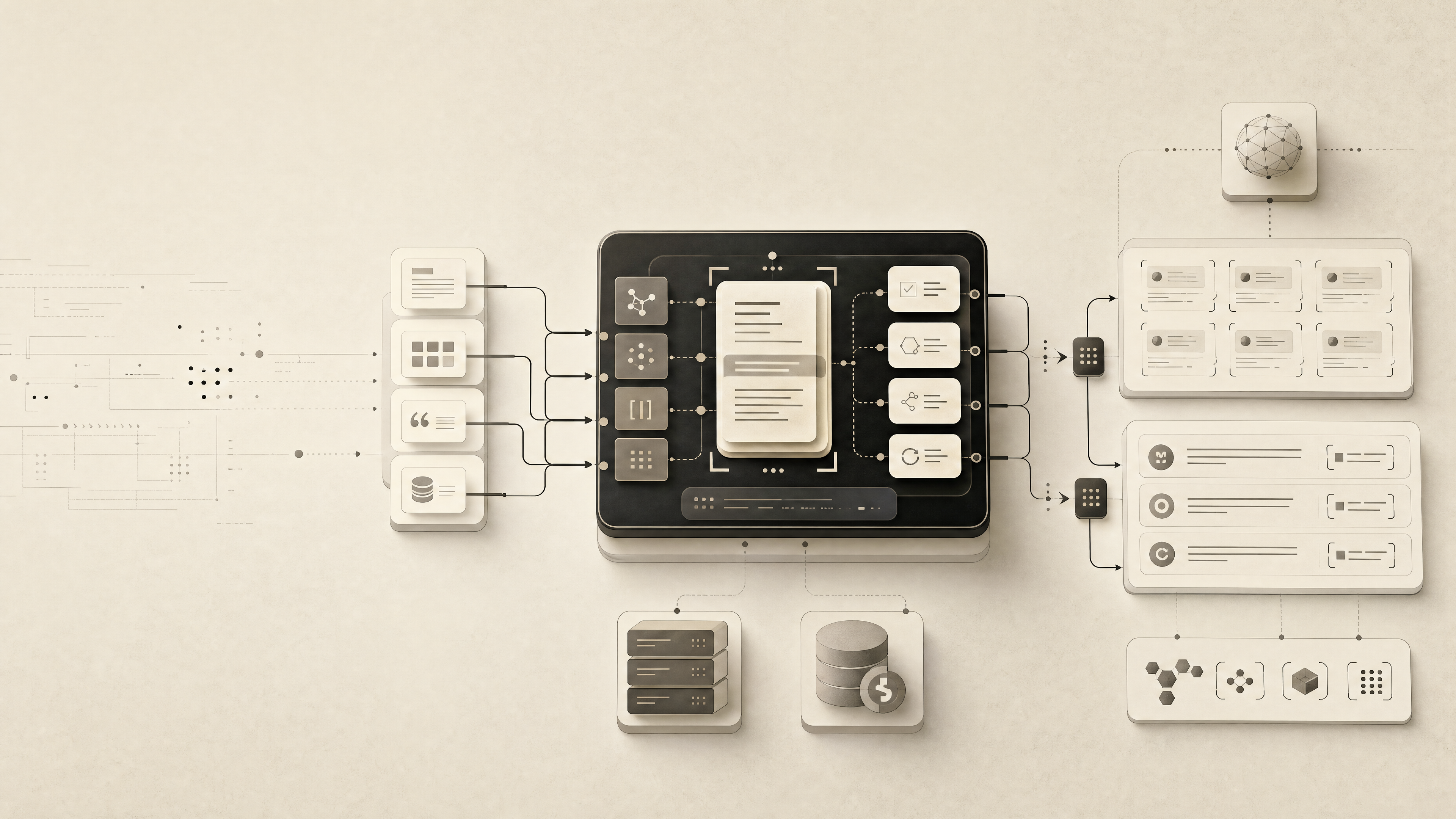

Answer Engine Optimization (AEO) is the practice of structuring content so AI answer engines — ChatGPT, Google AI Overviews, Gemini, Perplexity, Claude, and Microsoft Copilot — can understand, summarize, quote, and cite it inside their generated responses. It is the answer-extraction layer that sits inside a broader publishing system covering SEO, AEO, GEO, and LLMO.

The shift that makes AEO urgent is a change in the result itself. Traditional search returns a ranked list of links. Answer engines return a synthesized paragraph, often with a small set of cited sources attached. According to HubSpot's Consumer Trends Report, 72% of consumers plan to use AI for shopping more frequently, which compresses the old click-through journey into a single generated response. According to Gartner, traditional search engine volume will drop 25% by 2026 as users migrate to AI chatbots and virtual agents (Source: LevelUp, citing Gartner).

The operational risk is direct: if your content is not structured for answer engines, your brand may simply not appear in the answer, and inaccurate narratives sourced from pages you do not control can surface instead (Source: HubSpot). Ranking well in Google does not guarantee citation in an AI answer — answers that are buried, thin, or not clearly quotable get skipped (Source: LevelUp).

AEO is not a replacement for SEO. It is an additional editorial and technical layer that makes already-indexed content extractable, quotable, and attributable. In 2026, treating AEO as optional is the same operational bet as treating mobile-responsive design as optional in 2015 — the distribution surface has already moved.

Which AEO Meaning Are We Talking About?

This article covers AEO as Answer Engine Optimization — the marketing and content discipline. It is not about the two other major uses of the acronym that appear in general search results.

The U.S. Energy Information Administration uses AEO for its Annual Energy Outlook, a long-term projection of U.S. energy production, consumption, and prices produced by the National Energy Modeling System. The European Commission uses AEO for Authorised Economic Operator, a customs partnership programme between customs authorities and economic operators that facilitates legitimate trade and ties into supply-chain security frameworks such as the World Customs Organization's WCO SAFE Framework of Standards.

When marketers, SEO leads, and content operators say "AEO" in 2026, they mean Answer Engine Optimization — the practice of earning citations inside AI-generated answers. The rest of this guide uses that definition exclusively.

What Is an Answer Engine?

An answer engine is an AI-powered system that delivers a direct response instead of a list of links, by retrieving content from the web or a trained corpus, extracting relevant passages, synthesizing a response, and often citing a small set of sources (Source: Rankgarage).

The 2026 answer-engine set spans several product categories:

| Surface | Platform / Owner | Primary use case |
|---|---|---|
| ChatGPT | OpenAI | Conversational answers, research, product recommendations |
| Google AI Overviews | Google Search | Zero-click summaries at the top of SERPs |
| Google AI Mode | Google Search | Full conversational search surface |
| Gemini | Google | Standalone assistant and embedded Workspace answers |
| Perplexity | Perplexity AI | Citation-heavy research answers |
| Copilot | Microsoft | Web search answers and Work-mode enterprise retrieval |
| Claude | Anthropic | Reasoning-heavy answers, Projects, Connectors |
| Voice | Siri, Alexa, Google Assistant | Short spoken answers, often one source |

According to RYGR, ChatGPT reached 900 million monthly users and Gemini surpassed 650 million in late 2025, while Google Search remained dominant with over 4 billion users. Each of these surfaces selects and cites sources differently, which is why treating "AEO" as a single-platform tactic under-delivers.

For a platform-by-platform breakdown, see our guide on [How to Show Up in ChatGPT in 2026](/how-to-show-up-in-chatgpt-in-2026).

How Does Answer Engine Optimization Differ from SEO?

SEO helps people find your website. AEO helps answer engines understand your content well enough to use it inside the answer itself (Source: Wingman Planning). The two are complementary, not competing.

SEO is the discovery infrastructure: crawlability, indexability, site structure, keyword targeting, internal linking, backlinks, and technical performance. Without it, AI models that rely on live web-search indexes — which is most of them — generally cannot retrieve or cite your content (Source: Rankgarage). AEO layers answer-first formatting, entity clarity, schema, and quotable passages on top of that foundation so retrieved content is actually usable in a synthesized response.

Here is the practical split:

| Dimension | SEO | AEO |
|---|---|---|
| Goal | Rank in results | Be cited inside the answer |
| Unit of success | Position, clicks | Citation, mention, accurate paraphrase |
| Content format | Keyword-targeted pages | Question-led, answer-first passages |
| Technical focus | Crawl, index, Core Web Vitals | Schema, semantic markup, structured data |
| Authority signals | Backlinks, E-E-A-T | Backlinks, E-E-A-T, earned media, entity consistency |
| Measurement | SERP rankings, organic traffic | Citation presence, answer accuracy, AI share of voice |

Ranking in Google is a necessary but insufficient condition for AEO — pages can rank on page one and still be skipped by AI answers because the answer itself is buried, padded, or not clearly quotable (Source: LevelUp). The editorial work of AEO is to surface the answer, name the entity, and make the passage extractable in 1–2 sentences.

Keyword-rank thinking also loses leverage here. Answer-engine results are paragraphs, and according to Webflow, legacy Google-style queries average 4 words while conversational queries average 23 words. That shifts the unit of optimization from the keyword to the passage.

How AEO Differs from GEO, LLMO, and SEO

The cleanest taxonomy for operator teams: SEO is discovery infrastructure, AEO is answer extraction and citation readiness, GEO is how a brand appears across generative answer surfaces, and LLMO is optimization for how language models retrieve, interpret, and respond.

| Layer | What it optimizes for | Primary artifacts |
|---|---|---|
| SEO | Being crawled, indexed, and ranked | Site structure, internal links, backlinks, Core Web Vitals |
| AEO | Being extracted and cited in answers | Direct answers, schema, question-led sections, entity clarity |
| GEO | Brand presence across generative surfaces | Third-party mentions, comparison coverage, review presence, narrative consistency |
| LLMO | Model retrieval and response behavior | Canonical entity language, authoritative source coverage, freshness, semantic signals |

Rankgarage frames it compactly: SEO gets content discovered, AEO makes it citable, GEO manages how a brand shows up across AI channels. LLMO goes one level deeper into the retrieval and reasoning behavior of the model itself — the signals that influence whether a language model pulls your passage, rephrases it accurately, and attributes it to your brand.

These are not competing workflows. They are stacked layers inside the same publishing system. For a deeper operating-model breakdown, see [AEO vs GEO vs LLMO: Which Workflow Fits Your Team?](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team).

How Do AI Answer Engines Choose and Cite Sources?

Answer engines follow a repeatable selection workflow: crawl or retrieve content, identify entities, extract concise passages, synthesize a response, and cite a limited set of trusted sources when the platform supports citations (Source: Rankgarage).

The signals that move content into that small cited set:

- Crawlability and indexability. Most answer engines rely on live web-search indexes — Google's for Gemini and AI Overviews, Bing's for ChatGPT and Copilot. Content that is not indexed generally cannot be cited (Source: Rankgarage).

- Structured, extractable content. Evergreen Media notes that AI systems favor clearly structured content with concise answers and logical flow.

- E-E-A-T and authority signals. Experience, expertise, authoritativeness, and trustworthiness increase citation likelihood (Source: Evergreen Media).

- Earned media and third-party coverage. According to Muck Rack's Generative Pulse 2025 report, 82% of links cited by AI are from earned media, and about 25% come from journalism (Source: RYGR citing Muck Rack).

- Entity clarity. Consistent brand and product naming across owned and earned surfaces makes it easier for models to resolve your brand as a distinct entity.

- Freshness. Muck Rack found that half of all AI citations come from content published within the last 11 months, with the highest spike in the first seven days after publication (Source: RYGR citing Muck Rack).

- Platform variance. ChatGPT, Google AI Overviews, Perplexity, Gemini, Copilot, and Claude have different source preferences (Source: Evergreen Media).

The operational takeaway: owned-content structure and earned-media coverage are both citation inputs, and neither one alone is sufficient. Webflow also reports that 73% of Google's top answers use schema while 88% of sites do not have schema implemented, a large and easily closable gap for teams that do the markup work.

What Makes Content Easy for AI Systems to Quote or Cite?

The editorial rule is blunt: every important page should contain a 1–2 sentence direct answer, placed near the top, that can be lifted verbatim into a generated response without losing meaning. Everything else — structure, schema, authority — supports that core passage.

Page-level requirements for B2B SaaS teams:

- Answer-first openings. Open each section with the direct answer in 1–2 sentences. Context, caveats, and examples come after.

- Question-led headings. Structure H2s as questions users actually ask: "What is X?", "How does X differ from Y?", "When should I use X?".

- Concise terminology definitions. Define the term in one sentence. LLMs lift definitional sentences directly.

- Comparison blocks. Use tables for X vs. Y, pricing tiers, integration options, and product-category breakdowns. They extract cleanly.

- Use-case and integration pages. "X for [role]" and "X + [tool]" pages match the comparative and recommendation queries users bring to ChatGPT and Perplexity.

- Schema and semantic markup. Article, FAQPage, Product, Organization, and BreadcrumbList schema give answer engines structured entities.

- Crawlability and indexability. If the page is not in Google's or Bing's index, it will not be retrieved.

- Evidence-backed claims. Attribute every number to a named source. Unnamed statistics are routinely ignored by LLMs.

- Consistent entity language. Use the same product name, category term, and brand descriptors across every page and every earned-media mention.

The failure mode to avoid: pages that rank in Google but still lose AEO because the answer is buried three scrolls down, the definition is hedged, or the quotable sentence is wrapped in marketing language. Rankgarage and LevelUp both note that AI systems skip content that is not clearly extractable, regardless of its search ranking.
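
As an illustration of the schema requirement above, here is a minimal Python sketch that builds schema.org `FAQPage` JSON-LD from question-and-answer pairs. The helper names `faq_jsonld` and `as_script_tag` are hypothetical, and the sample answer is taken from this guide's own definition. Treat it as a starting point under those assumptions, not a complete markup implementation.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Wrap JSON-LD in the script tag used to embed it in an HTML page."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical example: one answer-first Q&A pair from a definition page.
pairs = [
    (
        "What is AEO?",
        "Answer Engine Optimization (AEO) is the practice of structuring "
        "content so AI answer engines can understand, summarize, quote, "
        "and cite it.",
    )
]
print(as_script_tag(faq_jsonld(pairs)))
```

Keeping the `text` field identical to the 1–2 sentence direct answer on the page means the markup and the visible passage say the same thing.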

How Should a B2B SaaS Team Run an AEO Publishing Workflow?

An AEO publishing workflow is an editorial pipeline, not a one-off content project. It runs continuously and treats every page as a citation asset.

- Onboard the domain. Confirm crawlability, indexation in Google and Bing, sitemap health, and schema coverage.

- Define the site profile. Capture product category, ICP, audience questions, competitors, entity language, and tone. This profile anchors every brief.

- Map audience questions. Build a seed set of 100–500 real questions pulled from sales calls, support tickets, community forums, and observed AI prompts.

- Write answer-first briefs. Each brief names the question, the 1–2 sentence direct answer, the supporting entities, and the required schema.

- Draft with SEO, AEO, GEO, and LLMO constraints together. Keyword targets, question-led H2s, quotable passages, entity consistency, and evidence attribution all get enforced in the same draft, not in separate passes.

- Apply schema and semantic markup. Article, FAQPage, Product, Organization, HowTo where relevant.

- Editorial review. Check for direct-answer clarity, entity naming, source attribution, and extractability. Cut padding.

- Publish through the existing CMS or headless stack. No platform migration required.

- Test citations. Run the target question set across ChatGPT, Google AI Overviews, Perplexity, Gemini, Copilot, and Claude. Log citation presence and accuracy.

- Refresh the archive. Quarterly baseline refresh, faster for volatile pages.

Mentionwell operationalizes this pipeline as a blog engine for teams running it across one site or hundreds — research-grounded drafts with AEO, GEO, LLMO, and SEO built into every brief, delivered into the CMS or headless stack already in use, with archive refreshes handled as part of the same workflow. The point is not to replace editorial judgment; it is to make the citation-shaped pipeline repeatable at scale.

Which AI Platforms Should You Prioritize First?

Prioritize by audience behavior, index dependency, and query type — not by raw user counts. A B2B SaaS team selling to engineering leaders has a different optimal platform order than a consumer brand chasing shopping queries.

A decision frame that holds up across most B2B cases:

| Priority | Platform | Why it matters |
|---|---|---|
| 1 | Google AI Overviews | Highest overlap with existing SEO investment; Google index |
| 2 | ChatGPT | Largest conversational user base; Bing index |
| 3 | Perplexity | Citation-forward; strong for comparison and research queries |
| 4 | Microsoft Copilot | Enterprise distribution via Microsoft 365; Bing index |
| 5 | Gemini | Google index overlap; Workspace embedding |
| 6 | Claude | Reasoning-heavy answers; strong for technical buyers |
| 7 | Meta AI, DeepSeek, Grok | Situational, depending on audience |

Index dependency shortcuts the work: optimizing for Google's crawl and index covers AI Overviews and Gemini, while optimizing for Bing's crawl and index covers ChatGPT and Copilot. Platform-specific structure and entity tuning handle the rest.
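
The index-dependency shortcut can be written down as a small lookup. The sketch below encodes only the platform-to-index pairings stated in this guide; platforms the guide does not tie to a specific index are left out rather than guessed. The function names are hypothetical.

```python
from collections import defaultdict

# Pairings as stated in this guide. Perplexity, Claude, and the others
# are omitted because the guide does not tie them to a specific index.
INDEX_BY_PLATFORM = {
    "Google AI Overviews": "Google",
    "Gemini": "Google",
    "ChatGPT": "Bing",
    "Microsoft Copilot": "Bing",
}


def platforms_by_index(mapping):
    """Group platforms under the search index they depend on."""
    grouped = defaultdict(list)
    for platform, index in mapping.items():
        grouped[index].append(platform)
    return dict(grouped)


def uncovered_platforms(indexed_in, mapping=INDEX_BY_PLATFORM):
    """List platforms whose index does not yet contain the page."""
    return sorted(p for p, idx in mapping.items() if idx not in indexed_in)


# Hypothetical page that is indexed in Google but not yet in Bing.
print(platforms_by_index(INDEX_BY_PLATFORM))
print(uncovered_platforms({"Google"}))
```

A check like this is mainly useful during domain onboarding, where it turns an indexation gap into a concrete list of surfaces that cannot yet retrieve the page.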

For platform-level workflows, start with the two highest-leverage surfaces — [How to Show Up in ChatGPT in 2026](/how-to-show-up-in-chatgpt-in-2026) and [How to Show Up in Perplexity in 2026](/how-to-show-up-in-perplexity-in-2026) — then expand to the remaining platforms as your audience data dictates.

How Should Marketers Measure and Refresh AEO Performance?

Measurement for AEO moves beyond rankings and clicks. The unit of success is citation — whether your content was pulled into a generated answer, how accurately it was represented, and which entities were attributed.

A workable measurement stack:

- Repeatable query sets. A fixed list of 50–200 prompts covering definitional, comparative, and product-recommendation intents, run on a schedule across target platforms.

- Citation presence and quality. Was your domain cited? Was the quote accurate? Was the brand named correctly?

- Entity consistency. Is your product described the same way across surfaces?

- AI share of voice. Your citation share vs. named competitors on the query set.

- Source freshness. Publication and last-updated dates on cited pages.

- Comparison coverage. Are you present in X-vs-Y answers in your category?
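
One way to turn the list above into a number is sketched below. It assumes citation checks are logged by hand or by a tool of your choice, and it defines AI share of voice as the fraction of logged answers that cite a domain at least once. That definition is one reasonable reading, not a standard, and the `CitationCheck` record and the domains are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CitationCheck:
    """One prompt run on one platform, with the domains the answer cited."""
    prompt: str
    platform: str
    cited_domains: tuple


def share_of_voice(checks, domains):
    """Fraction of logged answers that cite each tracked domain at least once."""
    tracked = set(domains)
    counts = Counter()
    for check in checks:
        # set() ensures a domain cited twice in one answer is counted once.
        for domain in set(check.cited_domains) & tracked:
            counts[domain] += 1
    total = len(checks)
    return {d: (counts[d] / total if total else 0.0) for d in domains}


# Hypothetical log: two prompts run on two platforms.
checks = [
    CitationCheck("what is aeo", "ChatGPT", ("example.com", "competitor.io")),
    CitationCheck("what is aeo", "Perplexity", ("competitor.io",)),
    CitationCheck("aeo vs seo", "ChatGPT", ("example.com",)),
    CitationCheck("aeo vs seo", "Perplexity", ()),
]
print(share_of_voice(checks, ["example.com", "competitor.io"]))
```

Because the query set is fixed, the same calculation can be rerun on each scheduled pass and compared over time.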

Tools and sources referenced in this guide include Google Search Console for crawl and index health, Semrush AIO for AI visibility benchmarking (Source: RYGR), Ahrefs Brand Radar, Profound, Muck Rack's Generative Pulse 2025 citation research (Source: RYGR), HubSpot's Consumer Trends Report, and Gartner's search-volume prediction (Source: LevelUp citing Gartner).

Refresh cadence: quarterly as a baseline, faster for high-value or volatile pages. Evergreen Media notes that brands leading in AEO update content quarterly. Muck Rack's data goes further: half of AI citations are from content published within the last 11 months, with the highest citation spike in the first seven days after publication (Source: RYGR citing Muck Rack). That argues for treating archive refreshes as a core editorial activity, not a clean-up task.

A practical refresh rule: update cornerstone definition pages, comparison pages, and category pages every quarter; update high-traffic pages monthly; and when a competitor overtakes you as the cited source, update the affected page within 30 days.
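
The refresh rule can be sketched as a due-date calculation. The version below makes several assumptions that are not in the rule itself: a quarter is approximated as 90 days and a month as 30 days, the competitor clause is read as "refresh within 30 days of being overtaken", and the page-type labels and function name are hypothetical.

```python
from datetime import date, timedelta

QUARTERLY = timedelta(days=90)          # assumption: a quarter as 90 days
MONTHLY = timedelta(days=30)            # assumption: a month as 30 days
COMPETITOR_WINDOW = timedelta(days=30)  # the 30-day window from the rule

CORNERSTONE_TYPES = {"definition", "comparison", "category"}


def next_refresh_due(last_updated, page_type, high_traffic=False, overtaken_on=None):
    """Return the earliest refresh deadline that applies, or None if no rule does."""
    candidates = []
    if page_type in CORNERSTONE_TYPES:
        candidates.append(last_updated + QUARTERLY)
    if high_traffic:
        candidates.append(last_updated + MONTHLY)
    # Only count a competitor overtake that happened after the last refresh.
    if overtaken_on is not None and overtaken_on >= last_updated:
        candidates.append(overtaken_on + COMPETITOR_WINDOW)
    return min(candidates) if candidates else None


# Hypothetical comparison page, last refreshed on 1 January 2026.
print(next_refresh_due(date(2026, 1, 1), "comparison"))
print(next_refresh_due(date(2026, 1, 1), "comparison", high_traffic=True))
```

Taking the earliest deadline means a high-traffic cornerstone page follows the monthly cadence rather than the quarterly one.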

Mentionwell is built around this operating model — a blog engine that handles citation-shaped drafting, schema, multi-site publishing, and scheduled archive refreshes as one pipeline. If your team needs SEO, AEO, GEO, and LLMO running together across one domain or hundreds without rebuilding the stack, Get My Site GEO Optimized is the starting point.

Sources

- Answer engine optimization trends in 2026: How AEO is ... (blog.hubspot.com)

- What Is AEO? Why Answer Engine Optimization Matters More Than ... (wingmanplanning.com)

- Introduction to Answer Engine Optimization (AEO) - YouTube (www.youtube.com)

- What is AEO ? (Answer Engine Optimization) : r/localseo - Reddit (www.evergreen.media)

- Why AEO (Answer Engine Optimization) Is Critical to 2026 Marketing ... (blog.levelupdigitaladvertising.com)

- What is AEO? Answer Engine Optimization Explained for 2026 (www.rankgarage.com)