What Is llms.txt, and Why Does It Matter in 2026?

llms.txt is a proposed Markdown file placed at the root of a domain that gives large language models a curated map of a site's most important content. It is not an officially ratified web standard. According to txt-llms.com, Jeremy Howard — co-founder of Answer.AI and fast.ai — proposed the convention in September 2024, and both txt-llms.com and llmstxt.org still describe it as emerging rather than adopted.

The reason it matters in 2026 is narrower than most SEO blogs suggest. llms.txt is an inference-time routing signal for AI systems like ChatGPT, Claude, Gemini, Perplexity, Meta AI, Copilot, Llama, Grok, and Granite — it tries to help them find your canonical pages when they assemble an answer. It is not a training-data permission file, not a ranking mechanism, and not a guarantee that OpenAI, Anthropic, or Google will cite you.

That adoption curve is real, but so is the asymmetry: the file is cheap to publish and its consumption by major AI crawlers is still unproven. Treat it as a low-cost routing layer inside a broader AEO, GEO, LLMO, and SEO program — not as an AI visibility shortcut.

What Problem Is LLMs.txt Trying to Solve?

llms.txt exists to solve a narrow inference-time problem: AI assistants cannot reliably process a whole website at answer time, and raw HTML is a poor input format for them. The official llms.txt specification (via txt-llms.com and summarized by Semrush) describes the underlying issue as an LLM context-window constraint — most sites are too large to fit in context, and converting complex HTML with navigation, ads, cookie banners, and JavaScript into useful model text is lossy and imprecise.

A Markdown file at a known URL solves a narrow slice of that. As Liran Tal puts it, the original use case was helping AI agents access site content without parsing HTML, loading JavaScript, or handling scraping complexity. llms.txt is a machine-readable content routing layer — a highlight reel of the pages you want models to consider when generating an answer.

The distinction that trips most teams up: this is about inference, not training. Webflow's AEO explainer is explicit that llms.txt does not change how search engines index a site and is not a training-data instrument. When a user asks ChatGPT, Claude 3.7 Sonnet, GPT-4o, or Gemini a question and the system performs retrieval, llms.txt is one of several signals that can point it to your canonical docs, product pages, or glossary entries rather than an auto-generated tag archive.

What it does not solve: enforcement, relationships between entities, versioning, or staleness. Those require a broader architecture, which is why Duane Forrester frames llms.txt as "step one" rather than a finished solution.

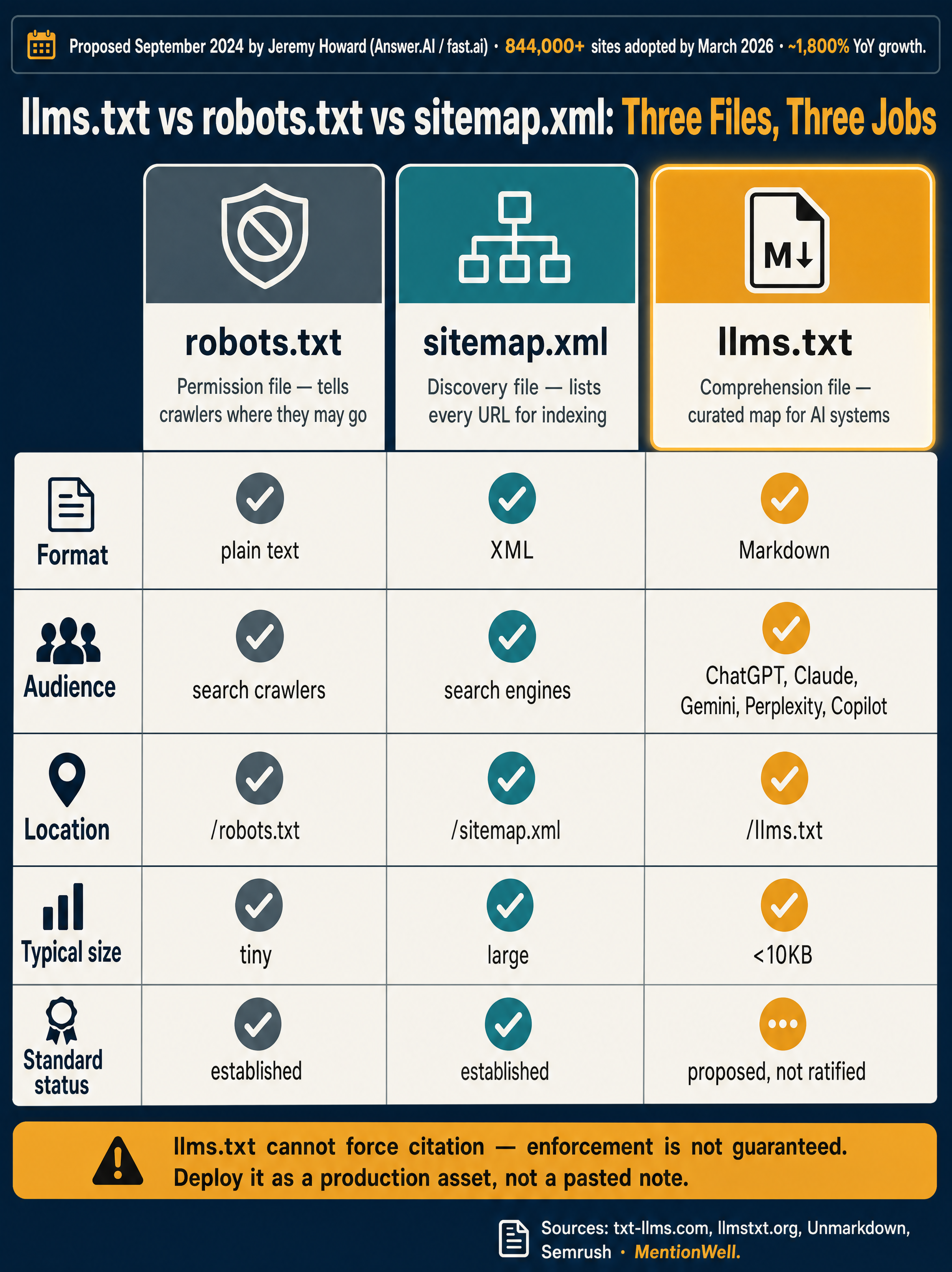

LLMs.txt vs Robots.txt vs Sitemap.xml: What Changes?

robots.txt is a permission file, sitemap.xml is a discovery file, and llms.txt is a comprehension file. That framing, credited to Unmarkdown and echoed by Webflow, is the cleanest way to separate them.

Webflow uses a plainer analogy: robots.txt is a bouncer deciding who gets in, sitemap.xml is a table of contents listing every URL, and llms.txt is a highlight reel telling language models which pages actually matter.

There is a common source of confusion worth flagging. Similar AI's guide presents llms.txt using Allow/Disallow syntax reminiscent of robots.txt — as if it controls AI crawler access. Similar AI itself notes that compliance varies by AI system and there is no enforcement mechanism. Unmarkdown and Webflow, by contrast, describe llms.txt as curated guidance, not access control. In practice, llms.txt can signal preferences, but it cannot block crawling and cannot compel citation.

| File | Purpose | Format | Audience | Enforcement |

|---|---|---|---|---|

| robots.txt | Allow or disallow crawling | Plain text directives | Search and AI crawlers | Voluntary, widely honored by mainstream crawlers |

| sitemap.xml | List discoverable URLs | XML | Search engine indexers | None — discovery aid only |

| llms.txt | Point AI systems at important content | Markdown | Large language models at inference | None — proposed, not ratified |

| llms-full.txt | Provide full text of key pages in one document | Markdown | LLMs that prefer a single ingest | None — proposed |

Google has not endorsed llms.txt as a ranking signal. Semrush, Webflow, Unmarkdown, and Liran Tal all describe it as a proposed convention with growing but uneven support. Treat it as complementary to robots.txt and sitemap.xml, never as a replacement.

Where Should You Place the llms.txt File?

Place the file at the root of the domain at `https://yourdomain.com/llms.txt`. The filename is `llms.txt`, not `llm.txt` — a mistake that appears in several snippets and AI-generated guides. Similar AI and txt-llms.com both specify the root path.

Deployment hygiene matters more than the file itself:

- Public access with no login wall. Crawlers will not authenticate.

- Stable URL. Do not redirect `/llms.txt` through marketing campaigns or A/B frameworks.

- UTF-8 plain text. Serve as `text/plain` or `text/markdown`; avoid transforming it into HTML.

- Cache sensibly. A short TTL (minutes to hours) lets updates propagate without hammering origin.

- Check your links. Every Markdown link in the file should return 200; broken links in llms.txt are dead weight.

How Are LLMs.txt Files Structured?

An llms.txt file is written in Markdown with one required element — an H1 naming the site — followed by optional sections of annotated Markdown links. Unmarkdown confirms the H1 is the only strict requirement; Semrush and Liran Tal describe Markdown as lightweight, plain-text, and parseable by AI systems without rendering.

A well-formed file typically looks like this:

- `# Site or Project Name` — the required H1

- A short blockquote or paragraph describing what the site does

- `## Section` headings that group related resources (Docs, API, Pricing, Case Studies, Glossary)

- Markdown links with short annotations: `- Page title: one-sentence description`

- An optional `## Optional` section for secondary material

Per Unmarkdown, the annotations after each link are the most underused part of the format. They let a model decide whether a page is relevant before fetching it — which matters when context windows and retrieval budgets are tight.

What to include: homepage, core product pages, canonical documentation, API references, changelogs, glossary and terminology pages, flagship case studies, pricing (when public), and definitive explainers.

What to exclude: internal wikis, private customer data, outdated product claims, thin duplicate content, staging URLs, gated commercial details, and anything you would not want quoted verbatim in an AI answer.

Ecosystem tools like CopyMarkdown, LLMs.txt Generator, and llmstxt.studio can scaffold a file, but the editorial decisions — what to include, how to describe it — are what determine whether the file is useful.

How to Create an llms.txt File: Step-by-Step Guide

Build llms.txt as a ten-step workflow driven by your CMS, not a hand-edited template. Hand-crafted files decay within one product release cycle.

- Inventory the highest-value pages. Pull your top organic entries, top docs pages, top AI-cited pages (from referral logs), product pages, and glossary entries. Aim for a curated list, not a full sitemap dump.

- Choose canonical URLs. One URL per concept. Resolve duplicates, consolidate redirect chains, and avoid listing both `/product` and `/products/main`.

- Group by topic or user task. Common sections: Product, Documentation, API, Pricing, Case Studies, Company, Glossary.

- Write concise Markdown descriptions. One sentence per link explaining what the page answers or contains. This is the signal a model uses to decide relevance.

- Decide what stays optional or excluded. Move secondary references into `## Optional`. Omit anything stale or sensitive.

- Generate from the CMS when possible. Hand-written files decay fast. Generate from the same source of truth that publishes the pages.

- Deploy to the root path. `/llms.txt`, served as plain text, no authentication.

- Validate access and encoding. Fetch with `curl -I`, confirm 200 status, UTF-8, and correct MIME type. Check every link returns 200.

- Monitor server logs for requests. Watch which user agents actually fetch `/llms.txt`. This is how you measure real consumption rather than assumed.

- Refresh after any material change. Product launch, pricing change, doc restructure, new case study, or archive refresh should trigger a regeneration.

Platform-specific notes from the research:

- WordPress: Bluehost reports that Yoast SEO can automate llms.txt creation for WordPress sites without code or manual edits.

- Webflow: Webflow's AEO documentation covers uploading the file directly through site settings.

- GitHub / headless builds: Generate at build time from front-matter and page metadata; commit `/llms.txt` to the static output.

- Multi-site agencies and programmatic SEO: Templating is mandatory. Hand-editing per site does not scale and re-introduces the drift llms.txt was supposed to avoid.

If you are running llms.txt across many properties, the maintenance cost is where the strategy breaks down first. Mentionwell generates citation-shaped content and routing files from your site profile across one site or hundreds — Get My Site GEO Optimized to run llms.txt maintenance from the same pipeline that publishes the pages.

Do You Need Both llms.txt and llms-full.txt?

Most sites need llms.txt. Fewer need llms-full.txt. The two serve different purposes and have very different size profiles.

| File | Typical size | Contains | Best for |

|---|---|---|---|

| llms.txt | Under 10KB (LLMs.txt Generator) | H1, description, curated Markdown links with annotations | Almost any site that wants to guide AI systems |

| llms-full.txt | Multiple megabytes (LLMs.txt Generator) | Full text of key pages compiled into one document | Sites with dense, stable, technical content that benefits from single-file ingest |

Unmarkdown positions `llms-full.txt` as useful for developer documentation, API references, and curated knowledge bases where an AI agent benefits from one ingestible document rather than fetching many URLs. Duane Forrester agrees that llms.txt has its strongest utility for developer docs and technical content where prose and code are already structured.

Skip `llms-full.txt` if your key pages change frequently, if content contains sensitive commercial details, or if your site is primarily marketing prose. Maintaining a multi-megabyte second copy of your content creates a parallel source of truth that will drift from the live site within weeks — which is exactly the failure mode Duane Forrester warns about.

What Can llms.txt Not Guarantee?

llms.txt cannot force citation, cannot block training, and cannot be enforced. txt-llms.com states plainly that llms.txt is not yet officially ratified. Duane Forrester goes further: no major AI platform has formally committed to consuming it.

The consumption evidence is thin. Duane Forrester cites an audit of CDN logs across 1,000 Adobe Experience Manager domains where LLM-specific bots were essentially absent from `/llms.txt` requests, while Google's crawler accounted for most fetches. That is one audit, not a universal result, but it should temper expectations — a file being downloaded is not the same as a model using its contents during answer generation.

Specific limits to keep in mind:

- Not a training opt-out. llms.txt does not prevent any model from being trained on your content. Use robots.txt, IP allowlists, and license terms for that.

- Not a ranking signal. Google has not confirmed llms.txt influences Search or AI Overviews.

- Not enforceable. Similar AI explicitly notes there is no enforcement mechanism; compliance is voluntary and inconsistent.

- No relationship model. Per Duane Forrester, llms.txt cannot express product families, deprecations, replacements, or authoritative spokespeople — it is a flat list of links.

- Stale content risk. A neglected llms.txt becomes a second outdated copy of your positioning, potentially confusing the AI systems you were trying to help.

- Privacy and compliance risk. Anything included becomes easier for AI systems to ingest. Do not list pages with PII, unreleased pricing, or confidential commercial terms.

Treat llms.txt as a low-cost experiment with asymmetric upside, not a strategy. The value today is that it is cheap to maintain if generated programmatically, costs little if it is ignored, and pays off if adoption accelerates.

How Should Teams Maintain llms.txt Inside AEO, GEO, LLMO, and SEO Workflows?

llms.txt is one component of a broader answer-engine and generative-engine strategy — not a standalone AI visibility tactic. A useful mental model: AEO structures pages to be quotable, GEO shapes content so generative engines synthesize from it, LLMO builds the entity and brand signals models rely on, SEO keeps the site indexable and ranked, and llms.txt routes AI systems to the canonical URLs those other disciplines produced.

Operational requirements for SaaS teams, agencies, and multi-site operators:

- Generate from the CMS or content engine. Hand-editing does not survive a product release cycle.

- Validate on every deploy. 200 status, UTF-8, MIME type, live links.

- Log AI bot requests. Track which user agents fetch `/llms.txt` and how often.

- Refresh with archive updates. Any time you refresh an older article, retire a page, or update pricing, regenerate the file.

- Keep it consistent with product, docs, and case studies. llms.txt must reference the same canonical URLs your schema, internal links, and sitemap do.

- Treat it as one surface, not the surface. Pair it with structured data, clean page templates, direct-answer formatting, and entity consistency.

For deeper context on the surrounding disciplines, see MentionWell's guides to [AEO](/what-is-aeo-in-2026-answer-engine-optimization-explained), [GEO](/what-is-geo-in-2026-generative-engine-optimization-explained), [LLMO](/what-is-llmo-in-2026-large-language-model-optimization-explained), and [AI SEO](/what-is-ai-seo-in-2026-the-new-search-playbook-explained), along with citation playbooks for [ChatGPT](/how-to-show-up-in-chatgpt-in-2026), [Gemini](/how-to-show-up-in-google-gemini-in-2026), [Claude](/how-to-show-up-in-claude-in-2026), [Perplexity](/how-to-show-up-in-perplexity-in-2026), [Copilot](/how-to-show-up-in-microsoft-copilot-in-2026), and [Google AI Overviews](/how-to-show-up-in-google-ai-overviews-in-2026).

Mentionwell operates as the blog engine behind this kind of workflow: a research-grounded pipeline that ships AEO, GEO, LLMO, and SEO into every draft, refreshes archive content, and publishes into existing CMS or headless stacks across one site or hundreds. If llms.txt is going to stay synchronized with your real content — and not drift into a second stale copy — it needs to be generated from the same system that produces the pages. Get My Site GEO Optimized to run llms.txt maintenance, archive refreshes, and citation-shaped publishing from a single editorial pipeline.

Sources

- What Is llms.txt? How the New AI Standard Works (2026 Guide)www.bluehost.com

- What Is LLMs.txt? & Do You Need One? - Neil Patelneilpatel.com

- llms.txt Was Step One. Here's the Architecture That Comes Nextduaneforresterdecodes.substack.com

- What Is LLMs.txt & Should You Use It?semrush.com