What Is AI SEO?

AI SEO is the practice of making content discoverable, extractable, trusted, and cited across AI-powered search experiences — not just ranked in blue links. It extends classic SEO fundamentals (helpful content, technical strength, semantic structure, authority) into the surfaces where answers are generated instead of retrieved: ChatGPT, Google AI Overviews, Google AI Mode, Gemini, Claude, Perplexity, Copilot, and Bing-powered experiences.

The reason it matters in 2026 is that visibility has split. One layer is the traditional ranked result. The other is the generated answer, where the model synthesizes a response and decides which sources to cite. According to Clearscope, SEO isn't disappearing — it's diverging into two environments, and visibility in the AI layer depends on whether a system understands your content well enough to cite it. Sentic makes the consequence concrete: a brand can rank first for a competitive keyword and still lose traffic when Google AI Overviews cites a competitor as the authoritative source.

AI SEO is the discipline of earning both the ranking and the citation, because in 2026 neither one guarantees the other.

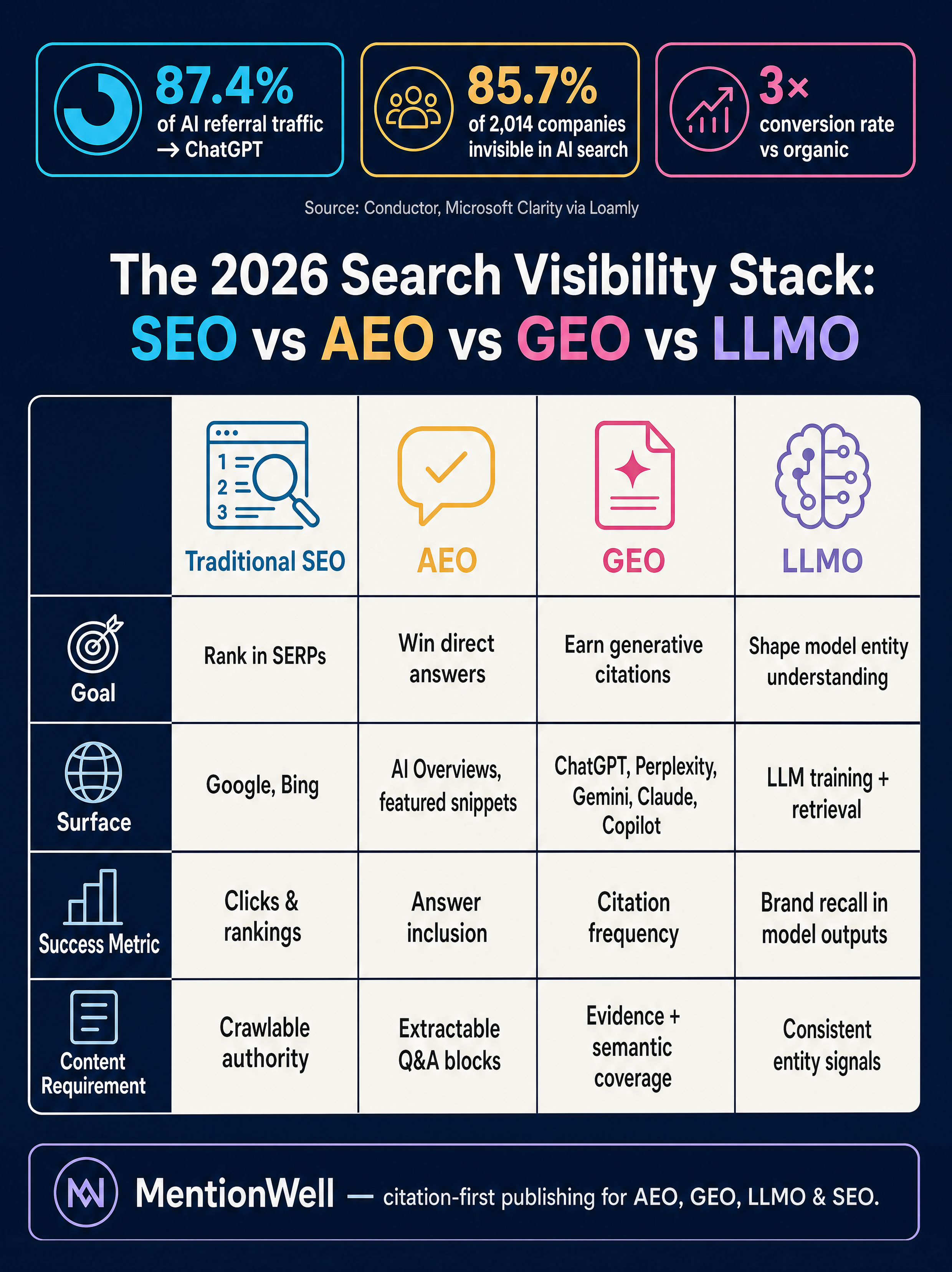

The operational implication for B2B SaaS teams is that content has to do two jobs at once: satisfy crawlers and rankers on Google and Bing, and satisfy the retrieval-and-synthesis logic of large language models. That's why AI SEO is usually decomposed into sub-practices — AEO (Answer Engine Optimization), GEO (Generative Engine Optimization), and LLMO (Large Language Model Optimization) — each targeting a different part of the AI discovery stack.

Is AI SEO About Tools, AI Search Citations, or Both?

AI SEO carries two distinct meanings, and treating them as the same term is the fastest way to build the wrong roadmap. According to PikaSEO, AI SEO can mean using AI-powered tools to make traditional SEO faster and more scalable, or it can mean optimizing content to perform in AI-powered search engines — a discipline PikaSEO and Loamly both identify formally as Generative Engine Optimization (GEO).

The workflow meaning covers AI-assisted keyword research, briefs, drafts, technical audits, schema generation, and publishing. According to a 2025 BrightEdge study cited by Hashmeta, 84% of SEO professionals now use AI tools in their daily work, and teams adopting AI-powered workflows report 40–60% time savings on research and optimization tasks. That's useful, but it's an efficiency story, not a visibility story.

The citation meaning is the strategic one. It covers AEO (optimizing for direct-answer extraction), GEO (earning citations in generative engines), and LLMO (shaping how models understand your brand and entities). These are the practices that determine whether ChatGPT, Perplexity, or Google AI Overviews surface your page when a buyer asks a question.

How Are AI SEO and Traditional SEO Different?

Traditional SEO optimizes for ranked retrieval against a query. AI SEO optimizes for extraction, synthesis, and recommendation inside a generated answer. They share foundations — crawlability, E-E-A-T, semantic structure — but the success condition is different: a ranked page can be invisible in an AI answer, and a cited source doesn't need to rank first.

Within AI SEO, the three sub-disciplines are not interchangeable:

| Discipline | Primary goal | Main surface | Success metric | Content requirement |
|---|---|---|---|---|
| Traditional SEO | Rank on query | Google/Bing SERP | Position, clicks, sessions | Crawlable, authoritative pages |
| AEO | Be quoted as the answer | Featured snippets, AI Overviews, voice | Direct-answer inclusion | Question-led sections, concise answers |
| GEO | Be cited in generated responses | ChatGPT, Perplexity, AI Mode, Copilot | Citation share, brand mentions | Evidence, comparisons, recency |
| LLMO | Be understood and recommended by models | Any LLM output (cited or uncited) | Mention rate, entity accuracy | Entity consistency, off-site signals |

According to Loamly, this layering matters because traditional SEO gets a brand into the candidate pool, while AI SEO determines whether the brand is actually recommended from that pool. Clearscope frames the same split differently: one world still revolves around rankings, the other is emerging inside AI-driven answers where visibility depends on whether a system understands content well enough to cite it.

Ranking makes you eligible. Citation makes you chosen. The operational takeaway is that a B2B SaaS content program in 2026 needs measurement, structure, and refresh logic for both layers — not one strategy bolted onto the other.

For a deeper breakdown of how these workflows split operationally, see [AEO vs GEO vs LLMO: Which Workflow Fits Your Team?](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team).

Do I Need Both AI SEO and SEO?

Yes — and in most cases they reinforce each other rather than compete. According to Search Engine Land, AI SEO does not replace SEO; it complements and expands classic SEO so marketers can pursue visibility in both organic SERPs and AI-powered environments. Loamly's framing is blunter: traditional SEO gets you into the candidate pool, AI SEO decides whether you're recommended from it.

The dependency runs in both directions. Classic SEO foundations — Core Web Vitals, mobile-first indexing, crawlability, structured data, site architecture, and E-E-A-T — still govern whether your pages are eligible to be retrieved at all. According to Sentic, technical SEO remains foundational for AI-era visibility because AI systems rely on the same underlying crawl and index infrastructure. Loamly cites a Lily Ray study of 11 websites in early 2026 finding that sites losing Google rankings saw ChatGPT citations fall by up to 49% — concrete evidence that rankings and AI citations are linked, not independent.

Why Can a Page Rank in Google but Miss AI-Generated Answers?

Because ranking and citation are decided by different mechanics. Ranking rewards relevance and authority against a query. Citation requires that a generative system can extract a clean answer, trust the source, and fit the passage into a synthesized response.

According to Clearscope, generative systems infer intent from meaning, context, relationships, and semantic patterns — matching a keyword can help a page rank but does not guarantee inclusion in an AI-generated answer. The selection logic looks roughly like this:

- Retrieval — the AI system issues one or more sub-queries against an index (Bing for ChatGPT and Copilot, Google's index for AI Mode, Brave for Claude web search, per Loamly).

- Candidate pool — top-ranked pages enter the shortlist. This is where classic SEO still gates eligibility.

- Intent inference — the model decides what the user actually wants, often breaking one prompt into multiple fan-out queries. Loamly reports Google AI Mode fires 8–12 fanout queries per prompt, and ChatGPT sends 3–5 sub-queries to Bing.

- Extractability check — the model scans candidates for passages it can cleanly lift: direct answers, comparisons, definitions, numbered steps.

- Trust and evidence — entity consistency, cited data, author signals, and freshness raise citation likelihood.

- Synthesis and citation — the model writes the answer and attributes sources that most cleanly supported it.

Sentic captures the practical consequence: a brand can rank #1 and still lose traffic to an AI Overview that cites a competitor's content as the authoritative source. If your page ranks but isn't structured to be extracted, you're feeding the answer without getting credit.

Which AI Search Environments Matter Most for B2B SaaS?

For most B2B SaaS audiences, the priority stack is ChatGPT, Google AI Overviews and AI Mode, Perplexity, Copilot, Gemini, and Claude — with Bing indexation acting as an upstream dependency for several of them. The retrieval mechanics differ, and the differences should shape where you invest.

| Environment | Retrieval pattern (per Loamly) | Dependency | Notes for B2B SaaS |
|---|---|---|---|
| ChatGPT | 3–5 Bing sub-queries per prompt | Bing indexation | Loamly cites Conductor on 87.4% share of AI referral traffic |
| Google AI Overviews / AI Mode | 8–12 fanout queries; Google index + Shopping Graph for commercial | Google indexation, E-E-A-T | High-volume answer surface |
| Perplexity | ~6 real-time searches per prompt, source-dependent | Live web + community sources | Profound data via Loamly: Reddit appears in 46.7% of top cited sources |
| Copilot | Shares Bing retrieval; Work mode pulls tenant data | Bing indexation, Microsoft surfaces | Enterprise-adjacent distribution |
| Gemini | Google index, integrated across Google surfaces | Google ecosystem | Overlaps heavily with AI Mode behavior |
| Claude | Uses Brave Search for web citations | Brave index, direct training signals | Smaller reach, higher per-citation weight |

The ChatGPT and conversion figures come from Loamly citing Conductor data across 3.3B sessions (ChatGPT at 87.4% of AI referral traffic) and Microsoft Clarity data showing AI-referred visitors convert at 3x the rate of organic visitors. Treat these as directional — attribution in AI-mediated discovery is still maturing — but the pattern is consistent: fewer referrals, higher intent. Note that the Reddit figure is a share of cited sources in Perplexity answers (per Profound), not a share of Perplexity traffic — it's a citation-supply signal, not a platform-priority ranking.
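To sanity-check platform-share figures like these against your own data, a minimal sketch along the following lines may help. It assumes you can export session referrer URLs from your analytics tool; the host-to-platform map and the function names are illustrative assumptions, not a list published by any vendor, and should be checked against the referrers you actually see.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical referrer-host map (an assumption for illustration).
# Verify each host against the referrers that appear in your own analytics.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url):
    """Return the AI platform name for a referrer URL, or None if it is not in the map."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_referral_share(referrer_urls):
    """Share of AI-referred sessions per platform, as fractions of all AI-referred sessions."""
    counts = Counter(
        platform
        for platform in map(classify_referrer, referrer_urls)
        if platform is not None
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {platform: n / total for platform, n in counts.items()}
```

Sessions with a missing or unrecognized referrer are left out of the denominator, so the result describes the mix among AI referrals only, not AI traffic as a share of all traffic.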

For platform-specific playbooks, see [How to Show Up in ChatGPT](/how-to-show-up-in-chatgpt-in-2026), [Google AI Overviews](/how-to-show-up-in-google-ai-overviews-in-2026), [Perplexity](/how-to-show-up-in-perplexity-in-2026), [Claude](/how-to-show-up-in-claude-in-2026), [Gemini](/how-to-show-up-in-google-gemini-in-2026), and [Copilot](/how-to-show-up-in-microsoft-copilot-in-2026).

How Do You Optimize for AI Overviews and Answer Engines?

Start with the question, not the keyword. AI Overviews and answer engines reward pages that contain a clean, self-contained answer to a real user question, backed by evidence a model can extract. According to Fulltraffic, Google has stated there are no special technical requirements or extra schema types required to appear in AI Overviews or AI Mode — the same foundational SEO best practices remain the path in. That does not mean technical work is optional; it means structure and clarity do the heavy lifting.

A repeatable AEO/GEO optimization workflow:

- Source the questions. Pull real queries from sales calls, demos, onboarding, support tickets, and product docs. Eyeful Media recommends this as the starting point for B2B content because it surfaces questions buyers actually ask, not keywords tools suggest.

- Map fan-out queries. For each primary question, list the follow-ups a model would generate. Loamly's estimate of 8–12 fanout queries in Google AI Mode is a reasonable target for sub-topic coverage.

- Write the direct answer first. Open each H2 with a 1–2 sentence answer readable in isolation. This is the passage the model will extract.

- Add comparison, edge-case, and evidence passages. Generative systems prefer sources that address adjacent intent — comparisons, limits, and exceptions — not just a single definition.

- Strengthen entity consistency. Use full names of products, people, and concepts on first mention. LLMs rely on entity co-occurrence to decide what to surface.

- Attribute every statistic. "According to [Source], X%" is citable; "studies show" is not.

- Maintain technical fundamentals. Crawlability, Core Web Vitals, mobile-first rendering, clean HTML, and logical heading structure all still gate eligibility.

- Use structured data where it helps extraction. FAQ, HowTo, Article, and Product schema can aid classic SERP features even if not required for AI Overviews.

- Refresh, don't just publish. Loamly's data on ranking loss translating to citation loss means decaying pages bleed visibility in both layers simultaneously.
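For the structured-data step in the workflow above, one possible sketch is to generate schema.org `FAQPage` JSON-LD from the question-and-answer pairs a page already contains. The function names `faq_jsonld` and `as_script_tag` are hypothetical helpers, not part of any library, and as noted above this markup is not a requirement for AI Overviews.

```python
import json


def faq_jsonld(qa_pairs):
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld):
    """Wrap a JSON-LD string in a script element for the page head or body."""
    # Escape "</" so text inside the payload cannot close the script element early.
    safe = jsonld.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + safe + "\n</script>"
```

Passing pairs such as `("Do I need both AI SEO and SEO?", "Yes. In most cases they reinforce each other rather than compete.")` keeps the markup aligned with the direct-answer passages already written for each section, so the visible answer and the structured answer do not drift apart.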

How Can You Use AI for End-to-End SEO Automation Without Thin Content?

The answer is to automate the pipeline, not the judgment. AI can accelerate research, briefs, drafts, internal linking, schema, and QA — but editorial gates, evidence requirements, and entity controls have to sit on top, or you end up with templated pages that neither rank nor earn citations.

A citation-first publishing pipeline looks like this:

- Site profile and entity map. Capture audience, positioning, product vocabulary, and the canonical entity list the content must reinforce.

- Question and query research. Combine sales and support data with fan-out query mapping across target platforms.

- Research-grounded briefs. Every brief should include sources, facts, and the direct-answer passages required for AEO.

- Drafting with structural controls. Enforce direct-answer openings, attributed statistics, and entity-dense prose.

- Editorial review. A human checks claims, adds operator perspective, and kills thin sections.

- CMS publishing. Deliver into WordPress, headless, or existing CMS workflows with internal links and schema pre-wired.

- Monitoring and refresh. Track citation share, AI referral traffic, and rankings; refresh pages when evidence decays or fan-out coverage gaps appear.
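The structural controls in the drafting step can be partly automated before a human editor sees the draft. The sketch below is a rough heuristic, assuming plain-text drafts with blank lines between paragraphs; the regular expressions and function names are illustrative assumptions and will miss cases, so it supports the editorial review step rather than replacing it.

```python
import re

# Heuristic patterns (assumptions for illustration, not an exhaustive grammar).
STAT = re.compile(r"\d+(?:\.\d+)?\s?%|\b\d+(?:\.\d+)?x\b")
ATTRIBUTION = re.compile(r"\b(according to|cited by|cites|reports?|per)\b", re.IGNORECASE)
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def split_sentences(text):
    """Naive sentence splitter: breaks after ., ! or ? followed by whitespace."""
    return [s.strip() for s in SENTENCE_END.split(text.strip()) if s.strip()]


def unattributed_stats(text):
    """Return sentences that contain a statistic but no attribution phrase."""
    return [
        s for s in split_sentences(text)
        if STAT.search(s) and not ATTRIBUTION.search(s)
    ]


def opens_with_direct_answer(section_body, max_sentences=2):
    """True if the first paragraph of a section has at most max_sentences sentences."""
    paragraphs = [p for p in section_body.split("\n\n") if p.strip()]
    if not paragraphs:
        return False
    return len(split_sentences(paragraphs[0])) <= max_sentences
```

A draft can be held back from review while `unattributed_stats` returns anything or `opens_with_direct_answer` is false for a section. The checks only look at form: they cannot tell whether a cited source is real or whether the opening sentences actually answer the question.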

Mentionwell runs this pipeline as a citation-first blog engine — onboarding a domain, building the site profile, and producing research-grounded articles with AEO, GEO, LLMO, and SEO baked into every draft, then publishing into existing CMS or headless stacks and refreshing archives on schedule. The point is not faster content; it's consistent, citation-shaped content across one site or hundreds without editorial controls collapsing.

What Metrics Should You Track for AI Search Visibility?

Rankings alone are no longer sufficient. You need a scorecard that covers eligibility, extractability, and citation — plus the downstream value of AI-referred traffic. According to SEO.com, AI search success focuses on visibility, citations, and brand mentions rather than rankings and clicks alone.

A practical measurement stack:

| Layer | What to track | Where to measure |
|---|---|---|
| Eligibility | Indexation, rankings, Core Web Vitals | Google Search Console, Bing Webmaster Tools |
| Extractability | Featured snippets, direct-answer inclusion | GSC, SERP monitoring tools |
| Citation share | Prompt coverage, citation frequency, brand mentions | AI Performance dashboard, manual prompt testing |
| Traffic quality | AI referral sessions, conversion rate | GA4, Microsoft Clarity |
| Archive health | Refreshed-page performance, decay signals | GSC, internal analytics |

Loamly cites Microsoft Clarity data showing AI-referred visitors convert at 3x the rate of organic visitors — useful context for weighting AI visibility investments even though attribution remains noisier than classic organic. The honest framing: treat these metrics as directional, sample prompts regularly across ChatGPT, Perplexity, AI Overviews, and Copilot, and measure citation share over time rather than chasing single-prompt wins.
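One way to turn regular prompt sampling into a trackable number is sketched below. It assumes a working definition of citation share as the fraction of sampled prompts on a platform whose answer cited your domain at least once; that definition, the `PromptSample` record, and the function names are assumptions for illustration, not a standard metric.

```python
from collections import defaultdict
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class PromptSample:
    platform: str      # e.g. "ChatGPT" or "Perplexity"
    prompt: str        # the question that was asked
    cited_urls: tuple  # URLs the answer cited, recorded by hand or by a tracking tool


def cites_domain(sample, domain):
    """True if any cited URL is on `domain` or one of its subdomains."""
    for url in sample.cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def citation_share(samples, domain):
    """Fraction of sampled prompts per platform whose answer cited `domain`."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for sample in samples:
        totals[sample.platform] += 1
        if cites_domain(sample, domain):
            hits[sample.platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}
```

Running the same prompt set on a fixed schedule and comparing the per-platform fractions over time gives a trend line, which is more informative than any single prompt result.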

What Content Types and Formats Earn the Most AI Citations?

Citable assets share three traits: they answer a specific question, they contain evidence a model can lift, and they reinforce entity clarity. The formats that consistently earn citations map to those traits.

- Glossary and definition pages. Clean, entity-dense definitions are high-extractability assets. See [What Is AEO](/what-is-aeo-in-2026-answer-engine-optimization-explained), [What Is GEO](/what-is-geo-in-2026-generative-engine-optimization-explained), [What Is LLMO](/what-is-llmo-in-2026-large-language-model-optimization-explained), and [What Is AISO](/what-is-aiso-in-2026-ai-search-optimization-explained).

- Direct-answer explainers. Question-shaped H2s with a 1–2 sentence answer up top.

- Comparison guides. Tables comparing tools, approaches, or platforms are heavily cited in Perplexity and AI Overviews.

- Platform-specific visibility guides. Cover the retrieval mechanics of individual surfaces — useful as both owned content and citation bait.

- Buyer checklists and workflow articles. Numbered steps get extracted directly into how-to answers.

- Original research and proprietary data. According to Loamly, Princeton and Georgia Tech research found that content enrichment boosted GEO visibility by up to 40%, which suggests evidence-dense pages outperform.

- Product-led how-to content and case studies. Turn demos, onboarding flows, and customer outcomes into citable assets.

- Support-derived explainers. Convert top support tickets into public answer pages.

- Refreshed archive pages. Loamly's data on ranking-to-citation correlation makes archive refresh one of the highest-leverage plays in a content program.

Citation-first content is a publishing operation, not a one-off project. If you want a repeatable engine that produces AEO, GEO, LLMO, and SEO-ready articles across your glossary, platform guides, and archive refreshes — and ships them into your existing CMS — Get My Site GEO Optimized with Mentionwell.

Sources

- The SEO Playbook That Actually Works in 2026 (www.youtube.com)

- The 2026 SEO Playbook: How AI Is Reshaping Search (www.clearscope.io)

- The 2026 SEO Playbook: Mastering Search in the AI-First ... (www.linkedin.com)

- B2B SEO & AI Search Playbook: 10 Winning strategies for ... (www.eyefulmedia.com)

- SEO 2.0: The Content Playbook for AI Search Visibility (www.searchenginejournal.com)