What Is Generative Engine Optimization (GEO)?

Generative Engine Optimization (GEO) is the practice of structuring content and digital presence so AI engines — ChatGPT, Perplexity, Google AI Overviews, Google Gemini, Claude, and Microsoft Copilot — can retrieve, cite, and recommend it inside generated answers. The unit of success is not a ranked blue link; it is a cited passage, a named brand mention, or a recommendation surfaced when a model synthesizes a response.

GEO is the discipline of being cited inside an AI-generated answer, not ranked above it.

If you arrived here expecting orbital mechanics, note that "GEO" also refers to Geosynchronous Earth Orbit, Geostationary Earth Orbit, and the intergovernmental Group on Earth Observations. This article is strictly about the marketing discipline, which took its name from a 2023 Princeton-led research paper and has since been adopted across the SEO industry.

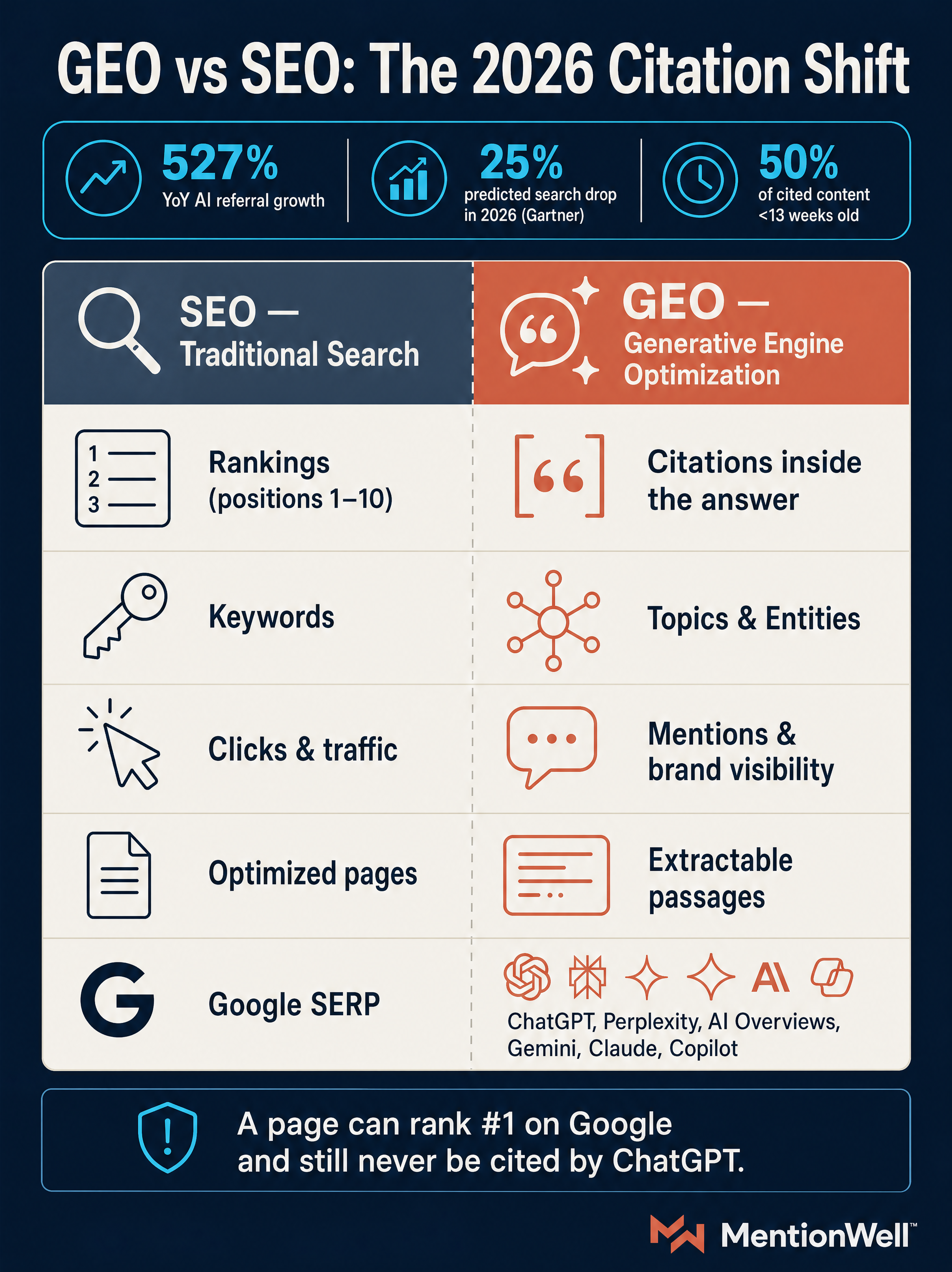

The operating shift, as summarized by AdsX, is concrete:

| Classic SEO | GEO |
|---|---|
| Rankings on a SERP | Citations inside a synthesized answer |
| Keywords | Topics and entities |
| Clicks | Mentions and recommendations |
| Positions 1–10 | Inclusion in the answer |
| Organic traffic | Brand visibility in AI responses |

A page can rank number one in Google Search and still never be cited by ChatGPT if it lacks the structural elements AI engines look for (Source: Frase.io). That is why teams managing B2B SaaS, agency portfolios, or multi-site programs now run GEO as a distinct workflow alongside AEO, LLMO, and classic SEO — each targets a different retrieval behavior inside the same content pipeline.

Why GEO Is Becoming Essential in 2026

The user behavior shift is no longer hypothetical. According to Search Engine Land, Gartner predicted that traditional search volume will drop 25% by 2026 as users move to AI-powered answer engines. Search Engine Land also reports that Google AI Overviews reach more than 2 billion monthly users and that ChatGPT serves 800 million weekly users. Meanwhile, Frase.io (citing Previsible's 2025 AI Traffic Report) reports that AI-referred sessions grew 527% year over year in the first five months of 2025.

Two structural features make GEO urgent rather than optional:

- Scarcity of slots. According to Search Engine Land, large language models typically cite only two to seven domains per response. Robiz Solutions reports Google AI Overviews pull from 3–5 sources per answer. Being the 11th "ranking" page is not a near-miss in GEO — it is invisibility.

- Weak overlap with classic rankings. AdsX, citing research from Princeton and other institutions, notes that fewer than 10% of sources cited by ChatGPT, Gemini, and Copilot rank in Google's top 10 for the same queries. SEO authority is necessary but not sufficient.

Zero-click brand visibility now carries measurable value. Even when a user never visits your domain, being named inside the answer shapes which brands they consider, and that exposure compounds each time another answer surface cites you.

GEO vs SEO: What Are the Differences Between SEO and GEO?

SEO optimizes pages for rankings and clicks inside Google Search. GEO optimizes passages, entities, and evidence for inclusion inside a synthesized AI answer. The two disciplines share infrastructure — crawlable HTML, indexation, internal linking, E-E-A-T — but diverge at the content unit and the success metric.

SEO wins you clicks; GEO wins you mentions inside the answer.

| Dimension | SEO | GEO |
|---|---|---|
| Target surface | Google/Bing SERPs | AI-generated answers across ChatGPT, Perplexity, AI Overviews, Gemini, Claude, Copilot |
| Optimization unit | Full page / URL | Self-contained passage, entity, claim |
| Success signal | Ranking + click | Citation, mention, recommendation |
| Content style | Long-form topical coverage | Extractable paragraphs with direct answers and source data |
| Shelf life | Months to years | Weeks: 50% of cited content is less than 13 weeks old (Source: Seer Interactive via Frase.io) |
| Measurement | Clicks, CTR, conversions, keyword positions | Citation share, AI referrals in GA4, prompt-test inclusion, share of voice in answers |

Classic SEO still matters for GEO. As Geneo notes, content that is not discovered and indexed cannot be cited. Technical hygiene, crawl access, and clean information architecture are GEO prerequisites. What changes is the editorial layer on top: Frase.io frames it as SEO rewarding long-form pages that cover a topic, while GEO rewards self-contained paragraphs that can be extracted and attributed.

The practical consequence for content teams: stop treating "AI SEO" as a rebrand of existing workflows. Run GEO as a parallel track with its own briefs, structural requirements, and measurement loop, and let it share the underlying technical and publishing stack with SEO.

Is GEO the Same as AEO, LLMO, GSO, or AIO?

Short answer: some sources treat them as synonyms, and some draw sharper lines. The operator-useful distinction separates them by where in the retrieval and generation stack they intervene.

- AEO (Answer Engine Optimization) — structuring content so answer engines can extract and present a direct, reliable answer, often as a featured snippet or voice response (per Geneo).

- GEO (Generative Engine Optimization) — citation visibility inside synthesized, generative answers from ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude, and Copilot.

- LLMO (Large Language Model Optimization) — model-facing signals: how your brand, entities, and claims are represented in training data, in retrieval indexes, and in what the model itself has learned about them.

- GSO / AIO — alternate labels. Frase.io treats AEO, LLMO, GSO, and AIO as alternate names for the same discipline, so expect terminology drift across vendors.

| Discipline | Primary goal | Target behavior |
|---|---|---|
| SEO | Rank the URL | Click from a ranked list |
| AEO | Own the direct answer | Extraction into a snippet or voice response |
| GEO | Be cited in the generated answer | Inclusion in a synthesized response |
| LLMO | Shape model-level knowledge | Accurate brand/entity recall without retrieval |

For a deeper breakdown of which workflow fits which team, see our companion piece on [AEO vs GEO vs LLMO](/blog/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team). Most mature content programs run all four on a shared editorial pipeline rather than picking one.

How Do ChatGPT, Perplexity, and Google AI Overviews Select Their Sources?

AI engines do not rank pages the way Google Search does — they retrieve and synthesize. As Growth Marshal describes it, generative systems pull passages from multiple sources, evaluate relevance and trustworthiness, and generate composite answers. The citation is the output of that retrieval-and-ranking step, not a separate editorial decision.

Across engines, the shared selection signals are consistent, even if the weightings differ:

- Retrievability — is the page crawled and indexed by the engine's retrieval layer (Bing for Copilot and some ChatGPT queries, Google for AI Overviews and Gemini, bespoke indexes and the open web for Perplexity and Claude)?

- Entity clarity — can the model resolve the brand, product, author, and topic consistently across the page and external references?

- Extractable structure — are there self-contained paragraphs, direct answers, and supporting data the model can lift?

- Corroboration — do other authoritative sources back up the claim? Search Engine Land notes that a Princeton study and a 2025 citation-bias paper show AI engines strongly favor earned media and authoritative third-party sources over brand-owned content.

- Freshness — recency matters heavily, consistent with Seer Interactive's finding that 50% of cited content is less than 13 weeks old.

- Source diversity — models tend to cite a mix of domains rather than stack one site.

| Engine | Retrieval backbone | Notable behavior |
|---|---|---|
| ChatGPT / ChatGPT Search | Bing + own index; GPTBot, ChatGPT-User | Mix of retrieval and model knowledge; cites on live queries |
| Perplexity | Own crawl + web; PerplexityBot | Citation-first UX; surfaces sources prominently |
| Google AI Overviews | Google index | Pulls from 3–5 sources per answer (Source: Robiz Solutions) |
| Google Gemini | Google index + model | Overlaps with AI Overviews; also powers AI Mode |
| Claude | Web access via Anthropic tooling; ClaudeBot | Conservative citation, strong on authoritative sources |
| Copilot | Bing index | Bing indexation is the single biggest lever |
| Grok | X platform + web | Weighted toward public X signals and recent content |

For engine-specific tactics, see the MentionWell guides for [ChatGPT](/blog/how-to-show-up-in-chatgpt-in-2026), [Perplexity](/blog/how-to-show-up-in-perplexity-in-2026), [Google AI Overviews](/blog/how-to-show-up-in-google-ai-overviews-in-2026), [Gemini](/blog/how-to-show-up-in-google-gemini-in-2026), [Claude](/blog/how-to-show-up-in-claude-in-2026), [Copilot](/blog/how-to-show-up-in-microsoft-copilot-in-2026), and [Grok](/blog/how-to-show-up-in-grok-in-2026).

What Content Structure Makes a Page More Likely to Be Cited?

Pages that get cited answer the primary question in the first 2–3 sentences, then support the answer with self-contained, extractable paragraphs, clear entities, and source data. Robiz Solutions describes the preferred structure as factually dense, well structured, authoritative, and direct. Mekaa summarizes the same idea around three levers: clear answers, source data, and extractable structure.

Practical editorial rules that map to this model:

- Lead with the answer. Open each H2 with a 1–2 sentence direct answer that reads correctly in isolation — because an AI engine may lift exactly that passage.

- Write extractable units. Each paragraph should make one claim, cite one source, and stand alone if pulled out of context.

- Attribute every statistic. "According to Gartner, X%" is citable; "studies show" is not. Unnamed numbers are routinely skipped.

- Structure around questions, comparisons, workflows, and edge cases. These are the shapes of user prompts.

- Define entities on first mention. Use full proper names for products, companies, and tools before using shorthand.

- Add real data. Tables, benchmarks, pricing ranges, and dated research are all extraction magnets.

- Cut filler. Vague framing paragraphs compete with citable passages for the model's attention.
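Some of these rules can be checked mechanically before publishing. The sketch below is a hypothetical pre-publish linter, not a tool that any AI engine publishes. The phrase lists, the attribution markers, and the notion of a "context-dependent opener" are all illustrative assumptions. It flags three things: vague attributions such as "studies show", percentages or "N million" figures that have no named source, and paragraphs that open with a pronoun and so may not read correctly once extracted.

```python
import re

# Illustrative heuristics only; tune the word lists to your house style.
VAGUE_PHRASES = ("studies show", "research shows", "experts agree")
ATTRIBUTION_MARKERS = ("according to", "(source:", "reports that")
CONTEXT_DEPENDENT_OPENERS = {"this", "that", "these", "those", "it", "they"}

# Percentages ("25%") and scaled counts ("800 million") are treated as statistics.
STAT_PATTERN = re.compile(
    r"\d+(?:\.\d+)?\s?%|\d+(?:\.\d+)?\s+(?:million|billion)\b", re.IGNORECASE
)


def lint_paragraph(paragraph: str) -> list:
    """Return editorial warnings for one paragraph, judged as if extracted alone."""
    text = paragraph.strip()
    lowered = text.lower()
    warnings = []

    # Rule: "studies show" is not citable attribution.
    for phrase in VAGUE_PHRASES:
        if phrase in lowered:
            warnings.append(f"vague attribution: '{phrase}'")

    # Rule: every statistic needs a named source in the same paragraph.
    if STAT_PATTERN.search(text) and not any(m in lowered for m in ATTRIBUTION_MARKERS):
        warnings.append("statistic without a named source")

    # Rule: a paragraph that opens with a pronoun may not stand alone.
    first_word = re.match(r"[a-z]+", lowered)
    if first_word and first_word.group(0) in CONTEXT_DEPENDENT_OPENERS:
        warnings.append("opens with a context-dependent reference; may not stand alone")

    return warnings


if __name__ == "__main__":
    draft = [
        "Studies show conversion rates rose 40%.",
        "According to Gartner, traditional search volume will drop 25% by 2026.",
        "This is why structure matters.",
    ]
    for paragraph in draft:
        print(lint_paragraph(paragraph) or "ok", "|", paragraph)
```

Hits from a check like this are prompts for an editor, not automatic rejections. A heuristic this simple will miss some attributions and flag some legitimate openers.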

What Role Do Entities, Schema, and Consistent Naming Play in GEO?

Entity clarity is a citation prerequisite. AI systems resolve a brand, product, author, or topic by matching signals across your site and the broader web, and ambiguous or inconsistent entities get dropped. Georion notes that LLMs bind mentions to entities, and recommends canonical signals like Wikidata presence, Organization schema, and consistent naming.

The baseline entity and schema stack for GEO:

- Organization schema with a consistent legal name, URL, logo, and sameAs links (LinkedIn, Crunchbase, Wikidata).

- Article schema on editorial content, including author, datePublished, and dateModified.

- Person schema for authors, linked to Organization, with credentials and sameAs profiles.

- Product or SoftwareApplication schema where you describe a named product.

- FAQPage or HowTo schema only where the content genuinely matches — forced schema hurts trust.

- JSON-LD as the implementation format, validated with Google's Rich Results Test.

- Consistent naming everywhere — CMS templates, author bios, footer, press, directories. Variant names ("Mentionwell" vs "Mention Well" vs "MW") fragment the entity graph.
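Here is a minimal sketch of that baseline stack as JSON-LD, generated from a single site profile so that every template emits the same names. Every name, URL, and date below is a hypothetical placeholder, including the Wikidata URL. The `@graph`/`@id` pattern links the Article, Person, and Organization nodes. It is one common way to connect them, not the only valid one.

```python
import json

# Hypothetical site profile; in practice this lives in one place per site.
SITE_PROFILE = {
    "brand_name": "Example Analytics",
    "legal_name": "Example Analytics Inc.",
    "url": "https://www.example.com",
    "logo": "https://www.example.com/logo.png",
    "same_as": [
        "https://www.linkedin.com/company/example-analytics",
        "https://www.crunchbase.com/organization/example-analytics",
        "https://www.wikidata.org/wiki/Q0000000",  # placeholder, not a real item
    ],
}


def organization_node(site: dict) -> dict:
    """Organization node built only from the site profile."""
    return {
        "@type": "Organization",
        "@id": f"{site['url']}/#organization",
        "name": site["brand_name"],
        "legalName": site["legal_name"],
        "url": site["url"],
        "logo": site["logo"],
        "sameAs": site["same_as"],
    }


def article_graph(site: dict, headline: str, author: dict,
                  published: str, modified: str) -> dict:
    """Build one JSON-LD graph linking Article -> Person -> Organization."""
    org = organization_node(site)
    person = {
        "@type": "Person",
        "@id": f"{author['url']}#person",
        "name": author["name"],
        "url": author["url"],
        "sameAs": author.get("same_as", []),
        "worksFor": {"@id": org["@id"]},
    }
    if author.get("job_title"):  # only emit credentials that actually exist
        person["jobTitle"] = author["job_title"]
    article = {
        "@type": "Article",
        "headline": headline,
        "author": {"@id": person["@id"]},
        "publisher": {"@id": org["@id"]},
        "datePublished": published,  # ISO 8601 dates
        "dateModified": modified,
    }
    return {"@context": "https://schema.org", "@graph": [org, person, article]}


def to_script_tag(graph: dict) -> str:
    """Wrap the graph in the script element that templates inject into <head>."""
    return ('<script type="application/ld+json">\n'
            + json.dumps(graph, indent=2)
            + "\n</script>")


if __name__ == "__main__":
    author = {"name": "Jane Doe", "url": "https://www.example.com/authors/jane-doe",
              "job_title": "Head of Content"}
    print(to_script_tag(article_graph(SITE_PROFILE, "What Is GEO?", author,
                                      "2026-01-15", "2026-03-01")))
```

Generating every node from one profile is the design point. A rename then happens once, not across hundreds of templates.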

E-E-A-T signals — experience, expertise, authoritativeness, trust — reinforce entity resolution. Named authors with real credentials, dated updates, source citations, and corroborating third-party mentions collectively tell the model this entity is real, specific, and reliable. For multi-site operators, entity consistency is the single hardest thing to maintain manually, which is why it belongs in a site profile enforced by the publishing engine rather than in a checklist.
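In its simplest form, enforcing naming through a site profile means scanning rendered copy for off-profile spellings before publishing. The sketch below assumes a hypothetical profile with one canonical name and a short alias list. It ignores case, spaces, and hyphens, so it catches variants like "Mention Well" or "Mentionwell", and it flags listed aliases verbatim. Short aliases such as "MW" will also match unrelated uses (megawatts, for example), so treat hits as review prompts, not automatic rewrites.

```python
import re

# Hypothetical site profile entry: the canonical brand name and known aliases.
CANONICAL_NAME = "MentionWell"
KNOWN_ALIASES = frozenset({"MW"})


def _squash(name: str) -> str:
    """Lowercase and drop everything except letters and digits."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def find_name_variants(text: str, canonical: str = CANONICAL_NAME,
                       aliases: frozenset = KNOWN_ALIASES) -> set:
    """Return spellings in `text` that refer to the brand but differ from `canonical`."""
    target = _squash(canonical)
    tokens = re.findall(r"[A-Za-z0-9][A-Za-z0-9\-]*", text)
    variants = set()
    for i, token in enumerate(tokens):
        # Check the single token and the two-token window ("Mention Well").
        for candidate in (token, " ".join(tokens[i:i + 2])):
            if _squash(candidate) == target and candidate != canonical:
                variants.add(candidate)
        if token in aliases:
            variants.add(token)
    return variants


if __name__ == "__main__":
    copy = "MentionWell publishes. Mention Well also appears, as does Mentionwell and MW."
    print(sorted(find_name_variants(copy)))
```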

How to Optimize Your Site for GEO: GEO Strategy in 7 Steps

A defensible GEO program is not a content tweak; it is an ongoing loop. Search Engine Land's four-part framework (assess, optimize, measure, iterate) and Geneo's step-by-step workflow both map onto the same seven operational stages:

- Set objectives and prompt sets. Define which prompts, topics, and engines matter for your business. Tie each to a revenue or pipeline outcome, not "AI visibility" in the abstract.

- Baseline visibility across engines. Run the same prompt set against ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude, and Copilot. Record who gets cited, which domains dominate, and where your brand is absent or misrepresented.

- Map answer-engine intent. For each target prompt, identify the answer shape: definition, comparison, process, recommendation, troubleshooting. This shape drives the content brief.

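- Clean entity and technical signals. Audit Organization, Article, Person, and Product schema. Verify Wikidata and directory consistency. Check robots.txt rules for GPTBot, OAI-SearchBot, ChatGPT-User, ClaudeBot, PerplexityBot, and Google-Extended, and explicitly allow the ones you want citing you. Google-Extended controls whether Google may use your content for Gemini models and grounding. It does not affect inclusion in Google Search, so AI Overviews follow your regular Googlebot rules. Treat emerging files like `llms.txt` and `llms-full.txt` as experimental, not required.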

- Produce answer-ready briefs and content. Every brief specifies the direct answer, the citable statistic, the entities to name, and the corroborating sources. No filler sections.

- Publish through your CMS or headless workflow. Preserve schema, canonical URLs, and author metadata at the delivery layer. For multi-site operators, enforce brand rules at the template level so 50 or 500 sites stay entity-consistent.

- Refresh based on citation decay. Because 50% of cited content is less than 13 weeks old, set a cadence (monthly or quarterly) to update statistics, dates, and claims on priority pages, and re-run your prompt set to confirm recovery.
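You can script the crawl-access check from the "Clean entity and technical signals" stage with Python's standard `urllib.robotparser`. This sketch parses a robots.txt body and reports, for each AI user-agent token, whether a given URL is allowed. The token list is the one named in that stage plus OpenAI's `OAI-SearchBot`. Confirm the current tokens against each vendor's documentation.

Keep two caveats in mind. First, Google-Extended is a control token, not a separate crawler. Second, `urllib.robotparser` applies the first matching rule in file order rather than Google's longest-match rule, so its results can differ from Google's on files that mix overlapping Allow and Disallow lines.

```python
from urllib.robotparser import RobotFileParser

# Tokens to audit; verify against each vendor's current crawler documentation.
AI_USER_AGENTS = [
    "GPTBot", "OAI-SearchBot", "ChatGPT-User",
    "ClaudeBot", "PerplexityBot", "Google-Extended",
]

# Hypothetical robots.txt used for illustration.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: PerplexityBot
Allow: /

User-agent: *
Disallow: /admin/
"""


def audit_ai_access(robots_txt: str, url: str) -> dict:
    """Map each AI user-agent token to True/False for fetching `url`."""
    parser = RobotFileParser()
    # parse() also marks the rules as loaded; without it can_fetch() returns False.
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}


if __name__ == "__main__":
    report = audit_ai_access(SAMPLE_ROBOTS_TXT, "https://www.example.com/blog/geo-guide")
    for agent, allowed in report.items():
        print(f"{agent:16} {'allowed' if allowed else 'BLOCKED'}")
```

In this sample, GPTBot is blocked everywhere. Agents without their own group, such as ClaudeBot, fall back to the `*` group and are blocked only under `/admin/`.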

| Stage | Owner | Cadence | Core output |
|---|---|---|---|
| Baseline + prompt set | SEO/GEO lead | Quarterly | Visibility scorecard |
| Entity + schema audit | Tech SEO | Quarterly | JSON-LD, Wikidata, sameAs fixes |
| Content brief + production | Editorial | Weekly | Answer-ready articles |
| Publishing | CMS / headless | Per release | Validated schema, clean canonicals |
| Measurement | Analytics | Weekly/monthly | Citations, AI referrals, prompt tests |
| Refresh | Editorial | Monthly/quarterly | Updated passages, dates, stats |
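The refresh stage can start as a simple age check. This sketch assumes each page record carries two hypothetical fields: the date of its last substantive update and a priority flag. It queues every page older than 13 weeks, the age below which Seer Interactive reports half of cited content falls. Priority pages come first, then the oldest pages within each group. The 13-week cutoff is a starting point, not a rule; fast-moving topics may warrant a shorter one.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Default cutoff taken from the 13-week figure; adjust per topic.
REFRESH_AFTER = timedelta(weeks=13)


@dataclass
class Page:
    url: str
    last_modified: date      # last substantive update, not a cosmetic edit
    priority: bool = False   # e.g. the page backs a tracked prompt


def refresh_queue(pages: list, today: date,
                  max_age: timedelta = REFRESH_AFTER) -> list:
    """Return URLs older than `max_age`: priority pages first, then oldest first."""
    stale = [p for p in pages if today - p.last_modified > max_age]
    # False sorts before True, so `not p.priority` puts priority pages first.
    stale.sort(key=lambda p: (not p.priority, p.last_modified))
    return [p.url for p in stale]


if __name__ == "__main__":
    pages = [
        Page("/blog/what-is-geo", date(2025, 12, 1)),
        Page("/blog/geo-vs-seo", date(2026, 3, 15), priority=True),
        Page("/blog/geo-metrics", date(2025, 11, 1)),
        Page("/blog/geo-schema", date(2025, 12, 20), priority=True),
    ]
    print(refresh_queue(pages, today=date(2026, 4, 1)))
    # ['/blog/geo-schema', '/blog/geo-metrics', '/blog/what-is-geo']
```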

How Do You Measure Your Visibility in AI Responses?

Measure GEO with a repeatable prompt set, not one-off manual searches. The useful signals fall into four buckets: inclusion, behavior, competition, and decay.

- Inclusion signals. Citation share, brand mention rate, and recommendation rate across your target prompts in each engine. Trantor contrasts this with SEO's click-based measurement — for GEO, the primary metric is whether you appear inside the synthesized answer.

- Behavior signals. AI referral traffic in GA4 (filter by known referrers like `chatgpt.com`, `perplexity.ai`, `gemini.google.com`), snippet appearances, and assisted conversions from AI sources.

- Competitive signals. Which competitors get cited for your priority prompts, which third-party publications dominate, and where earned media gaps exist.

- Decay signals. Week-over-week change in citation inclusion, correlated with content age. Use this to trigger refreshes before you lose the slot.
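For the behavior bucket, you need to separate AI referrals from other referral traffic. This sketch uses one hostname-to-engine map for two jobs. It classifies raw referrer URLs (for example, from an analytics export or server logs), and it builds a regex you could adapt as a "matches regex" condition on session source in a GA4 custom channel group. Check the pattern against GA4's regex rules before relying on it.

The first three hostnames come from the list above. `chat.openai.com`, `copilot.microsoft.com`, and `claude.ai` are assumptions to verify against your own source reports. Clicks from Google AI Overviews generally arrive as ordinary Google organic traffic, so no referrer list will isolate them.

```python
import re
from typing import Optional
from urllib.parse import urlparse

# Hostname -> engine. Entries beyond the first three are assumptions to verify.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "chat.openai.com": "ChatGPT",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI engine for a full referrer URL, or None if it is not an AI source."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, engine in AI_REFERRERS.items():
        # Match the domain itself or any subdomain (www.perplexity.ai).
        if host == domain or host.endswith("." + domain):
            return engine
    return None


# Regex over a bare hostname, such as a session source value.
AI_SOURCE_REGEX = r"(^|\.)(" + "|".join(re.escape(d) for d in AI_REFERRERS) + r")$"

if __name__ == "__main__":
    print(AI_SOURCE_REGEX)
    for ref in ("https://chatgpt.com/", "https://www.perplexity.ai/search?q=geo",
                "https://www.google.com/"):
        print(ref, "->", classify_referrer(ref))
```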

An operator-grade measurement setup looks like this:

- A locked prompt set (50–200 prompts) per priority topic.

- Cross-engine runs on a fixed cadence — weekly for priority topics, monthly for the long tail.

- A dashboard that tracks citation rate, competitor inclusion, and prompt-level volatility.

- A decay-response loop: when citation rate drops on a tracked prompt, the associated page goes into the refresh queue.

Citation share on a locked prompt set is the single most reliable GEO metric; everything else is supporting evidence.

GA4 referrals alone understate GEO impact because zero-click answers deliver brand exposure without a session. Pair quantitative referrals with qualitative prompt tests so you capture the visibility that does not convert into a click.
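Once prompt-test results are stored, citation share on a locked prompt set and the decay-response loop reduce to simple arithmetic. This sketch makes three assumptions. Each record is one run of one tracked prompt on one engine, holding the set of domains the answer cited. The field names are hypothetical, and collecting the runs is out of scope. Citation share here means the fraction of runs whose answer cites your domain, which is one reasonable definition among several.

A page enters the refresh queue when its prompt's citation rate falls by at least a chosen margin between two runs. The 0.25 default is arbitrary.

```python
from collections import defaultdict


def citation_rate_by(runs: list, domain: str, key: str) -> dict:
    """Fraction of runs citing `domain`, grouped by run[key] ("engine" or "prompt")."""
    totals, hits = defaultdict(int), defaultdict(int)
    for run in runs:
        group = run[key]
        totals[group] += 1
        if domain in run["cited_domains"]:
            hits[group] += 1
    return {group: hits[group] / totals[group] for group in totals}


def refresh_candidates(previous: list, current: list, domain: str,
                       page_for_prompt: dict, min_drop: float = 0.25) -> list:
    """Pages whose tracked prompt lost at least `min_drop` citation rate between runs."""
    before = citation_rate_by(previous, domain, "prompt")
    after = citation_rate_by(current, domain, "prompt")
    pages = set()
    for prompt, rate in before.items():
        # Skip prompts that were not re-run; missing data is not a drop.
        if prompt in after and rate - after[prompt] >= min_drop:
            page = page_for_prompt.get(prompt)
            if page:
                pages.add(page)
    return sorted(pages)


if __name__ == "__main__":
    previous = [
        {"prompt": "what is geo", "engine": "ChatGPT", "cited_domains": {"example.com", "a.com"}},
        {"prompt": "what is geo", "engine": "Perplexity", "cited_domains": {"example.com"}},
        {"prompt": "geo vs seo", "engine": "ChatGPT", "cited_domains": {"b.com"}},
        {"prompt": "geo vs seo", "engine": "Perplexity", "cited_domains": {"example.com"}},
    ]
    current = [
        {"prompt": "what is geo", "engine": "ChatGPT", "cited_domains": {"a.com"}},
        {"prompt": "what is geo", "engine": "Perplexity", "cited_domains": {"a.com"}},
        {"prompt": "geo vs seo", "engine": "ChatGPT", "cited_domains": {"example.com"}},
        {"prompt": "geo vs seo", "engine": "Perplexity", "cited_domains": set()},
    ]
    pages = {"what is geo": "/blog/what-is-geo", "geo vs seo": "/blog/geo-vs-seo"}
    print(citation_rate_by(previous, "example.com", "engine"))
    print(refresh_candidates(previous, current, "example.com", pages))
```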

How Do Teams Scale GEO Without Thin Programmatic Pages?

You scale GEO by pairing template constraints with per-page editorial overrides — unique answer capsules, entity-specific evidence, and named third-party corroboration on every URL. Templates that only string-replace a city or product name produce near-duplicates with no unique answer and no source data, which is the exact pattern AI engines deprioritize.

What separates defensible programmatic GEO from thin content:

- Template constraints with editorial overrides. The template enforces schema, internal linking, and section structure. Editorial fills the direct answer, the citable stat, and the entity-specific evidence per page.

- Unique answer capsules. Every page opens with a 2–3 sentence direct answer written for that specific query — not a Mad Libs string-replace.

- Entity-specific evidence. Per-page data: pricing, benchmarks, regional specifics, product attributes. If the only difference between two pages is a city name, the engine sees a duplicate.

- Corroboration at scale. Name third-party sources on every page. The earned-media preference that Search Engine Land reports, with AI engines favoring authoritative third-party sources over brand-owned content, applies doubly to templated content.

- Archive refresh on a cadence. Given the 13-week decay curve, refreshes must be programmatic too: scheduled updates to statistics and dated claims, followed by a re-run of the tracked prompts.

- Brand consistency across sites. For agencies and multi-site operators, site profiles enforce entity names, schema, author blocks, and positioning — so 200 domains stay coherent without per-site manual QA.
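You can catch Mad Libs pages before they ship with a near-duplicate check: mask each page's swapped-in term, then compare what remains. This sketch uses word 5-gram shingles and Jaccard similarity, a standard near-duplicate heuristic. Nothing here reflects how any AI engine actually scores duplicates, and the shingle size and the 0.8 threshold are assumptions. After masking, two pages that differ only by city name score 1.0.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 5) -> set:
    """Set of k-word windows from lowercased text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def mask_term(text: str, term: str) -> str:
    """Replace the template's variable term (city, product) with a fixed token."""
    return re.sub(re.escape(term), " templateslot ", text, flags=re.IGNORECASE)


def near_duplicate_pairs(pages: dict, threshold: float = 0.8, k: int = 5) -> list:
    """`pages` maps URL -> (body text, term the template swapped in)."""
    sigs = {url: shingles(mask_term(body, term), k) for url, (body, term) in pages.items()}
    flagged = []
    for a, b in combinations(sorted(sigs), 2):
        score = jaccard(sigs[a], sigs[b])
        if score >= threshold:
            flagged.append((a, b, round(score, 2)))
    return flagged


if __name__ == "__main__":
    template = ("GEO services in {c} help local brands get cited by AI engines. "
                "Our {c} team writes answer-ready pages for every {c} client.")
    pages = {
        "/geo/austin": (template.format(c="Austin"), "Austin"),
        "/geo/denver": (template.format(c="Denver"), "Denver"),
        "/geo/boulder": ("Boulder retailers saw a different rollout: this page documents "
                         "two named case studies, local pricing tiers, and a benchmark "
                         "table specific to the market.", "Boulder"),
    }
    print(near_duplicate_pairs(pages))
```

Flagged pairs are the pages that need their own answer capsule and entity-specific evidence before publishing.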

This is where MentionWell fits. MentionWell is an automated blog engine that operationalizes this citation pipeline across AEO, GEO, LLMO, and SEO — research-grounded briefs, answer-ready structure, entity-consistent schema, delivery into your existing CMS or headless stack, and scheduled archive refreshes so citations do not decay unnoticed. It is not a generic AI writer and not a visibility analytics tool; it is the publishing engine that enforces GEO discipline at the scale modern content programs require.

If traditional SEO content is no longer earning citations in AI-generated answers, and consistent publishing across a portfolio is outpacing your team's capacity, the fix is a citation-shaped pipeline that runs AEO, GEO, LLMO, and SEO on a single operational workflow.

Sources

- Mastering generative engine optimization in 2026: Full guide (searchengineland.com)

- Generative Engine Optimization in 2026 (emarketer.com)

- GEO (Generative Engine Optimization) and Why It Matters in 2026 (robizsolutions.com)

- Generative Engine Optimization GEO | Complete Guide for 2026 (trantorinc.com)

- GEO vs. SEO: Everything to Know in 2026 (wordstream.com)