What Does It Mean to Show Up in Perplexity in 2026?

Showing up in Perplexity means being retrieved, cited inline, named as an entity, or recommended as the answer — four distinct outcomes that operators routinely conflate. Perplexity is an AI-powered answer engine that runs live web search, synthesizes what it finds, and shows inline citations so users can verify every claim (Source: AIclicks). It is not a list of blue links, and "ranking" is the wrong mental model.

Separate the four outcomes before you optimize anything:

| Outcome | What it means | What it requires |

|---|---|---|

| Crawlable | PerplexityBot and Perplexity-User can fetch the page | Open robots.txt, server-rendered HTML, valid sitemap |

| Cited | The URL appears as an inline source under an answer | Topical relevance to the query + answer-ready structure |

| Mentioned | Your brand or product name appears in the synthesized answer | Strong entity signals across the web |

| Recommended | Perplexity suggests your product as the solution | Comparison coverage, reviews, category association |

To show up in Perplexity in 2026, a page must first be eligible (crawled and indexed), then be selected (topically relevant and answer-shaped), then be reinforced (entity signals off-site), then be refreshed to survive Perplexity's recency bias.

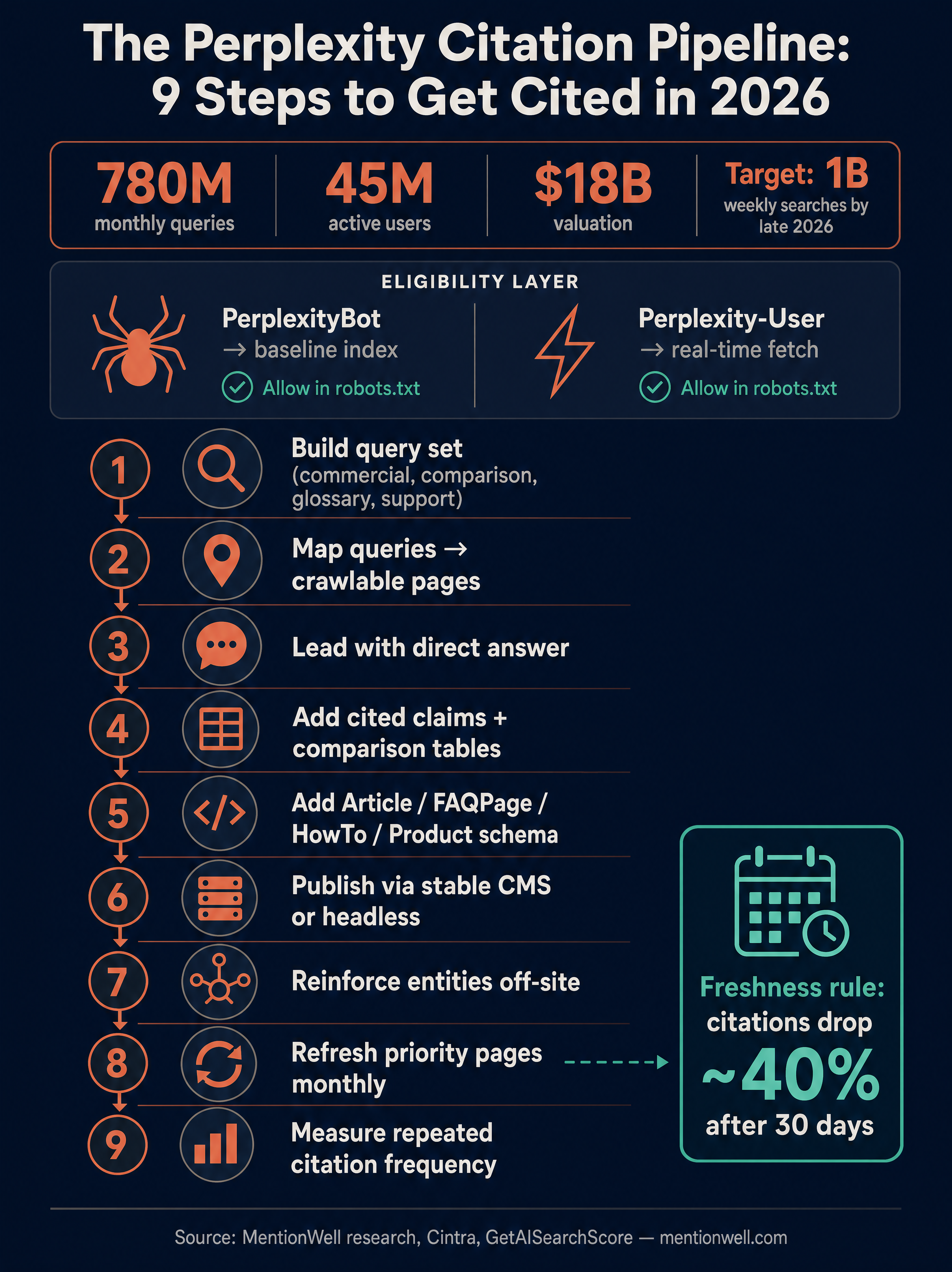

Scale matters enough to justify the work. According to DemandSage via Cintra, Perplexity processes 780 million monthly queries, up from 230 million in mid-2024, with projections of 1.2–1.5 billion monthly queries by mid-2026. That is a meaningful surface for B2B buyer research, not a novelty.

How Does Perplexity Find and Cite Sources?

Perplexity runs on real-time retrieval-augmented generation: every query triggers a fresh scan of the live web, and the model synthesizes an answer with inline citations drawn from what it just retrieved (Source: Cintra). Two crawlers sit underneath this workflow — PerplexityBot builds a baseline knowledge index, and Perplexity-User fetches pages in real time when a user question requires them (Source: Cintra). Both need access for a page to be eligible for citation.

That split has operational consequences. A site can be technically indexed by PerplexityBot and still get excluded from live answers if Perplexity-User is rate-limited or blocked by a WAF. Check both user agents in your logs, not just one.

Classic search indexation is still an eligibility signal. Nathan Gotch's guide is blunt: if a site is not indexed in traditional search (Google, Bing), it has near-zero chance of appearing in Perplexity, and operators should not block PerplexityBot (Source: Nathan Gotch). Treat Google Search and Bing indexation as a prerequisite, not a substitute for Perplexity-specific work.

Other Perplexity surfaces matter insofar as retrieval behavior shifts:

- Pro Search and Research mode do more retrieval passes, which raises the bar for source-worthy pages.

- Spaces pin trusted sources for recurring workflows — being in a user's Space effectively makes you a default source.

- Comet (the agentic browser) and the Sonar and sonar-reasoning-pro models use the same retrieval stack, so optimization carries across.

- Perplexity API, Search API, and Agent API allow you to audit retrieval the same way real queries work.

Why Are My Products Not Showing in Perplexity?

Most B2B SaaS pages fail in Perplexity for technical reasons before content reasons. GetAISearchScore's audit across 485 domains found eight recurring blockers, split between hard stops that prevent any citation and softer blockers that reduce selection odds (Source: GetAISearchScore).

Hard blockers (eligibility killers):

- PerplexityBot blocked in robots.txt.

- Key content hidden behind client-side JavaScript.

- Missing or broken sitemap.xml, so new URLs never get discovered.

If PerplexityBot cannot crawl the page, or if the answer text is only assembled in the browser, topical relevance is irrelevant — the page does not exist to the retriever.

Soft blockers (selection killers):

- Thin descriptions. GetAISearchScore flagged product pages under 100 words as thin and recommends 200+ words of crawlable text as a diagnostic floor.

- Missing Product schema, Offer, or aggregateRating where relevant.

- No FAQ or answer-ready blocks.

- Duplicate pages without canonical tags.

- Missing or inconsistent review and rating signals.

- Pages not indexed in traditional search.

Extend this model to non-commerce pages. For a SaaS integration page, the equivalent of "missing Product schema" is missing entity linkage (what product, what category, what parent integration). For a comparison page, the equivalent of "thin descriptions" is a table that never actually compares on price, limits, or capabilities. For documentation, it's content gated behind a SPA shell that Perplexity-User cannot render.

What Matters More for Perplexity Citations: Schema, Structure, or Topical Relevance?

Topical relevance predicts citations; schema and structure are table stakes. GetAISearchScore's dataset of 14,550 domain-query pairs across 485 domains found essentially zero correlation between its structural AI Search Readiness score and actual Perplexity citations (r=0.009, p=0.849), while same-topic pages were cited at 5.17% versus 0.08% for cross-topic pages — a 62x difference (Source: GetAISearchScore).

That finding reframes the work. JSON-LD, schema, crawlability, server-rendered HTML, canonical tags, and clean markup are necessary — they determine whether you are in the candidate pool at all. But once you're in the pool, Perplexity picks sources by how closely a page matches the query's topic.

| Layer | Role | Examples | Predictive of citation? |

|---|---|---|---|

| Eligibility | Gets you into the candidate set | robots.txt open, SSR HTML, sitemap, canonicals | Required, not predictive |

| Extractability | Makes the answer easy to lift | Direct-answer opening, H2/H3 questions, tables | Moderately predictive |

| Topical relevance | Determines selection | Focused page per query, specific claims, original data | Strongly predictive |

| Entity signals | Reinforces trust | Consistent brand mentions, reviews, docs | Reinforcing |

Write one focused page per commercial query, not one broad page hoping to rank for ten. The 62x gap between same-topic and cross-topic pages is the single most actionable finding in the public Perplexity research.

How to Get Cited by Perplexity?

Treat Perplexity citation as a pipeline, not a tactic. Each stage has a specific output, which is how you scale it across a site or a portfolio.

- Build the query set. Pull commercial, comparison, glossary, support, and "how to" prompts from sales calls, support tickets, Google Search Console, and direct Perplexity audits. Group by intent.

- Map each query to one crawlable page. One page per query, with the query's phrasing in the H1 or first H2. Kill internal duplicates or canonicalize them.

- Lead with a direct answer. Novara Labs recommends the first 1–2 sentences deliver the core answer, with the primary keyword and 3+ data points concentrated in the first 30% of the page (Source: Novara Labs).

- Add cited claims, original data, and comparison tables. Perplexity preferentially lifts from pages that look like primary sources — original benchmarks, pricing tables, capability matrices, dated changelogs.

- Add schema by page type. Article schema for posts, Organization schema for entity consistency, HowTo for procedures, FAQPage for answer blocks (Novara Labs recommends 3–5 questions on commercial pages), Product schema with Offer and aggregateRating where applicable. Validate in Google Rich Results Test (Source: Novara Labs).

- Publish through a stable CMS or headless workflow so URLs, canonicals, and sitemaps stay consistent. Unstable URLs kill the retrieval baseline PerplexityBot builds.

- Reinforce entities off-site. Official docs, YouTube explainers, third-party comparisons, Reddit threads in relevant subreddits, community answers.

- Refresh priority pages on a monthly cadence. Not quarterly.

- Measure citation frequency with repeated runs, not single searches.

Ship citation-shaped pages across AEO, GEO, LLMO, and SEO without rebuilding your stack — Get My Site GEO Optimized.

Running this pipeline manually across a handful of pages is doable. Running it across hundreds of pages, or across a portfolio of client sites, is where the operational cost breaks content teams. Mentionwell is a blog engine that operationalizes exactly these stages — onboarding a domain, defining a site profile, running research-grounded drafts with direct-answer openings and schema, publishing into existing CMS or headless stacks, and running archive refreshes. It's designed to make the citation pipeline repeatable, not to replace editorial judgment.

Which B2B SaaS Page Types Should You Optimize First?

Prioritize by how directly a page maps to a known Perplexity query. The same-topic citation advantage (5.17% vs 0.08% per GetAISearchScore) means narrow, query-matched pages beat broad pillar pages for citation — even when pillar pages are longer and better-linked.

| Page type | Query intent served | Priority | Notes |

|---|---|---|---|

| Comparison pages (X vs Y, X alternatives) | Recommendation, shortlisting | 1 | Highest commercial value; Perplexity loves structured comparisons |

| Glossary / definition pages | Emerging terminology (AEO, GEO, LLMO) | 2 | Low effort per page, strong topical authority compounding |

| Product / use-case pages | Category and entity association | 3 | Fix thin descriptions first; 200+ words of crawlable text |

| Documentation / integration pages | Specific factual answers | 4 | Must be SSR, not SPA-only |

| Blog posts (original data, how-to) | Topical depth | 5 | Protect quality; Perplexity rewards primary sources |

| Pricing pages | Cost queries | 6 | Only if crawlable and itemized; gated pricing cannot be cited |

Comparison and glossary pages are the highest-leverage starting points because they match the exact shape of Perplexity queries and scale cleanly as programmatic SEO — as long as editorial controls prevent templated noise. Programmatic scale without quality controls produces pages that are eligible but never selected.

Which Schema and Page Structure Help Perplexity Extract Answers?

Use Article schema on posts, Organization schema for entity consistency, HowTo schema on procedural content, FAQPage schema on answer blocks, and Product schema with Offer and aggregateRating on commercial pages — and validate every template in Google Rich Results Test (Source: Novara Labs). Structure alone does not drive citations, but extractable pages have materially better odds once topical relevance is in place.

Novara Labs recommends direct-answer openings, question-format H2/H3 headings, and sections that each function as a standalone answer (Source: Novara Labs). That's the extraction pattern: one question, one paragraph, one clear claim with a named source.

Specific structural moves that matter:

- Open every section with 1–2 sentences that fully answer the heading's question.

- Use H2/H3 headings phrased as questions or concrete declarations, not marketing slogans.

- Put definitions, numbers, and named entities early in each section.

- Use compact tables for comparisons (≤8 rows, meaningful headers).

- Back claims with named sources, not "studies show".

- Use full entity names on first mention — Perplexity links pages to entities partly through co-occurrence.

Schema by use case, per Novara Labs:

| Page type | Schema | Why |

|---|---|---|

| Blog post | Article | Author, publish date, update date |

| Organization page | Organization | Entity disambiguation, sameAs links |

| How-to or procedure | HowTo | Step extraction |

| FAQ block | FAQPage (3–5 Qs) | Direct answer extraction |

| Product | Product + Offer + aggregateRating | Commercial eligibility |

Note the caveats: `llms.txt` and Mintlify-style documentation formats are sometimes marketed as citation drivers, but the public evidence does not support treating them as proven Perplexity signals. Use them where they serve users; don't stake a citation strategy on them.

How Fresh Does Content Need to Be for Perplexity?

Monthly, not quarterly, for priority pages. Cintra reports a 40% citation drop after 30 days, and Novara Labs notes that while ChatGPT and Perplexity share core tactics, Perplexity weights freshness more aggressively and priority content should be updated monthly rather than quarterly (Source: Cintra; Source: Novara Labs). Perplexity's retrieval exposes recency filters for the past hour, day, week, month, and year — meaning users can actively exclude anything older than 30 days from their results (Source: Cintra).

Build a refresh queue, not ad-hoc updates. What to update on priority pages each month:

- Dates and "last updated" timestamps in both prose and schema.

- Specific examples tied to product, pricing, or market state.

- Data points and any statistics older than 90 days.

- Screenshots and UI references that have shifted.

- Comparison tables for competitor changes.

- Documentation and API references.

- Any claim affected by a product release or policy change.

Prioritize by citation value: commercial comparison pages first, then glossary and high-intent how-to content, then evergreen blog posts. Pages with no realistic Perplexity query attached do not need monthly refreshes.

How Should You Reinforce Entity Signals Off Site Without Spam?

Entity reinforcement is not link building — it's making sure Perplexity sees a coherent picture of your brand, product, and category across the retrieval pool. Perplexity citation is unstable, and a common failure mode, per the GetAISearchScore audit, is that Perplexity can cite sources inconsistently across repeated runs — which implies a single well-placed mention is unreliable and that distributed, consistent signals are what move the needle (Source: GetAISearchScore).

Defensible off-site signals:

- Reddit discussions in topically relevant subreddits with real answers, not brand drops.

- YouTube explainers with clear titles and descriptions naming the product and category.

- Third-party reviews on category-appropriate platforms.

- Official documentation hosted on docs subdomains, properly canonicalized.

- Comparison mentions on independent comparison sites.

- Community answers on Stack Overflow, Quora, and vertical communities.

Consistency across these surfaces matters more than volume. Use the same product name, the same category phrasing, the same parent entity description everywhere. Perplexity's synthesis step rewards the signal it sees repeated across independent sources — that's how a brand shifts from "mentioned once" to "the obvious answer".

How Should Teams Test Perplexity Visibility When Citations Change?

Build a measurement workflow that assumes citations are unstable. GetAISearchScore's 90 API runs produced 658 citations across 485 domains, and 29.3% of citation appearances looked like noise — they showed up once and didn't recur (Source: GetAISearchScore). A single manual search is not evidence.

A workable audit protocol:

- Define a fixed query set grouped by intent (commercial, comparison, support, glossary).

- Run each query through the Perplexity API or Search API on a repeatable cadence — weekly for priority queries, monthly for the long tail.

- Capture timestamps, full answers, and the complete cited URL list for each run.

- Compute citation frequency (cited in N of M runs) — treat anything under ~20% as noise.

- Track four outcomes separately: crawled (in server logs), cited (URL in the API `search_results` field), mentioned (brand name in answer text), recommended (named as the answer).

Perplexity's API features make this practical. The `search_results` field automatically returns source URLs, and built-in search parameters (domain, recency, location) let you control retrieval rather than try to shape it through prompts (Source: Perplexity API documentation, via Cintra). Domain filters isolate competitor visibility. Location filters test geo-variance. Recency filters (hour, day, week, month, year) test how much of your citation rate depends on freshness.

Embeddings API can support clustering of query sets or grouping similar prompts for audit design — it is not a citation driver on its own.

| Outcome | Signal | Where to measure |

|---|---|---|

| Crawled | PerplexityBot / Perplexity-User in logs | Server access logs |

| Cited | URL in `search_results` | Perplexity API response |

| Mentioned | Brand name in answer text | Parsed answer content |

| Recommended | Named as the answer to commercial queries | Qualitative review of commercial query runs |

Don't optimize what you can't repeatedly measure — citation frequency across repeated runs is the only signal that survives Perplexity's instability.

Perplexity AI vs ChatGPT: What's the Actual Difference?

Perplexity is the research-and-citation engine; ChatGPT is the writing-and-reasoning engine. Perplexity is better suited to real-time research with current information and cited sources, while ChatGPT is stronger for writing, reasoning, coding, and multi-step tasks — which is why tactics diverge even though both are AI answer surfaces (Source: Konabayev, via synthesis).

| Engine | Retrieval | Citation behavior | Optimization priority |

|---|---|---|---|

| Perplexity | Live RAG on every query | Inline URL citations by default | Topical relevance + freshness + crawler access |

| ChatGPT | Mixed: training data + Bing retrieval when browsing | Citations when browsing; entity mentions otherwise | Bing indexation + entity consistency |

| Google AI Overviews / AI Mode | Google's index | Cited via Google's retrieval stack | Classic SEO + answer-ready structure |

| Gemini | Google's ecosystem + connectors | Variable | Same as AI Overviews + connector data |

| Claude | Limited live retrieval; Projects/Connectors contexts | Variable | Entity clarity + structured pages |

AEO, GEO, LLMO, and SEO are complementary operating modes, not competing strategies. The same citation-shaped page — direct answer, question H2s, named entities, schema, refreshed monthly — performs across engines, but the retrieval stacks and distribution levers differ. Optimize the content layer once; tune the distribution layer per engine.

For engine-specific playbooks, see the related guides on [how to show up in ChatGPT in 2026](/how-to-show-up-in-chatgpt-in-2026), [how to show up in Google Gemini in 2026](/how-to-show-up-in-google-gemini-in-2026), and [how to show up in Claude in 2026](/how-to-show-up-in-claude-in-2026). For the workflow comparison, see [AEO vs GEO vs LLMO: which workflow fits your team](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team).

If you're running this pipeline across one site or many, Mentionwell handles the operational layer — research-grounded drafts with AEO, GEO, LLMO, and SEO built in, publishing into your existing CMS or headless stack, and archive refreshes on the monthly cadence Perplexity actually rewards. Get My Site GEO Optimized.

Sources

- How to Show Up in Perplexity in 2026www.youtube.com

- How to Log In to Perplexity AI [2026 Full Guide]www.youtube.com

- The CORRECT way to use Perplexity In 2026 (Beginner ...www.youtube.com

- Does Perplexity make sense in 2026? : r/perplexity_aiwww.youtube.com

- How to Get Cited by Perplexity: The Tactical Playbook for 2026 | Cintragetaisearchscore.com