What Are Google AI Overviews, and Where Do They Appear in Google Search?

Google AI Overviews (AIOs) are AI-generated answer blocks that render above the classic blue links in Google Search. They synthesize information from multiple indexed web pages and cite a small set of sources alongside the summary. They are powered by Google's Gemini model family and are designed to compress a query into a direct answer rather than route the user to a single "best" result (Source: Oltre.ai).

Google AI Overviews are a synthesis layer, not a ranking layer — they cite several pages that collectively answer a query's fan-out, not the one page that ranks first.

Why this matters in 2026: the surface has become a primary search entry point. According to AuthorityTech, AI Overviews reached 2 billion monthly users by mid-2025 per Google CEO Sundar Pichai's Q2 2025 earnings call, and a February 2026 arXiv study it cites found AI Overview availability expanded to 229 countries (up from 7 in 2024) with exposure jumping 67% on average in already-launched markets. Prevalence estimates still vary: Conductor's analysis of 21.9 million queries found AI Overviews on 25.11% of Google searches (up from 13.14% in March 2025), while Averi.ai and Weply.chat both report 50%+ of searches in 2026 (Source: QuickSEO; Oltre.ai).

The CTR impact is the part that forces a strategy change. Seer Interactive's 25.1-million-impression study found organic CTR fell from 1.76% to 0.61% — a 61% decline — when an AI Overview appeared, and paid CTR fell 68% (Source: QuickSEO). In other words, a ranking that used to earn clicks now often earns an impression behind a summary that may or may not cite you.

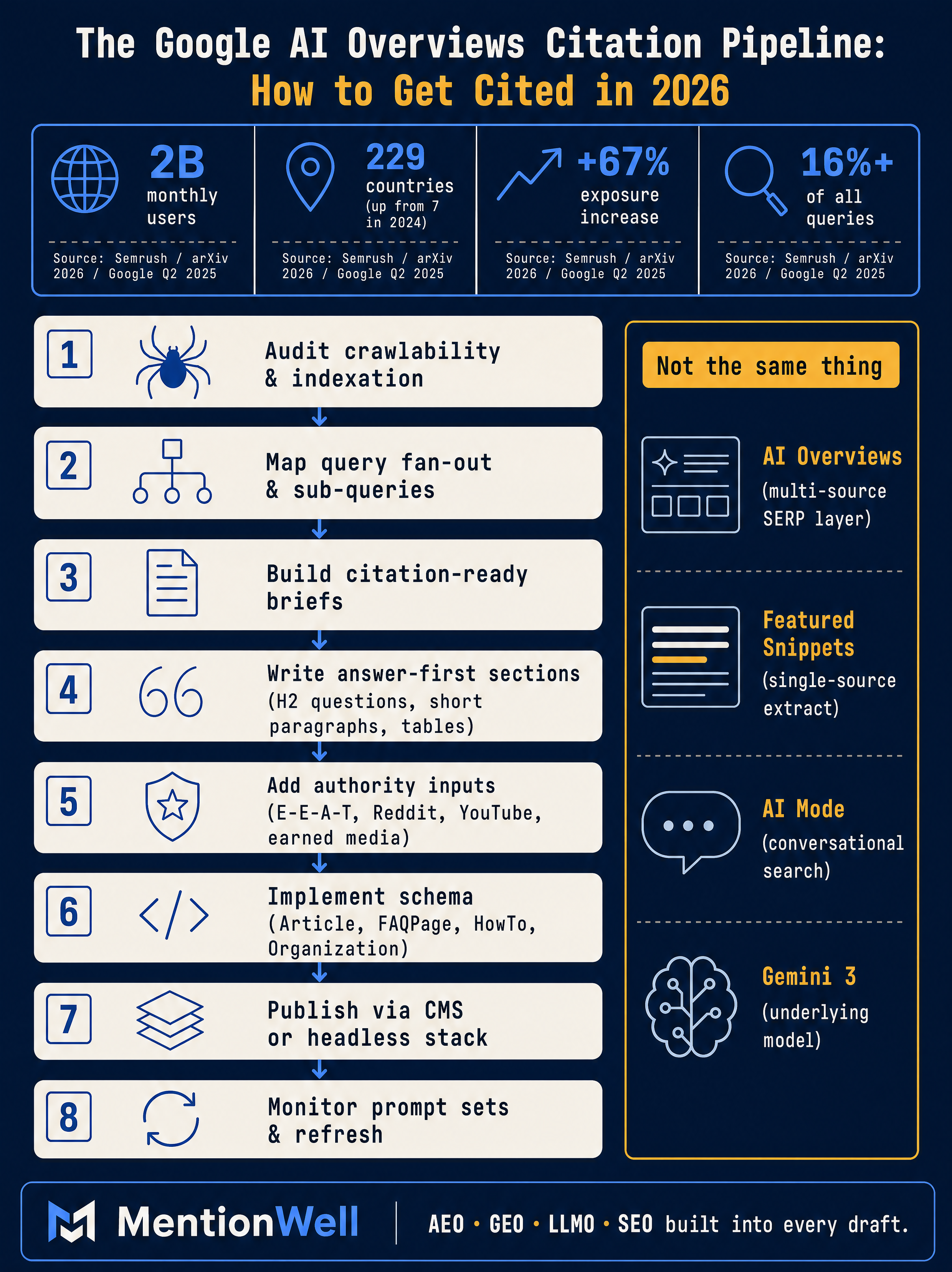

How Do You Show Up in Google AI Overviews? A 2026 Citation Pipeline

Publish semantically complete, citation-ready pages that answer multiple fan-out sub-queries with clear headings, sourced facts, and fresh updates, then run that process on a schedule instead of as a one-off campaign (Source: Oltre.ai). The single biggest mistake in 2026 is treating answer engine optimization (AEO) as a page-level edit rather than an operational pipeline.

Here is the workflow that reconciles the conflicting guidance across these sources into something repeatable:

- Audit crawlability and indexation. Confirm pages are reachable in HTML, not blocked by robots.txt, present in XML sitemaps, and not orphaned. Crawlability is a baseline prerequisite before any AIO work (Source: BASE Search Marketing).

- Map query fan-out. For each target query, enumerate the sub-questions Google is likely to decompose it into — People Also Ask items, long-tail variations, comparison prompts, and objection-style questions. Citation sets change across repeated queries, so breadth of subtopic coverage is what earns durable visibility (Source: Oltre.ai).

- Build citation-ready briefs. Each section gets a question-based H2, a direct answer block, supporting evidence, and a named source. Question-based H2s help because AIOs are triggered by questions and fan-out tries to match subtopics to pages that clearly answer them (Source: Surferstack).

- Write answer-first passages. Lead every section with a self-contained answer before context or nuance. AI extracts passages, not whole pages (Source: Surferstack). RankAI notes 78% of AI Overviews use list formatting, so ordered lists, comparison tables, and labeled steps belong inside those passages (Source: RankAI).

- Add authority inputs. Named authors with credentials, attributed statistics, original data, and links to primary sources. E-E-A-T and factual consistency with Google's Knowledge Graph are repeatedly treated as critical (Source: Oltre.ai).

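- Implement schema. JSON-LD for Article, Organization, FAQPage, and HowTo where the content genuinely matches the type (Source: ICODA). Validate with Google's Rich Results Test; for types Google no longer shows as rich results (such as HowTo), the Schema.org Markup Validator still checks the syntax.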

- Publish into your CMS or headless stack. Preserve canonical URLs, author metadata, and dated timestamps through the publishing layer.

- Monitor prompt sets. Run a fixed set of target queries against Google AI Overviews monthly; record which URLs are cited and which fan-out angles you are missing.

- Refresh on cadence. When citations rotate, statistics age, or new fan-out subtopics emerge, update the page and re-test.
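The first step, the crawlability audit, is easy to spot-check in code. Below is a minimal sketch using Python's standard-library `urllib.robotparser`, assuming you already have the robots.txt text and a list of target URLs (the domain and paths are hypothetical). The stdlib parser does plain prefix matching and does not implement Google's `*`/`$` wildcard rules, so treat it as a first pass rather than a reproduction of Googlebot's behavior.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt; in practice, fetch https://<your-domain>/robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /drafts/

User-agent: Googlebot
Disallow: /internal/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that robots.txt disallows for the given crawler."""
    parser = RobotFileParser()
    # parse() accepts an iterable of lines and marks the rules as loaded.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    targets = [
        "https://example.com/blog/ai-overviews-guide",
        "https://example.com/internal/pricing-draft",
        "https://example.com/drafts/alternatives",
    ]
    # Googlebot matches its own group, so only /internal/ is blocked for it.
    print(blocked_urls(ROBOTS_TXT, targets))
```

Because a crawler with its own group ignores the `*` group, `/drafts/` stays crawlable for Googlebot here even though it is blocked for other bots, which is a common surprise during audits.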

Mentionwell is built to operate exactly this loop: onboard a domain, define a site profile, and let the blog engine ship research-grounded articles with AEO, GEO (generative engine optimization), LLMO (LLM optimization), and SEO built into every draft, then refresh the archive when citation data changes. For multi-site operators, the same pipeline runs with consistent branding across every domain rather than as a bespoke project per client.

How Does Google Actually Select Sources for AI Overviews?

Google's AIO retrieval filters for extractability, topical authority, and trust signals rather than keyword density or domain authority alone (Source: Surferstack). In practice, that means Gemini is looking for passages it can lift cleanly, from pages that demonstrate repeated coverage of a topic, written by entities Google already associates with that topic in its Knowledge Graph.

The selection model breaks down into four reinforcing signals:

| Signal | What it means | What to build on-page |
|---|---|---|
| Extractability | Passages must stand alone as answers | Answer-first paragraphs, short sentences, lists, tables |
| Topical authority | Coverage across the full fan-out of a topic | Cluster pages across sub-queries, not one hero article |
| E-E-A-T | Experience, expertise, authoritativeness, trust | Named authors, credentials, sourced claims, dated updates |
| Factual consistency | Alignment with Knowledge Graph and trusted sources | Attribution to named studies, primary data, cross-references |

Query fan-out is the mechanism under the hood. Gemini decomposes a query into sub-queries — the kinds of questions People Also Ask surfaces, plus long-tail variations — and retrieves passages for each. According to AuthorityTech, pages ranking across multiple related fan-out query variations are 161% more likely to be cited in AIOs than pages ranking for a single query.
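To make the mechanism concrete, here is a toy Python sketch of a fan-out coverage check. It does not reproduce how Gemini decomposes queries or retrieves passages (Google does not publish that). Instead, it assumes you have already listed likely sub-queries by hand, for example from People Also Ask, and scores each one against your page sections by simple word overlap. The scoring function, the function names, and the 0.5 threshold are arbitrary assumptions.

```python
import re
from typing import Optional


def tokens(text: str) -> set[str]:
    """Lowercase word tokens longer than two characters (drops 'ai', 'to', etc.)."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if len(w) > 2}


def fan_out_coverage(
    sub_queries: list[str],
    sections: dict[str, str],
    threshold: float = 0.5,
) -> dict[str, Optional[str]]:
    """Map each sub-query to its best-matching section heading, or None when no
    section contains at least `threshold` of the sub-query's tokens."""
    coverage: dict[str, Optional[str]] = {}
    for query in sub_queries:
        q = tokens(query)
        best_heading: Optional[str] = None
        best_score = 0.0
        for heading, body in sections.items():
            score = len(q & tokens(heading + " " + body)) / len(q) if q else 0.0
            if score > best_score:
                best_heading, best_score = heading, score
        coverage[query] = best_heading if best_score >= threshold else None
    return coverage


if __name__ == "__main__":
    # Hypothetical sub-queries and page sections.
    sub_queries = [
        "what are google ai overviews",
        "can you opt out of ai overviews",
    ]
    sections = {
        "What Are Google AI Overviews?": "AI Overviews are AI-generated answer blocks in Google Search.",
        "Does Schema Markup Help?": "Schema is a clarity layer for Google.",
    }
    gaps = [q for q, h in fan_out_coverage(sub_queries, sections).items() if h is None]
    print("Uncovered fan-out angles:", gaps)
```

Any sub-query that maps to `None` is a fan-out angle the page does not yet answer, which becomes the input for the next brief.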

Off-site authority compounds this. AuthorityTech makes a stronger, single-source claim: that earned media placements in trusted publications account for the vast majority of AI Overview citations, and that brand-owned content is rarely cited. Treat that as directional rather than consensus, since other guides cited here put more weight on owned-page formatting. It does align with the broader pattern that third-party mentions on Reddit, YouTube, earned press, and reference sites reinforce Knowledge Graph confidence in your brand as a source on a topic (Source: Ali Vaezi, YouTube AEO Guide 2026).

Do You Need to Rank in the Top 10 Organic Results to Be Cited?

No — top-10 ranking is no longer a reliable prerequisite, but classic SEO is still the foundation because Google's retrieval starts from its own index of trusted, crawlable pages (Source: QuickSEO; Ali Vaezi, YouTube AEO Guide 2026).

The data is genuinely conflicting, and honest guidance has to acknowledge that:

| Source | Finding | Implication |
|---|---|---|
| Ahrefs (mid-2025) | 76% of AIO-cited pages ranked in top 10 | Ranking was a strong signal |
| Ahrefs (Feb 2026) | ~38% top-10 overlap | Decoupling is accelerating |
| BrightEdge | 17% top-10 overlap | Ranking is a minority of citations |
| iMark Infotech | 52% of AIO sources come from top 10 | Ranking still matters materially |

The operational takeaway: run a dual strategy. Keep working rankings because trusted, indexed pages remain the retrieval pool, but stop treating top-10 as the finish line. Optimize for citation across the full fan-out of a topic, not just the ranking of a single head term. That means publishing cluster content across sub-queries, formatting each section to be individually extractable, and building off-site authority in parallel.

What Content Structure Does Google AI Prefer?

Structure content so each section is independently understandable, specific, sourced, and easy to quote — question-based H2s, a direct answer immediately below the heading, then supporting lists, tables, and evidence (Source: Tech Insight Lab; Surferstack).

The extraction rules translate into a concrete page skeleton:

- Question-based H2s. AIOs are triggered by questions; headings phrased as questions match fan-out sub-queries directly (Source: Surferstack).

- Direct answer blocks. Lead with a 1–3 sentence answer before context. Length guidance varies by source (Doc Digital SEM recommends 40–60 words; Adrythm recommends 50–70 words for a TL;DR and 130–160 words for deeper "answer islands"), but the underlying principle is consistent: make the answer self-contained.

- Short paragraphs. 2–4 sentences. Passages, not prose walls.

- Lists and tables. RankAI reports 78% of AI Overviews use list formatting. Comparison tables are particularly citable for "vs" and "alternatives" queries.

- Step-by-step blocks. Numbered steps for any process, matched to HowTo schema where appropriate.

- Dated updates. Visible "last updated" timestamps signal freshness; Oltre.ai treats freshness as one of the four things AIOs reward most.

- Answer islands. Self-contained 130–160 word passages that fully address a sub-query inside a longer page (Source: Adrythm).
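A minimal Python linter for this skeleton, assuming drafts are written in Markdown with `## ` headings. It checks that each H2 is phrased as a question and that the first paragraph under it falls inside a word range; the 40–60 default follows Doc Digital SEM's guidance and can be changed to Adrythm's 50–70. The function name and report format are hypothetical.

```python
import re


def lint_answer_blocks(
    markdown: str, min_words: int = 40, max_words: int = 60
) -> list[tuple[str, int, str]]:
    """Check the first paragraph under each '## ' heading.

    Returns (heading, word_count, problems) tuples; problems is '' when the
    section passes both checks.
    """
    report = []
    # Split on level-2 headings; element 0 is any text before the first H2.
    chunks = re.split(r"^## ", markdown, flags=re.MULTILINE)[1:]
    for chunk in chunks:
        heading, _, body = chunk.partition("\n")
        heading = heading.strip()
        paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        count = len(first.split())
        problems = []
        if not heading.endswith("?"):
            problems.append("heading is not a question")
        if not min_words <= count <= max_words:
            problems.append(f"answer block has {count} words")
        report.append((heading, count, "; ".join(problems)))
    return report


if __name__ == "__main__":
    draft = (
        "## What is an AI Overview?\n\n" + "word " * 45
        + "\n\nMore context.\n\n## Pricing\n\nShort answer.\n"
    )
    for row in lint_answer_blocks(draft):
        print(row)
```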

Query-length data reinforces the structural logic. According to Tech Insight Lab, 53% of searches with 10 or more words trigger an AI Overview — long-tail, conversational queries are where AIOs concentrate, and those are exactly the queries question-based H2s are designed to match.

Does Schema Markup Help with AI Overviews?

Schema is a clarity layer, not a standalone citation trigger — it helps Google's systems understand what a page is about, but it does not replace the structural, authority, and freshness signals that actually drive AIO selection (Source: ICODA; Oltre.ai).

Implement JSON-LD for the schema types that genuinely match your content:

- Article with author, datePublished, and dateModified

- Organization or LocalBusiness for authority and entity disambiguation

- FAQPage for pages with real question-answer pairs (not repurposed prose)

- HowTo for legitimate step-by-step processes

- Product for ecommerce and SaaS product pages
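A minimal Python sketch that emits JSON-LD for two of these types. The property names (`headline`, `author`, `datePublished`, `dateModified`, `publisher`, `mainEntity`, `acceptedAnswer`) are standard schema.org vocabulary; the helper functions and sample values are hypothetical, and real pages usually carry more properties.

```python
import json


def article_jsonld(headline: str, author: str, published: str, modified: str, publisher: str) -> dict:
    """Minimal schema.org Article; dates are ISO 8601 strings."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "dateModified": modified,
        "publisher": {"@type": "Organization", "name": publisher},
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """schema.org FAQPage built from real (question, answer) pairs on the page."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data: dict) -> str:
    """Serialize a JSON-LD object into the script tag that goes in the page HTML."""
    # Escaping "</" keeps answer text from closing the script element early;
    # "<\/" is still valid JSON and decodes back to "</".
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(script_tag(article_jsonld(
        "How to Show Up in Google AI Overviews",
        "Jane Doe", "2026-01-15", "2026-03-01", "Example Co",
    )))
```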

Pair schema with the technical baseline that has to be in place before any of this matters:

- Crawlable HTML that renders without JavaScript execution barriers

- Clean robots.txt and current XML sitemaps

- Internal links that surface important pages within a few clicks of the homepage

- Mobile performance and Core Web Vitals within Google's thresholds

- Validation via Google Rich Results Test

- Canonical tags that resolve duplication cleanly
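Sitemap coverage can be spot-checked with the standard library as well. This minimal sketch uses `xml.etree.ElementTree` and assumes a plain `urlset` sitemap in the sitemaps.org 0.9 namespace (a sitemap index file would need one more level of fetching); the URLs and function names are hypothetical. It reports important pages missing from the sitemap and exposes each entry's `<lastmod>`, which is also useful for freshness checks.

```python
import xml.etree.ElementTree as ET
from typing import Optional

# Namespace defined by the sitemaps.org 0.9 protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_entries(sitemap_xml: str) -> dict[str, Optional[str]]:
    """Map each <loc> in a urlset sitemap to its <lastmod> (None if absent)."""
    root = ET.fromstring(sitemap_xml)
    entries: dict[str, Optional[str]] = {}
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        if loc:
            entries[loc] = url.findtext("sm:lastmod", default=None, namespaces=NS)
    return entries


def missing_from_sitemap(sitemap_xml: str, important_urls: list[str]) -> list[str]:
    """Return the important URLs that the sitemap does not list."""
    listed = sitemap_entries(sitemap_xml)
    return [u for u in important_urls if u not in listed]


if __name__ == "__main__":
    sitemap = (
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
        "<url><loc>https://example.com/blog/ai-overviews-guide</loc>"
        "<lastmod>2026-02-01</lastmod></url>"
        "<url><loc>https://example.com/pricing</loc></url>"
        "</urlset>"
    )
    print(missing_from_sitemap(sitemap, ["https://example.com/pricing", "https://example.com/alternatives"]))
```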

Schema does not make a weak page citable. It makes a strong page legible.

What Is the Difference Between Google AI Mode, AI Overviews, Featured Snippets, and Gemini?

These are four distinct surfaces that are often conflated. AI Overviews are a SERP answer layer inside traditional Google Search results. Google AI Mode is a separate conversational search experience where users can ask follow-up questions. Featured snippets are usually single-source extracts from one ranking page. Gemini — including Gemini 3, which RankAI notes launched as the default model powering AI surfaces — is the model family powering them all (Source: Oltre.ai; Oversearch; RankAI).

| Surface | Format | Sources | Optimization priority |
|---|---|---|---|
| Featured snippets | Single extracted passage | Typically one page | Rank in top positions; clean answer blocks |
| AI Overviews | AI-synthesized answer with citations | 3–6 sources (Source: OTT SEO) | Fan-out coverage, extractable passages, authority |
| Google AI Mode | Conversational follow-up experience | Indexed webpages | Same as AIO plus conversational coherence |
| Gemini | Model family | N/A (powers the surfaces) | N/A |

Search Generative Experience (SGE) is the earlier lineage that preceded AI Overviews and AI Mode; the terminology has largely been retired in favor of the current surface names.

The practical point: a page that is genuinely citation-ready for AI Overviews tends to carry over well to Google AI Mode and Gemini app answers, because all three pull from the same index and reward the same extractability and authority signals. Model-specific citation strategy for ChatGPT, Claude, Perplexity, Copilot, and Grok follows the same pipeline logic with different retrieval sources, and is a topic worth covering in its own right.

How Should B2B SaaS Teams Optimize Comparison, Alternatives, Pricing, and Category Pages?

Commercial pages need the same citation-readiness treatment as informational content — neutral criteria, clear category definitions, sourced claims, and structured tables — because answer engines need evidence and specificity, not thin templated copy (Source: Oltre.ai; Surferstack).

The conversion case is real. According to Superlines' compiled 2025–2026 studies cited by QuickSEO, AI Overview traffic converts at 14.2% versus 2.8% for traditional organic search, and brands cited inside AI Overviews earn 35% more organic clicks and 91% more paid clicks than uncited competitors on the same query. Commercial queries are where AEO moves the P&L.

How to make each page type citable:

- Category pages. Open with a precise definition of the category, then a short rubric of the evaluation criteria that actually matter. Follow with a structured comparison table.

- Alternatives pages. Use neutral language, disclose your own inclusion, and list concrete differentiators per option. AI Overviews are wary of vendor-authored puffery; extractable neutrality gets cited.

- Pricing explainers. Break pricing into plan tiers, included seats, usage limits, and common add-ons. Concrete numbers are extractable; "contact sales" is not.

- Implementation and integration pages. Name every integration partner by full proper name. Entity clarity drives co-occurrence signals.

- Vendor selection content. Question-based H2s matching real buyer queries ("what to look for in X", "how to evaluate Y"), each with a direct answer block.

Programmatic SEO still works here, but only with strong editorial controls. Thin templated pages across hundreds of comparison permutations will not survive AIO selection — the pages that get cited are the ones with real criteria, sourced claims, and genuine differentiation per permutation.

How Do AI Overviews Impact SEO Measurement, CTR, and Refresh Cadence?

Traditional rank-tracking and organic CTR are no longer sufficient — you need a KPI model that tracks AI Overview presence, cited URLs, prompt-set results, and downstream conversion quality alongside classic SEO metrics (Source: QuickSEO).

A practical KPI model for 2026:

| Metric | What it measures | Data source |
|---|---|---|
| AIO presence rate | % of target queries that trigger an AIO | Manual SERP checks, prompt-set tools |
| Citation rate | % of AIO-triggered queries where you are cited | Manual SERP checks |
| Share of voice | Your citations vs. competitors on shared queries | Prompt-set tracking |
| Cited URL mix | Which specific pages are getting cited | Manual SERP checks |
| Organic CTR delta | CTR change on queries with vs. without AIOs | Google Search Console |
| Assisted conversions | Downstream conversions from AIO-referred sessions | Analytics attribution |
| Refresh debt | # of target pages with stale data or lost citations | Internal tracking |
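The first three rows of this table can be computed from a fixed prompt set. This minimal sketch assumes you record each check as a query, whether an AI Overview appeared, and the domains it cited. The `PromptResult` record, the function name, and the domains are hypothetical, and share of voice here counts each tracked domain at most once per query.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class PromptResult:
    """One SERP check for a target query (hypothetical record format)."""
    query: str
    aio_present: bool
    cited_domains: list[str] = field(default_factory=list)


def aio_kpis(results: list[PromptResult], our_domain: str, competitors: list[str]) -> dict[str, float]:
    """Compute AIO presence rate, citation rate, and share of voice."""
    with_aio = [r for r in results if r.aio_present]
    cited = [r for r in with_aio if our_domain in r.cited_domains]
    # Count each tracked domain at most once per query, then compare our
    # citations with the total across us and the tracked competitors.
    tracked = {our_domain, *competitors}
    counts = Counter(d for r in with_aio for d in set(r.cited_domains) if d in tracked)
    tracked_total = sum(counts.values())
    return {
        "aio_presence_rate": len(with_aio) / len(results) if results else 0.0,
        "citation_rate": len(cited) / len(with_aio) if with_aio else 0.0,
        "share_of_voice": counts[our_domain] / tracked_total if tracked_total else 0.0,
    }


if __name__ == "__main__":
    checks = [
        PromptResult("best crm for startups", True, ["us.example", "rival.example"]),
        PromptResult("crm pricing comparison", True, ["rival.example", "wiki.example"]),
        PromptResult("what is a crm", False),
        PromptResult("crm alternatives", True, ["us.example"]),
    ]
    print(aio_kpis(checks, "us.example", ["rival.example"]))
```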

Expect CTR compression on AIO-triggered queries. According to upGrowth research tracked across 150+ campaigns and cited by Surferstack, traditional blue-link CTR drops by 25–40% when an AI Overview is present. That is not recoverable by ranking harder — it is recoverable by being inside the summary.
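The organic CTR delta can be computed from exported query data. This minimal sketch assumes each row is (clicks, impressions, has_aio) for one query. To our knowledge, Search Console's Performance report does not label which queries showed an AI Overview, so the `has_aio` flag is assumed to come from your own SERP checks. The function names and sample numbers are invented for illustration. Because the two groups are different queries, the result shows an association rather than a causal effect.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def organic_ctr_delta(rows: list[tuple[int, int, bool]]) -> tuple[float, float, float]:
    """Compare impression-weighted CTR on queries with vs. without an AI Overview.

    Each row is (clicks, impressions, has_aio). Returns
    (ctr_with_aio, ctr_without_aio, relative_change).
    """
    with_clicks = sum(c for c, _, aio in rows if aio)
    with_impr = sum(i for _, i, aio in rows if aio)
    without_clicks = sum(c for c, _, aio in rows if not aio)
    without_impr = sum(i for _, i, aio in rows if not aio)
    ctr_with = ctr(with_clicks, with_impr)
    ctr_without = ctr(without_clicks, without_impr)
    change = (ctr_with - ctr_without) / ctr_without if ctr_without else 0.0
    return ctr_with, ctr_without, change


if __name__ == "__main__":
    rows = [(30, 1000, False), (20, 1000, False), (10, 1000, True), (5, 1000, True)]
    with_ctr, without_ctr, change = organic_ctr_delta(rows)
    print(f"with AIO {with_ctr:.2%}, without {without_ctr:.2%}, change {change:+.0%}")
```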

Refresh cadence should be driven by signal, not calendar:

- Monthly prompt-set retests for volatile or high-value target queries.

- Quarterly archive audits to flag pages with aging statistics, lost citations, or shifted fan-out.

- Trigger-based refreshes when a citation rotates out, a new competitor enters the summary, or a referenced study gets superseded.
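The trigger-based rules can be expressed as a small function that turns monthly prompt-set results into a refresh queue. This is a minimal sketch. The `PageStatus` record, its fields, and the 365-day statistic-age threshold are hypothetical assumptions that you should adapt. The "study superseded" check is a manual flag because it usually needs editorial judgment.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PageStatus:
    """Hypothetical per-page record assembled from monthly prompt-set checks."""
    url: str
    cited_last_month: bool
    cited_this_month: bool
    oldest_stat_date: date  # date of the oldest statistic quoted on the page
    new_competitor_cited: bool = False
    study_superseded: bool = False  # set manually when a referenced study is replaced


def refresh_reasons(page: PageStatus, today: date, max_stat_age_days: int = 365) -> list[str]:
    """List every refresh trigger that applies to one page."""
    reasons = []
    if page.cited_last_month and not page.cited_this_month:
        reasons.append("citation rotated out")
    if page.new_competitor_cited:
        reasons.append("new competitor in summary")
    if page.study_superseded:
        reasons.append("referenced study superseded")
    if (today - page.oldest_stat_date).days > max_stat_age_days:
        reasons.append("statistics older than threshold")
    return reasons


def refresh_queue(pages: list[PageStatus], today: date) -> list[tuple[str, list[str]]]:
    """Return (url, reasons) for every page with at least one trigger."""
    queue = []
    for page in pages:
        reasons = refresh_reasons(page, today)
        if reasons:
            queue.append((page.url, reasons))
    return queue
```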

This is where archive refreshes stop being a backlog project and start being a pipeline stage. Mentionwell treats refreshes as a first-class stage alongside new publishing — when a page's citations rotate or its data ages, it goes back through the pipeline instead of decaying in the archive.

Can You Opt Out of AI Overviews?

There is no selective opt-out that keeps you fully eligible for normal organic snippets. The `nosnippet` meta directive and `data-nosnippet` attributes both limit what Google may display, but they differ in scope. `nosnippet` applies to the whole page and also removes its normal organic description snippet, which typically reduces regular CTR. `data-nosnippet` excludes only the HTML sections it marks (Source: Analyze AI).
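Before making an opt-out decision, it helps to know what a page already declares. This minimal Python sketch uses the standard-library `html.parser` to read `robots` and `googlebot` meta tags, including the `nosnippet` and `max-snippet` directives, and to count `data-nosnippet` elements. It does not check the `X-Robots-Tag` HTTP header, which can carry the same directives. The class and function names are hypothetical.

```python
from html.parser import HTMLParser
from typing import Optional


class SnippetControlAudit(HTMLParser):
    """Collect robots meta directives and data-nosnippet usage from one page."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()
        self.data_nosnippet_count = 0

    def handle_starttag(self, tag: str, attrs: list[tuple[str, Optional[str]]]) -> None:
        # HTMLParser lowercases tag and attribute names; valueless attributes map to None.
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        if tag == "meta" and name in {"robots", "googlebot"}:
            for part in (attr.get("content") or "").split(","):
                if part.strip():
                    self.directives.add(part.strip().lower())
        if "data-nosnippet" in attr:
            self.data_nosnippet_count += 1


def audit_snippet_controls(html: str) -> dict:
    """Report page-level nosnippet/max-snippet directives and data-nosnippet elements."""
    parser = SnippetControlAudit()
    parser.feed(html)
    parser.close()
    max_snippet = next((d for d in parser.directives if d.startswith("max-snippet")), None)
    return {
        "nosnippet": "nosnippet" in parser.directives,
        "max_snippet": max_snippet,
        "data_nosnippet_elements": parser.data_nosnippet_count,
    }
```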

Treat opt-out as a narrow legal, compliance, or content-control decision — a paywalled publisher protecting licensed content, a site with regulatory constraints, or a page where summarization creates real liability. For most B2B and SaaS teams, the better answer is to make sure that when Google summarizes your topic, your page is one of the cited sources rather than one of the absent ones.

If you want that pipeline running across your site (audit, fan-out mapping, answer-first drafting, schema, publishing, and monthly refreshes), Mentionwell operates it as a managed blog engine built for AEO, GEO, LLMO, and SEO together.

Sources

- How to Get Featured in Google AI Overviews 2026 - AuthorityTech (authoritytech.io)

- How to Show Up in AI Overviews: SEO Guide for 2026 - LinkedIn (www.linkedin.com)