What Is DeepSeek AI, and What Does It Mean to Show Up There?

DeepSeek AI is a Hangzhou-based AI lab that ships open-weight mixture-of-experts language models, a consumer chatbot called DeepSeek Chat, and a developer API (Source: DeepSeekAI.guide). "Showing up in DeepSeek" is not one thing — it splits into three distinct visibility modes, and the workflow to earn each is different.

- Mention from model knowledge. Your brand, product, or category surfaces because it existed in training data at the time of the last pretraining cut. You cannot submit to this; you can only influence it by being discussed widely and consistently across the open web before a training run.

- Retrieval and citation with a link. A DeepSeek-powered surface calls an external search or retrieval layer, pulls your page, and attributes an answer to it. This is where on-page structure, entity clarity, and schema actually move the needle.

- Appearance inside a DeepSeek-powered application. A third-party product built on the DeepSeek API routes queries to your content through its own retrieval and citation logic.

The operator goal in 2026 is citation visibility in retrieval contexts, not trying to "get into" the base model. Classic SEO still matters because retrieval layers usually reach your content through conventional web indexes — but AEO, GEO, and LLMO are what make a page extractable once retrieval finds it.

Which DeepSeek Surface Should You Optimize For First?

Prioritize the surface that matches how your buyers already query AI, then widen coverage. DeepSeek exposes three entry points, and the visibility mechanics differ at each one (Source: DeepSeekAI.guide).

| Surface | Access | State | Visibility path | Who should prioritize |

|---|---|---|---|---|

| Web chat | `chat.deepseek.com` via Chrome, Firefox, Edge, Safari | Server-side history per account | Training-data mentions; any built-in retrieval | Marketing teams testing brand presence |

| Mobile app | Apple App Store, Google Play | Server-side history, voice input, push sync | Same as web chat | Teams validating mobile-query behavior |

| Developer API | `https://api.deepseek.com` via `platform.deepseek.com` | Stateless; resend full message array each call | Whatever retrieval the calling app implements | Product teams and operators running automated tests |

The chat account at `chat.deepseek.com` and the developer account at `platform.deepseek.com` are two separate sign-in surfaces with non-interchangeable credentials — you register each one individually, even with the same email (Source: DeepSeekAI.guide). The web chat tier is free; the API is pay-per-token and requires a topped-up balance before the first call runs.

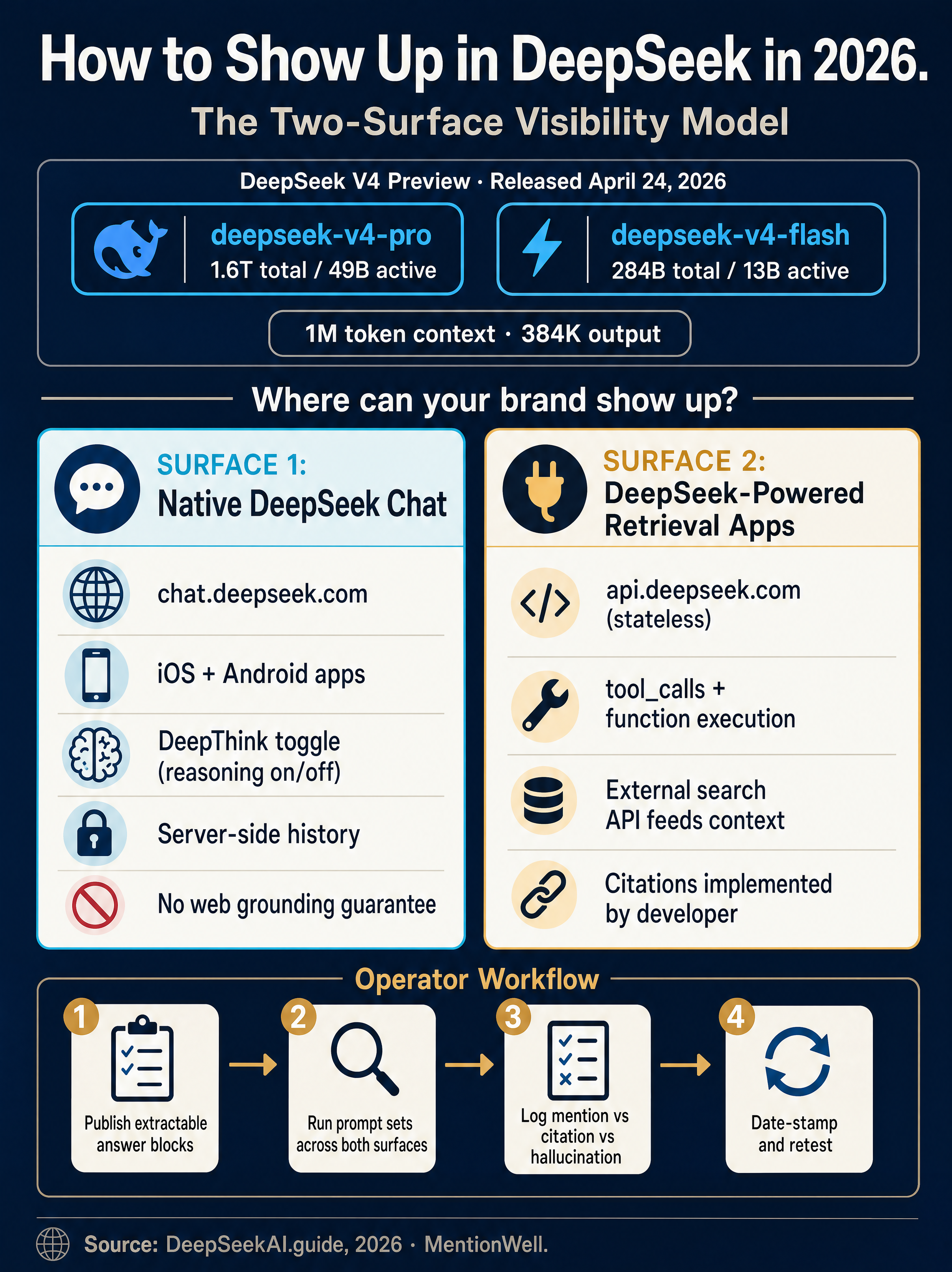

On web and app, DeepSeek V4 is the default model with no picker — users control behavior with the DeepThink toggle. On the API, you select model behavior with the model ID: `deepseek-v4-pro` for maximum capability, `deepseek-v4-flash` for cost-efficient throughput (Source: DeepSeekAI.guide).

Does DeepSeek Publish Official Website Ranking or Citation Factors?

No. The research corpus surfaces no official DeepSeek documentation for website crawling, indexing, direct site submission, ranking weights, or citation-selection factors. Any vendor claiming deterministic "DeepSeek ranking factors" is overstating what DeepSeek has publicly disclosed.

This matters operationally. Your brand's visibility inside a DeepSeek answer is mediated through three indirect channels:

- Training data — whatever public web content existed before the last pretraining cutoff for that model generation.

- External retrieval — search APIs and tool calls that DeepSeek-powered applications connect themselves.

- API integrations and custom RAG layers — third-party products that choose which sources they pull and cite.

There is no "submit-to-DeepSeek" workflow the way Google Search Console accepts sitemaps. Plan your content strategy around being indexable and extractable by the retrieval systems that feed DeepSeek, not around a direct publisher relationship with DeepSeek itself. This is the same posture Mentionwell recommends for Claude, Gemini, and other generation engines that do not expose a publisher portal — see our companion guide on [AEO vs GEO vs LLMO](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team) for the underlying framework.

How Does DeepSeek Chat Generate Answers?

DeepSeek Chat is a transformer-based prediction system that tokenizes input, uses conversation context, and predicts the most likely next tokens — it does not "know" facts in the human sense. For marketers, the critical consequence is this: asking DeepSeek about your brand does not teach it anything, because DeepSeek Chat does not learn from individual conversations unless the underlying model is retrained.

That reframes several common (and wrong) tactics. "Talking to DeepSeek about our product" in hopes of influencing future answers is a dead end. Prompting the chatbot to remember your positioning is session-local at best. Server-side chat history persists per account on `chat.deepseek.com` and the mobile app, but that history informs your future conversations, not the global model.

API calls are a further step removed. The API is stateless; to maintain a multi-turn conversation, clients must resend the full message array on every request (Source: DeepSeekAI.guide). Nothing a caller sends becomes training data by default.

The leverage point, then, is not the conversation — it is the public web content that exists before a DeepSeek training run and the retrieval layers that pull live sources during inference.

When Should I Toggle DeepThink On?

DeepThink is a behavior switch, not a citation control. Toggle it on for multi-step math, code debugging, planning tasks, long-context reasoning, and multi-document comparisons where deliberate chain-of-thought improves output quality (Source: DeepSeekAI.guide). Leave it off for fast drafting, short answers, and casual chat.

Labels like DeepThink, Expert Mode, or Instant Mode do not change whether DeepSeek will cite a source — they change how the model reasons before producing one. In product contexts built on the API, final answers and reasoning traces are typically returned on separate response fields, and reasoning traces should not be shown to end users by default.

Does DeepSeek Have Built-In Web Grounding and Citations?

Treat DeepSeek as a generation engine, not a turnkey search engine with guaranteed website citations. The core Open Platform materials reviewed in the research corpus do not advertise a built-in web-search grounding facility, and search-style DeepSeek implementations typically require teams to call an external search API, feed results back into the model, and implement citation or attribution rules themselves.

That has real implications for how your content gets surfaced:

- No guaranteed first-party citation surface. Unlike Google AI Overviews or Perplexity, there is no documented DeepSeek-native citation UI that reliably links out to publisher pages.

- Retrieval lives in the application layer. When a DeepSeek-powered product answers with a source, that source was chosen by the app's retrieval stack — not by DeepSeek's core model.

- Tool calls are how applications wire in search. The standard non-thinking tool-call flow is: define available functions, inspect whether the model response includes `tool_calls`, run the tool in the application, and send the result back with a matching `tool_call_id`.

What Content Should Companies Publish So DeepSeek Can Extract Accurate Answers?

Publish pages that are structurally extractable: direct answers on top, named entities throughout, source-backed claims, and schema that declares what each page is. This is the overlap where AEO (extractable answers), GEO (generative retrieval), LLMO (language-model optimization), and classic SEO (discoverable and crawlable) stack together — retrieval layers in front of DeepSeek reward all four.

A practical publishing spec for DeepSeek-adjacent visibility:

- Concise answer blocks. Open every key page with a 1–2 sentence direct answer to the implicit question. Retrieval systems lift these.

- Canonical product descriptions. One authoritative description of what your product is, refreshed on release cycles, consistent across site, press, and docs.

- Category and term definitions. Glossary-style pages that name the category, list synonyms, and anchor your product inside it.

- Use cases, integrations, and alternatives. Named, entity-rich pages for each — these are the pages retrieval systems match to comparison prompts.

- Pricing context. Even if exact prices change, publish the shape (tiers, metering, free vs paid) in text, not just in images.

- Source-backed claims. Every statistic or benchmark cites its origin. Unsourced numbers get dropped by careful retrievers.

- Schema and machine-readable metadata. `Organization`, `Product`, `FAQPage`, `Article`, and `SoftwareApplication` where applicable.

- API-readable documentation. Clean Markdown or well-structured HTML with stable headings — developer RAG systems often ingest docs directly.

Entity consistency is the highest-leverage lever: the same product name, the same company name, the same category terminology, spelled the same way, on every page and every surface. Inconsistent entity spellings split the retrieval signal and make your brand harder to cite confidently.

This is where a content engine earns its keep. Publishing twelve entity-complete pages once is easy; keeping forty glossary terms, eight comparison pages, and a dozen integration pages current across quarterly model releases is where manual workflows collapse.

How to Test Whether DeepSeek Can Mention or Cite Your Brand in 2026

Run a controlled prompt matrix across every DeepSeek surface your buyers actually use, then separate four distinct outcomes: mention, citation, recommendation, and hallucinated reference. Log each test with enough metadata that you can re-run it after the next model release.

The outcomes you are measuring:

| Outcome | What it means | What moves it |

|---|---|---|

| Mention | Brand named in the answer, no link | Training-data presence, entity clarity on the open web |

| Citation | Answer attributes a claim to a specific page with a link | Retrieval layer; on-page structure and indexability |

| Recommendation | Model suggests your product for a use case | Category authority, comparison pages, third-party mentions |

| Hallucinated reference | Fabricated URL, wrong attribution, or invented facts | Entity ambiguity, thin documentation, outdated content |

What to log for every test:

- Date and time

- Surface: native DeepSeek Chat, mobile app, API, or DeepSeek-powered third-party app

- Model ID used (`deepseek-v4-pro`, `deepseek-v4-flash`, or legacy IDs like `deepseek-chat` / `deepseek-reasoner`)

- DeepThink on/off

- Whether any search tool or external retrieval was invoked

- Prompt text verbatim

- Full answer output

- Whether the answer cited an owned page, a third-party page, or nothing

Run the same matrix on ChatGPT, Claude, Gemini, Copilot, and Perplexity so you see cross-engine patterns. A brand that gets cited in [ChatGPT](/how-to-show-up-in-chatgpt-in-2026) and [Perplexity](/how-to-show-up-in-perplexity-in-2026) but not DeepSeek usually has a retrieval-layer problem specific to the apps your buyers are using.

Step-by-Step Test Loop for V4-Pro, V4-Flash, DeepThink, and Tool-Call Contexts

- Choose 20–40 prompts covering brand, category, comparison, and use-case intent.

- Call the OpenAI-compatible API using the OpenAI SDK with base URL `https://api.deepseek.com` (Source: Yuv.ai).

- Run each prompt twice — once with `deepseek-v4-pro`, once with `deepseek-v4-flash` — holding everything else constant.

- For multi-turn prompts, resend the full message array on every call (the API is stateless).

- Repeat with DeepThink-equivalent reasoning mode on, then off.

- Add a retrieval layer: wire a search API through tool calls and re-run, recording which sources the app selects and cites.

- Log every failure mode (hallucinations, stale facts, missing citations) against the owned page that should have been surfaced.

Any modern dev environment works — Python, JavaScript, TypeScript, Java, or C++ in VS Code is fine. The goal is a repeatable loop, not a polished product.

DeepSeek vs ChatGPT: Which Visibility Workflow Changes?

The workflow differences are retrieval-layer differences, not model-quality differences. ChatGPT integrates with Bing and OpenAI's own browsing; Claude integrates through Anthropic's Projects, Connectors, and web search; Gemini pulls directly from Google Search and Vertex AI; DeepSeek has no comparable first-party grounded-search surface documented in the research corpus.

| Engine | Primary retrieval path | What you optimize |

|---|---|---|

| ChatGPT | Bing + OpenAI browsing | Bing indexation, entity consistency, citable structure |

| Claude | Anthropic web search, Projects, Connectors | Clean HTML, entity pages, archive freshness |

| Gemini | Google Search, Vertex AI | Core Web Vitals, schema, AI Overviews eligibility |

| DeepSeek | App-layer search API + tool calls | General web indexability; retrieval-ready page structure |

| Perplexity | Proprietary index + live web | Direct-answer openings, source-backed claims |

Practically, a company that already publishes for ChatGPT, Gemini, and Claude visibility is already publishing for DeepSeek — because the retrieval systems that DeepSeek-powered apps call are the same web indexes and vector stores the other engines touch. The delta is mostly in testing and measurement, not in a separate content program. Mentionwell's companion guides cover each engine individually: [Google AI Overviews](/how-to-show-up-in-google-ai-overviews-in-2026), [Microsoft Copilot](/how-to-show-up-in-microsoft-copilot-in-2026), [Grok](/how-to-show-up-in-grok-in-2026), [Gemini](/how-to-show-up-in-google-gemini-in-2026), [Claude](/how-to-show-up-in-claude-in-2026), and [ChatGPT](/how-to-show-up-in-chatgpt-in-2026).

How to Keep DeepSeek Visibility Current as Models, APIs, and Search Behavior Change

Treat every DeepSeek fact in your playbook as date-stamped. The research in this article reflects the April 24, 2026 DeepSeek V4 Preview release (Source: DeepSeekAI.guide); model names, context windows, pricing, and endpoint behavior have changed before and will change again.

A recurring refresh workflow should cover:

- Model-name drift. Track the live roster: `deepseek-v4-pro`, `deepseek-v4-flash`, legacy `deepseek-chat`, `deepseek-reasoner`, and specialist variants like DeepSeek Coder.

- Context and output limits. Re-test when DeepSeek ships a new generation — V4's 1,000,000-token input and 384,000-token output targets may shift (Source: DeepSeekAI.guide).

- Endpoint stability. Confirm the API base URL, auth flow, and OpenAI-compatibility surface each quarter.

- Search and tool behavior. Re-run your prompt matrix when new thinking modes, search toggles, or grounding features ship natively.

- Third-party DeepSeek-powered apps. Inventory which products your buyers actually use and test them directly — their retrieval policies evolve independently of DeepSeek itself.

- Owned-content freshness. Republish entity pages, comparison pages, and glossary terms on a known cadence so retrievers see recent `dateModified` signals.

Local deployment via Ollama with MIT-licensed open weights (Source: Yuv.ai) is a legitimate implementation path for internal tools, but it is not a public-retrieval visibility surface — nothing you run locally influences what the hosted DeepSeek Chat or public DeepSeek-powered apps will surface for your buyers.

DeepSeek visibility is not a one-time optimization; it is a publishing cadence plus a testing loop that survives every model release. Mentionwell runs that cadence as an automated blog engine — research-grounded drafts with AEO, GEO, LLMO, and SEO structure built in, shipped into your existing CMS or headless stack, with archive refreshes scheduled against model-release calendars. Get My Site GEO Optimized and keep citation-ready content current without rebuilding your workflow every quarter.

Sources

- How To Login Into DeepSeek AI [2026 Guide]deepseekai.guide

- How To Use DeepSeek AI [2026 Full Guide]deepseekai.guide