What Does "Show Up in Claude" Mean in 2026?

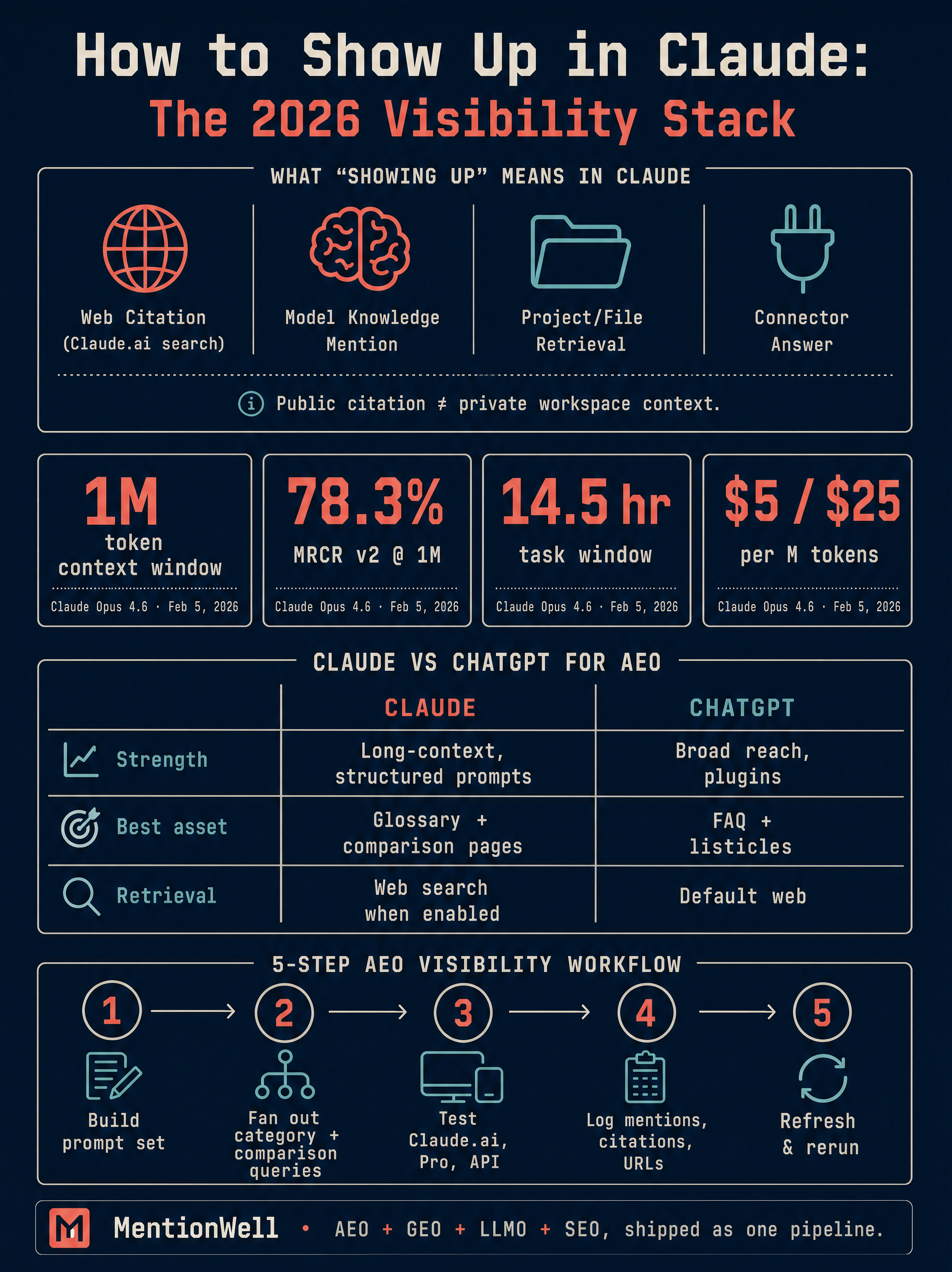

Showing up in Claude means one of five specific things: being named from Claude's trained model knowledge, being cited through Claude.ai web search, being retrieved from a Claude Connector, being pulled from a file uploaded to Claude Projects, or being surfaced through a third-party wrapper that routes to Claude. These are not interchangeable. Each surface has different eligibility rules, and optimizing for one does not automatically produce visibility in the others.

The distinction that matters most is public-web citation versus private workspace context. A sales deck uploaded to a Claude Project is retrievable inside that workspace, but it will never be cited to a stranger running a Claude.ai prompt. A public glossary page with a clean entity definition can be surfaced by Claude web search for any user — if that user's product surface includes web retrieval at all. Treat these as two separate visibility programs.

Anthropic's product surface matters because the company ships fast. According to The AI Corner, Anthropic shipped a major Claude release roughly every two weeks since January 2026, with 52 days of product releases tracked from February 1 to March 24, 2026 (Source: Product Compass Newsletter, via The AI Corner). What's routable to a live web citation this month may be gated behind a different plan or feature next month. "Showing up in Claude" is not one outcome — it's a portfolio of outcomes across Claude.ai, Claude API, Claude Code, Projects, Connectors, and third-party endpoints, each requiring its own content and testing strategy.

Which Claude Surfaces Matter for Visibility?

Only a subset of Claude surfaces can produce a public citation to your content. The rest are either private workspace contexts, where retrieval happens inside a user's own files and connected accounts, or developer and agent environments where your content is unlikely to surface unless it's already part of the open web corpus Claude's search layer can reach.

Here is the Claude surface map for visibility work, scoped to what the research corpus actually supports. Feature availability is drawn from Anthropic documentation and, where noted, from dated reporting by The AI Corner; capability claims from creator tutorials are excluded:

| Surface | Visibility type | Can cite public web? | Optimization target |

|---|---|---|---|

| Claude.ai with web search | Public citation | Yes, via Claude web search | Citation-shaped pages, entity clarity |

| Claude API | Model knowledge | No live web by default | Entity presence in training corpus |

| Claude Code | Developer tool | No | Not a public visibility channel |

| Claude Projects | Private workspace | Retrieves uploaded files only | User-owned content |

| Claude Connectors (Gmail, Google Calendar, Stripe, PayPal) | Private workspace | No | User-owned accounts |

| Claude Artifacts / Skills / Plugins | Output and action layer | No | Not a visibility channel |

| Third-party wrappers (Poe, Brave Leo, DuckDuckGo AI Chat, Cursor, Windsurf, GitHub Copilot) | Public surface routing to Claude | Depends on wrapper's retrieval layer | Same as Claude.ai |

Plan tier changes what you can test, not what gets cited. According to AI Foundations, the Claude free plan includes Claude Sonnet, web search, file uploads, and artifacts. StartupHub.ai reports that Claude.ai's free tier uses a rolling 5-hour message window with typically 30 to 50 messages per window before throttling. Claude Pro, Claude Max, Claude Team, and Claude Enterprise expand limits and unlock features like Projects and Connectors, but your citation surface is the same web-search layer a Free user sees.

Agent and action features shipped by Anthropic in 2026 — named by The AI Corner as Cowork, Dispatch, Computer Use, Channels, Scheduled Tasks, Agent Teams, and Plugins — are execution and automation layers, not ranking surfaces. They can call tools that fetch public pages, but that's downstream of the same Claude web search behavior you'd test on Claude.ai directly. Treat them as user workflow features, not as separate visibility channels.

For public Claude visibility, the only surfaces that matter are Claude.ai with web search enabled and the third-party wrappers that route to Claude with their own retrieval layer. Everything else is either a testing environment or a private context your content strategy can't reach.

Can Claude Cite Public Web Pages?

Yes — but only when the product surface a user is running includes a web search or retrieval layer. Claude's base model, accessed through the Claude API without tools, answers from trained knowledge and does not cite live URLs. Claude.ai with web search enabled, and third-party wrappers like Brave Leo or DuckDuckGo AI Chat that layer their own retrieval, can produce answers that reference and link to public web pages.

There are four distinct sourcing paths inside Claude, and they should not be confused:

- Base model knowledge — Claude answers from what was in its training data. Your brand or content is either present in that corpus or it isn't. No live retrieval happens.

- Live web retrieval — Claude.ai web search fetches current pages in response to a prompt. This is where public-web citations happen.

- Connected-source retrieval — Claude Connectors pull from a user's own Gmail, Google Calendar, Stripe, or PayPal. Private only.

- Uploaded-file retrieval — Claude Projects retrieve from files a user has uploaded to that workspace. Private only.

What the public sources do not support is any detailed claim about how Claude's web search layer chooses which pages to cite. Anthropic has not published a ranking algorithm, and the creator tutorials in our research corpus describe Claude features and usage rather than citation mechanics. Treat Claude's web citation behavior as an observable but unpublished ranking system — measure what gets cited for your category, don't assume a documented algorithm exists.

Third-party wrappers add a wrinkle. According to StartupHub.ai, Claude is accessible through Poe, Brave Leo, DuckDuckGo AI Chat, Cursor, Windsurf, and GitHub Copilot's Claude option. Each wrapper applies its own system prompt, retrieval layer, and filtering — so citation behavior in Brave Leo is not the same as citation behavior in Claude.ai, even when both are calling Claude Sonnet underneath.

How Should a Page Be Structured So Claude Can Extract the Answer?

Structure pages the way Anthropic tells developers to structure prompts: clear, explicit, entity-labeled, example-backed, and unambiguous in format. The same patterns that help Claude parse a prompt help Claude extract a clean citation-ready passage from a public page.

According to Anthropic's prompt engineering documentation, Claude responds well to clear, explicit instructions, and users should explicitly request desired behavior rather than relying on Claude to infer it. Anthropic also states that examples are one of the most reliable ways to steer Claude's output format and that XML tags help Claude parse complex prompts unambiguously when they mix instructions, context, examples, and variable inputs. Translate that into public-page structure:

- Open every H2 with a direct answer. One or two sentences that fully answer the implicit question, readable in isolation.

- Name entities in full on first mention. "Claude Opus 4.6" before "Opus", "Anthropic" before "they". Entity co-occurrence is how models decide what's topically connected.

- Back statistics with a source. Unattributed numbers get ignored by answer engines.

- Use numbered lists for processes. Claude lifts numbered steps directly into step-by-step answers.

- Use comparison tables for options. Multi-column comparisons are easy to extract and hard to hallucinate around.

- Use explicit section labels. Section headings should describe the answer, not the topic.

The same long-context capabilities that make Claude strong for writing also raise the bar on your pages. According to The AI Corner, Claude Opus 4.6 launched on February 5, 2026 with a 1 million token context window, 78.3% on MRCR v2 at 1M tokens, a 14.5 hour task completion window, API pricing at $5/$25 per million tokens, and 128K max output tokens (Source: Anthropic, via The AI Corner). Claude can ingest a full site section before answering — which means thin, duplicative pages get compared against each other in one pass. Your strongest page on a topic should be unambiguous; your weaker pages will lose the comparison.

Pages that get extracted cleanly by Claude look like well-structured prompts: a clear role, explicit context, a stated goal, a numbered or tabular format, and a short, citable summary sentence.

Get your site structured for Claude, ChatGPT, Gemini, and Perplexity citations in one operational pipeline — Get My Site GEO Optimized.

Which Content Assets Are Most Useful to Claude?

Claude extracts cleanly from assets with tight scope, clear entity definitions, and source-backed claims. That favors a specific asset mix — glossary pages, comparison pages, documentation, tutorials, data studies, and narrowly scoped explainers — over generic top-of-funnel blog volume.

Rank your content investments by how cleanly Claude can reuse them:

| Asset type | Claude extraction quality | Why it works |

|---|---|---|

| Glossary / term definitions | High | Single entity, direct answer, citable in isolation |

| Comparison pages (X vs Y) | High | Tabular structure, symmetric entity coverage |

| Documentation and how-to guides | High | Numbered steps, explicit outcomes |

| Source-backed explainers | High | Attributed statistics lift into answers |

| Data studies with original numbers | High | Primary-source statistics get cited disproportionately |

| Tightly scoped question-answer sections within pages | Medium | Useful when questions are real and answers are self-contained |

| Product pages | Medium | Useful for brand mention, weak for category queries |

| Thin programmatic SEO pages | Low | Duplicative content loses the long-context comparison |

| Generic thought-leadership posts | Low | No extractable entity or statistic |

Question-and-answer sections help when they answer real questions in full sentences and hurt when they are keyword-stuffed filler. Product pages help with branded queries but rarely win category-level citations. The asset mix that earns Claude citations looks more like a reference library than a content marketing calendar.

Programmatic SEO is not disqualified, but it needs editorial controls. A templated comparison page with unique data per entry can be high-extraction; a templated page with thin, interchangeable copy is exactly the kind of content Claude's long context will use against you by preferring a denser competitor page on the same topic.

How Does Claude Compare to ChatGPT for AI Visibility?

Optimize for both, test them separately, and expect different winners. Claude and ChatGPT share the structural fundamentals — direct answers, entity clarity, attributed statistics — but their retrieval layers, training corpora, and user behaviors diverge enough that a page dominant in one can be invisible in the other.

Claude's strengths, according to SurePrompts, are long-context work, nuanced instruction following, and structured prompting. Rephrase recommends Claude when work depends on deep writing, long context, and calmer reasoning over long sessions. That profile shapes what gets cited: Claude tends to reward pages that hold up under extended comparison and that answer with precision rather than breadth.

Here's how the optimization targets differ in practice:

| Factor | Claude.ai | ChatGPT | Gemini / Google AI Overviews | Perplexity |

|---|---|---|---|---|

| Primary retrieval | Claude web search | Bing-backed web search | Google index | Multi-source retrieval |

| Rewards | Entity precision, long-form depth | Bing indexation, schema | Google E-E-A-T signals | Fresh, citation-dense pages |

| Common failure | Thin pages lose to denser competitors in long context | Missing from Bing = missing from ChatGPT | Weak entity graph | Low citation density |

| Testing cadence | Per Anthropic major release | Per OpenAI model update | Per Google algorithm update | Per Perplexity index refresh |

SoftVerdict's recommendation applies to visibility testing too: run a real benchmark with your own prompts, documents, and category queries instead of relying on feature checklists. A category-level query in Claude.ai and the same query in ChatGPT will often return non-overlapping citation sets.

For the mirror-image playbook on the other major answer engine, see [How to Show Up in ChatGPT in 2026](/how-to-show-up-in-chatgpt-in-2026). Treat Claude and ChatGPT as two distinct distribution channels that happen to share a lot of on-page fundamentals — not as one "AI SEO" target.

How Do AEO, GEO, LLMO, and SEO Work Together for Claude Visibility?

They're four complementary workflows, not competing disciplines. Answer Engine Optimization (AEO) supplies the direct-answer blocks Claude extracts. Generative Engine Optimization (GEO) shapes passages so they read as citation-ready inside a generated response. Large Language Model Optimization (LLMO) builds entity consistency so the model recognizes your brand across contexts. SEO keeps your pages crawlable, indexed, and discoverable through the search layers Claude and third-party wrappers rely on.

Here's how each discipline contributes to a single page that earns a Claude citation:

| Layer | Contribution | On-page output |

|---|---|---|

| SEO | Crawlability, indexation, schema | Page is reachable by Claude web search |

| AEO | Direct answer in first 1-2 sentences of each section | Extractable answer block |

| GEO | Citation-ready summary sentence, attributed stats | Quotable lift into generated answers |

| LLMO | Consistent entity naming, topical depth across site | Brand recognized across Claude contexts |

Skip any one and the page underperforms. SEO alone gets you indexed but not cited. AEO without SEO produces well-structured pages Claude's web search can't reach. LLMO without AEO builds recognition but no extractable passages. GEO without LLMO produces one-off citations but no compounding authority.

For a full breakdown of when to lead with which discipline, see [AEO vs GEO vs LLMO: Which Workflow Fits Your Team?](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team). The teams getting cited in Claude in 2026 are running AEO, GEO, LLMO, and SEO as one pipeline, not four separate content initiatives.

How to Use Claude AI Step by Step (2026): Test Whether You Appear

Testing is the only way to know if you're showing up in Claude — Anthropic publishes no analytics for brand citation. Build a repeatable measurement loop and run it on a cadence tied to Anthropic's release schedule.

The procedural workflow:

- Build a prompt set. For each priority page, write 10-20 category queries, comparison queries ("X vs Y"), and problem-framed queries a buyer would type. Include branded and unbranded variants.

- Fan out queries. For each base query, generate 3-5 paraphrases. Claude's answers vary with phrasing, and so does citation behavior.

- Test in Claude.ai with web search enabled. Run every query in a fresh session. Log the exact answer text, any URLs cited, and any brands mentioned.

- Repeat without web search. This isolates base model knowledge from live retrieval. A brand mentioned here is in Claude's training corpus; a brand only mentioned with search is retrieval-dependent.

- Repeat across plans. Run the same set on Claude Free, Claude Pro, and Claude Team. According to StartupHub.ai, the free tier uses Claude Sonnet with a rolling 5-hour window; paid tiers unlock different models. Behavior can differ.

- Test the Claude API. Use the system parameter to set a neutral system prompt, then run the same queries. SurePrompts notes that system prompts can be set through Claude Projects in Claude.ai or the API's system parameter.

- Test third-party wrappers. Run priority queries in Poe, Brave Leo, and DuckDuckGo AI Chat. Each has its own retrieval layer and will produce different citations.

- Log everything. Record query, surface, date, exact answer, cited URLs, and brand mentions in a single sheet. Track movement over time, not single runs.

- Rerun after Anthropic updates. The AI Corner reports Anthropic shipped major releases roughly every two weeks since January 2026. Rerun your prompt set after each major model or product release.

When writing the prompts you use to test — and the prompts you use inside Claude for content operations — follow Anthropic's own guidance. Per Anthropic documentation, be clear and direct, provide examples, specify the return format, and use role-context-goal framing. Raviteja recommends structuring technical prompts around role, context, and goal, then specifying the exact return format.

Claude visibility is measured, not assumed: a prompt set, a fan-out, a citation log, and a release-tied rerun cadence is the minimum viable measurement loop.

Which Claude Capability Claims Should You Trust or Exclude?

Treat Anthropic documentation as primary, dated reports from established AI publications as secondary, and creator YouTube transcripts as tertiary — and reconcile numbers explicitly when they conflict.

The clearest example is context window size. The AI Corner reports Claude Opus 4.6 launched with a 1 million token context window (attributed to Anthropic). SurePrompts states Claude supports a 200,000-token context window, roughly 150,000 words or 500 pages. Both can be correct in context: context limits vary by model (Claude Opus 4.7, Claude Opus 4.6, Claude Sonnet 4.6, Claude Haiku 4.5, Claude Sonnet), product surface (Claude.ai versus API versus Projects), date, and plan. Any universal "Claude has X tokens of context" claim is wrong unless scoped to a specific model and surface on a specific date.

How to rank evidence from the research corpus:

| Evidence tier | Source type | How to use it |

|---|---|---|

| Strong | Anthropic documentation (docs.claude.com) | Cite directly; safe for capability claims |

| Strong | Dated reports from established publications (The AI Corner, Product Compass Newsletter) | Cite with date and attribution |

| Medium | Tutorials from known practitioners (SurePrompts, Rephrase, SoftVerdict, Raviteja, Claude Lab, How Do I Use AI) | Cite for technique, not contested capability claims |

| Medium | Creator deep-dives with named authorship (Ruben Dominguez, Boris Cherny via X) | Cite for workflow observations |

| Weak | YouTube transcripts without primary-source backing | Use only for feature availability at a point in time, not capability claims |

Claims to exclude without primary evidence: any benchmark numbers that don't trace to a named test and date, any generic "Claude is better than ChatGPT at X" claim without a benchmark, and any dramatic capability story (Mars rovers, unrelated PySpark examples) that appears only in a single creator transcript. If your content cites unsupported claims, Claude's long-context comparison will favor the competitor page that sourced its numbers properly.

How Can Teams Refresh and Scale Claude-Ready Publishing?

Refresh on a cadence tied to Anthropic's release schedule, not your editorial calendar. According to The AI Corner, Anthropic shipped major Claude releases roughly every two weeks since January 2026, with the 52-day window from February 1 to March 24, 2026 covering the release calendar that post documents (Source: Product Compass Newsletter). A page optimized for Claude Sonnet before Claude Opus 4.6 launched may no longer be the strongest extractable source in Claude's long-context comparison.

The operational refresh workflow:

- Maintain a site profile. Document your entities, canonical definitions, brand voice, and citation targets in one place. Every new page inherits from it.

- Track Anthropic releases. Subscribe to Anthropic's release notes and a secondary tracker like The AI Corner or Product Compass Newsletter. Flag releases that change model capabilities, context windows, or web search behavior.

- Rerun citation tests after major releases. Use the prompt set from the testing section above. Flag pages that lost citations.

- Refresh archives, not just recent posts. A glossary page from 2024 can outperform a 2026 blog post if it's structurally cleaner. Archive refreshes often beat new publishing for citation lift.

- Update citation-shaped templates, not individual pages. When a template improvement works, propagate it across every page built from that template.

- Enforce programmatic SEO guardrails. Every templated page needs unique, source-backed data. Templates without editorial controls produce the exact thin content Claude's long context will downrank.

- Run the loop per site, not per article. For agencies and multi-site operators, the unit of work is the site profile — not the post.

This is where Mentionwell fits. Mentionwell is a blog engine that operationalizes AEO, GEO, LLMO, and SEO through a structured onboarding, a persistent site profile, a citation-shaped pipeline, and CMS or headless publishing. It handles programmatic SEO with editorial controls, runs archive refreshes on a defined cadence, and keeps brand-consistent output across one site or hundreds — which is what matters when Anthropic ships major Claude releases on a two-week cadence and your archive needs to hold up under long-context comparison.

If you're running content across multiple domains and need a repeatable pipeline that produces Claude-ready, ChatGPT-ready, Gemini-ready pages without rebuilding your stack, Get My Site GEO Optimized with Mentionwell.

Sources

- How to Use Claude Code for FREE (2026)www.youtube.com

- FULL Claude Tutorial for Beginners in 2026! (Become a ...www.youtube.com

- Everything Claude Shipped in 2026: The Complete Guidewww.the-ai-corner.com

- How to Use Claude AI Step by Step (2026)www.youtube.com

- How to Use Claude AI Free in 2026: 12 Methods That ...www.startuphub.ai

- Claude best practices 2026: the complete power user guidewww.the-ai-corner.com