What Does "Show Up in Google Gemini" Mean in 2026?

Showing up in Google Gemini in 2026 means your pages, brand, and entities are retrieved, cited, and reused inside Gemini-grounded answers across the Gemini App, AI Mode, Google AI Overviews, and adjacent Google surfaces. It does not mean your team knows how to prompt the Gemini App. This is a source-visibility problem, not a product-access problem.

That distinction matters because most of what is currently published about "Google Gemini" is beginner tutorial content covering Gemini Advanced, Google AI Plus, Google AI Pro, and Google AI Ultra plan access. Those are user-side concerns. Citation visibility is a separate discipline tied to Google Search grounding, the Bard-to-Gemini transition under Google DeepMind, and the expanded retrieval behavior introduced with Gemini 3.

To show up in Google Gemini, you must appear as a retrieved, cited source inside Gemini-generated answers — not simply hold a Google ranking and not simply use the Gemini App.

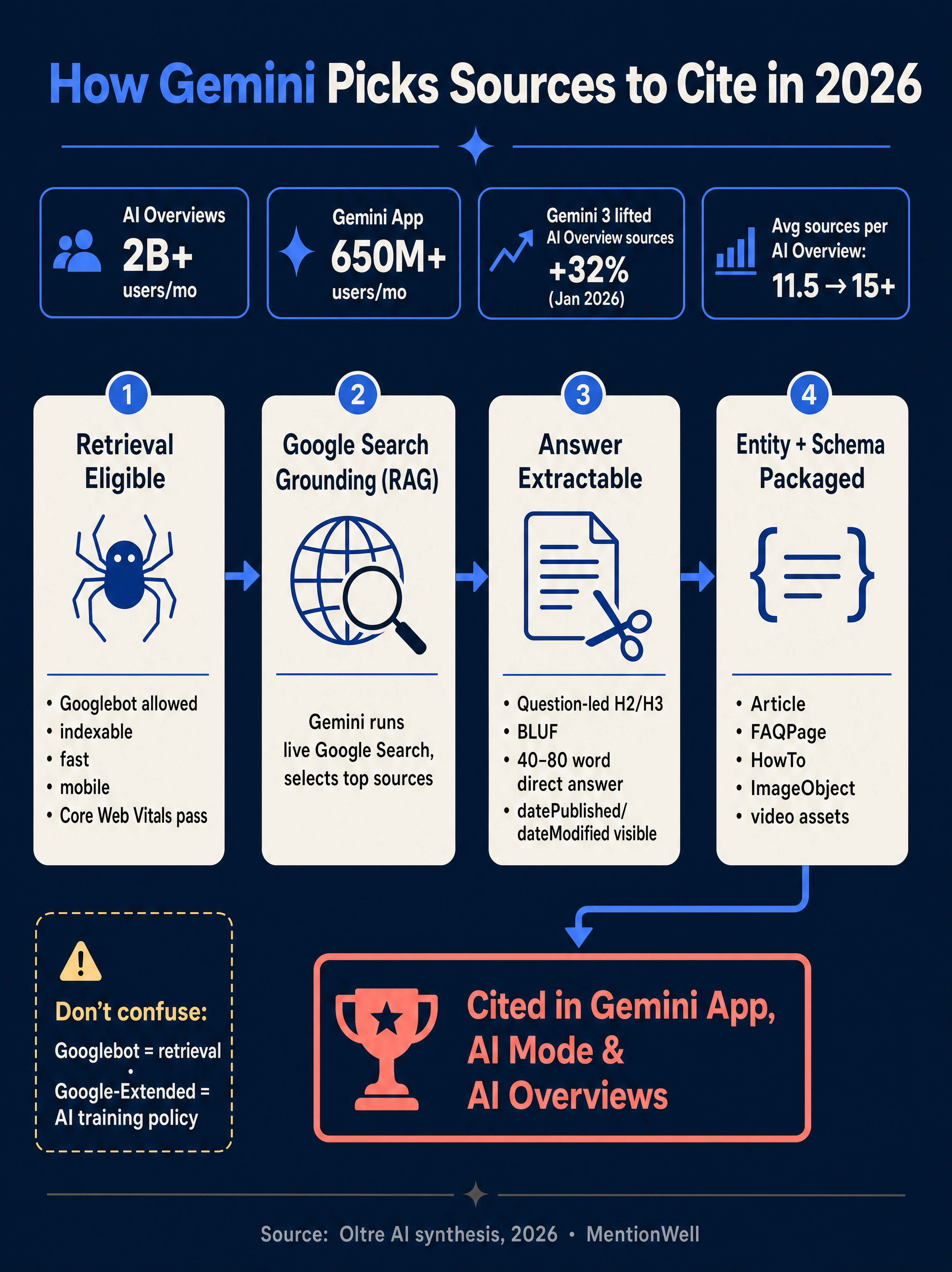

The surface matters too. According to Oltre AI, Gemini citations are influenced by Google Search, AI Overviews, Google Workspace, Android, and YouTube — not only the standalone Gemini App. Oltre AI's ecosystem table lists AI Overviews at 2B+ monthly users and the Gemini App at 650M+ monthly users, which means the Overviews surface is the larger citation opportunity for most B2B SaaS teams.

What Is Google Gemini AI Mode, and Which Google Surfaces Matter?

Foglift defines Google Gemini AI Mode as Google's AI search experience that uses the Gemini model to generate detailed, cited answers by synthesizing information from multiple sources. For a B2B SaaS team, the visibility surfaces worth prioritizing are Google Search, Google AI Overviews, AI Mode, the Gemini App, Google Workspace (Gmail, Google Drive, Google Docs, Google Sheets, Google Slides, Google Meet), NotebookLM, Chrome, Android, YouTube, Google News, and Google Discover. Google Maps and Google Business Profile matter primarily for local businesses and can be deprioritized by most software companies. Google Cloud is a distribution channel for Gemini via API, not a user-facing citation surface.

How Does Google Gemini Decide Which Websites to Cite?

Gemini decides what to cite by running a real-time Google Search, selecting retrieved pages, and composing an answer from them. According to Oltre AI, Gemini grounding is a retrieval-augmented generation (RAG) pattern: Gemini issues queries against Google's index, selects sources, and synthesizes the response from retrieved content.

The practical decision rule follows directly: Gemini can only cite content that Google can retrieve, parse, and trust. Oltre AI states explicitly that Gemini can only cite pages that are eligible for Google retrieval — crawlable, indexable, fast, and machine-readable. Search Atlas reinforces this by framing Gemini optimization as retrieval, citation, and reuse inside AI-generated answers rather than blue-link ranking alone.
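The retrieve, select, and synthesize pattern can be illustrated with a toy sketch. This is not Gemini's implementation: the `Page` fields, the keyword-overlap `score`, and the `ground_answer` function are hypothetical stand-ins, chosen only to show why a page that fails retrieval eligibility never reaches the citation step.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    text: str
    crawlable: bool = True   # hypothetical flag: Googlebot is allowed to fetch it
    indexable: bool = True   # hypothetical flag: no noindex directive


def score(query: str, page: Page) -> int:
    """Toy relevance: number of query words that appear in the page text."""
    words = set(query.lower().split())
    return len(words & set(page.text.lower().split()))


def ground_answer(query: str, pages: list[Page], top_k: int = 2) -> dict:
    # Step 1 (retrieve): only crawlable, indexable pages are candidates.
    eligible = [p for p in pages if p.crawlable and p.indexable]
    # Step 2 (select): keep up to top_k pages that match the query at all.
    ranked = sorted(eligible, key=lambda p: score(query, p), reverse=True)
    selected = [p for p in ranked[:top_k] if score(query, p) > 0]
    # Step 3 (synthesize): a real system generates text; here we only join.
    return {
        "answer": " ".join(p.text for p in selected),
        "citations": [p.url for p in selected],
    }


pages = [
    Page("https://example.com/a", "gemini cites retrievable pages"),
    Page("https://example.com/b", "gemini cites pages with clear answers", indexable=False),
    Page("https://example.com/c", "unrelated cooking recipe"),
]
result = ground_answer("which pages gemini cites", pages)
print(result["citations"])
```

In this sketch the second page is relevant but carries `indexable=False`, so it is filtered out before scoring and cannot appear in `citations`, which mirrors the decision rule in the paragraph above.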

The citation pool is also volatile. According to Oltre AI, citing a Female Entrepreneurs analysis, Gemini 3 increased the number of sources cited inside AI Overviews by 32% in January 2026, raised average sources per AI Overview from 11.5 to over 15, and replaced 42% of prior cited domains. More source slots and higher domain churn mean citation readiness is something to maintain, not something to earn once.

Googlebot vs Google-Extended: What Crawler Controls Actually Affect Gemini Citations?

Googlebot access governs whether a page is eligible for Google Search retrieval, and therefore for Gemini grounding. Google-Extended is a separate robots.txt control tied to AI training and generative product use policies, not to Search inclusion. They are not interchangeable, and confusing them is a common reason teams accidentally remove themselves from AI Overviews citation pools, for example by disallowing Googlebot when the goal was only to opt out of AI training.

| Control | What it governs | Impact on Gemini citations |
|---|---|---|
| `robots.txt` Googlebot rules | Whether Google can crawl the page at all | Blocks retrieval eligibility entirely |
| `noindex` meta tag | Whether the page enters Google's index | Removes the page from Gemini's source pool |
| Google-Extended | AI training / generative product policies | Separate from Search inclusion; check current Google docs before changing |
| Client-side rendering | Whether Googlebot can see HTML content | Invisible content cannot be cited |
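A quick way to see that the two user agents are evaluated separately is to parse a robots.txt file and ask about each one. The sketch below uses Python's standard `urllib.robotparser`; the `ROBOTS_TXT` contents, the example URLs, and the `crawler_access` helper are illustrative assumptions. Python's parser applies the first matching rule in file order, which can differ from Google's own matching on complex files, so treat the result as a sanity check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: Googlebot may crawl everything except /private/,
# while Google-Extended is disallowed site-wide.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def crawler_access(robots_txt, url, agents=("Googlebot", "Google-Extended")):
    """Return {agent: allowed} for each user agent against one URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


print(crawler_access(ROBOTS_TXT, "https://example.com/blog/post"))
print(crawler_access(ROBOTS_TXT, "https://example.com/private/roadmap"))
```

Here the blog URL stays crawlable by Googlebot even though Google-Extended is blocked, while the `/private/` URL is blocked for both.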

What Technical Requirements Must a Page Meet Before Gemini Can Cite It?

A page must be crawlable by Googlebot, indexable, fast, mobile-friendly, and rendered so Google can see the content in HTML. NeuralAdX lists the full prerequisite set: robots.txt access, no accidental noindex tags, Googlebot-visible HTML, fast loading, mobile friendliness, and healthy Core Web Vitals. Oltre AI repeats the same baseline — crawlable, indexable, fast, machine-readable — and frames anything less as ineligible for retrieval.

Use this as an operator checklist before you worry about answer blocks or schema:

- Confirm the URL is indexed in Google Search Console.

- Verify Googlebot renders the primary content in HTML, not only after JavaScript hydration.

- Check Core Web Vitals (LCP, INP, CLS) in Search Console.

- Validate schema with Google Rich Results Test.

- Confirm a single canonical URL and no conflicting canonical tags.

- Ensure the mobile version contains the same content as desktop.

- Audit robots.txt for unintended Googlebot disallows.

- Audit headers and meta tags for stray `noindex` or `nofollow` directives.

If Google cannot retrieve, render, and index the page, Gemini cannot cite it — schema and answer blocks are wasted until retrieval eligibility is fixed.
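The `noindex` and `nofollow` audit from the checklist can be partly automated. The following is a minimal sketch using only the standard library; `RobotsMetaParser` and `blocking_directives` are hypothetical names, the HTML and headers are made-up inputs, and the header handling assumes simple comma-separated values without a user-agent prefix.

```python
from html.parser import HTMLParser

BLOCKING = {"noindex", "nofollow", "none"}


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for part in attr.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def blocking_directives(html: str, headers: dict) -> set:
    """Return the blocking directives found in the HTML or response headers."""
    parser = RobotsMetaParser()
    parser.feed(html)
    found = set(parser.directives)
    # The same directives can also arrive via the X-Robots-Tag response header.
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            found.update(part.strip().lower() for part in value.split(","))
    return found & BLOCKING


html = '<html><head><meta name="robots" content="noindex, follow"></head><body>Hi</body></html>'
print(blocking_directives(html, {"X-Robots-Tag": "nofollow"}))
```

An empty set means no blocking directive was found in the inputs provided; it does not confirm that the page is indexed, which still needs to be checked in Google Search Console.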

For teams managing multiple domains or archives, this checklist is the gate every page must clear before editorial work begins. For a deeper comparison of how this prerequisite layer differs across answer engines, see [AEO vs GEO vs LLMO: Which Workflow Fits Your Team?](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team).

Google Search vs Gemini AI — Can AI Replace Search in 2026?

Gemini does not replace Google Search for visibility purposes; it sits on top of it. According to AtomicAGI, 76.1% of AI Overview citations rank in Google's top 10, while 80% of the pages ChatGPT cites do not rank in Google's top 100 for the original query. That asymmetry is the core reason Gemini optimization and classic SEO are complementary, not competing.

The implication for B2B SaaS teams: ranking in Google's top 10 is a strong prior for Gemini citation, but ranking alone is not enough. Gemini still needs extractable answers, defined entities, supporting evidence, and structured data to reuse a passage in a generated response. The SEO Guy notes that Gemini is embedded in Google's infrastructure and sensitive to how content is organized — clear headings, direct answers, and schema markup shape what gets extracted.

| Discipline | Primary goal | Success signal |
|---|---|---|
| Classic SEO | Rank in Google's top 10 | Organic position, clicks |
| AEO (Answer Engine Optimization) | Get the direct-answer passage extracted | Featured in AI Overviews, Gemini answers |
| GEO (Generative Engine Optimization) | Be cited across Gemini, ChatGPT, Perplexity, Claude, Copilot | Citation logs per engine |
| LLMO (LLM Optimization) | Be reused inside generative responses, with or without a link | Mention and attribution patterns |


How Should a Page Be Structured So Gemini Can Extract a Direct Answer?

Structure every major section so the first 1–3 sentences directly answer the implicit question in the heading. Search Atlas defines this as BLUF — Bottom Line Up Front — meaning the direct answer or definition appears at the very beginning of a section so Gemini can extract and reuse it. AI Marketing Picks makes the same point from the retrieval side: Gemini pulls from sources that answer clearly and concisely, with definitions in the first paragraph and direct answers at the start of sections.

The extraction pattern that consistently works:

- Question-led H2 (e.g., "How does Google Gemini decide which websites to cite?").

- BLUF answer in the first one or two sentences.

- Short elaboration paragraph with the mechanism.

- Evidence block with attributed sources or data.

- Optional list, table, or numbered steps that stand alone if extracted.

- A sharp, bolded citable sentence that summarizes the section.

Each section should be readable in isolation. If a Gemini answer lifted only that section, it should still make sense without the surrounding article.

Where Do 40–80 Word Answers, Evidence Blocks, Dates, and Source Lists Fit?

Toolkit by AI recommends a 40–80 word top answer at the page level, followed by an evidence block with numbered sources, visible `datePublished` and `dateModified` fields, Article and FAQ schema, and machine-readable data such as CSV or JSON where relevant. Treat that module as your page-level BLUF: a compressed answer that can be extracted verbatim, with the longer evidence underneath for validation. Be honest about the limits — Toolkit by AI does not publish methodology for every performance claim, so use the module because it matches how RAG systems retrieve, not because of a specific reported lift.
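A word-count check is one simple way to keep the top answer inside the 40–80 word window during editing. This is a minimal sketch: `top_answer_report` is a hypothetical helper, and it assumes the text passed in starts with the answer paragraph (not the heading) and separates paragraphs with blank lines.

```python
def top_answer_report(page_text: str, low: int = 40, high: int = 80) -> dict:
    """Measure the first non-empty paragraph against a word-count window."""
    paragraphs = [p.strip() for p in page_text.split("\n\n") if p.strip()]
    first = paragraphs[0] if paragraphs else ""
    count = len(first.split())
    return {"words": count, "in_range": low <= count <= high}


too_short = "Gemini cites retrievable pages.\n\nLonger evidence follows here."
print(top_answer_report(too_short))
```

The check only measures length. Whether the paragraph actually answers the page's question directly is still an editorial judgment.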

Which Schema Types Matter Most for Gemini Visibility?

The schema types that matter most depend on the page type, not on a generic "add schema" recommendation. Oltre AI recommends Article, FAQPage, HowTo, and ImageObject schema plus supporting video assets. RankPrompt expands the stack to FAQPage, Article, WebPage, and Organization, with entity-connecting properties such as `sameAs`, `about`, and `mentions`. FogTrail claims schema-marked pages are cited at a 73% higher rate in AI Overviews than unstructured pages, though the available excerpt does not show the underlying study or sample. Treat the directional advice as sound and the specific figure as unverified.

Map schema to page type rather than stacking everything everywhere:

| Page type | Primary schema | Supporting schema | Purpose |
|---|---|---|---|
| Explainer / glossary | Article, WebPage | Organization, Person, mentions | Definition extraction, entity disambiguation |
| How-to / tutorial | HowTo | Article, ImageObject | Step extraction for AI Mode |
| Comparison / buyer guide | Article | FAQPage, Product, sameAs | Side-by-side reuse in answers |
| Product page | Product, Organization | ImageObject, sameAs | Entity binding, rich result eligibility |
| Help / FAQ | FAQPage | Article, Organization | Q&A parsing by Gemini and other LLMs |
| News / update | Article (NewsArticle where valid) | Person, Organization, datePublished | Freshness and author signals |

Track My Visibility notes that FAQ schema still helps AI models parse Q&A pairs even when Google displays FAQ rich results less prominently than before — which means the schema retains value for extraction even if its classic SERP treatment has faded.
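For the Help / FAQ row, the Q&A pairs can be generated as JSON-LD from the same content that appears on the page. The sketch below builds a schema.org `FAQPage` object; the `faq_jsonld` helper and the sample question are illustrative, and the output should still be validated with Google Rich Results Test before shipping.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) tuples."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld([
    (
        "How does Google Gemini decide which websites to cite?",
        "It runs a Google Search, selects retrieved pages, and composes an answer from them.",
    ),
])
# Embed the printed JSON inside a <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```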

How Should Entities, Author Credentials, and Citations Be Presented?

Name every meaningful entity in plain language, bind it with schema, and connect it to external references with `sameAs`. RankPrompt's operator checklist covers this well: full Google indexing, FAQPage, Article, WebPage, and Organization schema, entity-connecting properties (`sameAs`, `about`, `mentions`), author bios, cited sources, credentials, and original data. FogTrail independently lists verifiable author credentials as one of the five highest-impact Gemini tactics.

The minimum entity layer for a B2B SaaS explainer:

- Organization schema for the publishing brand, with `sameAs` pointing to Crunchbase, LinkedIn, and Wikipedia where applicable.

- Person schema for authors, with `sameAs` pointing to professional profiles and a visible bio including credentials.

- Clear definitions for the product, the category, and adjacent entities on first mention — never "the platform" or "the tool" before the entity is named.

- Citations to primary sources with compact inline attribution, plus a numbered source list if the page makes quantitative claims.

This layer also supports Google's E-E-A-T guidance and gives Gemini verifiable context for trust decisions during retrieval.
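The entity layer above maps onto a small JSON-LD object. The following sketch assembles an `Article` with a `Person` author, an `Organization` publisher, and `sameAs` links; every name, date, and URL in it is a placeholder, and `article_jsonld` is a hypothetical helper rather than a required structure.

```python
import json


def article_jsonld(headline, published, modified, author, publisher, about):
    """Build a schema.org Article that binds author and publisher entities."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published,
        "dateModified": modified,
        "author": {
            "@type": "Person",
            "name": author["name"],
            "sameAs": author["same_as"],
        },
        "publisher": {
            "@type": "Organization",
            "name": publisher["name"],
            "sameAs": publisher["same_as"],
        },
        "about": [{"@type": "Thing", "name": name} for name in about],
    }


article = article_jsonld(
    headline="Example explainer headline",
    published="2026-01-10",
    modified="2026-02-10",
    author={"name": "Jane Example", "same_as": ["https://www.linkedin.com/in/jane-example"]},
    publisher={"name": "Example Co", "same_as": ["https://www.linkedin.com/company/example-co"]},
    about=["Generative Engine Optimization"],
)
print(json.dumps(article, indent=2))
```

Keeping the `sameAs` values in one place per author and per brand makes it easier to keep them consistent across pages, which is the point of the entity layer.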

Should Gemini Optimization Include YouTube, Images, and Other Multimodal Assets?

Yes — with a caveat. Gemini is multimodal, so useful YouTube demos, diagrams, screenshots, and product images with ImageObject markup can support both extraction and trust across Google's ecosystem. Think Tutorial describes Gemini as a multimodal system that understands text, images, video, audio, and code. Oltre AI argues that brand-owned explainers, official docs, and YouTube demos tend to travel farther in Google's ecosystem than generic blog commentary, and FogTrail lists YouTube video content among the five highest-impact Gemini tactics.

The caveat: features like Nano Banana image generation or image editing inside the Gemini App are consumer use cases, not evidence that an asset will be cited. What actually helps:

- Original diagrams or screenshots that make a concept extractable as an image.

- Embedded YouTube demos from the brand's own channel, with descriptive titles and chapters.

- ImageObject markup with meaningful alt text and captions.

- Transcripts for video content so Google can index the spoken content.

Multimodal assets should support the text, not replace it. Gemini still needs a clear written answer to cite; images and video raise trust and engagement around that answer.

How Is Optimizing for Gemini Different from ChatGPT, Perplexity, Claude, and Copilot?

Gemini is the most Google-ranking-dependent of the major answer engines. AtomicAGI's data point — 76.1% of AI Overview citations in Google's top 10 versus 80% of ChatGPT citations not ranking in Google's top 100 — means Gemini rewards classic SEO fundamentals more directly than ChatGPT does. The other engines sit between those poles.

| Engine | Primary retrieval system | What to optimize first |
|---|---|---|
| Google Gemini / AI Mode / AI Overviews | Google Search grounding | Top-10 Google rankings, extractable answers, schema |
| ChatGPT | Bing + web browsing + training data | Bing indexation, entity clarity, citable passages |
| Perplexity | Live web retrieval, its own index | Clear answers, source trust, recency |
| Claude | Web search + Projects + Connectors | Structured pages, entity consistency, doc clarity |
| Copilot | Bing grounding inside Microsoft surfaces | Bing indexation, Microsoft ecosystem signals |

For the engine-specific playbooks, see [How to Show Up in ChatGPT in 2026](/how-to-show-up-in-chatgpt-in-2026) and [How to Show Up in Claude in 2026](/how-to-show-up-in-claude-in-2026). The operating model across all four disciplines is complementary: AEO, GEO, LLMO, and SEO are different layers of the same content engine, not competing strategies.

How Often Should Teams Refresh Pages for Gemini Visibility?

Refresh high-value explainers, comparisons, product docs, and programmatic glossary pages first, on a cadence tied to topic volatility rather than a uniform schedule. According to Oltre AI, citing Ahrefs 2025 via Am I Visible On AI, AI platforms cite content 25.7% fresher than traditional organic results. FogTrail recommends updating content at least every 30 days, though the available excerpt does not show its methodology. Foglift claims a 3.2x citation boost from monthly updates. Treat that specific multiplier as unverified and focus on the directional signal: fresher content wins more AI citations.

A practical refresh priority for a B2B SaaS content archive:

- Category-defining explainers and glossary pages (monthly).

- Buyer-guide comparisons and alternatives pages (monthly).

- Product documentation and how-to pages (as product changes).

- High-traffic organic pages approaching decay (quarterly review).

- News-style posts and announcements (date-locked, refresh only if facts change).

- Low-value archive pages (consolidate, redirect, or retire).

Update `dateModified` and make the change visible on the page. A stale visible date on a freshly edited page undermines the signal.
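The refresh priorities can be turned into a simple due-date report. This sketch assumes a page inventory with a `type` and an ISO `date_modified` per URL; the `CADENCE_DAYS` mapping (30 days for "monthly", 90 for "quarterly") and the `due_for_refresh` helper are assumptions for illustration, and page types without a fixed cadence are skipped.

```python
from datetime import date

# Assumed mapping from the priority list: monthly ~ 30 days, quarterly ~ 90 days.
CADENCE_DAYS = {"explainer": 30, "comparison": 30, "high_traffic": 90}


def due_for_refresh(pages, today):
    """Return (url, age_in_days) for pages past their cadence, oldest first."""
    due = []
    for page in pages:
        limit = CADENCE_DAYS.get(page["type"])
        if limit is None:
            continue  # docs, news, and archive pages follow event-driven rules
        age = (today - date.fromisoformat(page["date_modified"])).days
        if age >= limit:
            due.append((page["url"], age))
    return sorted(due, key=lambda item: item[1], reverse=True)


inventory = [
    {"url": "/glossary/geo", "type": "explainer", "date_modified": "2026-01-15"},
    {"url": "/compare/x-vs-y", "type": "comparison", "date_modified": "2026-02-20"},
    {"url": "/blog/top-post", "type": "high_traffic", "date_modified": "2025-11-01"},
    {"url": "/docs/api", "type": "docs", "date_modified": "2025-06-01"},
]
print(due_for_refresh(inventory, today=date(2026, 3, 1)))
```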

How Can Teams Measure Gemini, AI Mode, and AI Overview Visibility?

There is no single reliable Gemini dashboard in 2026, so measurement should combine Google Search Console signals, prompt testing, citation logs, and third-party tools. Track My Visibility recommends filtering Search Console by an "AI Overview" search appearance where available, while AI Marketing Picks notes that Google has not released comprehensive Gemini-specific metrics. The published guidance is contradictory, so teams should not treat any single method as definitive.

A layered measurement stack that is honest about its limits:

- Google Search Console — monitor impressions, clicks, and any AI Overview search appearance filter that surfaces for your property.

- Prompt testing — maintain a fixed set of brand, category, and buyer-intent prompts and run them weekly in the Gemini App and AI Mode, logging whether your domain is cited.

- Citation logs — track which URLs are cited, for which prompts, with what anchor text, and what the surrounding answer says.

- Landing-page traffic patterns — watch for referral spikes from `gemini.google.com`, AI Mode, or Google AI Overviews where attribution is visible.

- Third-party tools — consider the SE Ranking Gemini Tracker or Track My Visibility for automated prompt sampling.

- Competitive gap tracking — log which competitor domains appear for prompts where you do not, to target future content.

Reliable Gemini measurement in 2026 is a triangulation exercise, not a dashboard read — combine Search Console, repeatable prompt tests, and citation logs before trusting any single number.
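A citation log only becomes useful once it is summarized the same way every week. The sketch below assumes each manual prompt run is stored as a record with `prompt`, `surface`, and `cited_domains`; that record shape, the sample data, and both helper functions are hypothetical. It computes a citation rate for one domain and a competitor gap list, without calling any Gemini or Search Console API.

```python
from collections import Counter


def citation_rate(log, domain):
    """Share of logged prompt runs in which `domain` was cited."""
    if not log:
        return 0.0
    hits = sum(1 for run in log if domain in run["cited_domains"])
    return hits / len(log)


def competitor_gaps(log, domain):
    """Count other domains cited on runs where `domain` was absent."""
    gaps = Counter()
    for run in log:
        if domain not in run["cited_domains"]:
            gaps.update(run["cited_domains"])
    return gaps.most_common()


log = [
    {"prompt": "what is geo", "surface": "AI Mode", "cited_domains": ["example.com", "rival-a.com"]},
    {"prompt": "best geo tools", "surface": "Gemini App", "cited_domains": ["rival-a.com", "rival-b.com"]},
    {"prompt": "geo vs seo", "surface": "AI Mode", "cited_domains": ["rival-a.com"]},
    {"prompt": "geo pricing", "surface": "Gemini App", "cited_domains": ["example.com"]},
]
print(citation_rate(log, "example.com"))
print(competitor_gaps(log, "example.com"))
```

Because generated answers vary between runs, a rate from a small prompt set is a trend indicator to compare week over week, not a precise share of voice.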

How to Get Cited by Gemini in 2026: A Step-by-Step Workflow

Run an eight-step pipeline every publishing cycle: map query fan-outs, pick pages, verify retrieval eligibility, write BLUF answer blocks, package entities and schema, add citations and multimodal support, publish, and test. This is the minimum operating sequence that turns Gemini citation from a lucky outcome into a repeatable one.

- Map Gemini and AI Mode query fan-outs. For each target topic, log the primary prompt plus 5–10 fan-out variants Gemini actually expands into (compare, define, alternatives, how-to, pricing). This becomes your section-level outline.

- Choose pages to create or refresh. Prioritize category explainers, comparisons, buyer guides, product docs, and high-value glossary pages. Retire or consolidate thin archive pages.

- Verify Google retrieval eligibility. Confirm indexation, Googlebot-visible HTML, Core Web Vitals, canonical clarity, and clean robots.txt and noindex audits before editorial work starts.

- Write BLUF answer blocks. Open every H2 with a direct 1–2 sentence answer. Include a 40–80 word page-level top answer per Toolkit by AI's module.

- Package entities and schema. Add Article, FAQPage, HowTo, WebPage, Organization, Person, and ImageObject schema matched to page type, with `sameAs`, `about`, and `mentions` properties binding entities to external references.

- Add citations and multimodal support. Attribute every number, link primary sources, embed brand-owned YouTube demos where useful, and add ImageObject markup to diagrams and screenshots.

- Publish through the CMS or headless stack. Ship with correct `datePublished` and `dateModified`, internal links to related pages, and a clean URL structure.

- Test citations and refresh. Run the prompt set, log citations, and schedule the next refresh cycle based on topic volatility — monthly for category-defining pages, quarterly for stable explainers.
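The first step, mapping query fan-outs, can start from a small template table before real prompt data exists. The templates below are hypothetical wording built from the five intents named in that step; they are not the variants Gemini actually generates, and should be replaced with observed fan-outs as prompt testing accumulates.

```python
# Hypothetical templates for the five intents named in the fan-out step.
FAN_OUT_TEMPLATES = {
    "define": "what is {topic}",
    "compare": "{topic} vs alternatives",
    "alternatives": "best alternatives to {topic}",
    "how-to": "how to use {topic}",
    "pricing": "{topic} pricing",
}


def fan_out(topic):
    """Return {intent: prompt} for one target topic."""
    return {
        intent: template.format(topic=topic)
        for intent, template in FAN_OUT_TEMPLATES.items()
    }


for intent, prompt in fan_out("generative engine optimization").items():
    print(f"{intent}: {prompt}")
```

Each generated prompt can double as a candidate H2 and as an entry in the fixed prompt set used for weekly citation testing.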

The hard part is not any single step — it is running this pipeline consistently across dozens of pages and refresh cycles without producing low-value templated content. That is where an operations layer matters.

Mentionwell is a blog engine built for this workflow. Onboard a domain, define a site profile, and Mentionwell runs research-grounded articles through a citation-shaped pipeline — AEO, GEO, LLMO, and SEO built into every draft, programmatic SEO with editorial controls, archive refreshes on cadence, and delivery into your existing CMS or headless stack. If your team needs to ship Gemini-ready pages across one site or hundreds without rebuilding the stack, Get My Site GEO Optimized.

Sources

- Google Gemini Complete Beginners Guide 2026 (How To ..., www.youtube.com

- How To Master Google Gemini in 2026 (Free Course), www.youtube.com

- How To Use Google Gemini Application 2026, www.youtube.com

- How To Access Google Gemini [2026 Guide], www.boltic.io

- How to use Google Gemini in 2026 | Tried and tested, www.youtube.com

- GOOGLE GEMINI: 2026 tutorial for beginners, www.oltre.ai

- How to Get Cited by Gemini: Complete Guide 2026, www.visalytica.com