What are SEO, AEO, GEO, and LLMO?

SEO, AEO, GEO, and LLMO are four layers of the same citation-oriented publishing system, each targeting a different retrieval surface: SEO earns rankings and clicks, AEO wins extractable answer units, GEO earns citations inside generative answers, and LLMO shapes how models describe your brand when no source is shown.

Here is how ExecSearches defines each layer in its 2026 content checklist:

- SEO (Search Engine Optimization): helping traditional search engines crawl, understand, rank, and send traffic to pages.

- AEO (Answer Engine Optimization): structuring content so it can be pulled into direct answer boxes, rich results, voice responses, and AI-generated snippets.

- GEO (Generative Engine Optimization): earning visibility and citations inside AI-generated answers from tools like Google AI Overviews, Perplexity, and Bing Copilot.

- LLMO (Large Language Model Optimization): helping models like ChatGPT, Claude, and Gemini understand who you are, what you do, and when to recommend you in conversational responses.

ExecSearches is explicit that these are not separate strategies: strong SEO remains the foundation, and GEO, AEO, and LLMO build on top of it.

For operator-minded teams, the useful read is this: you are not picking one acronym. You are running one editorial pipeline that produces assets visible across Google Search, Google AI Overviews, ChatGPT, Perplexity, Gemini, Claude, Bing Copilot, and voice assistants — because the underlying signals (entity clarity, schema, evidence, internal links, brand authority) feed all of them.

GEO vs AIO vs LLMO vs AEO: What's the difference?

AEO, GEO, and LLMO differ by target surface and retrieval mechanic, not by whether they replace SEO. AEO targets extractive answer units pulled from a single page; GEO targets generative synthesis where multiple sources are merged and cited; LLMO targets the model's internal understanding of your brand before any retrieval happens. AIO, GAIO, AISO, and Semantic SEO 2.0 are largely synonyms that overlap with these three.

The cleanest operating distinction comes from Matthew Edwards: AEO is extractive — an engine lifts a chunk from one page — while GEO is generative — an engine synthesizes multiple sources and cites them. AEOfix pushes the distinction further by layer: AEO targets the retrieval layer where an AI engine searches the web in real time, while GEO targets the training layer that shapes what a model knows before it searches.

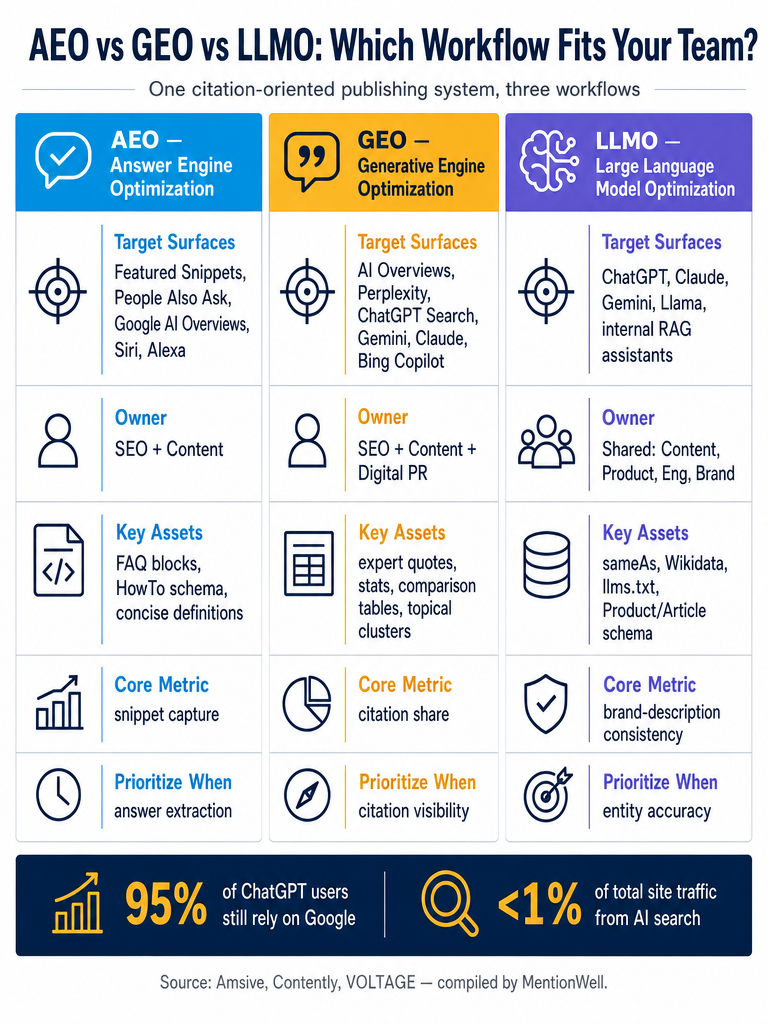

Here is how the acronyms map to target surface, retrieval mechanic, and typical ownership, synthesizing definitions from ExecSearches, SEOctopus, Brand Armor AI, AEOfix, and Matthew Edwards:

| Acronym | Primary surface | Retrieval mechanic | Typical owner |
|---|---|---|---|
| SEO | Google, Bing organic results | Crawl, index, rank | SEO team |
| AEO | Featured Snippets, People Also Ask, AI Overviews, voice | Extractive: pull from one page | SEO + content |
| GEO | ChatGPT Search, Perplexity, Gemini, Claude, AI Overviews | Generative: synthesize + cite multiple sources | SEO + content + digital PR |
| LLMO | ChatGPT, Claude, Gemini, Llama, internal assistants | Parametric knowledge + RAG | Shared: content, product, brand |
| AIO / GAIO / AISO | Generally synonymous with GEO/AEO | Varies by definition | Same as GEO/AEO |

Contently makes the pragmatic case that AEO, GEO, and LLMO describe the same underlying practice with different historical baggage and emphasis, and that their differences matter less than the consultant industry suggests. It traces each term to a different origin: AEO to Jason Barnard's 2017 Trustpilot white paper and his BrightonSEO 2018 keynote; GEO to a November 2023 paper by Pranjal Aggarwal and colleagues at Princeton, Georgia Tech, the Allen Institute for AI, and IIT Delhi, presented at KDD 2024; and LLMO to Search Engine Land guidance and vendor posts in 2024–2025.

Where do these strategies overlap?

The overlap is larger than the difference. AEO, GEO, and LLMO all depend on the same editorial substrate: accessible pages, E-E-A-T, structured high-quality content, clear headings, semantic HTML, evidence density, entity clarity, internal links, brand authority, and technical excellence.

According to Amsive, many of the "new" optimization tactics being marketed as GEO or LLMO are updated versions of long-standing SEO best practices. Lily Ray, VP of SEO Strategy and Research at Amsive, argues that large language models are built on the same foundation traditional SEO supports — accessible, trustworthy, well-structured information — and that AI search is expanding traditional search, not replacing it.

Two Amsive data points temper the hype: 95% of ChatGPT users still rely on Google (citing Digital Information World), and AI search currently drives less than 1% of total site traffic (citing Amsive's own data-backed study). Those numbers argue for treating AI visibility as an additive layer on top of SEO, not a replacement.

The same structural improvements that help Google understand your pages also help AI tools cite and recommend you. Contently reinforces this: all three disciplines converge operationally on authority signals, extractable content structure, verifiable substance such as statistics and expert quotes, and freshness.

The Reddit DigitalMarketing thread on the topic reaches the same conclusion in plainer language: the same things work for GEO, AEO, AIO, and even LLMs — helpful content, clear structure, and trust.

Which platforms does each workflow target?

Each workflow targets a different set of surfaces with different retrieval mechanics. Mapping them precisely is how teams avoid producing generic "AI content" that fits nowhere.

| Workflow | Primary target surfaces | Retrieval mechanic |
|---|---|---|
| AEO | Google Featured Snippets, People Also Ask, Google AI Overviews, Google Assistant, Siri, Alexa | Extractive snippet pull from a single ranked page |
| GEO | Google AI Overviews, SGE, Bing Copilot Search, Microsoft Copilot, Perplexity, ChatGPT Search, Gemini, Claude, Yahoo | Live retrieval + generative synthesis with citations |
| LLMO | ChatGPT, GPT, Claude, Gemini, Llama, internal AI assistants, RAG-enabled products | Parametric knowledge + retrieval-augmented generation |

SEOctopus defines GEO as ensuring a brand or content appears inside AI responses from ChatGPT, Gemini, Perplexity, and Claude, with the goal of being mentioned or cited rather than ranked in a list of links. SEOctopus defines AEO as appearing in Google's direct answer features: Featured Snippets, People Also Ask, and Google AI Overviews. SEOctopus defines LLMO as optimizing how a brand is represented in the training data and parametric knowledge of GPT, Claude, Gemini, and Llama.

The surface that matters most operationally is the one marketers most often miss: RAG-enabled internal assistants. Brand Armor AI describes LLMO as improving how custom or third-party models respond inside products — meaning an enterprise Slack assistant, a customer-facing support agent, or a sales research bot. That is a different artifact than a blog post, and it requires different distribution (MCP feeds, CMS APIs, partner data) rather than only web publishing.

Who should own each strategy?

Ownership should follow the retrieval mechanic, not the acronym. AEO belongs to SEO and content teams because the signals (schema, headings, on-page structure) are extensions of classic on-page SEO. GEO belongs to SEO plus content, communications, and digital PR because generative citations reward evidence, expert quotes, and third-party authority. LLMO requires shared governance across content, product, engineering, and brand because the model's understanding of your entity is shaped by the web, by structured data, and by the feeds that power in-product assistants.

Brand Armor AI proposes an operating model worth adopting literally: centralize facts; create modular assets; distribute updates through MCP, CMS, and partner feeds; measure citation share, AI Overview placements, and in-product assistant accuracy; and govern the workflow with owners, SLAs, and compliance reviews. Brand Armor AI also notes that in practice, GEO typically sits with marketing and communications, AEO with SEO specialists, and LLMO with product teams.

For agencies and multi-site operators, this ownership split is where brand consistency breaks. If entity facts live in three different docs across three different teams, the model will surface the version that's most frequently cited on the web — not the version leadership approved. A single source of truth for entity facts, distributed via CMS templates and structured data, is the governance fix.
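One way to picture a single source of truth is a shared fact sheet that every template renders from. The sketch below is a minimal illustration, not a prescribed implementation: the `ENTITY_FACTS` dictionary, its keys, the placeholder company, and the `organization_jsonld` helper are all hypothetical. It emits schema.org `Organization` markup with `sameAs` links.

```python
import json

# Hypothetical central fact sheet. Every value is a placeholder.
ENTITY_FACTS = {
    "name": "Example Co",
    "url": "https://www.example.com",
    "description": "Example Co makes scheduling software for clinics.",
    "same_as": [
        "https://www.wikidata.org/wiki/Q0000000",
        "https://www.linkedin.com/company/example-co",
    ],
}


def organization_jsonld(facts: dict) -> str:
    """Render schema.org Organization markup from the shared fact sheet."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": facts["name"],
        "url": facts["url"],
        "description": facts["description"],
        "sameAs": list(facts["same_as"]),
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # A CMS template would embed this string in a
    # <script type="application/ld+json"> tag.
    print(organization_jsonld(ENTITY_FACTS))
```

Because every domain template reads the same dictionary, a change to the approved description reaches all pages through one edit rather than three separate docs.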

How do we prioritize investments?

Prioritize by the surface where your audience actually decides. If buyers search Google for definitions and comparisons, start with AEO. If buyers ask ChatGPT and Perplexity for vendor shortlists, start with GEO. If buyers use internal or embedded AI assistants to evaluate products, start with LLMO. Most B2B SaaS teams need all three, sequenced by where pipeline currently forms.

Here is a decision matrix that maps each workflow to trigger conditions, content assets, technical signals, and metrics:

| Workflow | Prioritize when… | Core assets | Key technical signals | Primary metrics |
|---|---|---|---|---|
| AEO | You need answer extraction in Google surfaces | Concise definitions, comparison answers, FAQ blocks, HowTo steps | FAQPage, HowTo, Article schema; clean H2/H3 hierarchy | Zero-click share of voice, snippet capture, AI Overview appearances |
| GEO | You need citation share in AI-generated answers | Expert quotes, statistics, comparison tables, topical clusters, fresh authoritative guides | Evidence density, sameAs, internal linking, citations to primary sources | AI citation rates, prompt success, cross-engine coverage |
| LLMO | Brand descriptions and in-product assistant accuracy matter | Entity pages, brand fact sheet, consistent language, Wikipedia/Wikidata presence | sameAs, Knowledge Graph alignment, llms.txt, Product/Article schema, MCP feeds | Accurate extraction, reuse in AI outputs, brand-description consistency |
| SEO | You need traffic, rankings, and indexation as the base layer | Pillar pages, topical clusters, internal link graph | Crawlability, indexability, Core Web Vitals, canonical structure | Clicks, impressions, keyword rankings |

Contently, citing the foundational GEO research, reports that the study tested tactics on a 10,000-query benchmark and found that statistics, citations, and quotations could boost source visibility by up to 40%. That is the single clearest empirical argument for loading GEO content with evidence rather than opinion.

Geneo maps measurement differently across the four disciplines: AEO uses zero-click share of voice, AI citations, snippet capture, and session time; GEO uses AI citation rates, prompt successes, and cross-engine coverage; LLMO uses accurate extraction and reuse in AI outputs; SEO uses clicks, impressions, and keyword rankings. Pick the metric set that matches the surface you are actually trying to win.
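The GEO metrics above are named but not formally defined in the sources, so any calculation depends on the definitions a team picks. The sketch below assumes two: citation rate is the share of tracked prompt runs whose answer cited your domain, and cross-engine coverage is the share of tracked engines with at least one such citation. The `PromptResult` record, the engine labels, and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptResult:
    engine: str                      # illustrative label, e.g. "perplexity"
    prompt: str                      # the tracked question
    cited_domains: tuple[str, ...]   # domains cited in the answer


def citation_rate(results, domain):
    """Share of tracked prompt runs whose answer cited `domain` (assumed definition)."""
    if not results:
        return 0.0
    hits = sum(1 for r in results if domain in r.cited_domains)
    return hits / len(results)


def cross_engine_coverage(results, domain):
    """Share of engines with at least one answer citing `domain` (assumed definition)."""
    engines = {r.engine for r in results}
    if not engines:
        return 0.0
    covered = {r.engine for r in results if domain in r.cited_domains}
    return len(covered) / len(engines)


# Made-up sample data for illustration only.
RESULTS = [
    PromptResult("perplexity", "best clinic scheduling tools", ("example.com", "other.org")),
    PromptResult("perplexity", "what is geo", ("other.org",)),
    PromptResult("chatgpt", "best clinic scheduling tools", ("example.com",)),
    PromptResult("gemini", "best clinic scheduling tools", ()),
]

if __name__ == "__main__":
    print(citation_rate(RESULTS, "example.com"))
    print(cross_engine_coverage(RESULTS, "example.com"))
```

Keeping the two numbers separate matters: a high citation rate on one engine can hide zero presence on the others.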

AdsAgenz adds a useful format heuristic: AEO favors short paragraphs, bullets, and definitions; GEO favors comprehensive guides and topical clusters; LLMO favors well-organized long-form content with consistent language. In practice, a single article should carry all three — a tight answer block at the top, an evidence-dense body, and consistent entity language throughout.

How should the workflow run from onboarding to refresh?

The workflow should run as one editorial pipeline with nine sequential stages, not three parallel projects. A single QA pass, a single schema pass, and a single distribution step produce assets visible across every surface — extractive, generative, and parametric.

- Onboard the domain and stack. Inventory the current CMS or headless setup, existing content, schema coverage, robots.txt, llms.txt, and sitemap health.

- Define the site profile and entity facts. Centralize brand descriptions, canonical definitions, product facts, leadership, sameAs references, Wikidata and Wikipedia coverage, and Google Knowledge Graph alignment.

- Map target surfaces and owners. Assign AEO to SEO/content, GEO to SEO plus content plus digital PR, LLMO to shared governance. Name owners and SLAs.

- Build content templates for AEO, GEO, LLMO, and SEO. Each template enforces an answer block, evidence density, citable phrases, entity consistency, and the right Schema.org types (FAQPage, HowTo, Product, Article).

- Draft with evidence and answer blocks. Lead every section with a direct answer. Load bodies with attributed statistics, expert quotes, and comparison tables — the pattern Contently documents as the GEO tactic that lifted visibility by up to 40%.

- QA for factual consistency, schema, headings, and citation readiness. Check entity language, internal links, canonical structure, and schema validation before publish.

- Publish through the CMS. Deliver into the existing CMS or a headless front end; do not force teams to rebuild their stack.

- Monitor rankings, clicks, AI citations, AI Overview coverage, prompt success, extraction accuracy, and brand-description consistency. Use the Geneo-style metric split — each surface has its own signal.

- Refresh archives. Re-run evidence passes, update statistics, revise entity language, and re-ship old pages on a scheduled cadence.

Mentionwell is a blog engine built to operationalize exactly this pipeline: onboard a domain, define the site profile, run research-grounded drafts with AEO, GEO, LLMO, and SEO built in, publish into an existing CMS or headless stack, and run archive refreshes on schedule across one site or hundreds. For agencies and multi-site operators, the unlock is consistency — the same entity facts, templates, and QA gates applied across every domain.

Is this GEO, AEO, LLMO, or really just SEO?

It is SEO plus three additional layers, and the distinction matters because collapsing everything into "AI SEO" hides which signals are proven and which are speculative. Classic SEO remains the foundation because crawlability, indexability, structured data, internal links, topical authority, and trust signals feed every AI retrieval surface — extractive, generative, and parametric.

VOLTAGE argues that renaming SEO is not the same as rethinking it; the real shift is adapting strategy so brands show up where audiences search, not choosing the newest acronym. ExecSearches reinforces the point: strong SEO is still the foundation, and GEO, AEO, and LLMO build on top of it.

What is proven:

- Structured, accessible content with clear headings earns extraction (AEO) and synthesis (GEO).

- Evidence density — statistics, quotes, citations — lifts generative citation rates (Contently, citing the GEO study: up to 40%).

- Entity consistency across sameAs, Wikidata, Wikipedia, and Google Knowledge Graph shapes how models describe a brand (LLMO).

- Schema.org types (FAQPage, HowTo, Product, Article) remain useful for both Google answer features and AI citations.
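As a concrete illustration of the schema point, here is a minimal sketch that builds schema.org `FAQPage` markup from question and answer pairs. The `faq_jsonld` helper and the sample pair are hypothetical; the property names (`mainEntity`, `Question`, `acceptedAnswer`, `Answer`) follow the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs):
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder content. Real answers should match the visible page text.
    print(faq_jsonld([
        ("What is AEO?", "Structuring content so answer engines can extract it."),
    ]))
```

Generating the markup from the same strings that render on the page is one way to keep the schema from drifting away from the visible answer.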

What is less settled:

- The practical impact of llms.txt on model behavior is not substantiated in the research corpus reviewed here.

- robots.txt rules for specific AI crawlers are actively debated; their downstream effect on citation rates is unclear.

- MCP feeds and training-layer influence are emerging patterns, not proven tactics.

- Vendor claims about 2-6 day or 2-8 week result windows for AI visibility are not substantiated by the sources reviewed; treat timeline promises cautiously.
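Whatever a team decides about AI crawlers, it helps to verify what the current robots.txt actually permits. The sketch below uses Python's standard `urllib.robotparser` to test a policy string against a few crawler tokens. The policy text and URL are made up, and the tokens in `AI_CRAWLERS` are examples only; confirm current user-agent names in each vendor's documentation. It checks access rules and says nothing about downstream citation effects.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy only: blocks one AI crawler, allows everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Example crawler tokens (assumption: verify against vendor docs).
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def crawler_access(robots_txt, url, agents):
    """Return {agent: allowed} for `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    access = crawler_access(ROBOTS_TXT, "https://www.example.com/blog/post", AI_CRAWLERS)
    for agent, allowed in access.items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```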

The operator-minded read: invest heavily in the proven fundamentals, experiment modestly with the emerging signals, and ignore anyone promising fast AI-visibility results without evidence.

How can teams scale AEO, GEO, and LLMO without thin programmatic pages?

Scale works only when templates enforce unique evidence and editorial controls, not when they multiply boilerplate. Programmatic SEO earns citations when each page carries entity-specific facts, sourceable claims, comparison logic, and scheduled refreshes — and it produces AI-invisible sludge when it doesn't.

The format mix per article should match the surface coverage you want:

- AEO assets: concise answer blocks at the top of every page, FAQ sections for long-tail questions, HowTo steps for process content, comparison answers for versus queries. ExecSearches recommends 50–60 character unique titles, 120–155 character meta descriptions, and at least 600–800 words on priority pages to avoid thin content.

- GEO assets: long-form guides and topical clusters with dense evidence — attributed statistics, expert quotes, comparison tables, primary-source citations. This is the content shape Contently's synthesis of the GEO research identifies as most likely to be cited.

- LLMO assets: entity pages (company, product, founder, category), a canonical brand fact sheet, consistent language across all properties, sameAs references, and Wikidata/Wikipedia alignment.

- Schema layer: FAQPage, HowTo, Product, and Article types applied where content genuinely fits — not as decoration.
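The ExecSearches length guidance in the AEO item can be enforced as a pre-publish gate. This is a minimal sketch under stated assumptions: the `check_page` helper is hypothetical, lengths are counted in characters, words are split on whitespace, and the "at least 600–800 words" guidance is read as a floor of 600.

```python
TITLE_RANGE = (50, 60)    # characters, per the ExecSearches guidance
META_RANGE = (120, 155)   # characters, per the ExecSearches guidance
MIN_WORDS = 600           # assumption: lower bound of the 600-800 range


def check_page(title, meta_description, body):
    """Return a list of human-readable issues; an empty list means the page passes."""
    issues = []
    if not TITLE_RANGE[0] <= len(title) <= TITLE_RANGE[1]:
        issues.append(
            f"title is {len(title)} chars, expected {TITLE_RANGE[0]}-{TITLE_RANGE[1]}"
        )
    if not META_RANGE[0] <= len(meta_description) <= META_RANGE[1]:
        issues.append(
            f"meta description is {len(meta_description)} chars, "
            f"expected {META_RANGE[0]}-{META_RANGE[1]}"
        )
    word_count = len(body.split())
    if word_count < MIN_WORDS:
        issues.append(f"body has {word_count} words, expected at least {MIN_WORDS}")
    return issues


if __name__ == "__main__":
    for issue in check_page("Too short", "Also too short", "Only a few words here."):
        print(issue)
```

A length gate catches thin pages but not boilerplate, so it complements rather than replaces the editorial checks on unique evidence.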

For agencies and multi-site operators, the governance problem dominates. Scale requires a shared site profile per domain, shared entity facts, brand-consistent templates, editorial QA gates before publish, and a refresh cadence that updates statistics and entity language before they go stale. Scale is consistency and governance, not volume.

Mentionwell is designed for this operational shape: one research-grounded pipeline, programmatic coverage with enforced evidence and QA, AEO/GEO/LLMO/SEO built into every draft, publishing into existing CMS or headless stacks, and archive refreshes scheduled across one site or hundreds. If your team is running the AEO/GEO/LLMO workflow across multiple domains without rebuilding the stack, see how the pipeline works.

Sources

- GEO vs AEO vs LLMO: The New Search Optimization Trinity for ... (www.indegene.com)

- SEO vs GEO vs AEO vs LLMO: Shifting Strategies, Not Names (www.linkedin.com)

- GEO? AEO? LLMO? What's with all this AI SEO stuff? | Patrick Stox (blog.execsearches.com)

- What's The Difference Between SEO, AEO, GEO And LLMO (www.brandarmor.ai)