How ChatGPT decides whether to show your brand

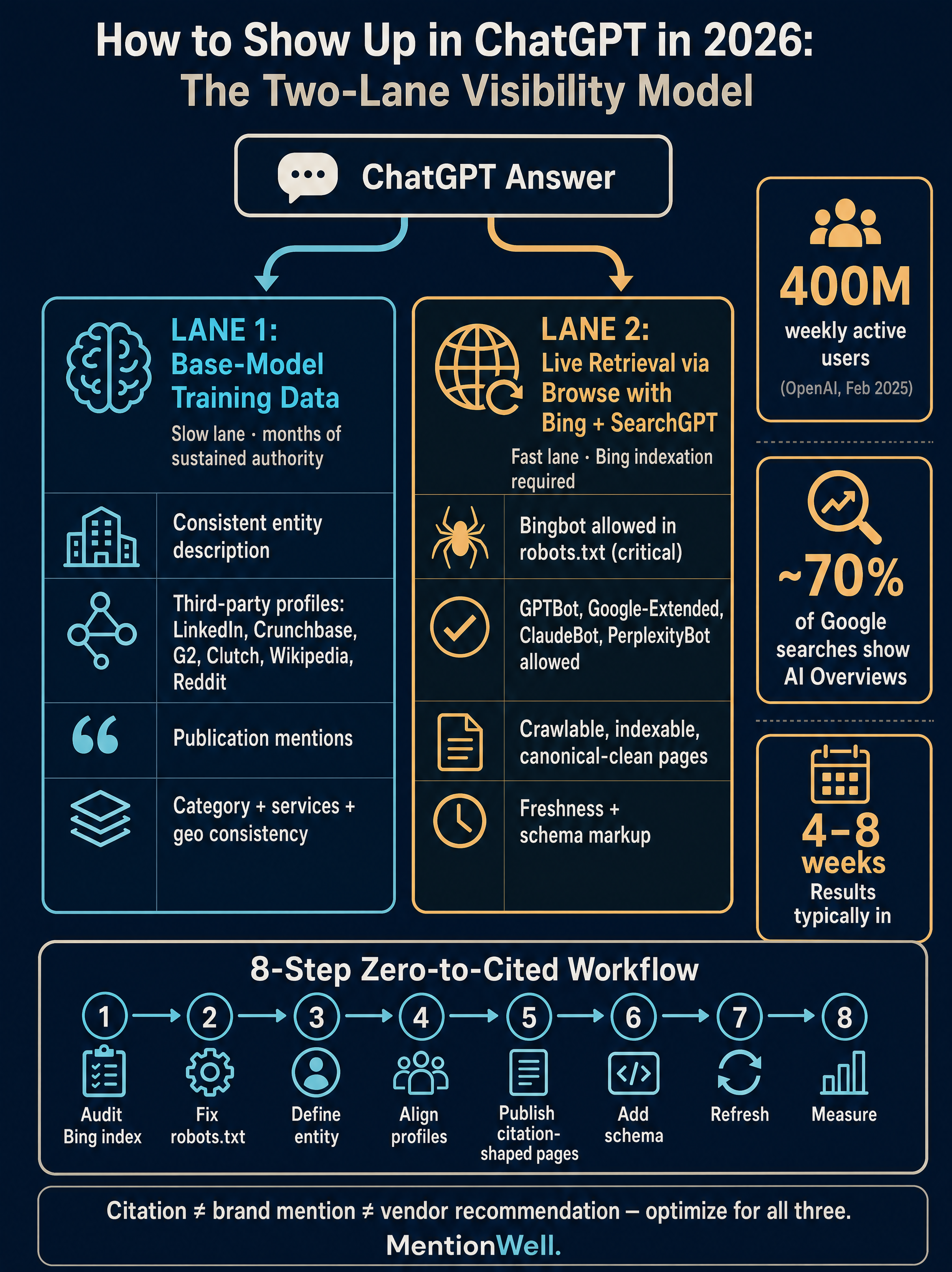

Showing up in ChatGPT means one of three distinct outcomes: being cited as a source URL, being mentioned as a brand inside the answer text, or being recommended as a vendor when a buyer asks for options. These are not the same result, and optimizing for one does not automatically produce the others. According to Digital SEO Land, ChatGPT cites sources 87% of the time but mentions brands in only 20.7% of answers — the gap between "linked" and "named" is where most B2B visibility work actually lives.

This matters because ChatGPT does not behave like Google's ten blue links. ROI Amplified notes that AI assistants typically surface one or two authoritative recommendations per query, so inclusion in the answer set is materially different — and more concentrated — than classic SERP ranking. Market-size claims vary across sources (OpenAI's 400 million weekly active users as of February 2025, per bittermelon.ai, with higher figures cited elsewhere without primary attribution), so treat absolute numbers with caution. The directional reality is clear enough: B2B buyers increasingly shortlist vendors inside ChatGPT before they ever open a search tab.

To appear in ChatGPT, a brand needs base-model entity recognition, live-retrieval eligibility through Bing, and third-party authority signals working in parallel.

What does ChatGPT actually draw from when it answers?

ChatGPT answers from two lanes running in parallel: the base model's training data, and live retrieval through ChatGPT Search, SearchGPT, or Browse with Bing. According to bittermelon.ai, these are two different problems requiring two different approaches — and most teams optimize only for the second.

The base model reflects a snapshot of the public web up to its training cutoff plus any licensed content OpenAI has ingested. Brands the model already "knows" get mentioned spontaneously without any web call. The retrieval lane, by contrast, grounds answers in real-time results — primarily Bing's index when Browse with Bing is active. Authority signals on LinkedIn, Crunchbase, G2, Reddit, and Wikipedia feed both lanes by shaping how OpenAI, Bing, and third-party sources describe your entity.

| Lane | Primary input | Optimization lever | Feedback speed |

|---|---|---|---|

| Base-model knowledge | Training data snapshot | Sustained entity authority across the web | Slow (months to model updates) |

| Live retrieval (ChatGPT Search / Browse with Bing) | Bing index + grounding pass | Bing indexation, page structure, freshness | Fast (days to weeks) |

Base-model training data visibility

Base-model visibility is the slow lane, and it rewards consistency more than campaigns. The model learns who you are by seeing the same brand name, category, service description, and geographic footprint repeated across high-authority sources over months. Solumize notes that many B2B companies optimize only for recent keyword-driven blog posts while ignoring the entity signals that shape the model's base understanding of a category — which is why they never get mentioned spontaneously.

Practically, this means your LinkedIn "About" section, Crunchbase description, Clutch profile, G2 listing, and homepage hero copy should describe the same company in the same language. Conflicting descriptions across those surfaces reduce model confidence. A brand described as a "content engine" on its homepage, an "AI writing tool" on G2, and a "marketing agency" on Clutch will not consolidate into a clean entity in the model's weights.

ChatGPT Search and Browse with Bing visibility

Live-retrieval visibility depends on being indexed where ChatGPT actually looks. According to bittermelon.ai, when ChatGPT browses, it pulls results from Bing's search index and synthesizes them into an answer — which makes Bing indexation a practical requirement, not a nice-to-have. If your pages are not in Bing, they are not eligible for citation through Browse with Bing, regardless of Google rankings.

Eligibility is not a guarantee. Being indexed means you can be retrieved; it does not mean you will be cited or that your brand will be named in the answer. The retrieval lane still filters by freshness, passage clarity, and how cleanly a chunk answers the specific prompt. Digital SEO Land reports that ChatGPT enabled search on 34.5% of queries, down from 46% in late 2024 — meaning the base-model lane handles the majority of answers and retrieval is selectively invoked. Both lanes matter, but you cannot skip either.

How does ChatGPT actually decide what to recommend?

ChatGPT does not rank pages — it synthesizes an answer from model knowledge, live web retrieval, and internal confidence signals about which entities are credible for the query. Digital SEO Land frames this as three inputs working together: training data, Bing's live index, and third-party authority signals like review sites, directories, and publications.

The most important nuance for B2B teams is the citation-versus-mention gap. Digital SEO Land's 87% citation rate and 20.7% brand-mention rate means ChatGPT will often link to a page that discusses a category without naming any vendor in the answer text. If your goal is to be the named recommendation when a buyer asks "what's the best X," you need brand mentions inside answers — which requires strong entity signals the model associates with the category, not just a ranking page.

Technical eligibility: Bing indexation, robots.txt, and crawler access

Start with the operator checklist. If crawlers cannot reach your pages, nothing else matters.

- Confirm Bing indexation. Run `site:yourdomain.com` in Bing and submit your sitemap in Bing Webmaster Tools. Bingbot is critical because ChatGPT Browse uses Bing as its retrieval layer.

- Audit robots.txt. According to bittermelon.ai, blocks against GPTBot, Google-Extended, ClaudeBot, PerplexityBot, or Bingbot can prevent AI systems from accessing your content. Allow the crawlers you want to appear in.

- Check indexable status. Ensure key pages return 200, have clean canonicals, are not noindexed, and are not buried behind JavaScript Bingbot cannot render.

- Keep sitemaps current. Freshness signals matter for retrieval; stale sitemaps delay indexation of refreshed pages.

- Decide per crawler. GPTBot (OpenAI training), Google-Extended (Gemini and Google AI Overviews training), ClaudeBot (Anthropic / Claude), and PerplexityBot each govern a different AI surface. Block selectively, not reflexively.

Allowing crawlers is a permission layer, not a ranking lever — it removes a barrier but does not guarantee citation.

Entity authority: third-party profiles and consistent descriptions

External corroboration is how the model builds confidence that you are who your site says you are. Solumize names LinkedIn, Crunchbase, Clutch, G2, Reddit, and sector directories as key surfaces, and emphasizes that conflicting business names, positioning, cities, or service descriptions reduce model confidence.

Align these fields across every profile: legal and trading name, one-sentence description, primary category, top three services, cities or regions served, and canonical homepage URL. Use the same sentence — literally — on LinkedIn, Crunchbase, and your About page where possible. On Reddit, Nathan Gotch and others caution against spammy promotional activity; authentic participation in relevant subreddits helps, but engineered mentions damage trust and can get accounts banned without improving ChatGPT visibility.

Where to start if you are starting from zero?

Work the stack in order. Skipping steps wastes cycles — you cannot earn citations on pages Bing has not indexed.

- Audit crawlability and Bing indexation. Run `site:` queries in Bing, submit sitemaps via Bing Webmaster Tools, and confirm key pages return 200 with clean canonicals.

- Fix robots.txt and technical blockers. Explicitly allow Bingbot, and decide per crawler on GPTBot, Google-Extended, ClaudeBot, and PerplexityBot based on your AI-surface priorities.

- Define the entity and site profile. Write one canonical description, one category, one service list, and one geographic footprint. This becomes the reference for every external profile.

- Align third-party profiles. Update LinkedIn, Crunchbase, Clutch, G2, Wikipedia (where eligible), and relevant sector directories to match the canonical entity definition.

- Publish citation-shaped pages. Lead with a 40–60 word direct answer, use declarative first sentences, include named statistics with sources, and add comparison tables where relevant.

- Add schema. Implement Organization and, where relevant, LocalBusiness JSON-LD with `areaServed`, `sameAs`, `description`, and service/category fields.

- Refresh content on a cadence. Live retrieval rewards freshness; archive pages need periodic updates to stay eligible.

- Measure prompts, mentions, and citations. Build a prompt set, log what appears, and repeat monthly.

Ship citation-shaped pages across your site without rebuilding your stack — Get My Site GEO Optimized with Mentionwell.

Solumize reports established domains typically see results in 4–8 weeks once this system is in place — a useful expectation-setter, though individual timelines vary by starting authority, category competitiveness, and publishing cadence.

How should a page be structured so ChatGPT can extract and cite it?

Structure pages so a single passage can answer a single intent cleanly. Solumize recommends opening key pages with a 40–60 word direct-answer block written in complete sentences — not bullets, not marketing copy. That block is what retrieval systems lift into answers. Everything below it should reinforce, extend, and structure the same claim.

The rest of the page should follow AEO, GEO, LLMO, and SEO structural norms simultaneously:

- Declarative first sentences in every section that answer the implicit question in the heading.

- Clear H2/H3 logic where each subheading maps to one sub-intent, not decorative phrasing.

- Comparison tables when a section discusses options, costs, timelines, or vendor differences — LLMs extract tabular data directly.

- Named statistics with sources. bittermelon.ai and Digital SEO Land both emphasize that attributed numbers get cited while unattributed ones get ignored.

- Conversational long-tail questions as H2s, matching how buyers actually prompt ChatGPT.

- Short, quotable summary sentences — the single sharpest claim per section is the one most likely to be lifted verbatim.

Nathan Gotch's position is worth repeating here: mass-producing AI content for publishing velocity does not survive core updates or LLM quality filters. Extraction-friendly structure only works on top of genuinely useful content. The structure makes good content citable; it does not make thin content good.

Schema markup and geographic recommendation fields

Schema tells crawlers what your entity is, where it operates, and how it maps to categories. Solumize recommends Organization schema on every page and LocalBusiness schema where physical or regional service delivery is relevant, both in JSON-LD.

Key fields to implement:

| Field | Purpose | Example value |

|---|---|---|

| `name` | Canonical entity name | Matches LinkedIn, Crunchbase |

| `description` | One-sentence entity summary | Same sentence across all profiles |

| `sameAs` | External identity corroboration | LinkedIn, Crunchbase, G2, Wikipedia URLs |

| `areaServed` | Geographic recommendation eligibility | Specific cities, regions, or countries |

| `serviceType` / `category` | Category mapping | One primary category, up to three services |

Schema alone does not create visibility. It makes entity claims machine-readable so the retrieval lane and the base-model lane have less ambiguity to resolve. Treat it as a clarity layer, not a ranking lever.

How does GEO differ from traditional SEO, AEO, and LLMO?

Generative Engine Optimization (GEO) targets AI-generated summaries and LLM answers, while traditional SEO targets blue-link rankings. Chris Raulf defines GEO as structuring content so AI-powered systems — including Google AI Overviews and standalone LLMs like ChatGPT, Claude, Gemini, and Perplexity — recognize it as authoritative, relevant, and worth surfacing. The four disciplines are complementary, not competing.

| Discipline | Primary target | Core optimization |

|---|---|---|

| SEO | Google and Bing blue links | Crawlability, backlinks, keyword relevance |

| AEO (Answer Engine Optimization) | Direct-answer snippets, featured answers | 40–60 word answer blocks, FAQ structure |

| GEO (Generative Engine Optimization) | AI Overviews, LLM answer synthesis | Passage clarity, entity context, citable structure |

| LLMO (Large Language Model Optimization) | Model-readable entity understanding | Entity consistency, schema, third-party corroboration |

SEO supports crawlability and search demand; AEO structures direct answers; GEO targets generative summaries; and LLMO improves model-readable entity context. A team running only one discipline leaves citations on the table in the others. For a deeper workflow breakdown, see [AEO vs GEO vs LLMO: Which Workflow Fits Your Team?](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team).

How to know if it is working?

There is no Search Console for ChatGPT, so measurement is a workflow, not a dashboard. Build a prompt set, test it on a cadence, and log what changes.

- Build a prompt set of 30–100 queries covering category prompts ("best X for Y"), comparison prompts ("X vs competitor"), problem prompts ("how do I solve Z"), and branded prompts ("what is [your company]").

- Run each prompt in ChatGPT, Gemini, Claude, and Perplexity with and without browsing enabled — base-model and live-retrieval results differ.

- Log three outcomes per prompt: source citation (your URL appears), brand mention (your name appears in the answer text), and vendor recommendation (you are named as a suggested option). These are separate metrics.

- Track Bing visibility in parallel using Bing Webmaster Tools for indexation, impressions, and clicks — it is the closest proxy for live-retrieval eligibility.

- Re-test monthly. Base-model updates are infrequent; live retrieval shifts weekly. A monthly cadence captures both without drowning in noise.

- Expect uneven timelines. Solumize's 4–8 week range applies to established domains with existing authority; newer domains take longer, and category competitiveness matters more than effort.

Digital SEO Land reports that outbound referral traffic from ChatGPT grew 206% in 2025 and that ChatGPT accounts for 87.4% of AI referral traffic across major industries — so referral analytics in GA4, filtered by ChatGPT source, is a useful secondary signal even though it undercounts pure brand-mention impact.

How can teams scale ChatGPT visibility without thin AI content?

Scaling citation-shaped content across many pages or many sites is a systems problem, not a writing problem. The failure mode is well known: templated programmatic SEO produces low-value pages that Bing deprioritizes and LLMs ignore. The solution is an editorial engine where templates enforce structure while research and entity context keep every page substantive.

A scalable system needs five operational layers:

- Site profile — canonical entity definition, tone, audience, competitors to exclude, CTA rules. Every page inherits from it.

- Template library — glossary pages, comparison pages, question-answer pages, and pillar pages, each with required sections (direct answer, tables, schema, citations).

- Research pipeline — per-page source gathering so every factual claim traces to a real reference, not model guesswork.

- Editorial QA — checks for attribution, entity consistency, schema completeness, and citation-shaped structure before publishing.

- Refresh cadence — scheduled updates to archive pages so live retrieval continues to surface them.

Mentionwell operationalizes this stack as an automated blog engine: onboard a domain, define the site profile, and the pipeline runs research, drafting, AEO/GEO/LLMO/SEO structuring, schema, publishing into your CMS or headless stack, and scheduled refreshes across one site or hundreds. The positioning is deliberately operational — it is a content engine for teams that need consistent citation-shaped output at scale, not a generic AI writing tool.

Which 2026 tactics are proven, experimental, or overclaimed?

Some 2026 tactics are backed by evidence; others are emerging patterns worth testing but not betting on. Sorting them honestly prevents wasted cycles.

| Tactic | Status | Notes |

|---|---|---|

| Bing indexation + robots.txt hygiene | Proven | Required for Browse with Bing eligibility (bittermelon.ai) |

| Entity consistency across LinkedIn, Crunchbase, G2, Clutch | Proven | Directly shapes model confidence (Solumize) |

| 40–60 word direct-answer blocks | Proven | Extraction-friendly for retrieval (Solumize) |

| Organization + LocalBusiness schema | Well-supported | Clarity layer, not a ranking lever |

| Regular content refreshes | Proven | Freshness influences live retrieval |

| `llms.txt` file | Experimental | No public evidence ChatGPT consumes it; treat as optional helper |

| A2A protocol, Universal Commerce Protocol, WebMCP | Emerging | Agent-interaction standards referenced by Chris Raulf; not replacements for crawlability |

| Market-size statistics in SERP content | Overclaimed | Weekly-active-user figures range widely across sources; only OpenAI's 400M (Feb 2025, per bittermelon.ai) has clear primary attribution |

The durable pattern cuts across every source from Nathan Gotch to Digital SEO Land to Solumize: crawlability, entity clarity, and citation-shaped publishing are the core stack. Emerging protocols and helper files may matter more in 18 months, but they do not replace the fundamentals today.

If you want an automated pipeline that ships AEO-, GEO-, LLMO-, and SEO-structured pages into your existing CMS and refreshes them on schedule, Get My Site GEO Optimized with Mentionwell.

Sources

- How to Show Up in ChatGPT in 2026 - YouTubewww.youtube.com

- Show up on Chat GPT: The 2026 Guide to Dominating AI Searchroiamplified.com

- How to Access ChatGPT in 2026 | Step-by-Step Beginner's Guidewww.solumize.com