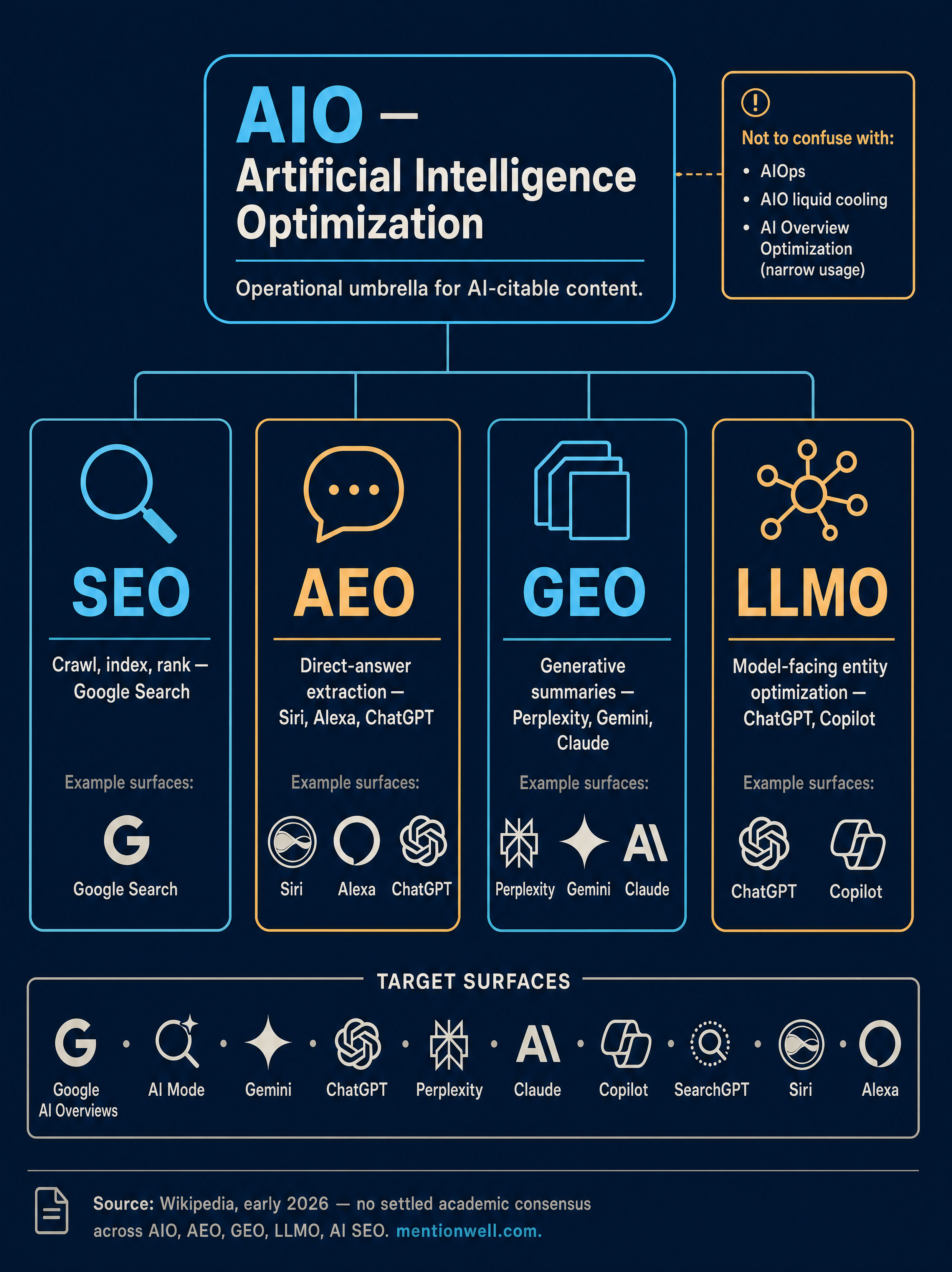

What is AIO?

AIO most usefully stands for Artificial Intelligence Optimization: the operational umbrella for making content, technical structure, and brand authority understandable, citable, and recommendable by AI systems. That is the definition EisnerAmper uses when it describes AIO as "the broader process of optimizing content, technical structure, and brand authority so you are understood, cited, and recommended across AI-driven discovery experiences" (Source: EisnerAmper).

The acronym is genuinely contested in 2026, and the confusion is worth naming directly instead of pretending it is settled.

| Usage of "AIO" | Source framing | Scope |
|---|---|---|
| Artificial Intelligence Optimization | EisnerAmper, LinkedIn practitioner content | Umbrella discipline across AI discovery surfaces |
| AI Overview Optimization | Than Institute | Narrow: Google AI Overviews only |
| AI-Powered Optimization | AIO Copilot | Strategy using AI insights across search, voice, chatbots |
| AIOps (artificial intelligence for IT operations) | AWS | Infrastructure and IT automation, unrelated to search |
| All-in-one (software, liquid coolers) | PCMag | Hardware and software category label |

According to Wikipedia, no consensus definition distinguishing GEO, AEO, LLMO, AIO, and AI SEO had been established in academic literature as of early 2026, and the terms are frequently used interchangeably in practitioner contexts. That means any article claiming a single "correct" definition is overreaching.

For the rest of this guide, AIO refers to Artificial Intelligence Optimization — the coordinating layer across Answer Engine Optimization (AEO), Generative Engine Optimization (GEO), Large Language Model Optimization (LLMO), and classic SEO. Where sources specifically mean AI Overview Optimization, we will call that out.

What is AI optimization (AIO) and how is it different from SEO?

AI optimization extends SEO; it does not replace it. SEO gets your pages crawled, indexed, ranked, and eligible in Google Search. AIO adds the editorial and structural work that makes those pages easier for AI systems to interpret, cite, synthesize, and recommend inside answers.

AOL/Stacker, republishing WebFX content, puts it directly: AI optimization is the practice of optimizing content so AI-powered platforms can find, understand, and cite it when generating answers, and it extends SEO rather than replacing it because technical SEO, crawlability, structured data, site speed, and mobile-friendliness still affect AI discoverability (Source: AOL/Stacker).

The practitioner distinction is between two different outcomes:

- SEO outcome: a blue-link ranking in Google Search that earns a click.

- AIO outcome: being selected, cited, mentioned, or recommended inside an AI-generated answer in ChatGPT, Google AI Overviews, Perplexity, Gemini, Claude, or Microsoft Copilot.

LinkedIn practitioner content frames the same shift as moving from ranking on a page to being selected inside an answer, and argues that SEO ensures crawlability, indexing, technical performance, and baseline authority, while AIO helps machines recommend a brand (Source: LinkedIn).

A note on terminology: "AI SEO" is a loose umbrella in the market, not a precise discipline. Treat it as shorthand, not as a replacement for the more specific AEO, GEO, LLMO, and AIO vocabulary.

What's the difference between AEO and GEO?

AEO optimizes for direct-answer extraction; GEO optimizes for multi-source generative summaries; LLMO optimizes how models understand your brand and entities; AIO is the umbrella that coordinates all of them alongside classic SEO.

EisnerAmper defines AEO as structuring content so systems can extract the best direct answer quickly, using clear headings, direct definitions, and consistent language, and it defines GEO as being the trusted source in multi-source AI summaries, with emphasis on content structure, depth, and E-E-A-T so a source is safe to cite (Source: EisnerAmper). EisnerAmper explicitly says AEO, GEO, and AIO work together rather than competing.

| Term | Primary goal | Representative surfaces | Main signals |
|---|---|---|---|
| AEO | Get quoted as the direct answer | ChatGPT, Claude, Siri, Alexa, featured snippets | Clear headings, definitions, Q-and-A shape, schema |
| GEO | Be cited in a synthesized multi-source summary | Google AI Overviews, Gemini, Perplexity AI | Depth, E-E-A-T, original data, structured passages |
| LLMO | Be understood and recommended by the model itself | ChatGPT, Claude, Gemini, Copilot | Entity consistency, brand signals, training-data presence |
| AIO | Coordinate AEO + GEO + LLMO + SEO as one pipeline | All of the above | Site profile, schema, refreshes, measurement |
| SEO | Rank in classic blue-link search | Google Search, Bing | Crawlability, indexing, authority, on-page relevance |

For deeper operator-level guides on each layer, see the MentionWell breakdowns on [AEO](/what-is-aeo-in-2026-answer-engine-optimization-explained), [GEO](/what-is-geo-in-2026-generative-engine-optimization-explained), [LLMO](/what-is-llmo-in-2026-large-language-model-optimization-explained), and the comparison piece [AEO vs GEO vs LLMO](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team).

Which platforms and search experiences does AIO target?

AIO targets every surface where an AI system decides which sources to cite, quote, or recommend — not just Google. That list has expanded meaningfully in 2026.

Concretely, an AIO program should plan for coverage across:

- Google AI Overviews and Google AI Mode — AI-generated answers inside Google Search.

- Google Gemini — Google's conversational assistant, with Google Bard as the legacy brand.

- ChatGPT and SearchGPT — OpenAI's assistant and search-oriented surface.

- Perplexity AI — citation-heavy answer engine.

- Claude — Anthropic's assistant, often used in Projects and Connectors contexts.

- Microsoft Copilot — Copilot Web and Work modes, backed by Bing indexation.

- Voice assistants — Siri and Alexa for conversational, short-answer queries.

Hiilite emphasizes that Google AI Overviews are dynamic and can vary by query type, location, language, and what Google believes the user is trying to accomplish (Source: Hiilite). That variability is why AIO planning starts from query clusters, not single keywords.

Enterprise AI vendors such as AI21 and IBM matter when the conversation shifts to internal model deployments, not consumer search. Treat them as out of scope unless your audience is buying model infrastructure.

How do AI Overviews change click-through rate and traditional rankings?

AI Overviews change click-through rate because attention is spent before a user reaches your listing. Dashboards that look "fine" on rankings can still lose clicks on informational queries while demand shifts toward deeper-intent pages. Reporting has to adapt, not just the content.

Hiilite breaks the click journey into three patterns (Source: Hiilite):

- Some queries end without a click because the AI Overview answered the basics.

- Some clicks go to the sources cited inside the AI Overview.

- Some clicks shift to deeper-intent queries — pricing, comparisons, local providers, next steps.

Hiilite references Pew Research Center on reduced clicks when AI summaries appear but does not give specific numeric CTR benchmarks, so none are cited here. The directional point stands: informational top-of-funnel CTR compresses, and demand moves downstream to decision content.

Google Search Central's guidance, as summarized by Hiilite, is that AI Overviews and AI Mode are part of Google Search and the usual essentials still apply: content must be helpful, accessible, and eligible to appear, including in AI formats (Source: Google Search Central via Hiilite).

If your archive is strong on informational keywords but losing clicks to AI Overviews, a structured refresh turns those pages into citation targets instead of dead ends.

The operational consequence: report on citation presence and deeper-funnel clicks alongside rankings, not instead of them.

What makes content citation-ready for answer engines and generative engines?

Citation-ready content is structured so a retrieval system can lift a self-contained, trustworthy passage and safely use it in an answer. That is the practical meaning of designing for retrieval-augmented generation (RAG): models need retrievable, accurate chunks they can attribute.

AOL/Stacker, drawing on WebFX, lists the editorial tactics that matter (Source: AOL/Stacker):

- Clear headings and direct answers near the top of each section.

- Scannable formats — lists, tables, definitions.

- Schema markup.

- Original data, expert commentary, and firsthand experience.

SEO Savages adds the structural requirements: complete answers, structured data, semantic HTML, precise facts, clear H1–H3 hierarchy, schema markup, and self-contained context (Source: SEO Savages). EisnerAmper ties the same list back to E-E-A-T, arguing that GEO requires depth and credibility so a source is safe to cite.

The browsing layer is shrinking. The evaluation layer is automated. Visibility is no longer about ranking on a page — it's about being selected inside an answer.

Practically, that means every section of a citable page should work as a standalone passage: a direct answer, a named entity, a specific fact or number, and enough context that an AI system does not need the rest of the page to quote it.
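One way to audit the "standalone passage" idea is to split a page into heading-scoped passages and flag the ones that look too thin to quote on their own. The sketch below is illustrative only: `PassageSplitter`, `split_passages`, `needs_review`, and the 40-word threshold are hypothetical names and values, not something any of the cited sources prescribe.

```python
from html.parser import HTMLParser


class PassageSplitter(HTMLParser):
    """Group visible text under the nearest preceding h1-h3 heading."""

    HEADINGS = {"h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.passages = []  # each item: {"heading": str, "text": str}
        self._in_heading = False

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self._in_heading = True
            self.passages.append({"heading": "", "text": ""})

    def handle_endtag(self, tag):
        if tag in self.HEADINGS:
            self._in_heading = False

    def handle_data(self, data):
        if not self.passages:
            return  # ignore text that appears before the first heading
        key = "heading" if self._in_heading else "text"
        self.passages[-1][key] += data


def split_passages(html):
    """Return one whitespace-normalized passage per h1-h3 heading."""
    parser = PassageSplitter()
    parser.feed(html)
    parser.close()
    return [
        {
            "heading": " ".join(p["heading"].split()),
            "text": " ".join(p["text"].split()),
        }
        for p in parser.passages
    ]


def needs_review(passage, min_words=40):
    """Assumed heuristic: a very short passage probably cannot stand alone."""
    return len(passage["text"].split()) < min_words
```

A word count is only a rough proxy. Whether a passage actually contains a direct answer, a named entity, and a specific fact still needs an editor's read.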

What technical signals matter for AIO?

Technical AIO is about eligibility and interpretation, not magic ranking factors. AI systems cannot cite what they cannot crawl, parse, or trust.

The signals that consistently show up across sources:

- Schema markup and structured data — Article, FAQPage, HowTo, Organization, Product.

- Semantic HTML — proper `h1`–`h3` hierarchy, lists, tables, `dl` for definitions.

- Crawlability and indexing — clean robots rules, sitemaps, canonical tags, reachable from both Googlebot and Bingbot (Bing powers Copilot and parts of other stacks).

- Accessibility, site speed, and mobile usability — baseline quality signals that affect eligibility.

- Machine-readable organization — consistent entity names, internal links, and clear page purpose.
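As a concrete illustration of the schema markup item above, here is a minimal sketch that builds schema.org `FAQPage` JSON-LD from question-and-answer pairs. The helper names `faq_jsonld` and `as_script_tag` are hypothetical, and the example assumes answers are plain text. Whether a given AI surface actually uses this markup is not something the sources quantify.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize the object for embedding in an HTML page."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so answer text cannot close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    faq = faq_jsonld(
        [("What is AIO?", "An umbrella across AEO, GEO, LLMO, and classic SEO.")]
    )
    print(as_script_tag(faq))
```

Generating the markup from the same question-and-answer pairs that appear on the page keeps the structured data and the visible content from drifting apart.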

AOL/Stacker frames this plainly: technical SEO, crawlability, structured data, site speed, and mobile-friendliness still affect AI discoverability (Source: AOL/Stacker). Google Search Central's position is aligned — AI formats depend on helpful, accessible, eligible web content.

Technical SEO is the foundation of AIO; without it, your editorial work is invisible to the systems you are trying to influence.
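A quick eligibility check is to confirm that robots rules do not block the crawlers you care about. The sketch below uses Python's standard-library `urllib.robotparser` against robots.txt text you supply. The function name `crawl_access`, the example rules, and the `example.com` URL are assumptions for illustration, and the standard-library parser may not interpret every directive exactly as each search engine does.

```python
from urllib.robotparser import RobotFileParser


def crawl_access(robots_txt, url, agents=("Googlebot", "Bingbot")):
    """Report whether each user agent may fetch url under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    # Hypothetical rules that accidentally block Bingbot from the blog.
    rules = "\n".join(
        [
            "User-agent: Bingbot",
            "Disallow: /blog/",
            "",
            "User-agent: *",
            "Allow: /",
        ]
    )
    print(crawl_access(rules, "https://example.com/blog/what-is-aio"))
```

A check like this only covers robots rules. Canonical tags, sitemaps, and indexing status need their own verification in Google Search Console and Bing Webmaster Tools.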

How to rank in AI Overviews?

Ranking in AI Overviews — and getting cited across ChatGPT, Perplexity, Gemini, and Claude — requires a repeatable workflow, not a one-off content sprint. SEO Savages recommends starting with live query testing to see which sources get cited and where answers are vague, incomplete, or outdated (Source: SEO Savages).

A workable operator loop:

- Select target queries from your pipeline — informational, comparison, pricing, local, and branded questions.

- Run each query in Google Search, Google AI Overviews, Google AI Mode, ChatGPT, Claude, Gemini, and Perplexity. Record cited sources and gaps.

- Identify weak answers — outdated facts, shallow summaries, missing entities, or no strong source cited.

- Map entities and question variants for each cluster so your page covers the full intent surface.

- Draft direct-answer blocks at the top of each section, followed by supporting detail, data, and examples.

- Add schema and semantic HTML — FAQPage, HowTo, Article, and clean heading hierarchy.

- Publish through your CMS or headless stack with consistent templates.

- Test citations, mentions, and recommendations across the same AI surfaces one to four weeks after publishing.

- Refresh archives on a cadence — dates, stats, entity lists, and direct answers degrade quickly in AI search.
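The "record cited sources and gaps" step in the loop above can be kept in a very small data structure. The following is a sketch under stated assumptions: `QueryTest`, `citation_gaps`, and the field names are hypothetical, and the cited domains are assumed to be entered by hand or by whatever tracking tool the team already uses.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class QueryTest:
    """One observation: a query run on one AI surface."""

    query: str
    platform: str
    cited_domains: list = field(default_factory=list)
    notes: str = ""  # e.g. "answer outdated", "no strong source cited"


def citation_gaps(tests, our_domain):
    """Map each query to the platforms where our_domain was not cited."""
    gaps = defaultdict(list)
    for test in tests:
        if our_domain not in test.cited_domains:
            gaps[test.query].append(test.platform)
    return dict(gaps)


if __name__ == "__main__":
    log = [
        QueryTest("what is aio", "Perplexity", ["example.com", "other.org"]),
        QueryTest("what is aio", "ChatGPT", ["other.org"], notes="shallow summary"),
        QueryTest("aeo vs geo", "Gemini", []),
    ]
    print(citation_gaps(log, "example.com"))
```

Re-running the same log one to four weeks after publishing gives a before-and-after view per query and platform.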

This is where a blog engine earns its keep. MentionWell operationalizes the full loop: onboarding a domain, defining a site profile, running pipeline stages that bake AEO, GEO, LLMO, and SEO into every draft, delivering into an existing CMS or headless stack, and handling archive refreshes and programmatic SEO governance so multi-site operators do not ship thin templated pages.

For platform-specific workflows, see the MentionWell guides on [Google AI Overviews](/how-to-show-up-in-google-ai-overviews-in-2026), [ChatGPT](/how-to-show-up-in-chatgpt-in-2026), [Perplexity](/how-to-show-up-in-perplexity-in-2026), [Gemini](/how-to-show-up-in-google-gemini-in-2026), [Claude](/how-to-show-up-in-claude-in-2026), and [Microsoft Copilot](/how-to-show-up-in-microsoft-copilot-in-2026).

How should teams measure AIO success beyond rankings and traffic?

AIO reporting tracks citation presence and recommendation share across AI surfaces, alongside — not instead of — classic rankings, impressions, CTR, organic traffic, and conversions. Wikipedia's AIO entry explicitly points at brand mentions, cited URLs, domains, and share of voice as the newer metrics that matter (Source: Wikipedia).

A practical reporting model for sophisticated teams:

| Metric | What it tells you | Measured where |
|---|---|---|
| Cited URLs and domains | Whether specific pages earn AI citations | Manual and automated query testing across ChatGPT, AI Overviews, Perplexity, Gemini, Claude, Copilot |
| Brand mentions | Whether your brand surfaces even without a link | Same AI surfaces |
| Recommendation presence | Whether you appear when the user asks for a vendor or tool | ChatGPT, Gemini, Claude, Copilot |
| Share of voice across AI answers | Your citation density vs competitors across a query set | Tracked query clusters |
| Platform coverage | How many AI surfaces cite you for your core topics | Query matrix by platform |
| Query-cluster visibility | Citation rate across a defined cluster (e.g., "AIO definitions") | Rolling weekly or monthly test |
| Deep-funnel clicks | Shifts toward pricing, comparisons, next-step pages | GA4, server logs, CRM |
| Classic SEO metrics | Rankings, impressions, CTR, organic traffic, conversions | Google Search Console, analytics |

Treat citation metrics as leading indicators and classic SEO metrics as lagging confirmation. Both are required to run a defensible AIO program.
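Two of the metrics in the table, share of voice and platform coverage, reduce to simple ratios once citations are logged. The sketch below assumes one possible definition of each: share of voice as a domain's fraction of all citations observed across the query set, and platform coverage as the fraction of tested platforms that cited the domain at least once. The sources do not fix a formula, and the function names and observation format are hypothetical.

```python
from collections import Counter


def share_of_voice(observations, domain):
    """Fraction of all observed citations that belong to domain.

    observations: iterable of (platform, query, cited_domains) tuples.
    A domain counts at most once per answer.
    """
    counts = Counter(d for _, _, cited in observations for d in set(cited))
    total = sum(counts.values())
    return counts[domain] / total if total else 0.0


def platform_coverage(observations, domain):
    """Fraction of tested platforms that cited domain at least once."""
    platforms = {platform for platform, _, _ in observations}
    citing = {platform for platform, _, cited in observations if domain in cited}
    return len(citing) / len(platforms) if platforms else 0.0


if __name__ == "__main__":
    data = [
        ("ChatGPT", "what is aio", ["example.com", "other.org"]),
        ("Perplexity", "what is aio", ["other.org"]),
        ("Gemini", "what is aio", []),
    ]
    print(share_of_voice(data, "example.com"))
    print(platform_coverage(data, "example.com"))
```

Whatever definition a team picks, keeping it fixed across reporting periods matters more than the exact formula, since the value of these numbers is in the trend.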

If your team is tired of publishing SEO content that ranks but never gets cited, MentionWell runs the research, structure, schema, and refresh cadence that turn blog archives into citation surfaces across AEO, GEO, LLMO, and SEO.

Sources

- AI Overview Optimization: The Complete AIO Guide 2026 (www.thaninstitute.com)

- The Rise of AIO (AI Optimization): What Every Marketer Must ... (www.linkedin.com)

- AI Search Optimization In 2026: AEO, GEO & AIO | EisnerAmper (eagrowthsolutions.com)

- What Is AIO? A Guide to AI Optimization for Staffing Firms (www.haleymarketing.com)

- Artificial intelligence optimization (en.wikipedia.org)