What Is LLMO (Large Language Model Optimization)?

LLMO stands for Large Language Model Optimization: the practice of structuring content, websites, and brand presence so large language models can discover, understand, extract, cite, mention, and recommend them inside AI-generated answers. It is how brands stay visible in 2026 when users ask ChatGPT, Google AI Overviews, Gemini, Claude, Perplexity, or Microsoft Copilot a question and never click a blue link.

The purpose is not ranking — it is inclusion. According to Search Engine Land, LLMO is aimed at getting a brand "mentioned, cited, and recommended within conversational AI responses, even when users do not click through to the website." That is a different primitive than the ten blue links SEO was built for.

LLMO is the discipline of earning citations and mentions inside AI-generated answers produced by OpenAI, Google, Anthropic, Microsoft, and Perplexity — not rankings on a results page.

A few framings to hold in mind before going deeper:

- Evergreen Media notes that GAIO, AIO, GEO, and LLMO are all used in the market to describe optimizing for LLMs and AI search engines, with no single accepted term.

- ClickPoint defines LLMO as writing and structuring content "so it can be accurately understood, extracted, and reused by AI systems such as Google's AI Overview, ChatGPT, Gemini, and Claude."

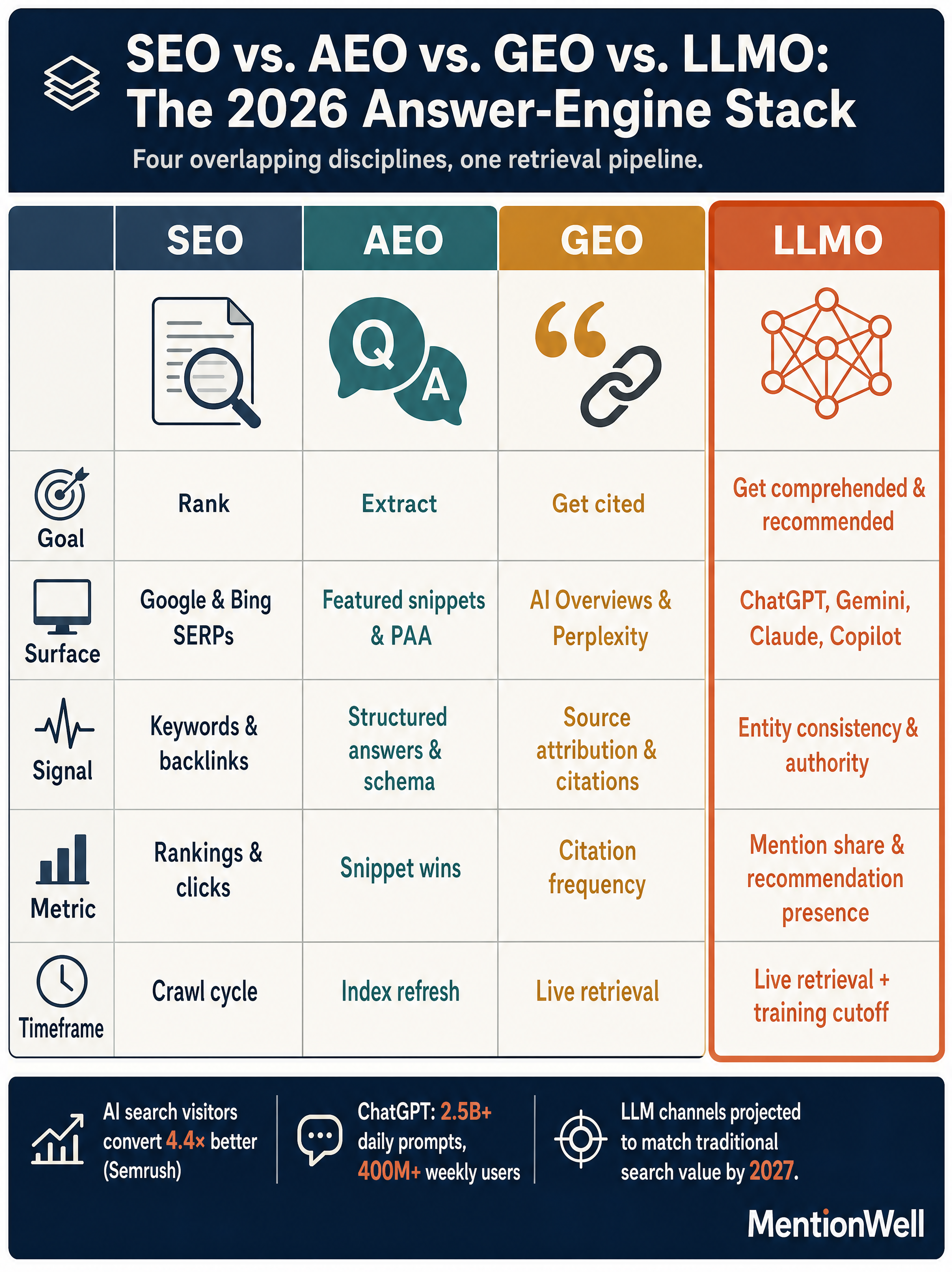

- Search Engine Land reports, citing Semrush research, that AI search visitors convert 4.4x better than traditional organic search visitors, and that LLM traffic channels are projected to drive as much business value as traditional search by 2027.

The practical implication: organic clicks have been eroding since AI Overviews launched in May 2024 (Source: Search Engine Land), but the users who do arrive from LLM surfaces are more valuable. LLMO is how you stay in the answer set that shapes their decision before they ever land on your page.

How Do LLM-Powered Search Engines Work?

Large language models reach your content through two separate pathways, and LLMO only works if you optimize for both. LLMrefs describes these as the training-data pathway and the live-retrieval pathway — base-model knowledge versus real-time context fetched at query time.

Training data

Foundation models like GPT-4, Gemini, and Claude are pre-trained on massive text corpora. LLMrefs identifies Common Crawl as a primary public archive used in LLM training, and notes that repeated brand mentions across the web can add data points to future training sets. What the model "knows" about your category, your product, and your competitors is frozen at a cutoff date — and you cannot edit the weights after the fact.

Real-time retrieval and RAG

Retrieval-Augmented Generation (RAG) grounds answers in external sources pulled live at query time. Evergreen Media describes RAG as a framework that "improves the quality of LLM-generated answers by grounding the model in external knowledge sources… and reduces the need for constant retraining." This is the pathway you can influence today.

According to LLMrefs, the retrieval plumbing differs by platform: ChatGPT primarily runs live searches through Bing, Perplexity uses its own crawler plus additional sources, and Google AI Overviews pull from Google's index. Gemini exposes a grounding API for developers doing the same thing inside applications.

Fan-out queries

LLMrefs also explains that AI systems often break a full user prompt into smaller fan-out sub-queries, then retrieve against each one separately. Content that only targets the full conversational question will miss the sub-queries the model actually runs. A page about "best CRM for B2B SaaS" needs to also answer sub-questions like pricing, integrations, and migration — because those are what the model retrieves against under the hood.

SEO vs. AEO vs. GEO vs. LLMO: What's the Difference?

SEO optimizes for rankings on a results page, AEO optimizes for extractable answer content, GEO optimizes for citation visibility inside generative answers, and LLMO optimizes for model-layer comprehension, entity consistency, and conversational inclusion. The four disciplines overlap but target different surfaces, and the market uses the acronyms inconsistently.

Here is the cleanest separation, drawing on how Search Engine Land, Machine Relations, and Green Lotus frame each term.

| Discipline | Primary focus | Main surfaces | Success metric |

|---|---|---|---|

| SEO | Crawl, index, rank | Google, Bing results pages | Rankings, organic clicks |

| AEO (Answer Engine Optimization) | Extractable answer content | Google AI Overviews, featured snippets | Snippet captures, answer inclusion |

| GEO (Generative Engine Optimization) | Citation visibility in generative answers | Perplexity, ChatGPT Search, AI Mode | Cited URLs, source diversity |

| LLMO | Model-layer comprehension, entity consistency, conversational inclusion | ChatGPT, Gemini, Claude, Copilot | Mentions, recommendations, context accuracy |

The distinctions matter operationally. Machine Relations separates base-model knowledge from real-time retrieval, arguing that LLMO addresses "authoritative entities, frameworks, and facts baked into model weights," while GEO and AEO primarily address retrieval. Green Lotus frames AEO as the tactic of creating extractable answer content and LLMO as the broader strategy of building brand authority through E-E-A-T and Entity SEO.

You will also see AIO, GAIO, LLM SEO, Google SGE, and SGE used as near-synonyms. Do not collapse them into "AI SEO" in a strategy doc — the tactics behind each one are different enough that the vagueness will cost you.

For deeper treatment of the adjacent disciplines, see our companion guides on [What Is GEO in 2026](/blog/what-is-geo-in-2026-generative-engine-optimization-explained), [What Is AEO in 2026](/blog/what-is-aeo-in-2026-answer-engine-optimization-explained), and [AEO vs GEO vs LLMO: Which Workflow Fits Your Team](/blog/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team).

Will LLMO Replace SEO in 2026?

No. LLMO builds on SEO. LLMrefs states this directly: "LLM SEO is not a replacement for traditional search engine optimization. It builds on the same foundations. Quality content, technical health, authority, and trust all still matter."

The mechanics reinforce the point. If ChatGPT retrieves through Bing and Google AI Overviews pulls from Google's index (Source: LLMrefs), then your page has to be crawlable, indexable, and discoverable in those systems before any LLM can cite it. Classic SEO fundamentals — technical health, internal links, E-E-A-T, Entity SEO, Bing and Google index visibility — are the prerequisites that determine whether your content enters the retrieval context window at all.

What changes is the scoreboard. Old success signals were rankings and clicks. LLMO signals are citations, mentions, recommendations, and context accuracy — whether the model names you, links you, suggests you, and describes you correctly. Semrush research (via Search Engine Land) projects LLM traffic channels will match traditional search in business value by 2027, which is a reason to add LLMO, not to abandon the SEO stack feeding it.

How Do You Optimize for LLMs?

Optimizing for LLMs is an eleven-step operational workflow that runs from prompt mapping through archive refreshes — not a one-time content audit. The sequence below is synthesized from Pipeline Velocity's LLMO playbook, BlogSEO's answer-block methodology, and the on-page/off-page split Evergreen Media recommends.

- Map personas and prompts. List the conversational questions your buyers ask an LLM during research, evaluation, and purchase. Pipeline Velocity calls this mapping AI search personas and prompts — it is the foundation of everything downstream.

- Identify fan-out subqueries. For each primary prompt, enumerate the shorter sub-questions an LLM is likely to run behind the scenes. LLMrefs emphasizes that visibility on sub-queries often decides whether you get cited at all.

- Draft answer blocks. BlogSEO recommends opening each target page with a 40- to 60-word direct answer to the conversational query, written in sentences under 20 words for token efficiency.

- Support with citable evidence. Add attributed statistics, named sources, and concrete examples. LLMs preferentially cite content that already cites its own sources cleanly.

- Use descriptive H2/H3 structure. Headings should be the questions your audience actually asks. This is how AEO answer extraction works and it improves LLM segmentability.

- Add schema and structured data. FAQ, Article, Organization, and Product schema help both classic crawlers and retrieval systems parse your page into citable fragments.

- Name entities explicitly and consistently. Your company, product, people, and partners need to be written the same way across the site, your About page, press mentions, Wikipedia-adjacent sources, and aggregator profiles. Entity consistency is the backbone of LLMO.

- Build topical hubs with internal links. Pipeline Velocity recommends building an LLMO content hub — a cluster of interlinked pages that demonstrate topical authority on a well-defined subject area.

- Strengthen off-page signals. Evergreen Media is explicit that off-page LLMO requires presence on databases, aggregator sites, and digital PR. Machine Relations adds that repeated third-party mentions feed future training sets via Common Crawl.

- Publish through your CMS or headless stack. Whatever the delivery layer, the published URL must be crawlable by Googlebot, Bingbot, GPTBot, ClaudeBot, and PerplexityBot.

- Refresh on a cadence. Hashmeta lists regular content updates as a core LLMO strategy. Stale facts and outdated statistics get filtered out of retrieval contexts.

Which AI Platforms Matter for LLMO?

Treat each AI surface as a distinct retrieval system, not one interchangeable "AI" channel. The platforms use different indexes, different crawlers, and different citation behaviors — a page that gets cited in Perplexity may be invisible in Copilot, and vice versa.

| Surface | Retrieval source | Priority for most B2B teams |

|---|---|---|

| ChatGPT / ChatGPT Search / SearchGPT | Bing index + OpenAI crawlers | High |

| Google AI Overviews / AI Mode | Google index | High |

| Google Gemini | Google index + Gemini grounding API | High |

| Perplexity | Own crawler + additional sources | High |

| Microsoft Copilot / Bing Chat | Bing index | Medium–High |

| Claude | Mixed retrieval + web search | Medium |

| Grok, Meta AI, DeepSeek, Amazon Nova, Microsoft Phi | Varies by deployment | Lower / test case by case |

Retrieval attribution draws from LLMrefs, which notes ChatGPT's Bing dependence, Perplexity's own crawler, and Google AI Overviews pulling from Google's index.

For platform-specific workflows, see [How to Show Up in ChatGPT](/blog/how-to-show-up-in-chatgpt-in-2026), [Google AI Overviews](/blog/how-to-show-up-in-google-ai-overviews-in-2026), [Google Gemini](/blog/how-to-show-up-in-google-gemini-in-2026), [Claude](/blog/how-to-show-up-in-claude-in-2026), [Perplexity](/blog/how-to-show-up-in-perplexity-in-2026), [Microsoft Copilot](/blog/how-to-show-up-in-microsoft-copilot-in-2026), [Grok](/blog/how-to-show-up-in-grok-in-2026), [Meta AI](/blog/how-to-show-up-in-meta-ai-in-2026), and [DeepSeek](/blog/how-to-show-up-in-deepseek-in-2026).

What Should Marketers Measure for LLMO Success?

A defensible LLMO measurement model tracks citation behavior across a fixed prompt set over time, not vague "AI visibility." The repeatable model has three layers: coverage, outcomes, and retrieval readiness.

Coverage — what fraction of your target prompts and fan-out sub-queries return an answer that mentions or cites you.

- Prompt set coverage (primary prompts)

- Fan-out query coverage (sub-queries)

- Surface coverage (ChatGPT, Gemini, Claude, Perplexity, Copilot, AI Overviews)

Outcomes — what the model actually does with you when you appear.

- Citation frequency (named source with link)

- Mention share (brand named without link)

- Recommendation presence (model suggests you as an option)

- Cited URL (which specific page gets pulled)

- Answer accuracy and context accuracy (does the model describe you correctly?)

- Source diversity (how many of your pages get cited, not just one)

Retrieval readiness — the upstream signals that determine whether you can be cited at all.

- Crawl and index status across Bing and Google

- Entity consistency across owned properties and third-party sources

- Freshness and refresh recency

Classic tooling from Ahrefs and Semrush still matters for the retrieval-readiness layer — ranking, backlinks, and index coverage directly feed what LLMs can pull. But pair them with a citation-tracking workflow that runs your prompt set against each AI surface on a regular cadence.

Can I Optimize for LLMO Retroactively?

Partially. You can dramatically improve live-retrieval citability today, but you cannot retroactively edit what the model already "knows" about you. Machine Relations draws this line clearly: base-model knowledge reflects training data before the cutoff date and cannot be changed after release; brands can only influence future model versions by building earned authority now.

What you can fix immediately on an existing archive:

- Add 40- to 60-word answer blocks to evergreen pages

- Attribute statistics and claims with named sources

- Add FAQ, Article, and Organization schema

- Reconcile entity naming across the site, About page, and author bios

- Rebuild internal links around topical clusters

- Unblock GPTBot, ClaudeBot, PerplexityBot, and other retrieval crawlers in robots.txt

- Resubmit refreshed URLs to Google and Bing

What only compounds over time:

- Third-party mentions that land in Common Crawl and feed future training runs

- Consistent entity signals across aggregators, databases, and PR

- Category-defining content that other authoritative pages cite back to you

Machine Relations adds a useful nuance: for broad category research queries, retrieval may not even trigger, which means base-model authority dominates. For current events, product comparisons, or specific buying questions, retrieval activates and GEO/AEO tactics matter more. Plan for both.

What Are the Most Common LLMO Mistakes?

The most common LLMO mistakes are treating it as keyword stuffing, collapsing AEO/GEO/LLMO/SEO into one "AI SEO" bucket, and confusing LLMO with model-deployment disciplines like LLMOps. The recurring failure modes across sophisticated teams all trace back to the same root cause: treating LLMO as a surface-level content tweak rather than a systems problem.

- Treating LLMO as keyword stuffing. LLMs reward comprehension and extractability, not density. ClickPoint notes that keyword padding and formulaic SEO structures no longer work.

- Collapsing AEO, GEO, LLMO, and SEO into one "AI SEO" bucket. The tactics behind each discipline diverge. Lumping them together hides the gaps in your workflow.

- Confusing LLMO with LLMOps, MLOps, or FMOps. These are model-deployment disciplines — an IEEE search result for LLMO actually returns LLMOps material, which is unrelated to content visibility.

- Chasing unrelated acronym results. Pages targeting llmoai.net-style SERPs or using LLMO to mean "adapting LLMs for marketing tasks" (as one Applabx result does) are not about AI-search visibility at all.

- Publishing low-value programmatic templates. Scaling glossary-style coverage without strong editorial controls produces repetitive pages that LLMs ignore.

- Omitting evidence and attribution. Unsourced claims are hard for models to trust and harder to cite.

- Blocking retrieval crawlers. If GPTBot or PerplexityBot can't reach the page, nothing else you do matters.

- Leaving stale facts and ignoring off-page signals. Freshness and third-party mentions are both retrieval inputs.

Mentionwell is built to run AEO, GEO, LLMO, and classic SEO as one editorial pipeline — onboarding, site profile, research, entity-consistent drafts, schema, CMS or headless publishing, and archive refreshes across one site or hundreds. If you want that system working on your domain, Get My Site GEO Optimized.

Sources

- Large Language Model Optimization (LLMO) explainedwww.evergreen.media

- What Is LLMO? Will it Replace SEO in 2026? - The ClickPoint Blogblog.clickpointsoftware.com

- What Is LLMO? Optimize Content for AI & Large Language Modelssearchengineland.com

- What Is LLMO? Guide to Large Language Model Optimizationgaurav-sharma11.medium.com

- Large Language Model Optimization (LLMO) Guidewww.pipelinevelocity.com

- What Is LLM Optimization (LLMO)? The New Frontier of SEOwww.ranktracker.com