What Is Penfriend AI?

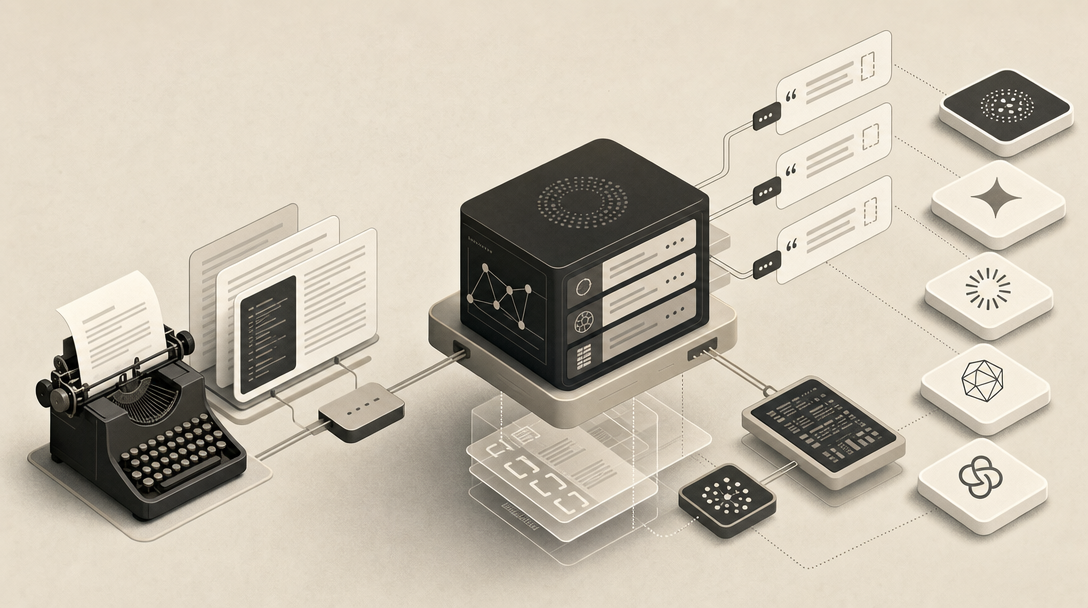

Penfriend.ai is a focused long-form blog drafting platform that turns a keyword or topic into a structured, SEO-oriented article — not a pen-pal service, not the unrelated Global Penfriend correspondence network, and not the Penpal-Gate controversy that clutters the SERP. The product is built around one job: generate a long-form draft fast, in the writer's voice, with prompting handled internally.

The system runs on a feature called Penny. According to Penfriend, Penny works out search intent for a topic, generates 40+ titles, judges them against what gets clicks, and then drafts the article. Penfriend says Penny was built on over 2,100 hours of prompt engineering and is positioned to "win search and LLMs in 2026" (Source: Penfriend.ai).

That positioning is where the operator question gets interesting. Penfriend is built to ship a polished first draft; whether that draft becomes citable by ChatGPT Search, Perplexity, Gemini, Claude, Bing Copilot, or Google AI Overviews is a separate engineering problem. Polished prose and citation-ready content are different layers of the stack. One is a writing problem. The other is a publishing-infrastructure problem involving schema, crawler access, markdown mirrors, llms.txt, and refresh cadence.

Throughout this guide, the question is not whether Penfriend writes well. It writes well. The question is what has to happen after the draft exists for AI answer engines to actually read, parse, and quote it.

How Many Clicks Does It Take to Make an Article With Penfriend?

According to Penfriend's own ChatGPT comparison page, you can produce an article in as little as 3 clicks and 6 minutes, because all the prompt engineering is handled inside the tool (Source: Penfriend.ai).

The workflow looks like this:

- Enter a keyword or topic. Penny works out search intent and generates 40+ candidate titles, scored against expected clickability.

- Pick a title. Penfriend builds a structured outline using a dedicated prompt for each section rather than one-shotting the full article — a design choice flagged in third-party reviews (Source: hellodavelin.com).

- Generate the draft. Penfriend's stated average output is 2,850 words per article, with three free articles available on trial (Source: Penfriend.ai).

- Hand off to a human reviewer for fact-checking, voice tuning, examples, and product accuracy.

| Stage | Click count | Approx. time | Human input required |
|---|---|---|---|
| Topic → titles | 1 | seconds | low |
| Title → outline | 1 | ~1 min | low |
| Outline → full draft | 1 | ~5 min | none |
| Editorial review | n/a | hours | high |

Is Penfriend a Publish-Ready Platform or a First-Draft Engine?

Penfriend is a first-draft engine, and the company's own blog says so. Penfriend's Best AI Writing Software article describes the product as "a strong first draft for a team to edit and enrich with personal experience" (Source: Penfriend.ai). Third-party reviewers reach the same conclusion: Zuleika LLC writes that Penfriend AI is "best used as a first-draft engine paired with human editing, not as a publish-ready, zero-oversight solution," and HelloDaveLin describes the output as "realistic, human-like first-draft copy within minutes."

That is a useful, honest framing — but it sits in tension with Penfriend's homepage marketing, which leans on "high-authority content" and "win search and LLMs in 2026." A reader skimming the homepage might assume the draft ships. It doesn't, and it shouldn't.

The editorial layer Penfriend explicitly leaves to the human team includes:

- Fact verification and citation checking

- Source selection and link insertion

- Brand nuance, edge cases, and product accuracy

- Subject-matter-expert input on technical claims

- Concrete examples drawn from real customer work

- Personal experience and first-party data

Human-like writing and citation-ready publishing infrastructure are different products, and conflating them is the most expensive mistake a content team can make in 2026. A draft that reads like a senior writer wrote it can still be invisible to GPTBot, ClaudeBot, and PerplexityBot if the page lacks structured data, extractable summaries, and crawler access. Penfriend solves the writing problem cleanly. The infrastructure problem is downstream.

Does Penfriend Create SEO-Optimized Articles?

Yes — Penfriend is explicitly designed for classic SEO. According to Penfriend, articles are "search aligned, follow SEO best practices, use up-to-date information," and are built around the SEO expertise of a content strategist with over 10 years of experience (Source: Penfriend.ai). Average output is 2,850 words, the format is long-form blog only, and the company claims Penny can compress a two-week content project into a one-day job.

What that covers well:

- Keyword and search-intent targeting via Penny

- Long-form structure with H2/H3 hierarchy

- Voice cloning from user inputs and PDFs

- Title generation tested against click likelihood

- Section-by-section prompt design instead of single-shot generation

What classic SEO alignment does not automatically include:

| Optimization layer | Penfriend covers? | Required for AI citations |
|---|---|---|
| SEO (rankings) | Yes | Helpful, not sufficient |
| AEO (answer engines) | Partial (depends on draft structure) | Yes |
| GEO (generative engines) | Not addressed in source material | Yes |
| LLMO (crawler/retrieval access) | Not addressed in source material | Yes |

Ranking on Google and getting cited by ChatGPT Search are governed by overlapping but distinct signals. Penfriend's documentation focuses on the first. The second requires schema, markdown mirrors, llms.txt, and answer-first content shapes, a stack Penfriend's public material does not describe. For a deeper breakdown of how these layers interact, see AEO vs GEO vs LLMO: Which Workflow Fits Your Team?

How Does Penfriend Stack Up Against ChatGPT and Other Article Tools?

Penfriend's value proposition is narrower and sharper than general-purpose tools. It is a prompt-less article generator built for one job — long-form blog articles — rather than a flexible writing assistant or an SEO optimization layer.

| Tool | Primary job | Prompting | Best fit |
|---|---|---|---|
| Penfriend | Long-form SEO article drafting | Built-in (Penny) | Teams that want polished first drafts fast |
| ChatGPT | General-purpose generation | User-built | Custom workflows, varied formats |
| Frase.ai | SERP-driven brief + draft | Hybrid | SEO teams optimizing against competitors |
| Surfer | On-page optimization scoring | Hybrid | Editors tuning existing drafts to rank |
| ZimmWriter | Bulk article generation | Templated | Affiliate and high-volume operators |
| Jasper AI | Marketing copy across formats | User-built | Brand and campaign teams |
| Rytr / Writesonic / Anyword | Short-form + ad copy | User-built | Performance marketing |

Penfriend's edge is that the prompt engineering is already done. Per the company, over 2,100 hours went into building Penny's prompts (Source: Penfriend.ai). That removes a real source of variance in ChatGPT-based workflows. But none of these tools — Penfriend, ChatGPT, Frase, Surfer, or the rest — automatically produce schema, markdown mirrors, FAQPage JSON-LD, llms.txt, sitemap signals, or refresh operations. Those are publishing-engine concerns, not drafting concerns.

Drafts are easy. Citations are infrastructure. If your bottleneck is the gap between a polished draft and a page that actually gets cited in ChatGPT Search and Google AI Overviews, Get My Site GEO Optimized handles the publishing layer that no drafting tool covers.

Are Penfriend Articles Safe for Google—and Are They Citation-Ready for AI Engines?

AI-assisted content is not, on its own, a Google problem. Google's published guidance treats helpfulness, accuracy, originality, and editorial review as the criteria that matter. A well-edited Penfriend draft with real sources, first-party examples, and SME review can rank — the same way a well-edited draft from any tool can rank.

The bigger question is citation readiness for AI answer engines: ChatGPT Search, Claude, Gemini, Grok, Perplexity, Bing Copilot, and Google AI Overviews. That requires more than helpful prose.

A draft that ranks on Google can still be invisible to GPTBot, ClaudeBot, and PerplexityBot.

Penfriend's claim that its content will "win search and LLMs in 2026" is, based on the publicly available material, unsupported by citation data, AI Overview screenshots, Perplexity citation logs, or third-party benchmarks. That doesn't mean the claim is wrong — it means the evidence isn't shown. Operators evaluating any AI writing tool in 2026 should treat "wins LLMs" claims as marketing until the vendor produces actual citation data from ChatGPT Search, Perplexity, or Google AI Overviews.

The honest version: Penfriend produces drafts that could be cited if the surrounding publishing layer does its job. Without that layer, prose quality alone doesn't earn citations. For the underlying mechanics, see How to Show Up in ChatGPT in 2026 and How to Show Up in Google AI Overviews in 2026.

What Technical Artifacts Help AI Answer Engines Read, Parse, and Cite Content?

The artifacts that make content citable by AI answer engines are structured data, extractable summaries, machine-readable feeds, and explicit crawler access — specifically Article and FAQPage JSON-LD, answer-first opening sentences, source-backed claims, glossary-shaped definitions, per-article markdown mirrors, RSS and JSON Feed, sitemaps, llms.txt, llms-full.txt, and robots.txt rules that admit GPTBot, ClaudeBot, and PerplexityBot. AI engines don't read your blog the way a human does — they crawl it, parse it, retrieve it, and decide whether to cite it based on extractable structure.

The full artifact list:

- Article and FAQPage JSON-LD. Structured data that tells crawlers what the page is, who wrote it, what questions it answers, and how the answers are framed.

- Answer-first summaries. A 1–2 sentence direct answer at the top of each section, written so an LLM can lift it verbatim.

- Source-backed claims. Inline attribution to named sources with URLs — not "studies show."

- Extractable definitions. Glossary-shaped sentences ("X is a Y that does Z") that match how LLMs build entity associations.

- Markdown mirrors. A .md version of every article served at a predictable endpoint, because markdown gives LLM crawlers the content without the layout, navigation, and script noise of rendered HTML.

- RSS, JSON Feed, and sitemaps. Machine-readable change signals that crawlers use to discover new and updated content.

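- llms.txt and llms-full.txt. Markdown files at the site root that give LLMs a curated map of the site: llms.txt links to the most important pages with short descriptions, and llms-full.txt (a common companion convention) inlines their full text. Crawler permissions themselves are set in robots.txt, not in these files. See What Is LLMs.txt in 2026? for the format.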

- Crawler-friendly access for GPTBot, ClaudeBot, and PerplexityBot. Robots.txt rules, server-level allowlists, and no aggressive bot-blocking on the edge.
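To check the crawler-access item against a real robots.txt, Python's standard-library `urllib.robotparser` can evaluate the rules for each of the user-agent tokens named above. This is a minimal sketch that assumes you have already fetched the robots.txt text. The sample rules, the `crawler_access` helper, and the `example.com` URL are hypothetical. Keep in mind that robots.txt is only one layer: an edge firewall or bot-blocking service can still refuse these crawlers even when robots.txt allows them.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens for the AI crawlers discussed in this guide.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Report whether each AI crawler may fetch `url` under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Hypothetical robots.txt: blocks GPTBot site-wide, allows every other agent.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    print(crawler_access(SAMPLE_ROBOTS, "https://example.com/blog/penfriend-review"))
    # → {'GPTBot': False, 'ClaudeBot': True, 'PerplexityBot': True}
```

A site that accidentally disallows one of these agents shows up immediately as a `False` entry, which is easier to catch in a CI check than by reading robots.txt by hand.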

| Artifact | AEO | GEO | LLMO | SEO |
|---|---|---|---|---|
| FAQPage JSON-LD | ✓ | ✓ | ✓ | ✓ |
| Answer-first summaries | ✓ | ✓ | — | partial |
| Markdown mirrors | — | ✓ | ✓ | — |
| llms.txt | — | — | ✓ | — |
| Sitemaps + RSS | partial | partial | ✓ | ✓ |
| GPTBot/ClaudeBot access | — | ✓ | ✓ | — |

None of this guarantees citations. It makes citations possible. Without it, the question is moot.
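To make the first artifact on that list concrete, here is a minimal Python sketch that turns question-and-answer pairs into schema.org `FAQPage` JSON-LD. The property names (`mainEntity`, `Question`, `acceptedAnswer`, `Answer`, `text`) come from the schema.org vocabulary. The helper names and the sample pair are hypothetical, and any real output should still be run through a structured-data validator before it ships.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data: dict) -> str:
    """Serialize JSON-LD into the <script> element placed in the page's HTML."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so a stray "</script>" inside an answer cannot close the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    faq = faq_page_jsonld([
        (
            "Is Penfriend a publish-ready platform?",
            "No. Penfriend positions its output as a strong first draft for a team to edit.",
        ),
    ])
    print(to_script_tag(faq))
```

Keeping the data builder separate from the serializer lets the same dictionary feed both the HTML page and any other output (for example a headless API response) without re-deriving the Q&A pairs.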

How Does Mentionwell Differ From an AI Blog Writer Like Penfriend?

Mentionwell is a content engine, not a writing tool. The functional difference: Penfriend ends at the draft; Mentionwell treats the draft as one stage in an 11-stage pipeline that ends in a published, schema-equipped, AI-readable article on your domain (Source: Mentionwell features).

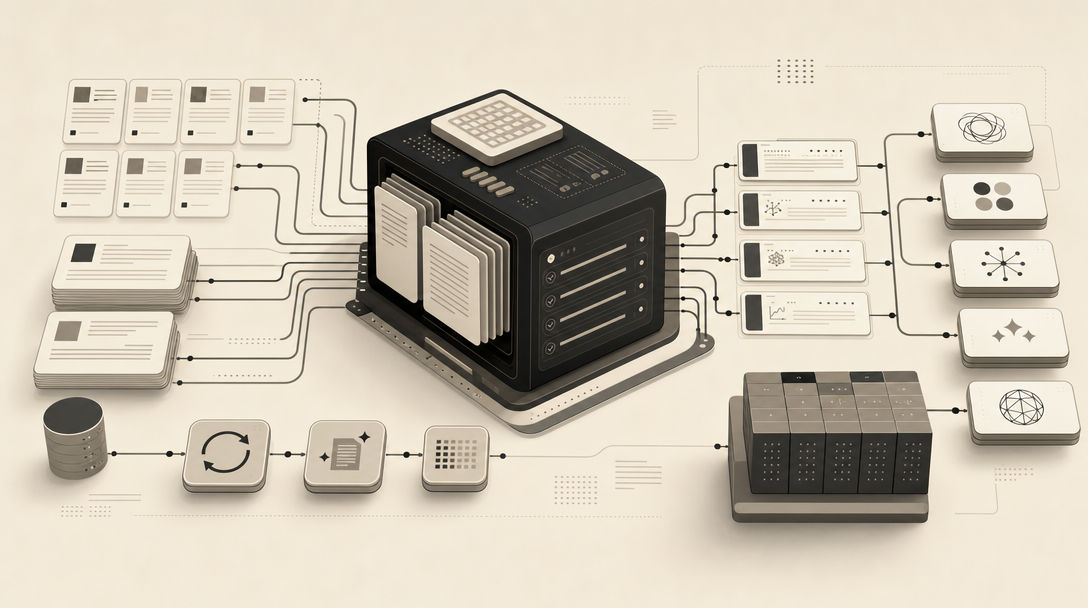

The Mentionwell workflow, per the company:

- Domain scan. Crawls the site and builds a baseline.

- Site profile. Captures voice, taxonomy, audience, pain points, pitch rules, and competitor exclusions.

- GEO baseline. Optionally runs prompts against AI answer engines to capture citations, fan-out queries, cited-page claims, and competitor gaps.

- Research. Pulls source-backed facts with attribution.

- Outline. Section-by-section, intent-aligned.

- Draft. Long-form generation in the site's voice.

- Editorial critic. A QA pass for tone, accuracy, and structure.

- Metadata. Title, description, OpenGraph, Twitter cards.

- FAQ. FAQPage-shaped Q&A rendered as schema.

- Embeddings. For internal-linking and retrieval.

- Image generation. Brand-consistent visuals.

Every article ships with four optimization layers — AEO, GEO, LLMO, and SEO — and the publishing layer adds Article and FAQPage JSON-LD, RSS, JSON Feed, sitemaps, a per-article markdown mirror, and a site-wide llms.txt (Source: Mentionwell).
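For readers who want to see what a site-wide llms.txt actually contains, here is a minimal Python sketch that renders one in the structure proposed at llmstxt.org: an H1 with the site name, a blockquote summary, then H2 sections holding lists of annotated links. This is a generic illustration, not Mentionwell's implementation; the `build_llms_txt` function, the site name, and the URLs are hypothetical.

```python
# Each link is (title, url, note); an empty note renders a bare link.
Link = tuple[str, str, str]


def build_llms_txt(site_name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Render an llms.txt document following the llmstxt.org proposal."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            lines.append(f"{entry}: {note}" if note else entry)
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_llms_txt(
        "Example Co Blog",
        "Guides on AI-assisted content publishing.",
        {
            "Articles": [
                ("What Is Penfriend AI?", "https://example.com/blog/penfriend.md",
                 "Review of a long-form AI drafting tool"),
            ],
            # The proposal treats a section named "Optional" as skippable context.
            "Optional": [
                ("About", "https://example.com/about.md", ""),
            ],
        },
    ))
```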

| Capability | Penfriend | Mentionwell |
|---|---|---|
| Long-form draft generation | Yes | Yes |
| Voice cloning | Yes | Yes (via site profile) |
| SEO structure | Yes | Yes |
| AEO direct-answer shaping | Partial | Yes |
| GEO baseline + competitor gap data | No | Yes |
| LLMO (llms.txt, markdown mirrors) | No | Yes |
| FAQPage + Article JSON-LD | No | Yes |
| CMS publishing | No | Yes |
| Archive refresh operations | No | Yes |
| Multi-site / programmatic SEO controls | No | Yes |

Penfriend writes the article. Mentionwell ships the article into a system designed to be cited.

Which Workflow Handles CMS Delivery, Headless Publishing, and Archive Refreshes?

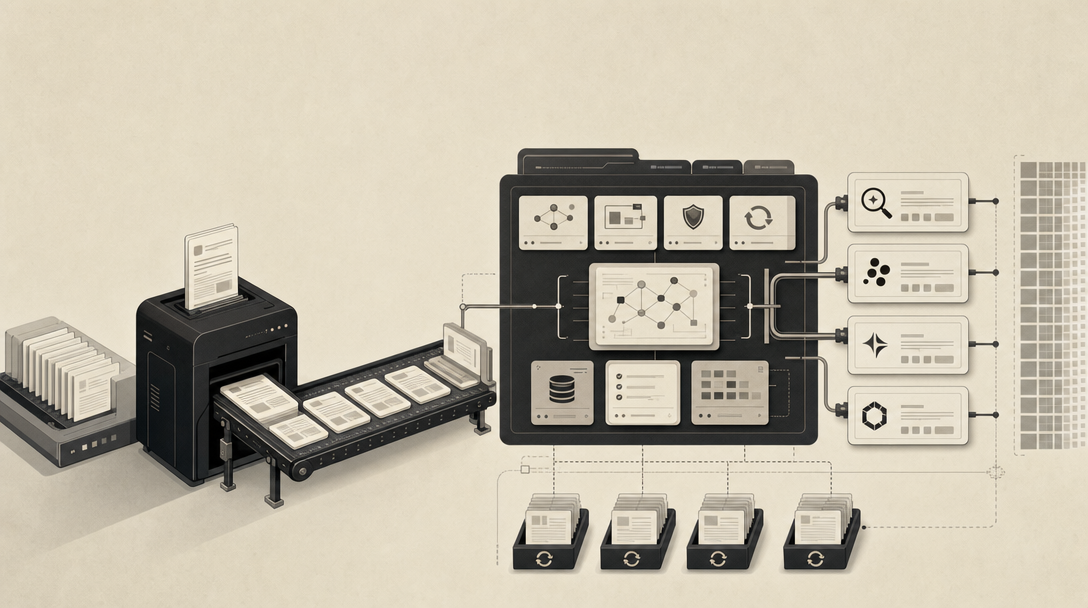

Once an article exists, the operational question is: how does it reach readers, crawlers, and AI engines reliably? This is where Mentionwell's delivery layer matters.

Per Mentionwell, articles can publish into WordPress, Webflow, Ghost, Shopify, or Notion, or serve from a public read-only API. The company exposes a mentionwell-reader endpoint and per-article markdown sources, which give AI crawlers a clean, parseable representation of every page (Source: Mentionwell).
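Mentionwell's exact endpoint layout is not described in the source material. One widely discussed convention, from the llmstxt.org proposal, is to serve a page's markdown version at the same URL with `.md` appended, using `index.html.md` for URLs that end in a slash. Here is a minimal sketch of that mapping, assuming that convention; the `markdown_mirror_url` function and the URLs are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def markdown_mirror_url(page_url: str) -> str:
    """Map an article URL to its markdown mirror under the '.md suffix' convention."""
    parts = urlsplit(page_url)
    path = parts.path or "/"
    if path.endswith("/"):
        path += "index.html.md"  # directory-style URLs get an explicit file name
    else:
        path += ".md"
    # Query strings and fragments are dropped: a mirror represents the canonical page.
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))


if __name__ == "__main__":
    print(markdown_mirror_url("https://example.com/blog/penfriend-review"))
    # → https://example.com/blog/penfriend-review.md
```

The point of a deterministic rule like this is that a crawler (or an llms.txt file) can find the markdown version of any page without a lookup table.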

The full delivery surface includes:

- Native CMS publishing into the five platforms above

- Headless API delivery for custom front-ends

- Markdown-source endpoints designed for GPTBot, ClaudeBot, and PerplexityBot

- RSS and JSON Feed for change detection

- Sitemap maintenance as the archive grows

- llms.txt and llms-full.txt at the parent domain

- Archive refresh operations to keep older articles current
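The feed items on that list are straightforward to picture in code. Here is a minimal sketch of a JSON Feed 1.1 document built with Python's standard library. The `Article` fields, the `build_json_feed` helper, and the sample data are hypothetical, and a production feed would usually also carry authors and fuller content.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Article:
    url: str
    title: str
    text: str  # plain-text body or excerpt
    published: datetime
    modified: datetime


def build_json_feed(title: str, home_url: str, feed_url: str, articles: list[Article]) -> str:
    """Serialize articles as a JSON Feed 1.1 document, most recently modified first."""
    ordered = sorted(articles, key=lambda a: a.modified, reverse=True)
    feed = {
        "version": "https://jsonfeed.org/version/1.1",
        "title": title,
        "home_page_url": home_url,
        "feed_url": feed_url,
        "items": [
            {
                "id": a.url,  # must be unique and stable; the canonical URL works
                "url": a.url,
                "title": a.title,
                "content_text": a.text,  # JSON Feed requires content_text or content_html
                "date_published": a.published.isoformat(),  # RFC 3339 when tz-aware
                "date_modified": a.modified.isoformat(),
            }
            for a in ordered
        ],
    }
    return json.dumps(feed, indent=2)


if __name__ == "__main__":
    sample = Article(
        url="https://example.com/blog/penfriend-review",
        title="What Is Penfriend AI?",
        text="Penfriend is a long-form blog drafting platform.",
        published=datetime(2025, 3, 1, tzinfo=timezone.utc),
        modified=datetime(2026, 1, 10, tzinfo=timezone.utc),
    )
    print(build_json_feed("Example Co Blog", "https://example.com/",
                          "https://example.com/feed.json", [sample]))
```

Emitting `date_modified` alongside `date_published` is what lets a refreshed article register as changed, which matters for the refresh work discussed next.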

Refresh is the silent operational requirement. AI answer engines tend to favor recent content, so an archive of 200 articles published two years ago and never updated is, for citation purposes, partially decommissioned. A content engine maintains the archive; a drafting tool does not.
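Deciding which archive pages to refresh first can start as simply as filtering and sorting by last-modified date. Here is a minimal sketch, assuming you track a last-modified date per article. The 365-day threshold is an arbitrary placeholder, not a documented recency cutoff for any answer engine, and the `ArchivedArticle` and `refresh_queue` names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ArchivedArticle:
    url: str
    last_modified: date


def refresh_queue(articles: list[ArchivedArticle], today: date,
                  max_age_days: int = 365) -> list[ArchivedArticle]:
    """Return articles not updated within `max_age_days`, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [a for a in articles if a.last_modified < cutoff]
    return sorted(stale, key=lambda a: a.last_modified)


if __name__ == "__main__":
    archive = [
        ArchivedArticle("https://example.com/blog/a", date(2023, 3, 1)),
        ArchivedArticle("https://example.com/blog/b", date(2025, 9, 15)),
        ArchivedArticle("https://example.com/blog/c", date(2022, 11, 20)),
    ]
    for article in refresh_queue(archive, today=date(2026, 1, 15)):
        print(article.url, article.last_modified)
```

In practice a team would likely weight this queue by traffic or citation value rather than age alone; sorting oldest-first is just the simplest defensible default.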

For deeper context on each surface, see:

- How to Show Up in Perplexity in 2026

- How to Show Up in Claude in 2026

- How to Show Up in Microsoft Copilot in 2026

- How to Show Up in Google Gemini in 2026

When Should a Team Choose Penfriend, Mentionwell, or Both?

Match the tool to the bottleneck.

Choose Penfriend when:

- The constraint is first-draft creation speed

- Voice capture across a small writing team is hard

- The team already has strong editorial QA, schema tooling, and CMS workflows

- The output target is classic SEO rankings, not AI citations

Choose Mentionwell when:

- The constraint is citation readiness in ChatGPT Search, Perplexity, Gemini, Claude, Copilot, or Google AI Overviews

- AEO, GEO, and LLMO structure need to ship by default

- CMS delivery and headless publishing are required

- Multi-site or programmatic SEO consistency matters

- Archive refreshes and llms.txt maintenance are not getting done manually

Use both when: Penfriend drafts feed a downstream pipeline that adds technical artifacts, editorial QA, schema, markdown mirrors, and AI-engine readability before publishing. In this configuration Penfriend solves drafting; the publishing engine solves everything else.

The honest decision rule: a beautiful draft is not a publishing strategy, and a publishing strategy is not a writing tool — operators who treat them as the same thing get neither.

If the goal in 2026 is to be cited — not just ranked — the writing layer is necessary but not sufficient. The infrastructure layer is where the citation work actually happens. Get My Site GEO Optimized to put both layers under one workflow.
