What Is AI Tracker, and What Did Surfer Actually Add?

Surfer did not name its new feature "GEO tracking." It launched AI Tracker, an add-on inside Surfer SEO that monitors how often large language models — ChatGPT, Gemini, Perplexity, SearchGPT, Google AI Overviews, and Google AI Mode — mention a brand, product, or topic in AI-generated answers (Source: Surfer). If you searched "surfer added geo tracking" expecting a generative-engine-optimization workflow, the actual product is narrower: a visibility dashboard for LLM responses.

The query is also semantically polluted, so it's worth disambiguating up front. None of the following are what Surfer SEO shipped:

- Golden Software Surfer, the contour and 3D mapping product used in geosciences.

- Geo Tracker, the Android GPS app for hikes and bike rides.

- Browser geolocation, Usermaven geo-tracking scripts, Flowcode QR location data, MaxMind GeoIP2, IP2Location, or OpenStreetMap layers.

AI Tracker is a measurement layer for answer-engine visibility, not a content workflow that produces the pages those engines cite. That distinction is the entire point of this article. Monitoring whether ChatGPT names your brand is operationally useful — it tells you where the gaps are. Closing those gaps requires a separate publishing system: research-grounded briefs, AEO/GEO/LLMO/SEO structure, CMS delivery, and archive refreshes.

Surfer's own documentation is explicit about scope: AI Tracker "tracks mentions of your content and brand in responses generated by large language models" (Source: Surfer docs). It is a dashboard. The work of becoming citable in the first place — entity-rich pages, glossary coverage, comparison content, programmatic libraries — sits outside the tool. That gap is what Mentionwell is built to close.

What Does AI Tracker Do?

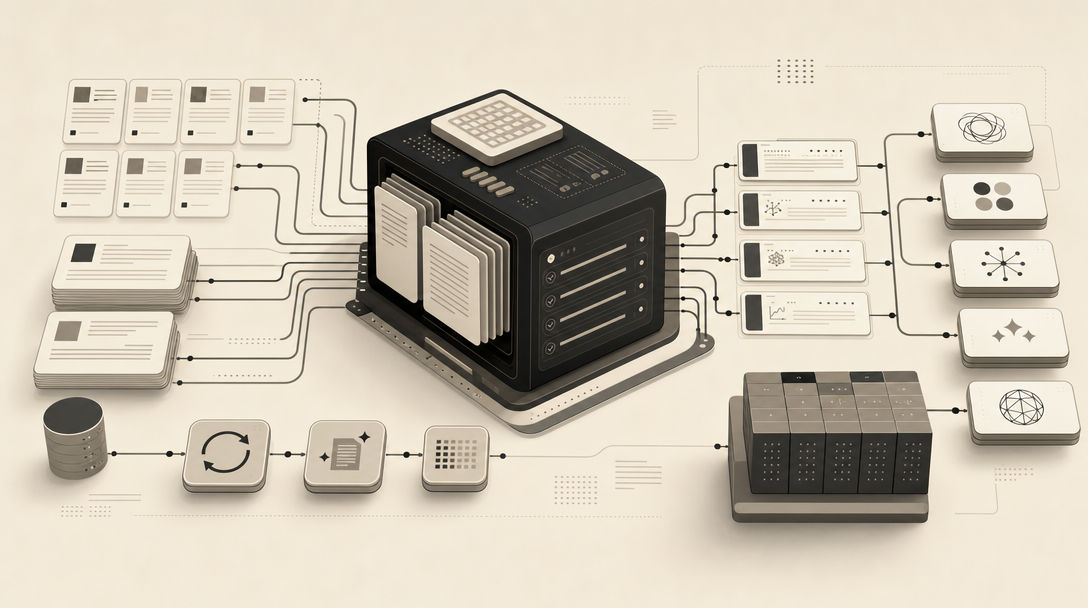

AI Tracker evaluates a set of prompts, runs them against multiple LLMs, and reports which domains are cited or referenced when those prompts are answered (Source: Surfer docs). Instead of pasting prompts into ChatGPT and copying answers into a spreadsheet, you get a structured view of who shows up.

The tool surfaces six core data types:

- Visibility score — an aggregate measure of how present your brand is across the tracked prompt set.

- Mention rate — how frequently your brand appears in AI answers.

- Average position — where your brand or page ranks within the model's response.

- Topics and prompts — the clusters and individual queries being monitored.

- Sources — the domains LLMs cite or recognize when generating answers.

- Competing domains — which competitors are being mentioned alongside or instead of you.
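To make two of these metrics concrete, here is a minimal sketch of how mention rate and average position could be computed from a batch of responses. Surfer does not publish its formulas, so the `PromptResult` record, the function names, and the sample data are all hypothetical illustrations, not Surfer's method.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PromptResult:
    """One AI answer to one tracked prompt (hypothetical record shape)."""
    prompt: str
    engine: str
    brand_position: Optional[int]  # 1-based rank of the brand in the answer, None if absent


def mention_rate(results: List[PromptResult]) -> float:
    """Share of responses in which the brand appears at all."""
    if not results:
        return 0.0
    mentioned = sum(1 for r in results if r.brand_position is not None)
    return mentioned / len(results)


def average_position(results: List[PromptResult]) -> Optional[float]:
    """Mean position over responses that mention the brand; None if never mentioned."""
    positions = [r.brand_position for r in results if r.brand_position is not None]
    if not positions:
        return None
    return sum(positions) / len(positions)


# Invented sample data for illustration only.
results = [
    PromptResult("best analytics tool", "chatgpt", 2),
    PromptResult("best analytics tool", "perplexity", None),
    PromptResult("alternatives to X", "gemini", 1),
    PromptResult("alternatives to X", "chatgpt", 3),
]

print(mention_rate(results))      # 0.75
print(average_position(results))  # 2.0
```

Averaging position only over responses that mention the brand is a design choice in this sketch; a tool could just as reasonably penalize absences, which is one reason scores from different trackers are not directly comparable.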

AI Tracker tells you who answer engines are listening to; it does not change who they listen to. That is a critical line for operators to draw. A dashboard that shows competitor citations is diagnostically valuable, but the remediation — writing the page that earns the next citation — happens elsewhere.

| Capability | Included in AI Tracker |
|---|---|
| Prompt-level visibility data | Yes |
| Competitor citation list | Yes |
| Source domain tracking | Yes |
| Brief generation from gaps | No |
| CMS publishing pipeline | No |
| Archive refresh workflow | No |
| Programmatic SEO coverage | No |

For B2B SaaS and agency teams already running AEO, GEO, and LLMO programs, AI Tracker fits as a measurement input. It does not replace the editorial pipeline that produces citation-shaped pages.

What Is the Difference Between AI Tracker Sources and Visible Brand Mentions?

A visible brand mention is when the LLM names your brand in the answer text a user reads. A Source is a domain the LLM cited or recognized when generating the answer, even if your brand name does not appear in the visible response (Source: Surfer docs). The two metrics measure different things, and conflating them produces bad decisions.

Consider the asymmetry:

- A page on your domain can be pulled into the model's context and shape the answer without your brand appearing in the response. You influenced the answer; you got no exposure.

- A competitor can be named in the visible answer without their domain being cited as a source. They got exposure; the model used someone else's content to describe them.

For AEO, GEO, LLMO, and SEO teams, this distinction maps to two different remediation paths:

- Low mention, high source presence — your content is being read by the model but not surfaced by name. Fix: stronger entity signals, clearer brand-as-subject framing, comparison and definition pages that make naming you the path of least resistance.

- High mention, low source presence — the model knows your brand but cites someone else to describe it. Fix: own the definitional pages (glossary, product features, integrations) so your domain becomes the cited source.
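The two remediation paths above can be sketched as a small decision function. This is an illustrative sketch only: the 0.5 threshold, the function name, and the two extra outcomes (both high, both low) are assumptions added for completeness and do not come from Surfer's documentation.

```python
def remediation_path(mention_rate: float, source_rate: float, threshold: float = 0.5) -> str:
    """Map mention and source presence to a next action.

    Rates are fractions between 0 and 1. The threshold is a hypothetical
    cut-off; pick one that matches your own baseline.
    """
    high_mention = mention_rate >= threshold
    high_source = source_rate >= threshold

    if high_source and not high_mention:
        # Content is read by the model but the brand is not named.
        return "strengthen entity signals and brand-as-subject framing"
    if high_mention and not high_source:
        # Brand is named but someone else's domain is cited.
        return "own the definitional pages so your domain is the cited source"
    if high_mention and high_source:
        # Not covered in the text above; added for completeness.
        return "maintain: named and cited"
    # Not covered in the text above; added for completeness.
    return "absent: no mentions and no source presence"


print(remediation_path(mention_rate=0.1, source_rate=0.8))
print(remediation_path(mention_rate=0.9, source_rate=0.2))
```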

How Does AI Tracker Work?

The setup flow is short. According to Surfer's launch documentation, you add AI Tracker to a Surfer plan, enter your brand, define focus topics and language, review or edit the suggested prompts, and let the tracker run (Source: Surfer).

Under the hood, Surfer says it improves accuracy with a self-consistency method: prompts are repeated several times across models, and results are averaged to reduce volatility from any single LLM response (Source: Surfer docs). That matters because LLM outputs are non-deterministic — a single query against ChatGPT this morning and the same query this afternoon can produce different citations.
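The idea of repeating a prompt and averaging the outcomes can be illustrated with a short sketch. Surfer does not publish its implementation, so the data shape (a list of true/false "brand was mentioned" flags per engine) and the function name are assumptions made for illustration.

```python
from statistics import mean
from typing import Dict, List, Tuple


def averaged_mention_rate(runs_by_engine: Dict[str, List[bool]]) -> Tuple[Dict[str, float], float]:
    """Average repeated runs of the same prompt to smooth out single-response noise.

    runs_by_engine maps an engine name to one boolean per repeated run:
    True if the brand was mentioned in that run.
    Returns per-engine rates and the unweighted mean across engines.
    """
    per_engine = {
        engine: mean(1.0 if hit else 0.0 for hit in runs)
        for engine, runs in runs_by_engine.items()
        if runs  # skip engines with no completed runs
    }
    overall = mean(list(per_engine.values())) if per_engine else 0.0
    return per_engine, overall


# Invented sample: the same prompt run four times per engine.
runs = {
    "chatgpt": [True, False, True, True],
    "perplexity": [False, False, True, False],
}
per_engine, overall = averaged_mention_rate(runs)
print(per_engine)  # {'chatgpt': 0.75, 'perplexity': 0.25}
print(overall)     # 0.5
```

A single run of the Perplexity prompt here could have reported either "mentioned" or "absent"; the average over four runs gives a steadier estimate, which is the point of repeating prompts.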

What Surfer does not disclose publicly is also worth naming:

- Model versions are not specified per run.

- Geography controls are not detailed in public documentation.

- Prompt sample size per evaluation is not published.

- Citation-extraction rules — how the system decides what counts as a citation versus an incidental mention — are not audited externally.

- Confidence thresholds for the visibility score are not exposed.

The operational implication is straightforward: use AI Tracker to identify prompt clusters where you are missing, then move that intelligence into a brief and publishing system that can act on it. The tool is the input; your content engine is the output. If your team is hitting the ceiling between "we see the gaps" and "we shipped the pages that close them," Get My Site GEO Optimized is the system built for exactly that handoff.

How Often Is AI Tracker Data Refreshed?

Surfer's own materials disagree on refresh cadence. The AI Tracker documentation states that data and charts are refreshed daily after a query is created (Source: Surfer docs). The launch blog lists weekly updates as a feature (Source: Surfer blog). A separate updates page refers to more frequent, daily LLM pulls. The cadence you actually get inside your plan is unclear from public materials alone.

| Source | Stated cadence |
|---|---|
| Surfer AI Tracker docs | Daily refresh |
| Surfer AI Tracker launch blog | Weekly updates |
| Surfer updates page | Daily LLM pulls (described as more frequent) |
| Surfer Rank Tracker (separate product) | Daily keyword tracking, weekly SEO reports |

Two operator implications follow.

First, verify the actual cadence inside your own Surfer account before building reporting on top of it. The visible refresh date in the dashboard is the only number that should drive internal SLAs.

Second, do not treat any AI visibility score as a real-time performance metric. LLM outputs drift between model updates, prompt re-rolls, and retrieval changes on the underlying engines. A weekly or daily snapshot is a trend signal, not a live KPI. Pair it with classic rank tracking and with data from Google Search Console and GA4 so that it serves as one input for triangulation rather than a standalone source of truth.
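One simple way to read snapshots as a trend rather than a live number is a trailing moving average. The sketch below is a generic smoothing technique, not something Surfer documents; the scores and the window size are invented for illustration.

```python
from typing import List


def moving_average(values: List[float], window: int = 7) -> List[float]:
    """Trailing moving average; early points use however many values exist so far."""
    if window < 1:
        raise ValueError("window must be at least 1")
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


# Invented daily visibility scores that swing from run to run.
daily_scores = [40, 55, 35, 50]
print(moving_average(daily_scores, window=2))  # [40.0, 47.5, 45.0, 42.5]
```

The raw series swings by up to 20 points between days, while the smoothed series stays within a few points, which is closer to what a trend report should show.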

Which Plans Include AI Tracker, and How Many Tracked AI Prompts Do They Include?

Surfer's AI Tracker documentation lists prompt limits by tier: Standard includes 25 tracked AI prompts, Pro includes 50, and Peace of Mind includes 100 (Source: Surfer docs).

| Plan | Tracked AI prompts |
|---|---|
| Standard | 25 |
| Pro | 50 |
| Peace of Mind | 100 |

For a single-product brand monitoring a narrow set of category queries, 25–50 prompts can be enough. For most B2B SaaS, agency, or programmatic SEO operations, the math gets thin quickly. Consider what a real prompt set looks like:

- Category prompts — "best [category] tool," "alternatives to [competitor]" — easily 10–20 alone.

- Comparison prompts — "X vs Y," "X vs Y vs Z" — multiplies with each competitor.

- Feature-level prompts — "how does [product] handle [feature]" — can run to 30+ for a mature product.

- Use-case prompts — "best tool for [job]" — another 10–20.

- Geographic and language variants — multiplies everything by 2–5x for international brands.

- Client portfolios — agencies need this entire structure per client.
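The arithmetic behind that list can be sketched in a few lines. The plan limits are the ones cited above from Surfer's docs; the per-category counts are hypothetical values picked from the ranges in the list, and the sketch assumes the prompt limit applies to the whole account, which Surfer's public materials may define differently.

```python
from typing import List

# Tracked-prompt limits as cited from Surfer's documentation.
PLAN_LIMITS = {"Standard": 25, "Pro": 50, "Peace of Mind": 100}


def prompts_needed(category: int, comparison: int, feature: int, use_case: int,
                   locale_multiplier: int = 1, clients: int = 1) -> int:
    """Total prompts: base set, multiplied by locale variants and by client count."""
    base = category + comparison + feature + use_case
    return base * locale_multiplier * clients


def plans_that_fit(total: int) -> List[str]:
    """Plans whose tracked-prompt limit covers the total."""
    return [plan for plan, limit in PLAN_LIMITS.items() if limit >= total]


# Hypothetical single-brand prompt set.
single_brand = prompts_needed(category=10, comparison=6, feature=30, use_case=10)
print(single_brand, plans_that_fit(single_brand))  # 56 ['Peace of Mind']

# Hypothetical agency: same structure, 2 locales, 10 clients.
agency = prompts_needed(10, 6, 30, 10, locale_multiplier=2, clients=10)
print(agency, plans_that_fit(agency))  # 1120 []
```

Even a modest single-brand set of 56 prompts already exceeds the Pro limit in this example, and the agency case exceeds every listed tier by an order of magnitude.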

For agencies running citation-shaped programs across 10 client sites, AI Tracker prompt limits become a budgeting constraint long before they become a strategy. The broader GEO platform market — Promptwatch, Profound, Peec AI, Otterly.AI, Rankscale and others — offers different prompt-volume economics, but none of those tools write the pages that close the gaps they identify.

AI Tracker vs Rank Tracker: Which Visibility Problem Are You Measuring?

These are two products solving two different problems, and Surfer keeps them separate for a reason. AI Tracker measures LLM and answer-engine visibility; Rank Tracker measures classic keyword positions in Google by targeted location (Source: Surfer docs).

| Dimension | AI Tracker | Rank Tracker |
|---|---|---|
| What it measures | Brand mentions, sources, prompt-level visibility in LLMs | Keyword positions in classic search |
| Engines covered | ChatGPT, Gemini, Perplexity, SearchGPT, Google AI Overviews, Google AI Mode | Google (by targeted location) |
| Update cadence | Daily / weekly (inconsistent in materials) | Daily tracking, weekly reports |
| Unit of measurement | Prompts | Keywords |
| Use case | AEO, GEO, LLMO diagnostics | Classic SEO performance |

Treat them as complementary, not competing. Classic rankings tell you whether your page is reachable through SERP-driven demand. AI tracking tells you whether answer engines are willing to cite or name you when users skip the SERP entirely.

A practical rule: if your category still has meaningful click-through from organic search, Rank Tracker remains essential. If your category increasingly resolves in AI Overviews, ChatGPT, or Perplexity without a click, AI Tracker becomes essential. Most B2B SaaS categories now have both dynamics happening at once, which is why AEO, GEO, LLMO, and SEO need to share one editorial pipeline rather than living in separate dashboards. For a fuller breakdown of how those workflows differ, see "AEO vs GEO vs LLMO: Which Workflow Fits Your Team?"

What Happens After Competitors Are Cited and Your Domain Is Not?

This is the question Surfer's AI Tracker is not built to answer. The dashboard tells you that ChatGPT cited Competitor A for the prompt "best B2B analytics platform" and Perplexity cited Competitor B for "alternatives to [your product]." It does not tell you what to publish, where to publish it, how to structure it, or how to refresh it.

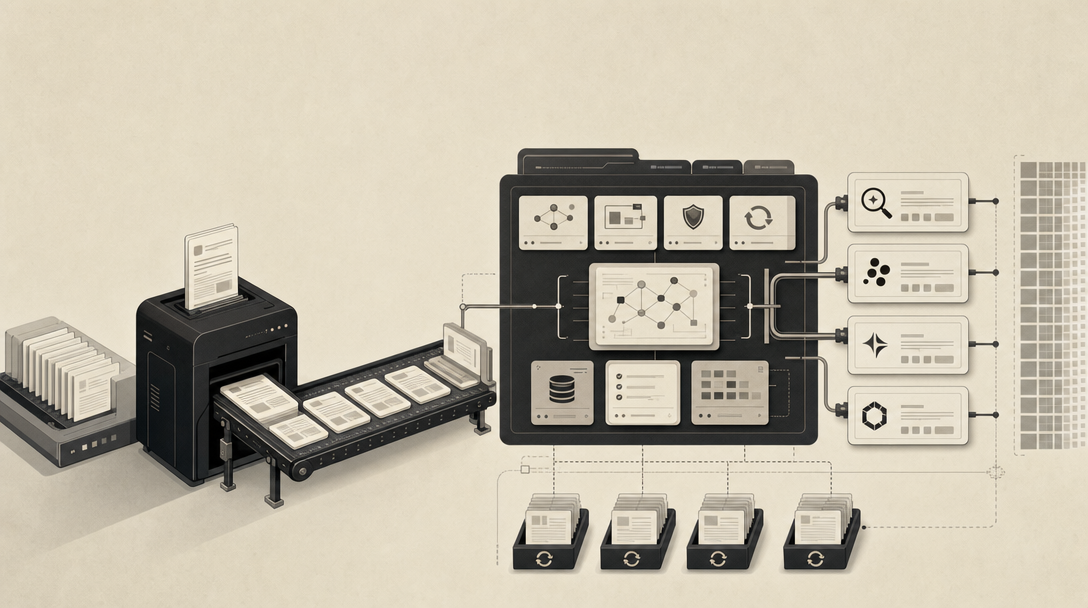

The operational gap looks like this:

1. Prompt gap identified: AI Tracker shows you are missing on 14 high-value prompts.

2. Brief required: each gap needs a research-grounded brief with entity coverage, direct-answer structure, and source attribution.

3. Page type decided: glossary page, comparison page, feature page, integration page, or programmatic SEO library entry.

4. Draft produced: citation-shaped, AEO/GEO/LLMO/SEO structured, not generic blog filler.

5. CMS publishing: into your existing stack, headless or otherwise.

6. Citation movement monitored: back into AI Tracker or equivalent.

7. Archive refresh scheduled: because LLM training and retrieval favor recency signals.

Surfer covers step 1. The market is crowded with tools that also stop at step 1 or 2: Promptwatch, Profound, ZipTie, Peec AI, Scrunch AI, Otterly.AI, Rankscale, LLM Pulse, Rankshift, AIclicks, AthenaHQ, Semrush AI visibility, Bluefish AI, and Evertune. Each has different strengths in onboarding speed, prompt customization, citation analysis, or enterprise reporting. None of them produce the pages that close the gaps they identify.

The tracking-heavy landscape is real and growing — but a dashboard that shows you are absent does not, by itself, make you present.

This is the pattern teams keep hitting: a measurement budget is approved, a tool is purchased, prompt gaps are catalogued, and then the catalogue sits in a Notion doc because the editorial pipeline to act on it does not exist. The bottleneck moves from visibility to production.

How Should Teams Turn Prompt Gaps Into Publishable Content?

The workflow that converts AI Tracker output (or any GEO platform's output) into citation-ready pages has nine repeatable steps:

1. Cluster prompts by intent. Group tracked prompts into definitional, comparative, transactional, and use-case clusters. Different intents map to different page types.

2. Identify missing owned pages. For each cluster, check whether you have an indexable page that directly answers the prompt. Most teams discover 30–60% of their tracked prompts have no corresponding owned page.

3. Map cited competitor sources. For each gap, list which domains ChatGPT, Perplexity, and Gemini are citing. This is your editorial benchmark.

4. Choose the right page type. Glossary pages for terms (see What Is GEO in 2026?, What Is LLMO in 2026?, What Is AEO in 2026?). Comparison pages for "X vs Y" prompts. Feature or integration pages for product-level prompts. Programmatic libraries for high-volume long-tail clusters.

5. Draft answer-first sections. Every H2 opens with a 1–2 sentence direct answer that is self-contained and citable.

6. Add entity and source clarity. Name every entity by full proper name on first mention. Attribute every statistic to its source. LLMs use entity co-occurrence to decide what to surface.

7. Publish through the existing CMS or headless stack. No replatforming required.

8. Monitor citation movement. Re-run tracked prompts after 2–4 weeks to see whether the new page is being picked up as a source or visible mention.

9. Refresh the archive on cadence. Update high-value pages every 60–90 days. Recency is a retrieval signal across most major engines.
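The first two steps of this workflow (cluster by intent, then find prompts with no owned page) can be sketched with simple keyword rules. This is a deliberately naive illustration: the keyword lists, function names, and sample URL are hypothetical, and a production system would likely need something more robust than substring matching.

```python
from typing import Dict, List

# Hypothetical keyword rules, checked in order; anything unmatched falls to "use-case".
INTENT_RULES = [
    ("comparative", (" vs ", "alternatives to", "compare")),
    ("definitional", ("what is", "what are", "define")),
    ("transactional", ("pricing", "buy", "cost")),
]


def classify_intent(prompt: str) -> str:
    """Assign a prompt to the first intent whose marker appears in it."""
    text = f" {prompt.lower()} "  # pad so " vs " matches at the edges
    for intent, markers in INTENT_RULES:
        if any(marker in text for marker in markers):
            return intent
    return "use-case"


def cluster_prompts(prompts: List[str]) -> Dict[str, List[str]]:
    """Group prompts by classified intent."""
    clusters: Dict[str, List[str]] = {}
    for prompt in prompts:
        clusters.setdefault(classify_intent(prompt), []).append(prompt)
    return clusters


def missing_pages(prompts: List[str], owned_pages: Dict[str, str]) -> List[str]:
    """Prompts with no owned page mapped to them (owned_pages: prompt -> URL)."""
    return [p for p in prompts if p not in owned_pages]


tracked = ["What is GEO?", "Acme vs Globex", "Acme pricing", "best tool for onboarding"]
owned = {"What is GEO?": "https://example.com/glossary/geo"}  # placeholder URL

print(cluster_prompts(tracked))
print(missing_pages(tracked, owned))
```

The output of `missing_pages` is the list that feeds the later steps: each uncovered prompt becomes a candidate brief, with its intent cluster suggesting the page type.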

This is the workflow Mentionwell automates. It is also the workflow most teams attempt manually after their tracker shows them the gap — and the workflow that breaks first under multi-site or programmatic scale. Platform-specific guides on how to show up in ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews cover the engine-specific signals that should inform your brief structure.

When Is a GEO Tracker Enough, and When Do You Need a Content Engine?

A GEO tracker is enough when your job is measurement, benchmarking, prompt monitoring, or executive reporting. If you have a competent in-house editorial team already producing citation-shaped content at the volume your category demands, AI Tracker, Profound, Peec AI, or Scrunch AI will give you the visibility data you need to prioritize. The dashboard becomes the analytics layer on top of an editorial system that already works.

A content engine is needed when your job is to build the pages answer engines can cite, at consistent quality, across one site or hundreds. The operational requirements look different from a tracker:

| Requirement | GEO tracker | Content engine |
|---|---|---|
| Measure prompt visibility | Yes | No |
| Identify competitor citations | Yes | No |
| Generate research-grounded briefs | No | Yes |
| Produce AEO/GEO/LLMO/SEO-structured drafts | No | Yes |
| Publish into existing CMS or headless stack | No | Yes |
| Run programmatic coverage at scale | No | Yes |
| Maintain brand consistency across multi-site portfolios | No | Yes |
| Refresh archives on cadence | No | Yes |

Surfer added AI Tracker as a checkbox inside an SEO suite. Mentionwell is an automated blog engine built around the workflow that turns tracker output into citable pages. That is the structural difference. A checkbox extends an existing product. An engine replaces a manual pipeline.

For B2B SaaS marketing teams, agencies running multi-client portfolios, growth and content leaders maintaining existing CMS setups, and operators building programmatic SEO libraries around AEO, GEO, and LLMO terminology, the decision is rarely "tracker or no tracker." It is "what produces the pages after the tracker tells us where we are absent." If that production capacity does not exist internally, no amount of dashboard refinement will close the gap.

If your tracker has already shown you the gaps and the editorial pipeline to close them does not exist yet, Get My Site GEO Optimized.

Sources

- Surfer Map Properties Training Video - Golden Software Support (support.goldensoftware.com)

- [PDF] Surfer User's Guide - Golden Software (downloads.goldensoftware.com)

- [PDF] Surfer Quick Start Guide (surfer.pl)

- HOW TO USE SURFER FOR MAPS AND MODELS - PART 1 (www.youtube.com)

- Creating Boreholes & Cross-Sections in Grapher & Surfer (support.goldensoftware.com)

- AI Tracker | Surfer (docs.surferseo.com)