What Is HubSpot's AEO Grader?

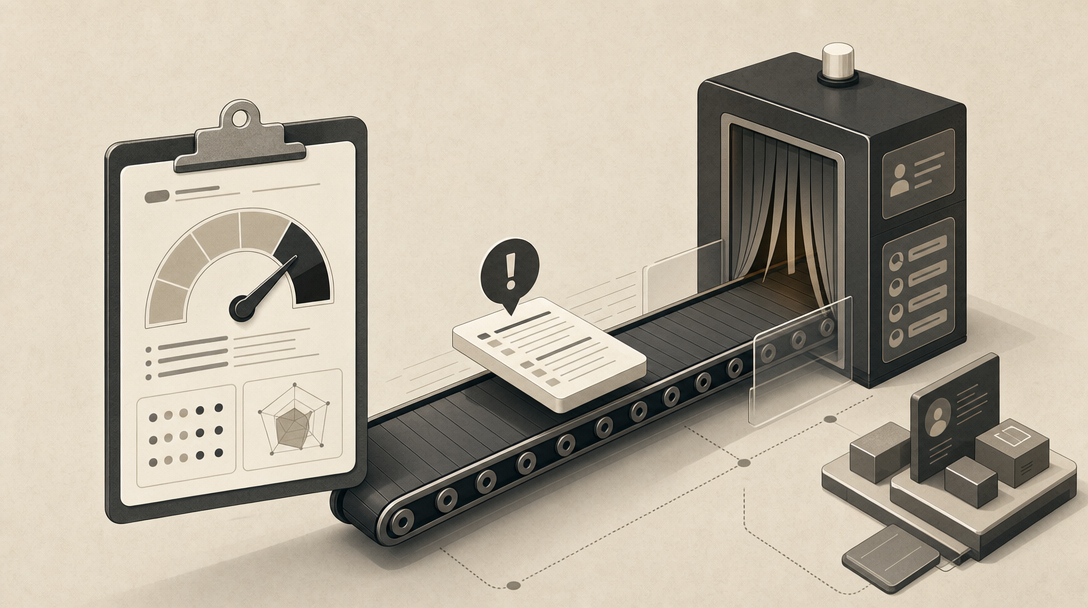

HubSpot's AEO Grader is a free, one-time Answer Engine Optimization diagnostic. It scores how ChatGPT, Perplexity, and Gemini characterize your brand, largely from what those models learned in training, and returns a single composite score out of 100 across five dimensions. It is a snapshot tool, not a content engine: it tells you whether AI systems describe you accurately, favorably, and competitively, then leaves the remediation work to you.

The product exists because AI search has flattened the consideration set. When a buyer asks ChatGPT "what's the best CRM for a 50-person sales team," the model either names you in the synthesized answer or it does not — there is no second page of results to scroll. HubSpot positions the Grader as the entry point to that visibility question, with HubSpot Academy describing it as a tool that "scans real AI-generated responses to determine your brand's appearance, sentiment, and competitive position" (Source: HubSpot Community).

The HubSpot AEO Grader is a diagnostic that scores AI visibility; it does not produce, publish, or refresh the content needed to fix what it finds. That distinction matters because the Grader's natural upsell path leads to HubSpot AEO at $50/month and, beyond that, to Marketing Hub Pro+ — a CRM purchase, not a content production system. For teams without HubSpot in their stack, the score is useful as a starting line and nothing more.

The five-dimension framing is also less settled than HubSpot's marketing implies. HubSpot-authored sources emphasize brand recognition, sentiment, competitive position, source authority, and contextual accuracy. Third-party reviews such as Scribe's describe a different five: structured data, FAQ schema, content clarity, E-E-A-T, and content hierarchy. Until HubSpot publishes how the composite is weighted, treat the score as directional.

How Do You Use HubSpot's AEO Grader to Measure Brand Visibility in AI Tools?

To run the AEO Grader, you enter four inputs — company name, location, industry, and product or service — and submit a short form to access the full results. HubSpot then sends that brand information to ChatGPT, Perplexity, and Gemini, asks each model how it characterizes the brand, and returns a composite score, a per-dimension breakdown, and a written interpretation.

The workflow is intentionally light:

- Enter your own brand or a competitor's into the input form at hubspot.com/aeo-grader.

- Add location, industry, and the specific product or service you want evaluated.

- Submit the form (HubSpot uses this as a lead-capture step).

- Read the score out of 100, the five-dimension breakdown, and the narrative interpretation.

- Optionally, repeat for 2–5 direct competitors to build a comparison set, as recommended by ALM Corp.

Because HubSpot lets you enter any company, the most useful application is not your own grade in isolation — it is the spread between you and the three or four competitors most likely to appear in the same buyer prompt. A 62 on its own is meaningless; a 62 against competitors scoring 78 and 81 tells you the consideration set is moving without you.
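The spread comparison is simple arithmetic once you have the numbers. The sketch below assumes you re-run the Grader once per brand and copy each score by hand; the brand names and scores are hypothetical and echo the 62/78/81 example. It ranks the set and reports your gap to both the leader and the median competitor.

```python
from statistics import median


def score_spread(your_brand: str, scores: dict[str, int]) -> dict[str, object]:
    """Compare one brand's Grader score against a hand-collected competitor set.

    `scores` maps brand name -> score out of 100, typed in manually after
    running the Grader once per brand (hypothetical workflow, no export assumed).
    """
    if your_brand not in scores:
        raise KeyError(f"{your_brand!r} missing from scores")
    competitors = {b: s for b, s in scores.items() if b != your_brand}
    if not competitors:
        raise ValueError("need at least one competitor score")

    yours = scores[your_brand]
    leader, leader_score = max(competitors.items(), key=lambda item: item[1])
    # Rank 1 = highest score in the whole set, including your own brand.
    ranking = sorted(scores, key=scores.get, reverse=True)
    return {
        "rank": ranking.index(your_brand) + 1,
        "set_size": len(scores),
        "leader": leader,
        "gap_to_leader": leader_score - yours,
        "gap_to_median_competitor": median(competitors.values()) - yours,
    }


# Hypothetical scores mirroring the example in the text.
print(score_spread("YourBrand", {"YourBrand": 62, "CompetitorA": 78, "CompetitorB": 81}))
```

Reporting the median gap alongside the leader gap keeps a single outlier competitor from dominating the read.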

Reddit threads in r/DigitalMarketing and LinkedIn posts in late 2025 show broad marketer interest in the tool, mostly as a curiosity check rather than a production input. Treat it the same way: a useful awareness signal that justifies a real workflow, not a substitute for one.

How Is HubSpot AEO Grader Different From HubSpot AEO?

The Grader is a free, one-time snapshot. HubSpot AEO is the ongoing monitoring product at $50/month that tracks specific prompts daily across ChatGPT, Perplexity, and Gemini, compares competitors, and reports core metrics week over week. Conflating the two is a frequent mistake in current SERP coverage.

Here is how the two products separate cleanly:

| Capability | HubSpot AEO Grader (Free) | HubSpot AEO ($50/month) | Marketing Hub Pro / Enterprise / Pro+ |
|---|---|---|---|
| Cost | Free | $50/month (28-day trial per ContentMonk) | Bundled with Marketing Hub tier |
| Cadence | One-time snapshot | Daily prompt runs | Daily prompt runs |
| Engines | ChatGPT, Perplexity, Gemini | ChatGPT, Perplexity, Gemini | ChatGPT, Perplexity, Gemini |
| Prompt set | Auto-generated from inputs | User-defined tracked prompts | CRM-suggested prompts based on industry and segments |
| Competitor comparison | Manual (re-run per competitor) | Built-in, ongoing | Built-in, ongoing |
| Week-over-week tracking | No | Yes | Yes |
| Recommendations | General | Prioritized | Tools to act on recommendations (Pro+) |
| Content creation | No | No | No |

The Pro+ distinction matters. HubSpot's AEO marketing guide says Marketing Hub Pro+ adds "the tools to act on" recommendations — meaning landing pages, email, and CRM-driven workflows already inside HubSpot. It does not mean the platform writes citation-ready blog content, builds glossary pages, or refreshes archived posts. Content production is still on you.

If your stack is already HubSpot, AEO at $50/month is a low-friction add. If it is not, you are buying a CRM to monitor visibility you still have to fix elsewhere. For teams that need a publishing pipeline structured for citations across ChatGPT, Perplexity, Gemini, Copilot, and Google AI Overviews, not just a dashboard that grades the gap, the next step is getting the site itself GEO optimized.

ContentMonk's review puts the trade-off bluntly: HubSpot AEO's strength is CRM-powered prompts and three-engine tracking; its gap is that it "does not include content creation or SEO tracking."

Which AI Engines and Model Versions Does HubSpot AEO Grader Use?

HubSpot identifies ChatGPT, Perplexity, and Gemini as the three engines the Grader queries, but the underlying model versions and data freshness are not fully transparent. A HubSpot-page snippet references GPT-5.2 for the free analysis. A separate HubSpot Community thread states the tool uses OpenAI's GPT-4o with a knowledge cutoff. The two statements conflict unless the tool switched models over time or uses different models for different steps, and HubSpot has not publicly reconciled them. Treat the GPT-5.2 reference cautiously: it may reflect a newer model, a draft, a typo, or an internal label rather than a confirmed version.

The deeper issue is corpus type. ChatGPT and Gemini results in the Grader appear to reflect training-data characterizations — what the model already knows about your brand from its pretraining corpus. Perplexity, by design, can blend training data with real-time web search, which means a Perplexity score may shift faster than the others when you publish or refresh content. The same HubSpot Community thread notes that Perplexity "differs because it can use internet search for real-time information" (Source: HubSpot Community).

What you can conclude:

- The Grader queries ChatGPT, Perplexity, and Gemini.

- At least one of those engines (Perplexity) likely incorporates live retrieval.

- The exact GPT version, query timing, and refresh cadence are not consistently disclosed.

What you cannot conclude from the available sources: whether identical inputs produce identical scores across runs, whether the system caches recent grades, or how the Grader handles model drift between OpenAI releases. Treat the score as a directional read on visibility, not a reproducible measurement.

What Does the Score Out of 100 Mean?

HubSpot says the Grader uses deterministic scoring with schema validation and retry logic to produce a total score out of 100 calculated across five dimensions. Deterministic scoring means the math on the back end — once the model returns its characterization — is consistent. It does not mean the underlying AI answers are deterministic, which they are not.
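HubSpot has not published its scoring code or weights, so the following is only a hypothetical illustration of what "deterministic scoring with schema validation and retry logic" can mean in general. A non-deterministic model call (stubbed as a hypothetical `ask_model` callable) returns per-dimension ratings; a validator rejects malformed output; the call is retried up to a fixed limit; and a fixed formula turns valid ratings into a score out of 100. The dimension names follow HubSpot's Grader page; the 0-10 rating scale and equal weights are assumptions.

```python
from typing import Callable

# Dimension names follow HubSpot's Grader page; equal weights are an assumption.
DIMENSIONS = ("recognition", "sentiment", "competitive_position", "authority", "accuracy")
WEIGHTS = {d: 1 / len(DIMENSIONS) for d in DIMENSIONS}


class InvalidResponse(ValueError):
    """Raised when a model response fails the schema check."""


def validate(payload: object) -> dict[str, float]:
    """Schema check: every dimension present, numeric, and within an assumed 0-10 range."""
    if not isinstance(payload, dict):
        raise InvalidResponse("payload is not an object")
    ratings = {}
    for dim in DIMENSIONS:
        value = payload.get(dim)
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            raise InvalidResponse(f"{dim} missing or not numeric")
        if not 0 <= value <= 10:
            raise InvalidResponse(f"{dim} out of range: {value}")
        ratings[dim] = float(value)
    return ratings


def composite_score(ask_model: Callable[[], object], max_attempts: int = 3) -> int:
    """Retry the non-deterministic model call until its output validates,
    then apply a fixed, deterministic formula to produce a 0-100 score."""
    last_error = None
    for _ in range(max_attempts):
        try:
            ratings = validate(ask_model())
        except InvalidResponse as err:
            last_error = err
            continue
        weighted = sum(WEIGHTS[d] * ratings[d] for d in DIMENSIONS)  # 0-10 scale
        return round(weighted * 10)  # rescale to 0-100
    raise RuntimeError(f"no valid response after {max_attempts} attempts") from last_error


# Stubbed responses: the first is malformed and triggers a retry.
responses = iter([
    {"recognition": "high"},
    {"recognition": 8, "sentiment": 7, "competitive_position": 5, "authority": 6, "accuracy": 9},
])
print(composite_score(lambda: next(responses)))  # -> 70
```

The same valid ratings always produce the same score, but a fresh model call can return different ratings, which is why the composite is only as stable as the answers feeding it.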

The scoring-dimension question is unresolved across the public corpus:

| Source | Five Dimensions Described |
|---|---|
| HubSpot AEO Grader page | Brand-level signals: recognition, sentiment, competitive position, authority, accuracy |
| HubSpot Academy (community video) | Appearance, sentiment, competitive position |
| Scribe (third-party review) | Structured data completeness, FAQ schema, content clarity, E-E-A-T, content hierarchy |

These are not the same framework. HubSpot's own descriptions emphasize brand-perception outcomes — how the model talks about you. Scribe's description reads like a technical SEO audit — what is on your pages. Both could be inputs into a single composite, but no public source confirms the actual weighting.

What this means operationally: a 72/100 tells you the engines do not characterize you cleanly, but it does not tell you whether the gap is on the brand side (low recognition, weak entity signals, mixed sentiment) or the page side (missing schema, thin FAQs, unclear hierarchy). A weak AEO Grader score is a prompt to investigate, not a prescription. The remediation depends entirely on which dimension is dragging the composite down, and the Grader's general recommendations rarely make that diagnosis specific enough to act on directly.
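The brand-side versus page-side split becomes concrete once you have per-signal numbers. This minimal sketch assumes you record your own 0-100 ratings for each signal (from manual prompt tests or audits). The signal names and the grouping are hypothetical: they mirror this section's examples rather than HubSpot's taxonomy. The sketch reports which side is weaker and the weakest signal within it.

```python
from statistics import mean

# Hypothetical grouping mirroring this section's examples; not HubSpot's taxonomy.
SIGNAL_GROUPS = {
    "brand": ("recognition", "entity_signals", "sentiment"),
    "page": ("schema", "faq_coverage", "hierarchy"),
}


def diagnose(ratings: dict[str, float]) -> tuple[str, str]:
    """Return (weaker group, weakest signal within that group) from 0-100 ratings."""
    group_means = {
        group: mean(ratings[signal] for signal in signals)
        for group, signals in SIGNAL_GROUPS.items()
    }
    weaker_group = min(group_means, key=group_means.get)
    weakest_signal = min(SIGNAL_GROUPS[weaker_group], key=ratings.__getitem__)
    return weaker_group, weakest_signal


# Hypothetical brand the models know well but cannot extract answers from.
print(diagnose({
    "recognition": 80, "entity_signals": 75, "sentiment": 70,
    "schema": 30, "faq_coverage": 55, "hierarchy": 60,
}))  # -> ('page', 'schema')
```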

What Does the Grader Not Show You?

The Grader does not show you the exact prompts it ran, the full model responses, the sources or citations the models referenced, when the queries were sampled, or how the score would shift if you re-ran it tomorrow. For sophisticated SEO and growth teams, those omissions are precisely the data needed to act.

Specific blind spots, drawn from HubSpot's own materials and third-party reviews:

- Prompt transparency. You see a score and an interpretation, not the literal prompts sent to ChatGPT, Perplexity, and Gemini. Without prompts, you cannot replicate or stress-test the result.

- Response transcripts. Scribe notes the Grader "does not show how a brand appears in specific LLM responses over time." You get aggregate scoring, not the sentences the models actually produced.

- Sources and citations. The Grader scores characterization but does not list which web sources the models drew from. Citation provenance — the actual URLs being quoted — is the most actionable AEO signal, and it is absent.

- Query timing. As a HubSpot Community thread confirms, the tool does not surface when the underlying queries ran. A 600-query sample spread from January to September could produce a very different read than 600 queries run yesterday, and you cannot tell which you got.

- Repeatability. No public source — not Scribe, ContentMonk, Skillaeo, INSIDEA, Blogarama, ALM Corp, AirOps, Profound, Conductor, or Scrunch AI — has published a controlled test of running identical inputs through the Grader twice to measure score stability.

- Prompt sensitivity. Small wording changes in industry or product description appear to move scores. The Grader does not quantify that sensitivity.

- Competitor-swap effects. When you change which competitors a model is comparing you against, the framing shifts. The Grader does not isolate that variable.

- Model drift. OpenAI, Google, and Perplexity update the models behind ChatGPT, Gemini, and Perplexity frequently. The Grader does not version-stamp results.
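No public source has run the repeatability test described in this list, but it is easy to set up yourself. This minimal sketch assumes you re-run the Grader several times with identical inputs and type each score into a list; the scores shown are placeholders, not measured results. It reports the mean, the population standard deviation, and the min-max range. It flags the result as unstable when the range exceeds a tolerance you choose before looking at the data (the default of 5 points is arbitrary).

```python
from statistics import mean, pstdev


def stability_report(scores: list[int], tolerance: int = 5) -> dict:
    """Summarize repeated Grader runs made with identical inputs.

    `tolerance` is an arbitrary threshold, in points, for how much spread
    you are willing to treat as noise.
    """
    if len(scores) < 2:
        raise ValueError("need at least two runs to measure stability")
    spread = max(scores) - min(scores)
    return {
        "runs": len(scores),
        "mean": round(mean(scores), 1),
        "stdev": round(pstdev(scores), 2),
        "range": spread,
        "stable": spread <= tolerance,
    }


# Placeholder scores: five runs of the same brand with the same inputs.
print(stability_report([64, 61, 66, 62, 63]))
```

Logging the date of each run next to its score also gives you the version stamp the Grader itself does not provide.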

Skillaeo characterizes the Grader as a "quick signal and lightweight awareness tool" rather than a deeper diagnosis tool — an accurate framing. Use it to prove visibility is uneven; use something else to fix it.

Is It the Best Answer Engine Optimization Tool for Marketers?

The Grader is the best free awareness tool for confirming that AI engines describe your brand inconsistently. It is not the best tool for fixing that, because it does not produce, publish, or refresh the content that earns citations.

The clean decision criteria:

| Use case | Grader is right | Grader is not enough |
|---|---|---|
| One-time competitor benchmark | ✓ | |
| Internal proof that AI answers vary by engine | ✓ | |
| Quick consideration-set check before a board meeting | ✓ | |
| Ongoing weekly visibility monitoring | | Needs HubSpot AEO at $50/month or an alternative |
| Content production (definitions, comparisons, FAQs) | | Out of scope entirely |
| CMS publishing and archive refreshes | | Out of scope entirely |
| Programmatic SEO across hundreds of pages | | Out of scope entirely |
| Multi-site agency workflows | | Out of scope entirely |
| Schema, internal linking, and E-E-A-T execution | | Out of scope entirely |

HubSpot's strength is diagnosis and CRM-connected monitoring. The weakness is that diagnosis without execution is a deck, not a strategy. Most of the third-party comparison pages — Scribe, ContentMonk, Skillaeo, INSIDEA, Blogarama, ALM Corp, AirOps, Profound, Conductor, Scrunch AI — point at this same gap, though they are vendor-authored and should be read with that bias in mind.

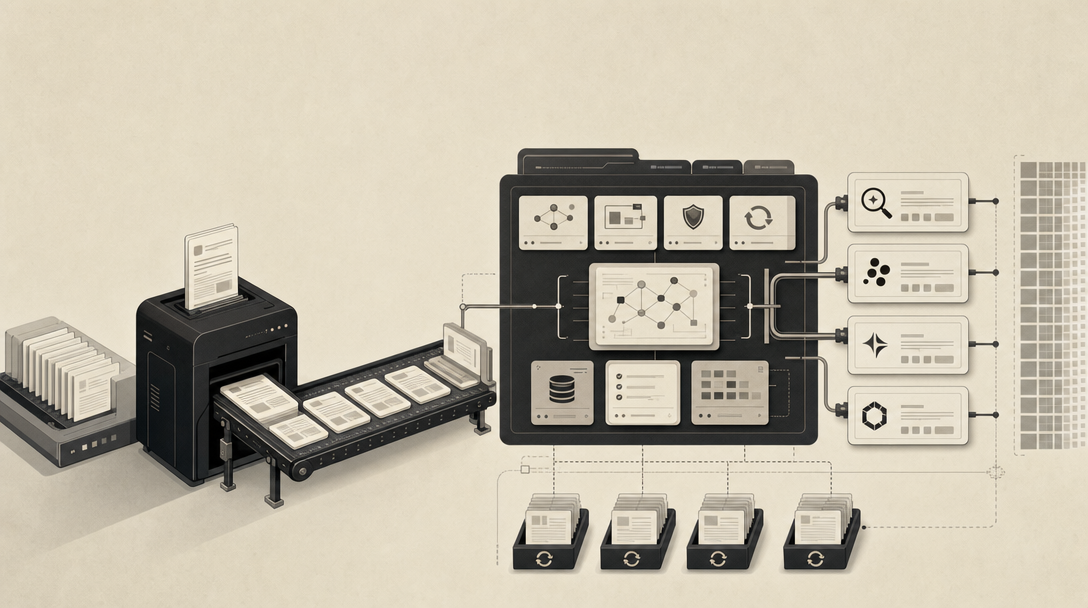

What Should You Do After a Weak AEO Score?

Treat the score as a hypothesis, not a verdict. The work that earns citations happens on your own pages, not inside HubSpot's dashboard.

A practical post-grade operating model:

- Verify the diagnosis. Manually test the prompts your buyers actually use in ChatGPT, Perplexity, Gemini, Google AI Overviews, Copilot, and Claude. Note which engines describe you well, which describe you incorrectly, and which omit you entirely.

- Identify the failing signal. Is the gap brand recognition (the model does not know who you are), sentiment (it knows you but characterizes you negatively or vaguely), source authority (it cites competitors but not you), or answer structure (your pages exist but cannot be extracted into a direct answer)?

- Map gaps to citable assets. Each failure mode has a corresponding content type: definition pages for recognition gaps, comparison pages for competitive position, customer-evidence pages for sentiment, FAQ and schema work for extraction, and entity-consistent author and organization markup for E-E-A-T.

- Build the assets at production scale. A handful of polished pages will not move a brand-recognition signal across three models. You need consistent, repeated, cross-linked coverage of the entities and questions that define your category.

- Publish through your existing CMS. WordPress, Webflow, headless, or custom — citation-readiness is a content and structure problem, not a platform migration problem.

- Refresh the archive. Outdated pages drag composite signals down. Republishing with current data, year stamps, and tightened direct-answer openings is one of the highest-leverage moves available.

- Re-test on a cadence. Run the Grader again at 30, 60, and 90 days, and run manual prompts in parallel. Track which engines respond first to your changes; Perplexity, because it can draw on live retrieval, is the likeliest candidate.

This is the operating loop the Grader assumes you already have. Most teams do not.
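The re-test step is the easiest part of that loop to automate. This minimal sketch assumes a hypothetical baseline date and hand-recorded per-engine scores; all values are placeholders. It generates the 30/60/90-day checkpoints and reports which engine first moved by at least a chosen threshold. That lets you check whether Perplexity really does respond first for your brand.

```python
from datetime import date, timedelta
from typing import Optional

CHECKPOINT_DAYS = (30, 60, 90)


def checkpoints(baseline: date) -> list[date]:
    """Re-test dates 30, 60, and 90 days after the baseline grade."""
    return [baseline + timedelta(days=d) for d in CHECKPOINT_DAYS]


def first_mover(history: dict[str, list[int]], threshold: int = 5) -> Optional[tuple[str, int]]:
    """Return (engine, days after baseline) for the earliest move of at least
    `threshold` points, or None if no engine moved that much.

    history[engine] = [baseline, day-30, day-60, day-90] scores, recorded by hand.
    """
    best = None
    for engine, scores in history.items():
        baseline = scores[0]
        for days, score in zip(CHECKPOINT_DAYS, scores[1:]):
            if abs(score - baseline) >= threshold:
                if best is None or days < best[1]:
                    best = (engine, days)
                break  # only the first qualifying checkpoint matters per engine
    return best


# Placeholder baseline date and scores.
print(checkpoints(date(2026, 1, 15)))
print(first_mover({
    "chatgpt": [58, 59, 61, 66],
    "perplexity": [55, 63, 68, 70],
    "gemini": [60, 60, 62, 65],
}))
```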

Mentionwell is the blog engine for teams that need this loop running across one site or hundreds: research-grounded articles with AEO, GEO, LLMO, and SEO built into every draft, programmatic SEO and archive refreshes, and delivery into your existing CMS or headless stack. It is not a generic AI writing tool — it is the publishing pipeline that turns a weak AEO score into citation-ready coverage on a repeatable cadence.

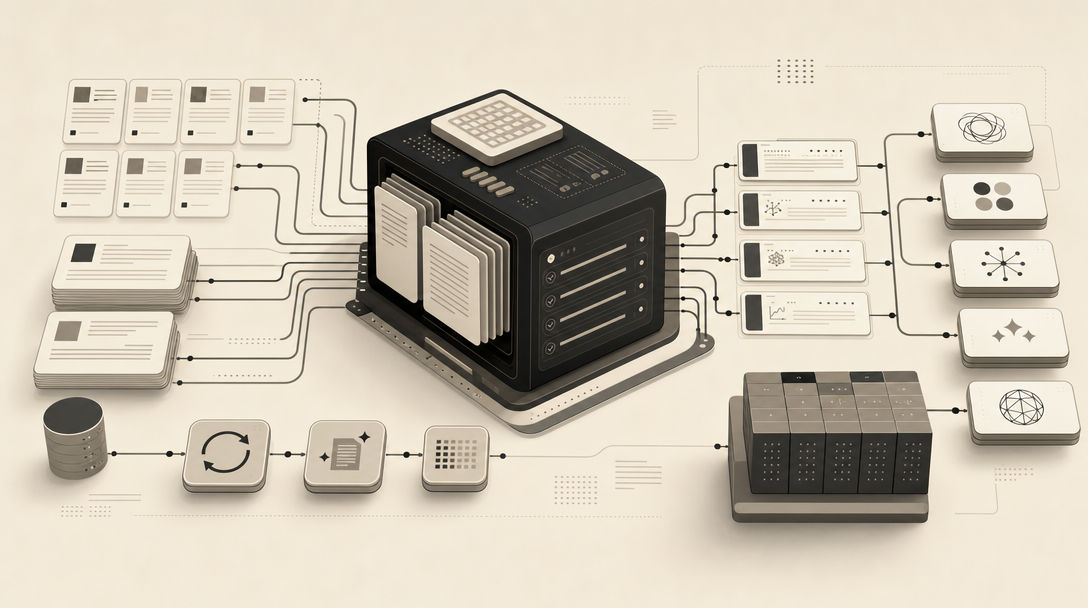

How Should AEO, GEO, LLMO, and SEO Work Together After the HubSpot Snapshot?

AEO, GEO, LLMO, and SEO work together as four distinct layers on the same content: AEO shapes the direct answer, GEO earns the citation in synthesized AI responses, LLMO strengthens the entity signals models use to recommend you, and SEO keeps the page crawlable and indexed in the first place. The HubSpot AEO Grader measures the outcome; this stack is what produces it.

How the layers separate:

- AEO (Answer Engine Optimization) targets direct-answer extraction. It lives in the first 1–2 sentences of every section, FAQ schema, definition structures, and clear question-and-answer pairing. See [What Is AEO in 2026?](#) for the full breakdown.

- GEO (Generative Engine Optimization) targets citation in synthesized AI answers — ChatGPT, Perplexity, Gemini, Copilot, Google AI Overviews. It depends on entity clarity, source authority, and content structures the models can lift verbatim. See [What Is GEO in 2026?](#).

- LLMO (Large Language Model Optimization) strengthens the entity, brand, and topical signals models use to decide what to recommend. This is where consistent author bios, organization schema, and cross-domain mentions matter. See [What Is LLMO in 2026?](#) and [What Is llms.txt in 2026?](#) for the crawler-file layer.

- SEO keeps crawlability, indexation, internal links, and traditional search demand intact. AI engines retrieve from indexes that classic SEO still gates.
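FAQ schema is one of the few AEO pieces that can be generated mechanically. This minimal sketch builds schema.org `FAQPage` JSON-LD from question-and-answer pairs using only Python's standard library. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity`, `acceptedAnswer`, `name`, and `text` properties are schema.org vocabulary; the function name and sample copy are hypothetical. Valid markup helps extraction but does not by itself guarantee a citation.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    if not pairs:
        raise ValueError("FAQPage needs at least one question")
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical copy: the answer opens with a direct, one-sentence definition.
markup = faq_jsonld([
    ("What is HubSpot's AEO Grader?",
     "A free, one-time diagnostic that scores how ChatGPT, Perplexity, and Gemini characterize a brand."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```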

For teams operationalizing this across surfaces, see the per-engine guides on showing up in [ChatGPT](#), [Perplexity](#), [Google Gemini](#), [Microsoft Copilot](#), [Claude](#), [Google AI Overviews](#), [Meta AI](#), [Grok](#), and [DeepSeek](#). And for the workflow choice itself, [AEO vs GEO vs LLMO: Which Workflow Fits Your Team?](#) is the right next read.

The HubSpot AEO Grader is a useful free signal that something is off. The system that fixes it is the part HubSpot does not sell you. If you want that pipeline running across your site without rebuilding your stack (research-grounded articles, citation-shaped structure, archive refreshes, and delivery into the CMS you already use), the next step is getting your site GEO optimized.

Sources

- AEO Grader - 2026 (www.hubspot.com)

- Show Up in AI Search with Answer Engine Optimization ... (www.hubspot.com)

- Academy Short | What Is HubSpot's AEO Grader (community.hubspot.com)

- How HubSpot's AEO Grader Tool Keeps You Visible (www.linkedin.com)

- AI Search Tool | HubSpot (www.hubspot.com)

- AI Sentiment Analysis Tool | HubSpot (www.hubspot.com)