What does Profound actually do?

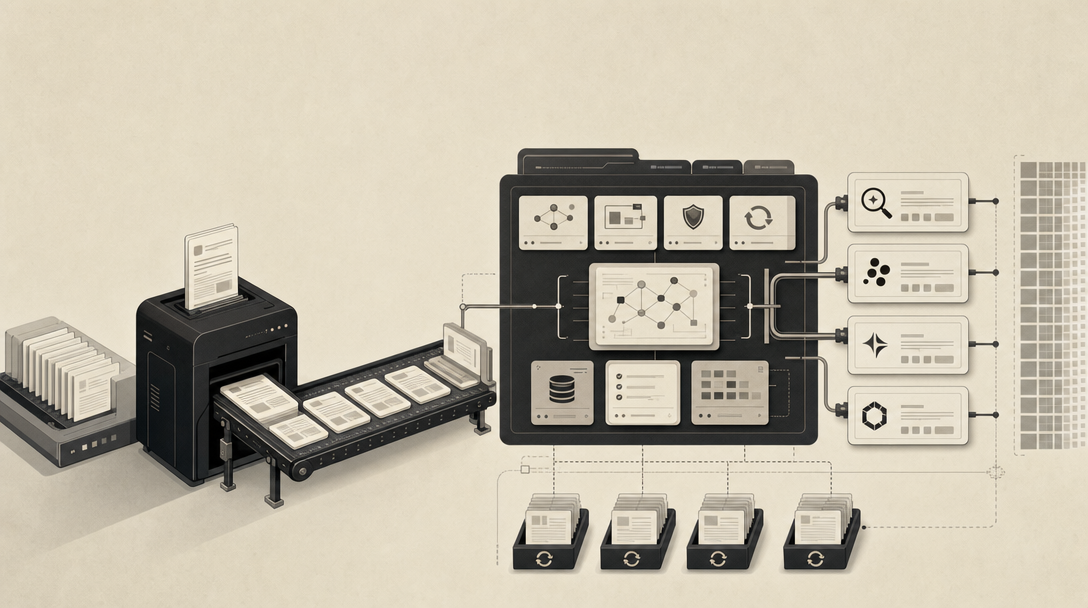

Profound is an enterprise AI visibility and GEO monitoring platform — not a blog engine, not a content generator, and not a writing tool. It queries answer engines with tracked prompts, records whether your brand appears in the responses, and surfaces the result as citation and mention data inside a dashboard. That's the category. According to Surferstack, this is what AI visibility tools do as a class: "query ChatGPT, Perplexity, Claude, or Google AI Overviews with tracked prompts, record whether a brand appears, and show the result in a dashboard."
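The monitoring loop described above can be sketched in a few lines. This is a minimal illustration, not Profound's implementation: the `PromptResult` type, the function names, the brand "Acme CRM", and the sample responses are all hypothetical, and the responses are hand-written strings rather than real engine output.

```python
import re
from dataclasses import dataclass


@dataclass
class PromptResult:
    engine: str    # e.g. "ChatGPT"
    prompt: str    # the tracked prompt
    response: str  # answer text captured from the engine


def brand_mentioned(response: str, brand: str) -> bool:
    """Case-insensitive whole-word match for the brand name."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def visibility_by_engine(results, brand):
    """Return {engine: share of tracked prompts whose response mentions the brand}."""
    totals, hits = {}, {}
    for r in results:
        totals[r.engine] = totals.get(r.engine, 0) + 1
        if brand_mentioned(r.response, brand):
            hits[r.engine] = hits.get(r.engine, 0) + 1
    return {engine: hits.get(engine, 0) / totals[engine] for engine in totals}


# Hypothetical sample data: hand-written answers, not real engine output.
results = [
    PromptResult("ChatGPT", "best crm for startups", "Popular options include Acme CRM and Globex."),
    PromptResult("ChatGPT", "crm with email sync", "Globex and Initech are common picks."),
    PromptResult("Perplexity", "best crm for startups", "Many teams choose Acme CRM."),
]

print(visibility_by_engine(results, "Acme CRM"))
```

A real platform would also have to collect the responses, parse cited URLs, and handle name ambiguity (a brand called "Profound" collides with the ordinary adjective), none of which this sketch attempts.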

A quick entity note before going further: this article is about Profound, the AI visibility company covered by Jason Del Rey at The Aisle and reviewed by Surferstack and LLM Pulse. It is not about the adjective "profound." If you arrived here from a dictionary result, the rest of this piece won't help you.

The answer-engine surface Profound monitors maps to where buyers actually need to show up: ChatGPT, Perplexity, Claude, Google AI Overviews, Google AI Mode, Gemini, Microsoft Copilot, Grok, Meta AI, and DeepSeek. Profound's job is to tell you where your brand is invisible across those engines, not to produce the pages that would make it visible. That distinction is the entire reason this article exists.

What Profound does not do — based on the cited reviews — is operate the publishing layer: source research, AEO-shaped page structure, CMS or headless delivery, archive refreshes. Surferstack characterizes Profound as "the strongest enterprise monitoring option" in its comparison while noting it "costs significantly more and lacks content generation." Treat it as a measurement tool sitting upstream of your content workflow.

Did Profound raise $1 billion, or is it valued at $1 billion?

Profound announced new funding at a $1 billion valuation — but the available evidence does not say Profound raised $1 billion in cash, and the two are not the same thing.

The cleanest attribution comes from Jason Del Rey's LinkedIn post tied to The Aisle, which describes Profound as "one of the leaders in the SEO for AI space" and notes that the company "announced new funding at a $1 billion valuation." Valuation is the price tag on the company; raise amount is the cash that came in the door at that price. A startup can be valued at $1 billion while raising a fraction of that in a single round.

The available sources also contain a separate, smaller number. Surferstack's Profound review states the company has raised $58.5M in total. That figure is consistent with a unicorn-tier valuation on relatively modest cumulative funding, a pattern common among AI-category companies in 2025–2026. But neither source confirms the size, investors, or close date of the specific round behind the $1B valuation headline.
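The difference between valuation and raise amount comes down to simple arithmetic. The sketch below assumes the $1 billion figure is a post-money valuation, which the sources do not state, and the round sizes are hypothetical placeholders, not reported numbers.

```python
def implied_stake_sold(raise_amount: float, post_money_valuation: float) -> float:
    """Fraction of the company sold in a round, given the post-money valuation."""
    if not 0 < raise_amount <= post_money_valuation:
        raise ValueError("raise must be positive and no larger than the valuation")
    return raise_amount / post_money_valuation


VALUATION = 1_000_000_000  # headline valuation; post-money is an assumption

# Hypothetical round sizes, for illustration only. The actual raise is not in the sources.
for raise_amount in (50_000_000, 100_000_000, 200_000_000):
    stake = implied_stake_sold(raise_amount, VALUATION)
    print(f"${raise_amount / 1e6:.0f}M raised at a $1B valuation -> {stake:.0%} of the company sold")
```

In every row the valuation stays at $1 billion while the cash raised is a fraction of it, which is why "raised $1B" and "valued at $1B" cannot be used interchangeably.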

Does Profound generate articles or just reports?

Profound is built to report AI visibility, not to write the articles that close citation gaps. A monitoring dashboard can show you that competitors are getting cited more often in ChatGPT or Google AI Overviews; it cannot, on its own, produce the research-grounded pages that an answer engine would cite instead.

The source evidence is slightly inconsistent and worth naming directly. One Surferstack guide says Profound "lacks content generation." A separate Surferstack alternatives table is more nuanced, listing Profound's content generation as "Limited." Neither source verifies what "limited" covers in practice — whether it produces briefs, full drafts, CMS-ready articles, schema, or refresh recommendations. In procurement terms, that ambiguity is the answer: if your team needs to brief, draft, publish, and refresh complete articles, do not assume Profound does any of those steps end-to-end without a live demo against your actual workflow.

The practical implication of the category split:

| Capability | AI visibility monitoring (Profound class) | Citation-shaped blog engine |
|---|---|---|
| Tracks prompts across answer engines | Yes | Sometimes |
| Records brand mentions and citations | Yes | No (not the job) |
| Identifies citation gaps vs competitors | Yes | Sometimes |
| Researches and writes full articles | No or limited | Yes |
| Structures pages for AEO, GEO, LLMO, SEO | No | Yes |
| Publishes into CMS or headless stack | No | Yes |
| Refreshes archive pages on a cadence | No | Yes |

Surferstack puts it bluntly in its content-team alternatives guide: a dashboard "that shows competitors are getting cited more does not close the gap if it does not write the article that addresses it."

How much does Profound cost?

Profound's pricing has a $99 entry point and a much higher real-world floor. Surferstack's Profound review says the $99 Starter plan is ChatGPT-only with 50 prompts, which it characterizes as "not enough to run a real program," and that meaningful functionality starts at $399/month. That assessment is the most useful pricing summary in the available sources.

LLM Pulse — a competitor — adds higher-tier comparisons that should be read as competitor-sourced claims rather than neutral facts. According to LLM Pulse, Profound Lite runs $499/month with limited prompts and 5 models, while LLM Pulse compares its own €49/month tier against that price point and claims "90% cost savings." LLM Pulse also asserts that Profound's $99 Starter covers 1 AI model versus 5 models on its own entry plan, and that Profound's entry multi-model tier is $399/month for 3 models.

| Tier (per Profound reviews) | Price | What's included | Source |
|---|---|---|---|
| Starter | $99/month | ChatGPT only, 50 prompts | Surferstack |
| Real-functionality floor | $399/month | Multi-model coverage (3 models per LLM Pulse) | Surferstack, LLM Pulse |
| Lite (mid-tier) | $499/month | 5 models, limited prompts | LLM Pulse |

The buying lesson is not "Profound is expensive." It's that multi-model coverage, prompt volume, and workflow depth determine real cost far more than the headline entry price — and none of those numbers tell you anything about whether your team can produce the pages that the dashboard says are missing.
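The quoted prices can be normalized to make that point concrete. The sketch below uses only the figures attributed above to Surferstack and LLM Pulse. It compares the nominal numbers and ignores the EUR/USD exchange rate, so the "savings" figure is a rough check of LLM Pulse's claim rather than a true like-for-like comparison. The helper names are illustrative.

```python
# Prices and model counts as quoted in the reviews cited above.
tiers = [
    {"name": "Profound Starter", "price": 99, "currency": "USD", "models": 1},
    {"name": "Profound multi-model entry", "price": 399, "currency": "USD", "models": 3},
    {"name": "Profound Lite", "price": 499, "currency": "USD", "models": 5},
    {"name": "LLM Pulse entry", "price": 49, "currency": "EUR", "models": 5},
]


def price_per_model(tier: dict) -> float:
    """Monthly price divided by the number of answer engines covered."""
    return tier["price"] / tier["models"]


def nominal_savings(cheaper: float, pricier: float) -> float:
    """Relative saving on the nominal numbers; currencies are NOT converted."""
    return 1 - cheaper / pricier


for tier in tiers:
    print(f'{tier["name"]}: {price_per_model(tier):.2f} {tier["currency"]} per model per month')

# LLM Pulse's "90% cost savings" claim: 49 vs 499, currency difference ignored.
print(f"Nominal savings: {nominal_savings(49, 499):.1%}")
```

On the nominal numbers the 90% claim roughly holds, and the per-model price of the $99 Starter plan is about the same as Lite's. Prompt volume, which the sources only partly quantify, would shift the comparison further.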

If a billion-dollar dashboard still leaves your team without a single new article, the missing layer is publishing. Mentionwell puts a citation-shaped editorial pipeline behind whatever monitoring tool you already trust.

How does Profound compare to the main alternatives?

Profound sits at the premium enterprise end of a market that also includes Promptwatch, Peec AI, Otterly.AI, Scrunch, and LLM Pulse. The right comparison is not feature checklists — it's whether the tool stops at reporting or extends into the work that creates citations.

Using only Surferstack's and LLM Pulse's own published numbers:

| Tool | Starting price | LLM coverage | Crawler logs | Content generation |
|---|---|---|---|---|
| Profound | $99/mo (Starter); $399/mo real floor | 9+ | No | Limited |
| Promptwatch | $99/mo | 10 | Yes | Per Surferstack table |
| Peec AI | $49/mo | 3 | — | — |
| Otterly.AI | $49/mo | 3 | — | — |
| Scrunch | $300/mo + $25/user | 4 in Core (ChatGPT, Perplexity, Google AI Overviews, Copilot); Claude, Gemini, Grok, Meta AI on Enterprise | — | Reporting-focused |
| LLM Pulse | €49/mo | 5 at entry | — | — |

A few things to read carefully here. Surferstack reports Promptwatch is used by 6,700+ brands and agencies including Booking.com and Center Parcs, and that Scrunch has raised $15M+. Those are credibility signals, not capability proofs — both tools, like Profound, are primarily monitoring platforms. The category boundary that matters is: all of these tools tell you where you're invisible; none of them, on their own, ship the citable pages that fix it.

LLM Pulse's positioning of Profound is consistent with that frame. It calls Profound a premium platform "for large enterprises, emphasizing compliance, enterprise workflows, and broader AI coverage at higher price points," and argues the lower tiers are "limited and expensive compared with newer alternatives." That is a competitor talking about a competitor, but it lines up with Surferstack's independent assessment that real Profound functionality begins at $399/month.

If your shortlist is Profound vs Scrunch vs Promptwatch, you are still comparing dashboards. See our take on Scrunch as a visibility command center and how Ahrefs Brand Radar's $828/month lands in the same category before a single page gets written.

What workflow is still required after a dashboard finds citation gaps?

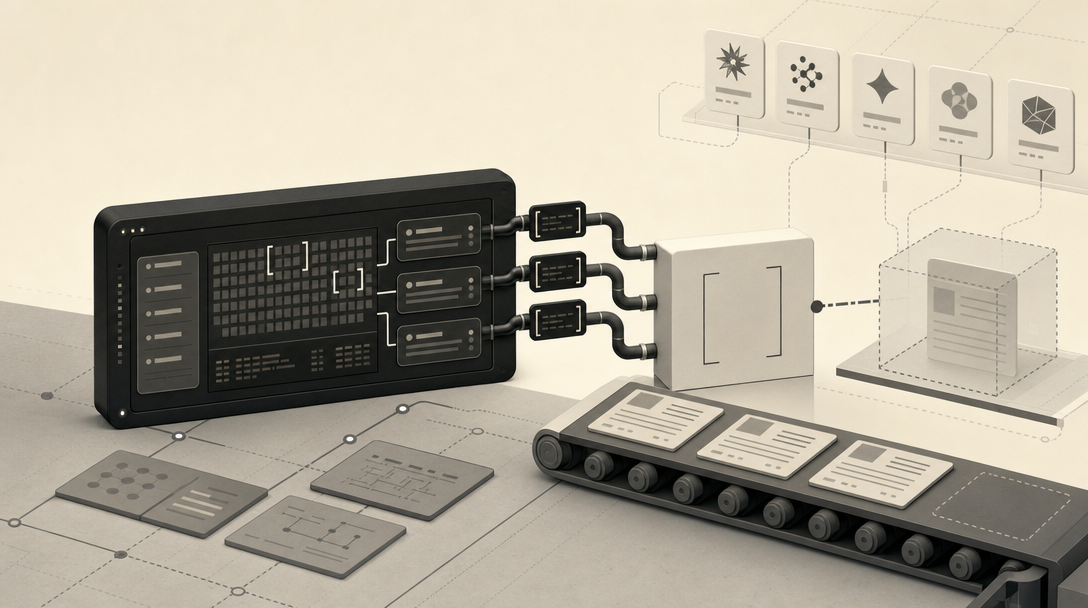

The work splits into seven steps, and a monitoring dashboard like Profound only runs the first one. Surferstack frames AI visibility improvement as a three-step process — know where you're invisible, figure out what to do, then do the work and measure whether it worked — but content teams need a sharper operational sequence than that. Here it is in practice:

1. Identify prompts and engines where the brand is invisible. Use Profound, Promptwatch, Scrunch, or any monitoring tool to surface tracked prompts where ChatGPT, Perplexity, Claude, Google AI Overviews, Gemini, Copilot, Grok, Meta AI, and DeepSeek cite competitors instead of you.

2. Map competitor citations to missing topics and entities. Pull the URLs being cited. Read them. Identify which topics, entity definitions, comparison frames, or data points your site does not yet own.

3. Research source evidence. For each missing topic, gather first-party data, primary sources, and verifiable third-party stats. Citation-shaped content is research-grounded; AI engines preferentially surface pages that look authoritative on entity and source dimensions.

4. Produce citation-shaped pages. Write direct-answer openings, attributed statistics, named entities, numbered process steps, and structured comparison tables. This is the AEO, GEO, and LLMO layer: the structure that makes a page extractable.

5. Publish into the CMS or headless stack. Ship the page where it can be crawled by Bing (for ChatGPT, Copilot, and Grok), Google (for AI Overviews, AI Mode, Gemini), and direct Perplexity, Claude, Meta AI, and DeepSeek crawlers. Schema, internal links, and llms.txt all live here.

6. Refresh archives. Update older pages with new data, new entity references, and new direct-answer formatting. Citation-readiness decays; archive refresh is recurring work, not a one-time migration.

7. Retest across answer engines. Re-run the original tracked prompts. Measure whether the new and refreshed pages now appear as citations or mentions. Feed the result back into step one.
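The first and last steps of this sequence, finding gaps and retesting, can be sketched as a before-and-after comparison. This is a minimal illustration under stated assumptions: the record shape, the function names, the `.example` domains, and both result sets are hypothetical stand-ins for an export from whichever monitoring tool is in use.

```python
def citation_gaps(results, brand):
    """(engine, prompt) pairs where other domains are cited but the brand is not."""
    return [
        (r["engine"], r["prompt"])
        for r in results
        if r["cited"] and brand not in r["cited"]
    ]


def citation_rate(results, brand):
    """Share of tracked prompt runs in which the brand's domain is cited."""
    if not results:
        return 0.0
    return sum(brand in r["cited"] for r in results) / len(results)


BRAND = "yourbrand.example"  # hypothetical domain

# Hypothetical monitoring export before any new pages are published.
before = [
    {"engine": "ChatGPT", "prompt": "best invoicing tool", "cited": {"rival.example"}},
    {"engine": "Perplexity", "prompt": "best invoicing tool", "cited": {"rival.example", BRAND}},
    {"engine": "Gemini", "prompt": "invoicing tool pricing", "cited": {"other.example"}},
    {"engine": "Claude", "prompt": "invoicing tool pricing", "cited": set()},
]

# Same tracked prompts re-run after publishing and refreshing pages (also hypothetical).
after = [dict(r) for r in before]
after[0] = {**before[0], "cited": {"rival.example", BRAND}}

print("Gaps before:", citation_gaps(before, BRAND))
print(f"Citation rate: {citation_rate(before, BRAND):.0%} -> {citation_rate(after, BRAND):.0%}")
print("Gaps remaining:", citation_gaps(after, BRAND))
```

The remaining gaps become the input to the next pass through the workflow. Everything between the two measurements, meaning research, writing, publishing, and refreshing, still has to be done by a content operation.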

This is the workflow Profound does not operate. It is also the workflow that determines whether your brand earns citations or just gets measured against the ones it didn't earn. For a deeper breakdown of how the structural layers fit together, see "AEO vs GEO vs LLMO: Which Workflow Fits Your Team?"

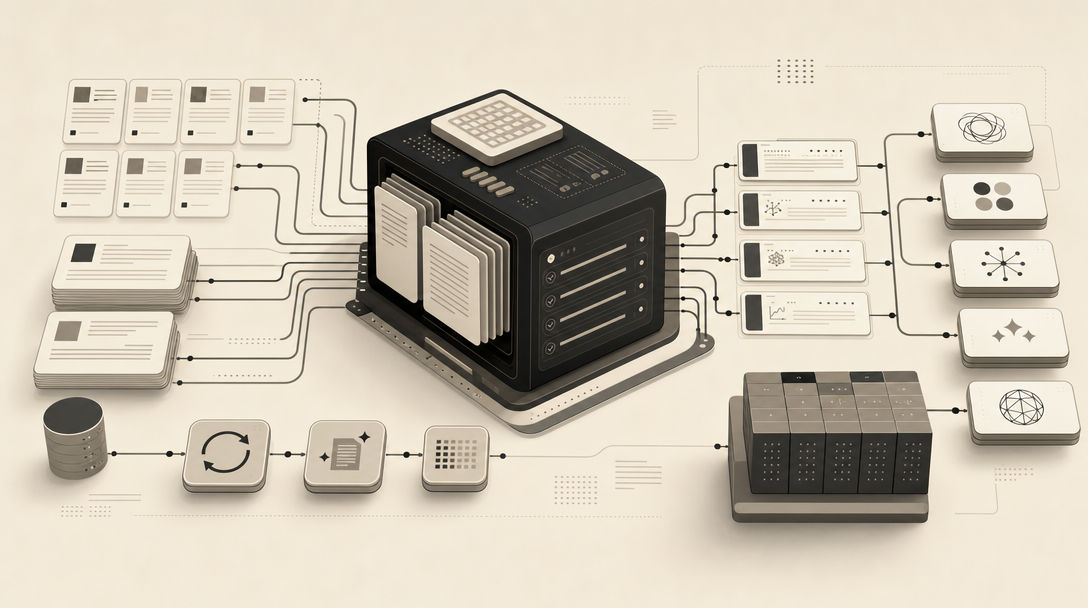

How should AEO, GEO, LLMO, and SEO work together in a citation-focused pipeline?

Treat them as four layers of the same publishing pipeline, not four competing strategies.

- AEO (Answer Engine Optimization) — answer-ready page structure: direct-answer leads, numbered steps, comparison tables, FAQ patterns. This is what makes a page extractable as a quoted answer.

- GEO (Generative Engine Optimization) — citation readiness inside generative engines like ChatGPT, Perplexity, Claude, Gemini, and Copilot. Entity clarity, source attribution, and topical depth determine whether the engine cites you when it generates an answer.

- LLMO (Large Language Model Optimization) — entity- and language-level readability. Consistent brand naming, proper nouns spelled the same way everywhere, full disambiguation on first mention, llms.txt where appropriate.

- SEO — indexable, query-aligned classic search performance. Still the foundation: if Bing and Google cannot crawl and index your page, no answer engine will surface it.
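As one concrete example of the AEO layer's FAQ pattern, the sketch below builds schema.org `FAQPage` structured data as JSON-LD. The article does not say which schema types to use, so the choice of `FAQPage` is an assumption, and the helper name `faq_jsonld` is illustrative. Emitting this markup does not by itself guarantee that any answer engine will cite the page.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Question-and-answer pairs taken from this article's own headings and lead sentences.
pairs = [
    (
        "What does Profound actually do?",
        "Profound is an enterprise AI visibility and GEO monitoring platform.",
    ),
    (
        "Does Profound generate articles or just reports?",
        "Profound is built to report AI visibility, not to write the articles that close citation gaps.",
    ),
]

# The output would be embedded in the page inside a <script type="application/ld+json"> tag.
print(json.dumps(faq_jsonld(pairs), indent=2))
```

Keeping the question text identical to the visible heading and the answer identical to the direct-answer lead keeps the markup and the page body consistent with each other.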

A blog engine operationalizes all four together. Mentionwell is built for exactly that: onboard a domain, define a site profile, and run a citation-shaped editorial pipeline — research, draft, AEO/GEO/LLMO structure, CMS or headless publishing, and scheduled archive refresh — across one site or many. It's a content engine, not an AI visibility dashboard. The two work together: monitoring tells you what to write; the engine writes and ships it.

For engine-specific implementation, see How to Show Up in ChatGPT in 2026, Perplexity, Google AI Overviews, Gemini, Claude, Copilot, Grok, Meta AI, and DeepSeek.

Who should choose Profound, and who needs a blog engine instead?

Buy Profound — or a peer monitoring platform — if your team is enterprise-scale, has compliance and procurement requirements, has an existing content operation that already produces and refreshes pages at volume, and your bottleneck is measurement and reporting across ChatGPT, Perplexity, Claude, Google AI Overviews, Gemini, Copilot, Grok, Meta AI, and DeepSeek.

Buy a citation-focused blog engine instead if you are a SaaS marketing team, an agency managing multiple client domains, a multi-site operator, or a programmatic SEO team — and your bottleneck is producing and refreshing the pages that answer engines can actually cite. A dashboard cannot fix a publishing gap. If the missing capability is reporting, buy monitoring; if the missing capability is publishing, use a content engine.

Mentionwell is built for the second case. Get My Site GEO Optimized and put a citation-shaped editorial pipeline behind whatever monitoring tool you already trust.

Sources

- Profound AI Startup Raises $1B, Brands Weigh AI-Driven Discovery (www.linkedin.com)

- THE MAN WHO GAVE AWAY $1 BILLION — AND EVERYONE ... (www.facebook.com)

- ONE BILLION DOLLARS - Paul Krugman (paulkrugman.substack.com)

- OpenAI (surferstack.com)