What Does It Mean to Show Up in Grok in 2026?

Showing up in Grok means one of three specific outcomes: a direct brand mention inside Grok's answer, a citation that links to a URL you own, or a product or service reference that points readers to your offering even when your brand name is not spoken. Singularity Digital draws this distinction clearly, and it matters because each outcome requires a different content and distribution move.

Grok is the xAI assistant embedded inside X, and according to 99helpers it can also be reached through grok.com, the iOS and Android apps, the xAI API, and Microsoft Azure AI. What makes Grok different from ChatGPT, Gemini, Claude, and Perplexity is its direct line into real-time X activity — tweets, threads, replies, and industry conversations — alongside whatever web content it retrieves. AISO System describes this as a dual-signal model: web sources plus live X data.

Showing up in Grok is not a matter of generic "AI visibility". The job is to make your entities, pages, and public X posts easier for Grok to identify, trust, and reference as evidence for a specific answer.

That framing matters for B2B SaaS teams. A Grok answer about "best observability platforms for Kubernetes" is shaped by what's indexable on the web, what verified accounts are saying on X this week, and what entities Grok already associates with the category. Chasing impressions is the wrong target. The target is engineered citation readiness across three surfaces — owned pages, structured entities, and public X activity — measured against a fixed prompt set over time.

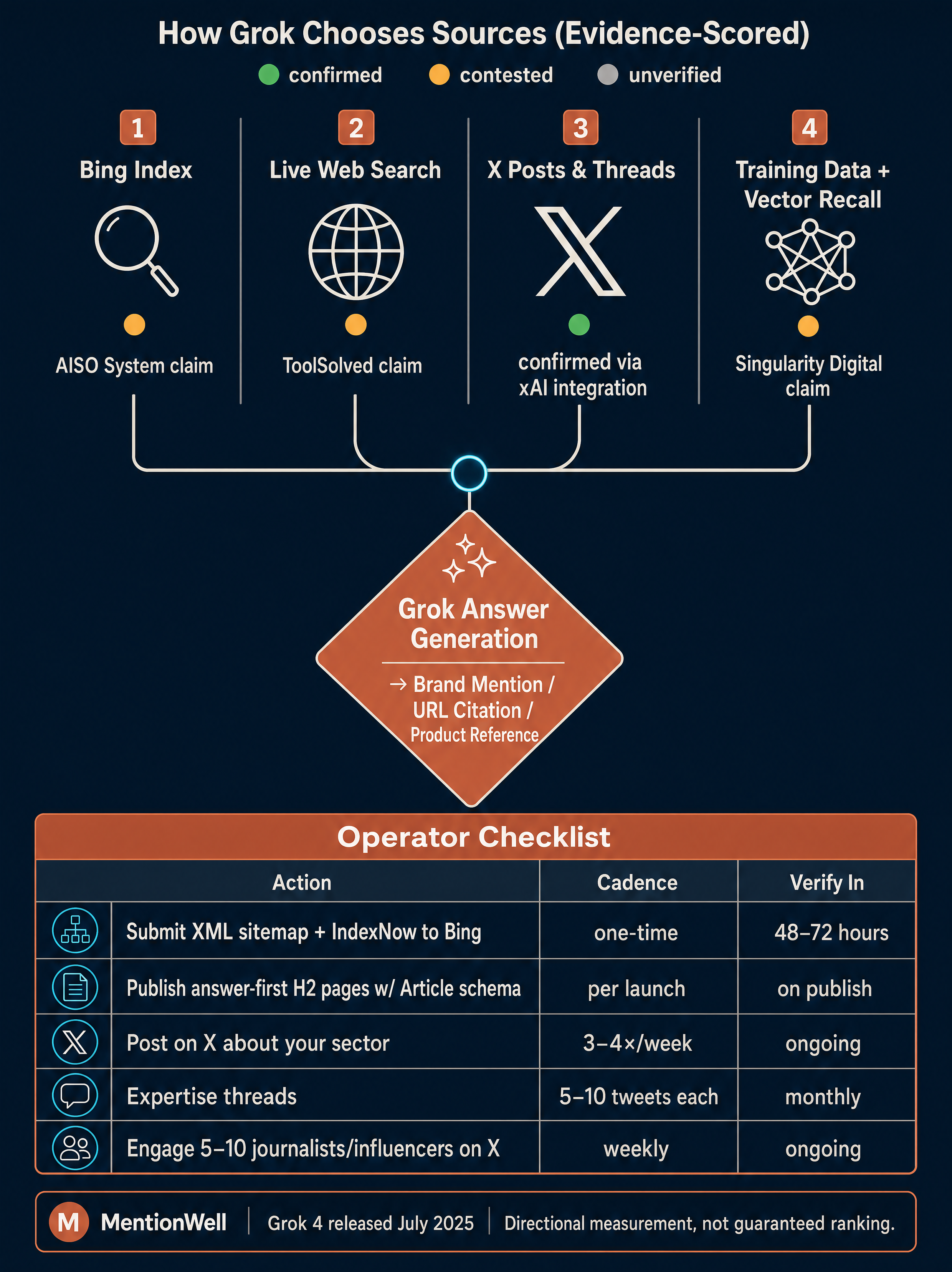

How Does Grok Choose Its Sources?

There is no public xAI documentation confirming Grok's exact retrieval or ranking logic, so treat every vendor claim as a working hypothesis. The defensible move is to reconcile the three competing models in the public corpus and optimize for the union of their requirements.

Three sources describe Grok's retrieval differently:

| Source | Claimed retrieval model | Operational implication |
|---|---|---|
| AISO System | Grok primarily relies on the Bing index for web-based responses; sites not indexed on Bing are "invisible" to Grok | Prioritize Bing Webmaster Tools, XML sitemaps, and IndexNow |
| ToolSolved | Grok pulls from three sources: training data, live web search, and X posts | Maintain crawlable web content and an active public X presence |
| Singularity Digital | Grok does not run a traditional web crawler or maintain a searchable URL index; it relies on training data, real-time X signals, and vector-based semantic representations | Focus on entity clarity, topical density, and engaged public X content |

These are not fully compatible. AISO System treats Bing as the gate, while Singularity Digital disputes that a URL index even exists in the same sense. Rather than pick a winner, build for the union of what the three models require.

Optimize for four surfaces simultaneously: Bing crawl access, structured owned content with clear entities, sustained public X activity, and third-party corroboration across press, forums, and partner sites. AISO System specifically notes that Grok builds trust through triangulation — the same brand appearing across X, articles, specialized forums, and owned web content.

This is also why an answer engine strategy needs to be multi-engine. The discipline that earns citations in Grok — entity clarity, public corroboration, answer-first structure — is the same discipline that improves odds in ChatGPT, Perplexity, Gemini, and Claude. For the cross-engine operating model, see Mentionwell's [AEO vs GEO vs LLMO workflow guide](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team).

Do You Need Bing Webmaster Tools, Sitemap Submission, and IndexNow?

Yes — if any part of Grok's web retrieval flows through Bing, then Bing Webmaster Tools, XML sitemap submission, and IndexNow are the highest-leverage crawl-access actions available, and Google Search Console alone does not cover them. This is AISO System's core operational claim, and it costs very little to execute even if Grok's exact retrieval model turns out to be broader than Bing.

The mistake AISO System flags is the default assumption that Google Search Console is sufficient. For Grok-facing workflows, Bing is the entry point you cannot skip.

A minimum viable setup:

- Create a Bing Webmaster Tools account and verify the domain.

- Submit the XML sitemap for all pages you want Grok to be able to retrieve.

- Enable IndexNow so Bing is notified automatically when new pages publish or existing pages update.

- Verify indexation of key pages within 48–72 hours of submission, per AISO System's recommendation.

- Re-check indexation after every material refresh, not just at launch.

IndexNow is the piece most teams skip, and it is the cheapest way to compress the window between publish and potential Grok availability. For programmatic SEO or large archive refreshes, manual sitemap pings will not scale — IndexNow integration belongs in the publishing pipeline itself, not as a quarterly task.
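As a rough illustration of what "IndexNow in the publishing pipeline" could look like, the sketch below builds the JSON POST that the IndexNow protocol expects (`host`, `key`, `keyLocation`, `urlList`). The domain, key value, and the `build_indexnow_request` helper are hypothetical, and the request is only constructed here, not sent. It assumes the key file is hosted at the site root as `<key>.txt`.

```python
import json
import urllib.request

# Shared IndexNow endpoint; participating search engines exchange submissions.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Build (but do not send) an IndexNow POST request for a batch of URLs."""
    # Only submit URLs that belong to the host the key was issued for.
    own_urls = [u for u in urls if u.startswith(f"https://{host}/")]
    payload = {
        "host": host,
        "key": key,
        # Assumption: the key file lives at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": own_urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    host="www.example.com",      # hypothetical domain
    key="0123456789abcdef",      # hypothetical key
    urls=[
        "https://www.example.com/blog/new-guide",
        "https://other.example.net/not-ours",  # filtered out: different host
    ],
)
# A publish hook would then send it with: urllib.request.urlopen(request)
```

Calling this from the CMS publish and update hooks, rather than from a scheduled job, is what keeps the notification tied to each change.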

This is crawl access, not a citation guarantee. Being indexed in Bing is necessary but not sufficient. It gets your pages into the set of documents Grok could plausibly retrieve; the rest of the article covers what makes a page worth retrieving.

How Important Is an Active X Presence for Grok Visibility?

Active public X participation is the one signal that separates Grok optimization from optimization for every other AI engine. ToolSolved frames it directly: a brand actively discussed on X can generate stronger Grok signals than a brand with comparable web content and no social footprint, because Grok has direct access to public X posts, engagement signals, and verified account content.

Singularity Digital adds that Grok favors brands that participate in conversations, trends, and high-engagement threads, and that timely content around breaking or trending topics gives brands a quicker path to Grok mentions.

AISO System provides the most concrete cadence guidance in the public corpus:

| Activity | AISO System recommendation |
|---|---|
| Posting frequency | At least 3–4 posts per week about your sector |
| Expertise threads | Develop topics across 5–10 tweets with data and examples |
| Relationship building | Engage with 5–10 journalists or influencers active in your sector on X |
| Content types | Concrete data, statistics, clear positions, visuals, replies |

Treat those numbers as starting benchmarks, not proven thresholds — no source quantifies the weight Grok assigns to any individual signal.

There is also a governance constraint worth naming. According to the AI Response Generator guide, Grok reply interactions on X require public accounts and public posts; Grok cannot access direct messages or private-only interactions. If your Grok visibility plan depends on employee or founder X activity, those accounts and posts must be public — private accounts and DMs produce zero usable signal.

For B2B SaaS teams, this is a real operating shift. A content calendar that ends at "publish and enqueue for email" leaves the Grok-specific signal on the floor. X distribution briefs need to be part of every article's production pipeline, not a separate social task.

What Does Grok Look for When Deciding Which Brands or Content to Cite?

Grok favors content that answers a question directly, demonstrates expertise with evidence, declares its authors clearly, and exposes its structure through schema. Singularity Digital frames this as technical clarity — clean metadata, schema markup, and clear author expertise make content easier for Grok to interpret — and AISO System operationalizes it into a repeatable page shape.

The answer-first page structure AISO System recommends:

- H2 written as the direct question a reader would ask.

- First paragraph answers in 2–3 sentences, self-contained.

- Supporting data, studies, and concrete examples follow the direct answer.

- Clear author attribution with verifiable expertise signals.

- An actionable summary or next step at the end of the section.

On structured data, AISO System recommends:

- Article or BlogPosting schema with `author`, `datePublished`, and `headline` fields.

- Organization schema on the publisher entity with consistent name, URL, and logo.

- Person schema for named authors with credentials and a linked profile.

- FAQPage schema as an optional structured-data layer when genuine Q&A content exists on the page.
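The structured-data recommendations above can be sketched as a small JSON-LD generator. This is a minimal example assuming schema.org's `BlogPosting`, `Person`, `Organization`, and `ImageObject` types; the `article_jsonld` helper, the author, and every URL are hypothetical placeholders, not values taken from the sources.

```python
import json


def article_jsonld(headline, date_published, author_name, author_url,
                   publisher_name, publisher_url, logo_url):
    """Return a BlogPosting JSON-LD dict with author and publisher entities."""
    return {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,  # linked profile for the named author
        },
        "publisher": {
            "@type": "Organization",
            "name": publisher_name,
            "url": publisher_url,
            "logo": {"@type": "ImageObject", "url": logo_url},
        },
    }


markup = article_jsonld(
    headline="How Does Grok Choose Its Sources?",
    date_published="2026-01-15",                        # hypothetical date
    author_name="Jane Doe",                             # hypothetical author
    author_url="https://www.example.com/authors/jane-doe",
    publisher_name="Example Co",
    publisher_url="https://www.example.com",
    logo_url="https://www.example.com/logo.png",
)

# Embed the markup in the page head as a JSON-LD script tag.
script_tag = (
    '<script type="application/ld+json">'
    + json.dumps(markup, indent=2)
    + "</script>"
)
```

Generating the markup from one function per site keeps the publisher name, URL, and logo identical on every page, which is the consistency the Organization recommendation asks for.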

Singularity Digital's point about authority matters too: Grok rewards well-supported content over keyword-heavy posts, which means evidence blocks — a cited statistic, a dated study, a named customer, a real example — do more work than keyword density. This is the operational overlap between AEO, GEO, LLMO, and classic SEO: the same page structure that earns Grok citations is also what wins in Perplexity sources, Gemini overviews, and Claude's web retrieval.

How Do You Make New Research, Launches, or Guides Visible to Grok Quickly?

The fastest defensible path is a coordinated publish-index-distribute sequence that closes the loop between an owned page, Bing retrieval, and public X discussion within the same day. Speed matters, but editorial control matters more: Singularity Digital notes that timeliness helps Grok visibility, but chasing shallow trends without evidence works against the authority signals Grok rewards.

A repeatable Grok-visibility workflow for a new page:

- Publish a citable owned page. Apply the answer-first structure: H2 as a direct question, 2–3 sentence direct answer, supporting data, evidence blocks, named author with Person schema, Article or BlogPosting schema with `author`, `datePublished`, and `headline`.

- Notify Bing. Update the XML sitemap and trigger IndexNow so the page enters Bing's index without waiting for the next crawl cycle. Verify indexation within 48–72 hours.

- Publish a public X thread. Convert the page's main findings into a 5–10 tweet expertise thread (per AISO System's cadence). Post from a verified account if available. Link back to the canonical URL in the final tweet, not the first, so the thread reads as substantive rather than promotional.

- Add visuals and evidence. Include charts, screenshots, or data tables inside the X thread — Singularity Digital identifies visuals as an engagement signal Grok treats as a relevance cue.

- Prompt legitimate third-party mentions. Share the research with the 5–10 journalists, analysts, or partners you track on X. Encourage customers and partners who can speak to the findings to reply or quote — ToolSolved specifically calls out customer and partner mentions as a Grok-strengthening pattern.

- Engage in adjacent public conversations. Reply with the page's evidence inside existing threads where the question is already being asked. Avoid drive-by link drops; quote the specific finding.

- Refresh the page as facts change. Update `dateModified`, re-ping IndexNow, and restate the update in a follow-up X post. Archive refreshes are a first-class step, not a cleanup task.

The goal of this sequence is not one viral moment but a triangulated footprint Grok can reconcile across Bing, X, and third-party mentions within hours of publication. Teams running this across dozens of launches a quarter need automation in the pipeline — IndexNow pings, schema generation, X brief creation, and refresh scheduling should be built into the publishing workflow rather than handled as separate manual steps.
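One piece of that automation, X brief creation, might be sketched as follows. The `build_thread` helper is hypothetical. It assumes a 280-character post limit and counts raw string length, which is a simplification of how X counts links, and it places the canonical URL only in the final post, as the workflow above suggests.

```python
def build_thread(findings, canonical_url, min_posts=5, max_posts=10, limit=280):
    """Turn findings into numbered thread posts, with the link in the last post only."""
    posts = list(findings) + [f"Full write-up: {canonical_url}"]
    if not min_posts <= len(posts) <= max_posts:
        raise ValueError(
            f"thread has {len(posts)} posts; expected {min_posts} to {max_posts}"
        )
    total = len(posts)
    # Prefix each post with its position, e.g. "2/5 ...".
    thread = [f"{i}/{total} {text}" for i, text in enumerate(posts, start=1)]
    too_long = [p for p in thread if len(p) > limit]
    if too_long:
        raise ValueError(f"{len(too_long)} post(s) exceed {limit} characters")
    return thread


thread = build_thread(
    findings=[
        "We analyzed how teams publish launch content.",   # placeholder findings
        "Most pages had no named author.",
        "Few teams notified Bing after a refresh.",
        "Threads with charts drew more replies.",
    ],
    canonical_url="https://www.example.com/research/launch-study",  # hypothetical
)
```

The output is a draft for an editor to review, not something to post unattended; the length and count checks only catch briefs that would not fit the 5–10 post shape.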

How Should Teams Measure Whether They Are Appearing in Grok Answers Over Time?

Measure Grok visibility directionally with a fixed prompt set, a classified outcome log, and a regular capture cadence — not with absolute rankings, because the public corpus does not provide controlled tests proving that specific actions cause specific Grok citations. The sources are honest about this gap; your measurement framework should be too.

A repeatable measurement system:

- Build a fixed prompt set. Pick 20–40 prompts that reflect how buyers in your category actually phrase questions — category queries, comparison queries, problem-framed queries, and branded queries. Freeze the wording.

- Capture a baseline. Run every prompt in Grok (via X and grok.com where access differs), record the full answer text, and save visible sources or citations.

- Classify each outcome using Singularity Digital's three categories:

| Outcome type | What it looks like in a Grok answer |
|---|---|
| Brand mention | Your brand name appears in the answer text |
| URL citation | A page you own is linked or cited as a source |
| Product/service reference | The answer describes your category position without naming you (e.g., "the observability tool built for Kubernetes") |

- Log the context. Record the date, the exact prompt, the answer text, any visible sources, and which competitor brands appeared.

- Repeat on a cadence. Monthly is a reasonable default; weekly for categories with fast-moving X conversation.

- Correlate with actions. Tag each capture window with what changed — new pages published, IndexNow submissions, X threads posted, refresh cycles, earned media. Look for directional movement, not causation.

Treat Grok measurement as visibility telemetry, not a rankings report — the honest output is "we moved from 0 mentions to 6 mentions across 30 prompts after this quarter's publishing and X activity," not "action X caused citation Y."
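The capture log and the directional summary can be sketched as a small data structure. The `Capture` record, its field names, and the `summarize` helper are hypothetical, and the sample captures are made-up placeholders rather than real Grok output.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date

# The three outcome categories used for classification.
OUTCOMES = ("brand_mention", "url_citation", "product_reference")


@dataclass
class Capture:
    """One prompt run: what was asked, what came back, and how it was classified."""
    captured_on: date
    prompt: str
    answer_text: str
    outcomes: frozenset = frozenset()  # subset of OUTCOMES observed in the answer
    sources: tuple = ()                # visible sources or citations
    competitors: tuple = ()            # competitor brands that appeared


def summarize(captures):
    """Count outcomes across one capture window for directional comparison."""
    counts = Counter()
    for capture in captures:
        counts.update(capture.outcomes)
    return {
        "prompts": len(captures),
        "prompts_with_any_outcome": sum(1 for c in captures if c.outcomes),
        **{name: counts[name] for name in OUTCOMES},
    }


window = [
    Capture(date(2026, 1, 5), "best observability platforms for Kubernetes",
            "placeholder answer", frozenset({"brand_mention", "url_citation"})),
    Capture(date(2026, 1, 5), "how to monitor Kubernetes costs",
            "placeholder answer"),
    Capture(date(2026, 1, 5), "observability tool comparison",
            "placeholder answer", frozenset({"product_reference"})),
]
summary = summarize(window)
```

Comparing `summary` from one capture window to the next gives the kind of "0 mentions to 6 mentions" statement described above without implying that any single action caused the change.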

For teams running this across multiple client sites or many domains, the prompt set, capture workflow, and classification taxonomy should be templated so the methodology is consistent quarter over quarter. Inconsistent capture is worse than no capture — it will produce noise that looks like signal.

Grok vs ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews: What Changes?

The core disciplines transfer, but the signal mix shifts per engine. Grok is the only major engine with direct public X exposure, which changes what "distribution" means in a visibility workflow. DataStudios positions Grok as strongest for fast situational understanding and trend interpretation, while Perplexity is stronger when the deliverable must be sourced, defensible, and easy to audit.

| Engine | Primary retrieval signals | What most moves the needle |
|---|---|---|
| Grok | Bing index (per AISO System), live web, public X posts and engagement | Public X activity plus answer-first owned pages; real-time relevance |
| ChatGPT | Bing-based browsing, training data | Bing indexation, entity consistency, citable page structure |
| Perplexity | Live web retrieval with source display | Crawlable, cite-worthy pages with clear attributions |
| Google AI Overviews | Google index plus Gemini retrieval | Classic SEO fundamentals plus answer-ready structure and schema |
| Gemini | Google retrieval, structured data, entities | Schema, E-E-A-T signals, Google Search visibility |
| Claude | Limited web contexts, Projects, Connectors, API use | Structured pages, entity clarity, archive readiness |

The practical implication for B2B SaaS teams: build one citation-ready content system, then layer engine-specific distribution on top. The owned-page shape is shared. The differentiators are Bing coverage for Grok and ChatGPT, Google coverage for Gemini and AI Overviews, live-web cleanliness for Perplexity, structured context for Claude, and — uniquely — public X activity for Grok.

Mentionwell publishes engine-specific operating guides for each of these:

- [How to Show Up in ChatGPT in 2026](/how-to-show-up-in-chatgpt-in-2026)

- [How to Show Up in Perplexity in 2026](/how-to-show-up-in-perplexity-in-2026)

- [How to Show Up in Google Gemini in 2026](/how-to-show-up-in-google-gemini-in-2026)

- [How to Show Up in Claude in 2026](/how-to-show-up-in-claude-in-2026)

- [AEO vs GEO vs LLMO: Which Workflow Fits Your Team?](/aeo-vs-geo-vs-llmo-which-workflow-fits-your-team)

Grok is the engine where a content team without a public X practice leaves the most visibility on the table.

Which Grok Visibility Claims Are Proven, and Which Are Still Unproven?

The corpus supports a set of reasonable operational actions and a set of ranking assertions that remain unverified. Being clear about which is which protects credibility and stops teams from investing in tactics the evidence does not support.

| Claim | Evidence status | Basis |
|---|---|---|
| Bing indexation improves Grok web retrieval odds | Reasonably evidenced | AISO System's working model; low-cost to execute either way |
| Public X activity strengthens Grok signals | Reasonably evidenced | ToolSolved, Singularity Digital, AISO System all converge |
| Answer-first page structure with schema is quotable | Reasonably evidenced | AISO System's formatting guidance; consistent with cross-engine AEO practice |
| Author expertise and named authors matter | Reasonably evidenced | Singularity Digital's technical-clarity argument |
| Cross-source corroboration across X, press, forums, owned content builds trust | Reasonably evidenced | AISO System's triangulation model |
| Exact ranking weights for replies, reposts, verification, or visuals | Unproven | No source quantifies these signals |
| Specific posting frequencies guarantee visibility | Unproven | AISO System's 3–4 posts/week is a recommendation, not a tested threshold |
| Grok uses or does not use a traditional URL index | Contested | AISO System and Singularity Digital disagree |
| Any specific action caused a specific Grok citation | Unproven | No controlled before-and-after tests in the public corpus |

Optimize for what the evidence supports; hold tactics built on unverified ranking claims to a lower investment bar until real test data appears.

When Should You Use a Content Engine for Grok Visibility?

A content engine earns its place the moment the Grok-visibility workflow — citable pages, schema, Bing indexation, IndexNow pings, public X threads, third-party prompting, and refresh cycles — stops fitting inside a manual process. For a single-site team publishing four articles a month, spreadsheets and a content calendar can hold it together. For teams running launches across many pages, archives that need refreshes, or agencies operating across many client domains, the coordination cost becomes the bottleneck.

What an operational Grok-ready pipeline actually needs:

- Onboarding and site profiles that capture entities, authors, categories, and tone per domain.

- Research grounded in real sources, not generic AI drafts.

- Answer-first drafting with direct-answer openings, evidence blocks, and citable phrases.

- Schema-aware structure — Article, Organization, Person, FAQPage — applied consistently.

- Publishing into existing CMS workflows or headless stacks without rebuilding them.

- IndexNow-aware publishing so Bing is notified automatically.

- X distribution briefs produced alongside every article.

- Archive refreshes scheduled as a first-class pipeline stage, not cleanup.

- Multi-site brand consistency when the same operator runs many domains.

Mentionwell is built as an automated blog engine for exactly this — a repeatable citation pipeline for AEO, GEO, LLMO, and SEO execution across one site or hundreds, not a generic AI writing tool and not a visibility analytics platform. If your team is publishing enough that Grok, ChatGPT, Perplexity, Gemini, Claude, and Google AI Overviews all need consistent treatment, the engine replaces the coordination overhead, not the editorial judgment.

Ready to make your owned content citation-ready across Grok and every major answer engine? Get My Site GEO Optimized.

Sources

- How to use Grok For Free in 2026 (www.youtube.com)

- How to Appear in Grok in 2026: Complete Visibility Guide (www.aisosystem.com)

- How To Use Grok AI [2026 Full Guide] (www.youtube.com)

- Grok AI in 2026? : r/HeavenGF (www.youtube.com)

- How To Use Grok AI On X (2026 Updated) (singularity.digital)

- How to Get Mentioned in Grok (toolsolved.com)