What is a GEO platform in AI search?

A GEO platform is software that measures and improves how often a brand is mentioned, cited, or recommended inside AI search systems — ChatGPT, Google AI Overviews, Google AI Mode, Gemini, Claude, Perplexity, Copilot, and Meta AI. The category exists because AI answers do not behave like a ten-blue-link SERP: they synthesize sources, attribute selectively, and rewrite recommendations on every run.

This is not the same GEO that maps teams talk about. AI-search GEO (Generative Engine Optimization) has nothing to do with geo-targeting, geographic information systems, or local SEO. If you are evaluating Google Maps Platform, Mapbox, Esri ArcGIS, QGIS, CARTO, Shopify Markets, GeoSwap, Local Falcon, or Geostar, you are in a different product category — those tools handle location data, store locators, and map rendering. AI-search GEO platforms handle prompt sampling, citation tracking, and content optimization for large language models.

The confusion is worth flagging early because the same three letters appear across both categories, and procurement teams routinely pull the wrong shortlist. Throughout this article, "GEO" refers exclusively to Generative Engine Optimization.

Does GEO replace SEO?

No. According to Yellow Duck Marketing, Google sends 345x more traffic than all AI platforms combined, which means classic SEO is still where the volume lives. GEO, AEO, and LLMO are additive layers, not substitutes.

The practical implication: technical SEO fundamentals still decide whether AI engines can find and trust your pages in the first place. Google indexation, schema markup, internal linking, crawlability, and Core Web Vitals all feed the retrieval pipelines that ChatGPT (via Bing), Perplexity, and Gemini draw from. Platforms like Ahrefs, Semrush, SE Ranking, BrightEdge, and Conductor remain useful for the SEO half of the workflow.

What GEO adds on top is a different optimization target: structuring content so it gets extracted, quoted, and attributed inside generative answers — not just ranked in a list. Treat them as one stack, not a migration.

Why are cheap GEO tools easy to buy but hard to trust?

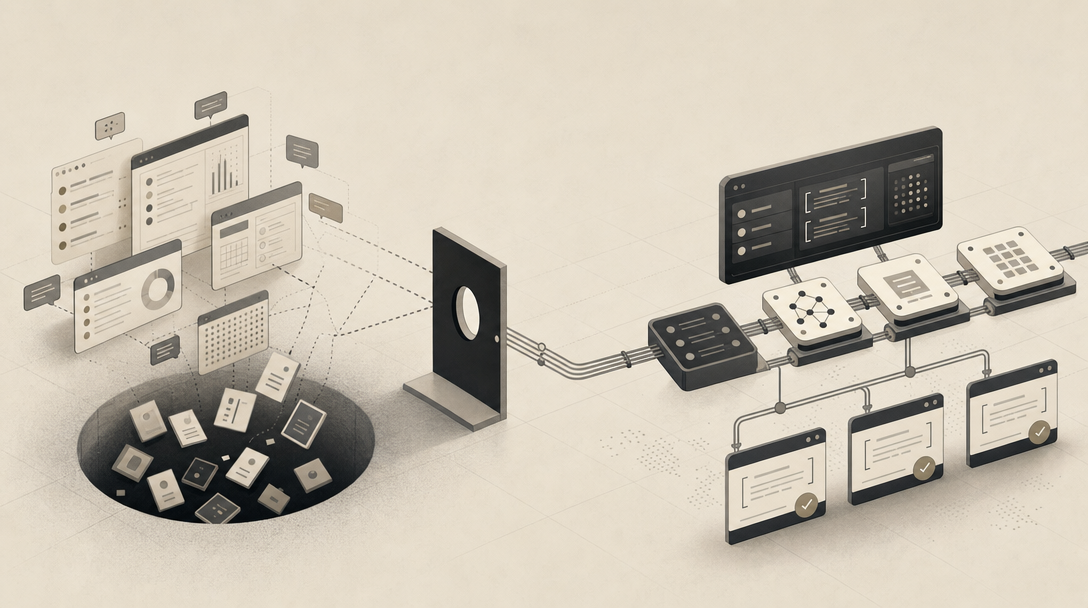

Cheap GEO tools sell a believable promise: pay $29/month, $49/month, or sometimes nothing, and watch a dashboard tell you whether ChatGPT mentions your brand. The problem is not the price — it is what the price buys.

According to AI Search Tools, most low-cost platforms run a simple loop: send a prompt to one or two AI models, check whether the brand appears, log the result. What they rarely tell you is which prompts they sampled, how often, against which model versions, or why a competitor was cited instead of you. That gap between "we saw a mention" and "here is what to do about it" is where the real cost shows up — in strategic mistakes that AI Search Tools categorizes as optimizing for the wrong prompts, missing competitor gaps, and publishing content the models never cite.

The due-diligence questions cheap dashboards usually fail:

- How many prompts are tested per topic, and how often are they rerun?

- Which engines and which model versions are covered?

- Are results averaged across runs, or is the dashboard showing a single screenshot?

- Are cited URLs captured, or only brand-name mentions?

- Is there a path from a finding to a content fix?

The AI Search Tools comparison frames the 2026 market as "crowded with entry-level trackers that look similar on pricing pages but differ significantly in data quality, model coverage, and actionability." That last word — actionability — is the one that separates a $29 dashboard from a system you can run a content program on.
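One way to make those due-diligence questions concrete is to look at what a tool would have to store per sampled response in order to answer them. The sketch below is illustrative only: `SampledRun`, `audit_gaps`, and every field name are hypothetical, not any vendor's schema.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class SampledRun:
    """One AI response captured for one prompt (hypothetical record shape)."""
    prompt: str
    engine: str            # e.g. "chatgpt" or "perplexity"
    model_version: str     # version string as reported, stored verbatim
    sampled_at: datetime
    brand_mentioned: bool
    cited_urls: tuple[str, ...] = ()


def audit_gaps(runs: list[SampledRun]) -> list[str]:
    """Return the due-diligence questions this log cannot answer."""
    gaps = []
    if len({r.engine for r in runs}) < 2:
        gaps.append("only one engine covered")
    if any(not r.model_version for r in runs):
        gaps.append("model version missing on some runs")
    if len({r.sampled_at.date() for r in runs}) < 2:
        gaps.append("no reruns on separate days")
    if not any(r.cited_urls for r in runs):
        gaps.append("no cited URLs captured")
    return gaps
```

A log holding a single run, with no model version and no cited URLs, would trip all four checks. That is roughly what a single-screenshot dashboard amounts to.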

What separates monitoring-only GEO tools from full optimization platforms?

Monitoring tools tell you what is happening in AI answers. Optimization platforms change it. The decision criterion, as GEO Alliance puts it, is whether a tool can act on its findings or only report them.

Monitoring-only platforms — Otterly, Peec AI, Profound, AthenaHQ, Scrunch AI, Ahrefs Brand Radar, parts of Semrush, and Promptwatch — track prompts, mentions, share of voice, narrative tone, and competitor citations. That data is genuinely useful for diagnosis. According to GEO Alliance, Peec AI monitors ten AI engines and raised seven million euros within five months of its 2025 launch, which signals real demand for visibility dashboards specifically.

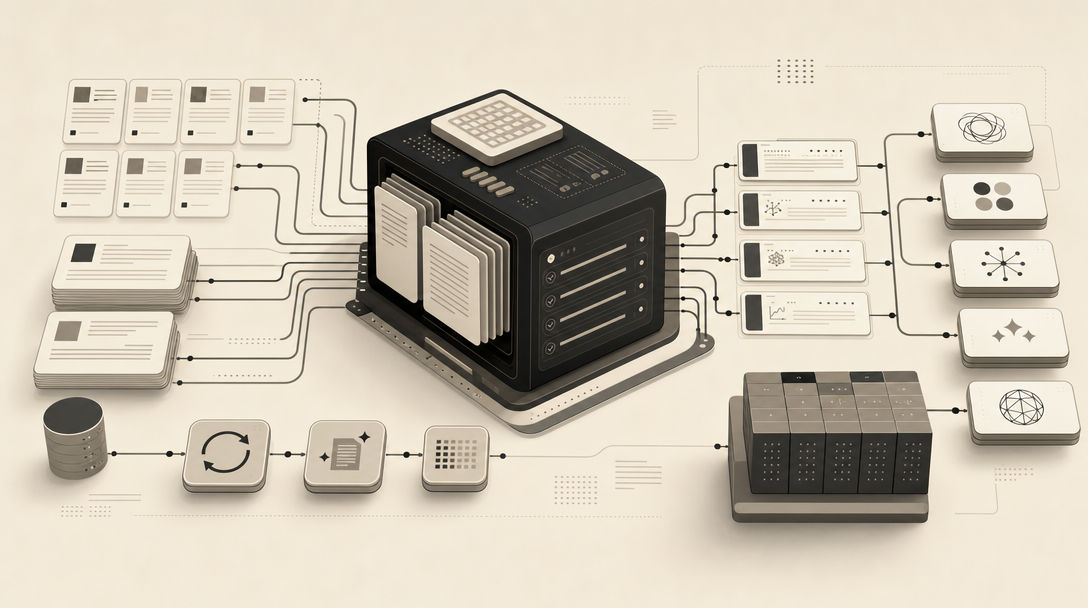

Full optimization platforms add the next four steps: answer gap analysis, citation-grounded content briefs, published pages, and refresh loops tied to page-level citation evidence. Senso.ai describes most teams ending up with a stack of complementary tools rather than one monolithic system, which matches the operational reality.

| Capability | Monitoring-only dashboards | Full optimization / content engines |
|---|---|---|
| Prompt and citation tracking | Yes | Yes |
| Share of voice, narrative tone | Yes | Sometimes |
| Answer gap analysis tied to briefs | Rare | Yes |
| Citation-grounded drafts | No | Yes |
| CMS publishing (WordPress, Webflow, Shopify, headless) | No | Yes |
| Archive refresh workflow | No | Yes |
| Page-level citation attribution | Sometimes | Yes |
| Multi-site / agency operations | Limited | Yes |

Bluefish makes the same distinction more bluntly: tools like Profound and AthenaHQ help you see narrative tone and citation share, but they do not explain why an AI model generated a specific answer or how to influence it across engines. Diagnosis is a step. It is not the program.

If you want a content engine that turns AI visibility gaps into published, citation-ready pages instead of another screenshot dashboard, Get My Site GEO Optimized.

How should AI visibility be measured when outputs are probabilistic?

AI visibility should be measured as a frequency over many runs, not as a single rank in a single response. According to Plate Lunch Collective, running the same prompt 100 times can produce 100 different responses, which means a "rank" in an AI answer is not a stable position — it is a probability distribution.

The strongest data point on this comes from SparkToro and Carnegie Mellon University research published in January 2026: there is less than a 1-in-100 chance that ChatGPT or Google AI will produce the same brand recommendation list twice across 100 identical runs. Plate Lunch Collective also notes the AEO tool market has crossed $300M in venture funding while measurement methodologies are still being standardized — which is why vendor claims like "335% visibility increase" or "10x citation rate" are not directly comparable across tools.

What this means for measurement design:

- Prompt volume matters more than prompt cleverness. A handful of curated prompts run once is closer to anecdote than data. Useful tools run hundreds to thousands of prompts and report mention frequency over time.

- Engine coverage is non-negotiable. ChatGPT, Gemini, Claude, Perplexity, Meta AI, and Google AI Mode use different policies, source-weighting logic, and narrative patterns. Single-engine optimization is single-engine guessing.

- Rerun cadence beats screenshots. Daily or weekly resampling exposes variance. A monthly snapshot hides it.

- Model versions need to be tracked. A "GPT-4" result from March is not comparable to a "GPT-4o" result from November.

- Brand visibility is not category visibility. Plate Lunch Collective's research across 1,423 companies found brand visibility — does AI know you exist? — scores far higher than category visibility, which is whether AI recommends you in unbranded buyer queries like "best CRM for B2B SaaS." Category visibility is the harder, more commercially valuable metric.

- Cited URLs beat mention counts. A brand mention in a hallucinated paragraph is worth less than a quoted paragraph from a specific page on your domain. Source inclusion and page-level attribution — verifiable in GA4 referral data — are the metrics that tie GEO to revenue.

Treat AI visibility as a trend with a confidence level attached, not a number in a cell. If a vendor reports a precise rank without a sample size, a confidence interval, or a time window, the precision is theatre.
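To show what "a sample size and a confidence interval" could look like in practice, here is a minimal sketch that turns mention counts into a rate with a Wilson score interval. The function name and the 95% default are assumptions chosen for illustration. None of the sources cited here prescribe this particular method.

```python
import math


def mention_rate_interval(mentions: int, runs: int, z: float = 1.96) -> tuple[float, float, float]:
    """Return (rate, low, high) for a brand's mention frequency.

    Uses the Wilson score interval; z=1.96 is roughly 95% confidence.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0 <= mentions <= runs:
        raise ValueError("mentions must be between 0 and runs")
    p = mentions / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return p, max(0.0, centre - half), min(1.0, centre + half)


# Same observed rate, very different certainty:
small = mention_rate_interval(3, 10)     # 3 mentions in 10 runs
large = mention_rate_interval(30, 100)   # 30 mentions in 100 runs
```

Both calls report a 30% mention rate, but the interval from 10 runs is far wider than the one from 100. That is the practical reason prompt volume and rerun cadence matter more than any single reading.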

How to choose a GEO tool: the six-check due-diligence scorecard

Score every GEO platform on six checks before you sign a contract: sampling methodology, multi-engine coverage, brand-vs-category metrics, cited-source evidence, action-loop depth, and CMS refresh workflow. The same scorecard works on Topify, Rankability, Naridon, Frase, Surfer, Writesonic, Mentio, Goodie AI, Geoptie, LLMrefs, Gauge, Profound, AthenaHQ, Scrunch AI, Peec AI, Otterly, Ahrefs Brand Radar, Semrush, or anything else on a GEO Alliance or AI Search Tools comparison page.

| Check | What to ask the vendor | What "good" looks like |
|---|---|---|
| 1. Sampling methodology | How many prompts per topic? Rerun cadence? Averaging window? | Hundreds of prompts per topic, weekly or better resampling, results averaged over a defined window |
| 2. Multi-engine coverage | Which engines and model versions are tested? | ChatGPT, Gemini, Claude, Perplexity, Meta AI, Google AI Mode, with versioning |
| 3. Brand vs category metrics | Do you report both, separately? | Yes, with category visibility called out as the harder metric |
| 4. Cited-source evidence | Are cited URLs captured per response? | Page-level URLs, not just brand-name mentions |
| 5. Action-loop depth | Can the tool generate briefs, drafts, and published pages? | Answer gap → brief → draft → CMS publish → refresh |
| 6. CMS refresh workflow | How does the tool handle archive refreshes? | Native publishing into WordPress, Webflow, Shopify, or headless, with refresh governance |

A tool can pass checks 1–4 and still fail you — that is the line between monitoring and optimization. Checks 5 and 6 are where most "GEO platforms" stop being platforms and start being dashboards.

A vendor that cannot describe its sampling methodology in concrete numbers is not selling data — it is selling vibes in a chart. Apply the same skepticism to claims like "10x citation rate." Ask: ten times what baseline, measured how, across how many runs, on which engines, between which dates? If the answers are not on the page, the number is not a number.
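The scorecard can be written down as a small function so that every vendor is scored the same way. This is a sketch under stated assumptions: the check identifiers, the three verdict labels, and the pass/fail simplification are all illustrative choices, not an industry standard.

```python
# The six checks, in scorecard order (identifiers are hypothetical).
CHECKS = (
    "sampling_methodology",
    "multi_engine_coverage",
    "brand_vs_category_metrics",
    "cited_source_evidence",
    "action_loop_depth",
    "cms_refresh_workflow",
)


def classify(results: dict[str, bool]) -> str:
    """Map pass/fail results for the six checks to a rough verdict."""
    missing = [c for c in CHECKS if c not in results]
    if missing:
        raise ValueError(f"unscored checks: {missing}")
    measurement_ok = all(results[c] for c in CHECKS[:4])  # checks 1-4
    action_ok = all(results[c] for c in CHECKS[4:])       # checks 5-6
    if measurement_ok and action_ok:
        return "optimization platform"
    if measurement_ok:
        return "monitoring dashboard"
    return "unverified data"
```

A vendor that passes checks 1 to 4 but fails 5 and 6 comes out as a "monitoring dashboard". Any failure in the first four checks is treated as disqualifying here, because nothing downstream can be trusted if the measurement is weak.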

What is Multi-Platform Strategy in GEO?

Multi-platform strategy is the coordinated creation, optimization, and distribution of content across ChatGPT, Claude, Perplexity, Gemini, Google AI Overviews, Google AI Mode, Meta AI, and emerging systems — designed to maximize visibility, citations, and authority across all of them simultaneously. The Generative Engine Optimization Knowledge Base defines it that way, and Bluefish reinforces why it matters: each engine has different policies, different source-weighting logic, and different narrative patterns.

Texta.ai's recommendation is the practical one: most of the work — roughly 80–90% — should go into a strong universal foundation: content quality, structured answers, entity clarity, schema, internal links, and technical fundamentals. Platform-specific tweaks (Bing indexation for Copilot, X presence for Grok, Reddit and forum signals for Perplexity) layer on top of that foundation. They do not replace it.

Single-engine optimization is brittle. A page that ranks beautifully in Perplexity but never gets cited in ChatGPT is a page that depends on one retrieval pipeline staying the same — and these pipelines are changing every quarter.

Are tools like Profound or AthenaHQ full GEO solutions?

No. Profound, AthenaHQ, Ahrefs Brand Radar, Scrunch AI, Semrush, and Surfer-style trackers are useful for visibility diagnosis — they show prompts, mentions, citation share, and competitor narratives. What none of them do is publish the citation-ready pages an AI engine needs to find before it can cite you.

That gap is well documented across our prior breakdowns: "Profound Is Worth a Billion Dollars. It Still Won't Write You a Single Article"; "Ahrefs Brand Radar Costs $828/Month Before a Single Article Gets Written"; "Scrunch Is an AI Visibility Command Center. MentionWell Is an AI Visibility Engine"; "Semrush Treats AI Visibility Like a Line Item"; and "Surfer Added GEO Tracking as a Checkbox." The same logic separates AEO, GEO, and LLMO workflows.

Diagnosis tools belong in the stack. They just do not finish the job.

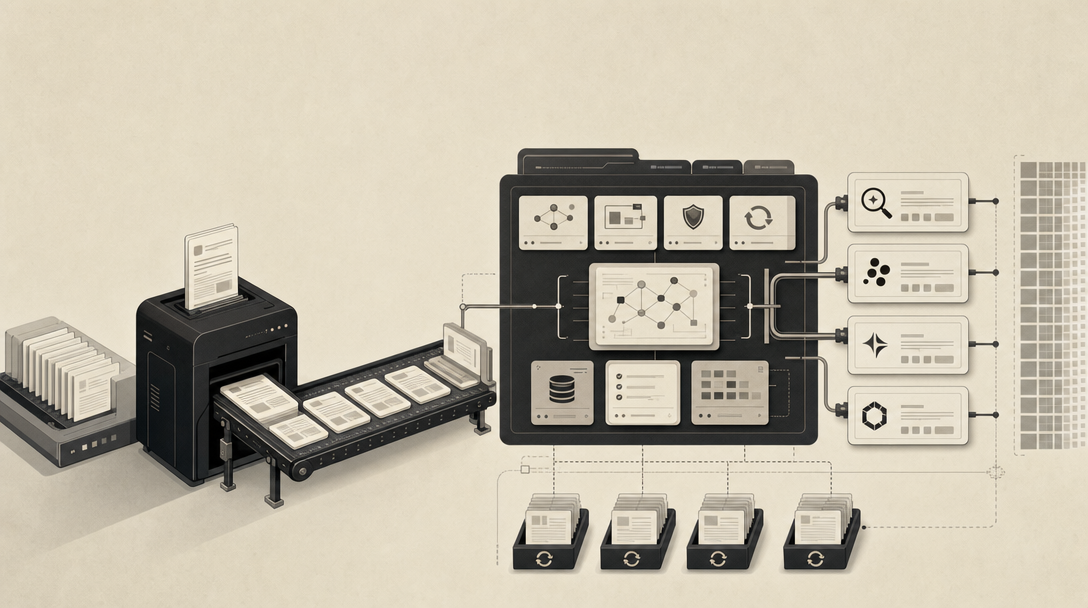

How do you do Generative Engine Optimization as a publishing workflow?

GEO is a publishing operation, not a checklist. The workflow has ten repeatable stages:

- Identify answer gaps. Sample prompts across your category and competitors. Find the queries where AI answers cite competitors and not you.

- Prioritize category and comparison queries. Plate Lunch Collective's 1,423-company research is clear: category visibility on unbranded queries is the commercial metric. Branded mention counts are a vanity layer.

- Build briefs. Each brief specifies the question, the expected direct answer, the supporting entities, the citable statistics, and the schema.

- Create glossary pages and comparison pages. Geoseoguide recommends 100–200 word overviews per item in listicles, and quarterly refreshes.

- Verify entities. First-mention proper nouns, consistent naming, and disambiguation against same-name competitors.

- Ground claims in citations. Every statistic attributed to a named source. No "studies show."

- Add schema. Article, FAQPage, HowTo, Organization, Product — whichever fits the page type.

- Strengthen internal links. Build topical clusters around glossary terms (see "What Is GEO in 2026," "What Is AEO in 2026," "What Is LLMO in 2026," and "What Is AI SEO in 2026") so AI engines see a coherent topical entity.

- Publish through your existing CMS. WordPress, Webflow, Shopify, or headless — the workflow has to fit the stack, not replace it.

- Track page-level citation evidence and refresh quarterly. Stale comparison content drops out of AI answers fast.
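For the schema stage, a page's question-and-answer pairs can be emitted as schema.org `FAQPage` JSON-LD. The sketch below is a minimal example, assuming the page content is already available as (question, answer) tuples. The helper name `faq_jsonld` is hypothetical, and the sample pair is taken from this article.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


markup = faq_jsonld([
    ("Does GEO replace SEO?",
     "No. GEO, AEO, and LLMO are additive layers, not substitutes."),
])
```

The resulting string would go inside a `<script type="application/ld+json">` element in the page. Whether a given AI engine actually uses the markup is not something this sketch can confirm.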

The risk in programmatic GEO is the same risk as any templated content operation: thin pages, duplicate patterns, hallucinated entities, ungrounded claims. The quality controls that prevent that are entity verification, source grounding, duplicate-pattern detection, editorial review on every page, and refresh governance that retires or rewrites stale assets on a schedule.
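Duplicate-pattern detection is the easiest of those controls to prototype. One common approach, used here as an assumption rather than as any platform's documented method, is to compare pages by word shingles and Jaccard similarity. The function names and the 0.6 threshold are illustrative, and the threshold would need tuning on real content.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word sequences in the lowercased text."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles (0.0 if both empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.6) -> list[tuple[str, str, float]]:
    """Flag page pairs whose shingle overlap meets the threshold."""
    sets = {slug: shingles(body) for slug, body in pages.items()}
    flagged = []
    for (slug_a, set_a), (slug_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            flagged.append((slug_a, slug_b, round(score, 2)))
    return flagged
```

Two templated pages that differ only in the brand name would score high and get flagged for editorial review, while pages that are genuinely distinct would not. This catches surface repetition only. It says nothing about hallucinated entities or ungrounded claims, which still need source checks.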

This is the operational layer MentionWell is built for: a blog engine that runs onboarding, site profile, brief generation, citation-grounded drafting, CMS publishing, and archive refreshes as one pipeline across one site or hundreds. It is not a writing tool. It is the system that turns AEO, GEO, LLMO, and SEO into a single editorial workflow you can audit.

How long should teams expect GEO changes to take?

Faster than SEO in some engines, slower in others, and never guaranteed. According to Geoseoguide, Perplexity may reflect content changes within days, ChatGPT may take weeks to months, and teams should plan for 3–6 months to see meaningful results across all platforms.

A practical cadence: publish glossary and comparison pages on a steady schedule, refresh listicles and ranked comparisons quarterly, and resample your tracked prompts weekly or better. No GEO platform — including ours — can guarantee a citation. What it can do is improve the conditions under which citations happen, then measure whether they do.

If you want a content engine that runs that loop end-to-end across AEO, GEO, LLMO, and SEO — onboarding, briefs, citation-grounded drafts, CMS publishing, and quarterly refreshes — Get My Site GEO Optimized and we will show you what a citation-shaped pipeline looks like on your domain.

Sources

- The Problem with GEO & AI Prompt Visibility - LinkedIn (www.linkedin.com)

- GEO vs SEO: Why Your Content Needs to Impress AI Now (www.yellowduckmarketing.com)

- 7 hard truths about measuring AI visibility and GEO performance (searchengineland.com)

- Why Ignoring AI Search Visibility in 2026 Is Your Firm's Most ... (www.attorneyjournals.com)

- AEO & GEO Tools: 52 AI Search Visibility Platforms Compared (www.platelunchcollective.com)