What does "BrandWell was built for Google" mean?

BrandWell was built for Google because its documented foundations are long-form SEO content, keyword-driven ranking workflows, and traditional search visibility — not citation in AI answer engines. Read BrandWell's own pages and the lineage is consistent: a long-form content generator that grew into a brand-growth platform optimized to "rank at the top of Google" (Source: BrandWell). That heritage is real, deep, and genuinely useful — for the search world it was designed to win.

The problem is that the search world changed. The visibility surface for B2B content now spans ChatGPT, Google AI Overviews, Gemini, Claude, Perplexity, and Microsoft Copilot, and each one decides which sources to cite using its own retrieval and ranking logic. A page that ranks at position #3 in classic Google results is not automatically a page ChatGPT will quote, and a page Perplexity cites is not necessarily one Gemini surfaces.

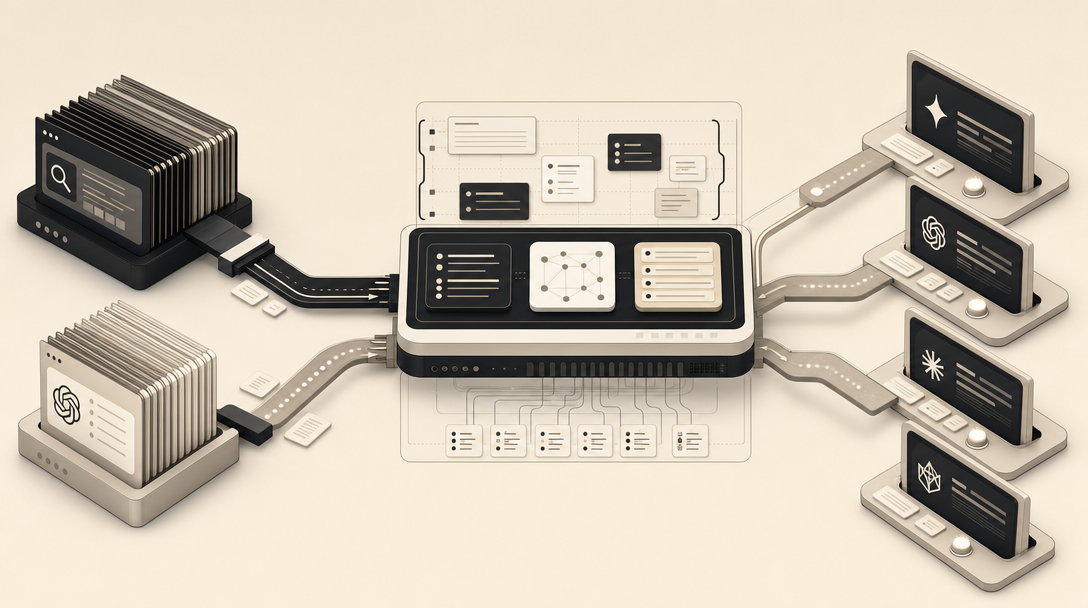

That is the gap MentionWell is built into. Where BrandWell's roots are RankWell projects, WriteWell drafts, Google Search Console integration, and WordPress and Shopify publishing aimed at SERP outcomes, MentionWell is a blog engine built around AEO, GEO, LLMO, and SEO as four distinct optimization layers shipped on every article. The distinction is not "better writing." It is which destination each system was architected for.

What was BrandWell originally built to do as Content at Scale?

BrandWell began life as Content at Scale, a long-form AI writing platform built in 2021 to help marketers produce SEO content that could rank in Google. PRWeb's announcement of the rebrand confirms the 2021 origin and the original mandate: scale content output while preserving ranking quality (Source: PRWeb). BrandWell's About page adds that the underlying technology took the equivalent of six full-time developers over a year to build, and that the product launched in September 2022 — two months before ChatGPT's November 30, 2022 release (Source: BrandWell).

That timing matters. Content at Scale was conceived in a pre-ChatGPT world where the only meaningful destination for a 3,000-word blog post was Google's blue links. The early product reflected that: proprietary long-form writing, a RankWell project structure for keyword-led briefs, WriteWell as the drafting surface, and direct integrations with Google Search Console, WordPress, and Shopify (Source: BrandWell Help). According to BrandWell's Anyword comparison, the platform started as a long-form content generator and later expanded to include over 40 specialized AI agents plus the AIMEE conversational chatbot (Source: BrandWell).

The launch story on BrandWell's own blog explains why the name eventually changed: the team decided "Content at Scale" no longer fit because they wanted to move beyond optimized content into helping customers grow a brand (Source: BrandWell). That is an honest description of a platform whose center of gravity was, and largely remains, ranked content production.

Was BrandWell built for SEO rankings, brand growth, intent-led marketing, or all three?

BrandWell has been positioned as all three at different points, and the inconsistency itself is the most useful signal for buyers. A long-form SEO content platform, a brand growth engine, and an intent-led marketing system are three different products with three different operating models. Reading across BrandWell's own pages, you can see the platform layering each new positioning on top of the last.

Here is how the documented positioning has evolved:

| Era | Positioning | Documented features |
|---|---|---|
| 2021–2022 (Content at Scale) | Long-form AI SEO content | Proprietary long-form writer, keyword-led briefs, ranking focus |
| 2024 rebrand to BrandWell | Brand growth engine | Brand graph, link graph, old-content updating, optional link co-op |
| Latest "all-new BrandWell" | Intent-led marketing | Audiences, Content, AIMEE, Reporting, TrafficID, ChainAI |

BrandWell's Jasper comparison still describes it as "an all-in-one brand growth platform that focuses on long-form, SEO-driven content designed to rank at the top of Google" (Source: BrandWell). The Anyword comparison says BrandWell expanded to include over 40 specialized AI agents plus the AIMEE conversational chatbot (Source: BrandWell). The "Meet the all-new BrandWell" post frames the evolution around customer questions like "is the content working?" and "who is reading it?" (Source: BrandWell).

These are additive GTM and SEO layers. None of the public documentation describes a dedicated AEO, GEO, or LLMO citation architecture — the structures, schema, and crawl surfaces specifically engineered so that ChatGPT, Claude, Perplexity, Gemini, Copilot, and Google AI Overviews can quote a page. That is a different category of work, and it does not appear in the BrandWell stack as documented.

Why is BrandWell better than ChatGPT for SEO?

BrandWell is better than ChatGPT for SEO because BrandWell is a full SEO content platform engineered for long-form ranked content, while ChatGPT is a general-purpose chatbot built for shorter assisted writing tasks. BrandWell's own side-by-side page makes the point directly: BrandWell is built for long-form, optimized content production, while ChatGPT is "more of a general-purpose chatbot that can help with short text" (Source: BrandWell).

That framing was accurate when ChatGPT was a chat window. It is incomplete now. According to a LinkedIn post from Jeff Joyce, OpenAI launched OpenAI Atlas, an AI-powered browser built around ChatGPT that does not return a traditional search results page — users ask a question and the answer is generated, with citations pulled from the web (Source: LinkedIn / Jeff Joyce). The destination has shifted. The relevant SEO question is no longer only "does my page rank?" but "does ChatGPT quote my page when a user asks a question my content answers?"

That is a different optimization problem. Ranking #1 in Google does not guarantee inclusion in a ChatGPT answer, and being cited by Perplexity does not require a top-three blue-link position. The "BrandWell vs ChatGPT for SEO" comparison answers the 2022 question. The 2026 question is which engine your content is engineered to be cited inside.

How do you use BrandWell to create content at scale?

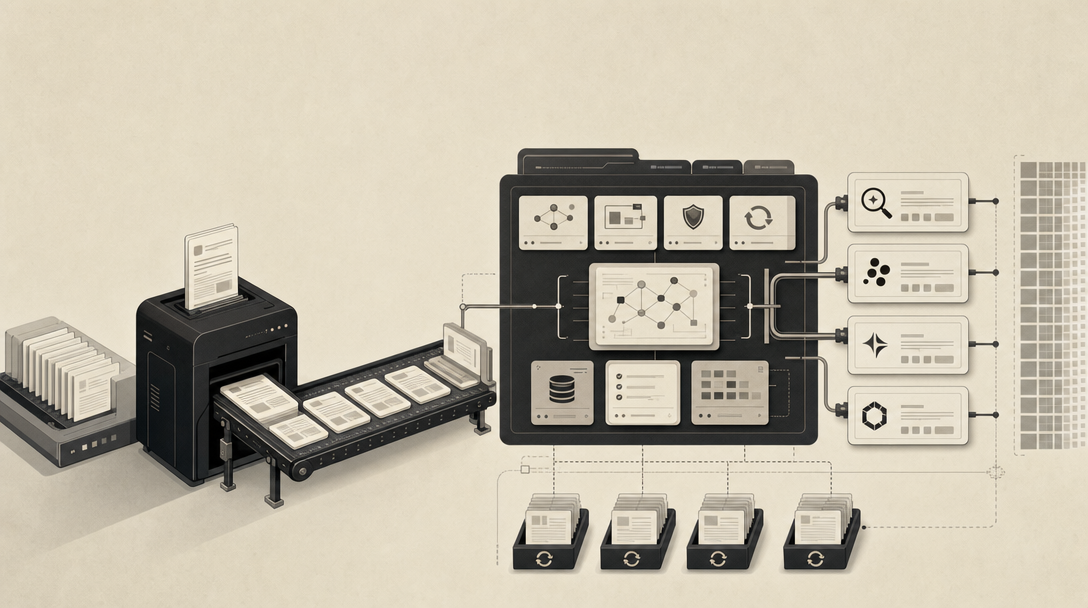

Using BrandWell to create content at scale is a five-stage human-led workflow, not a fully autonomous pipeline. BrandWell's own process article documents the steps clearly (Source: BrandWell):

- Ideation — a writer or strategist defines topics and angles

- Delegation — work is assigned within the team

- Outlining, writing, and research — the AI drafts, the writer drives

- Editing and optimizing — humans review, edit, and tune for SEO

- Final polishes and publishing — last passes before WordPress or Shopify

The workflow article is explicit that "a human writer drives the tool" and reviews every output before publishing (Source: BrandWell). That is a healthy editorial principle, and it is also a meaningful constraint: under this operating model, scale is bounded by writer hours.

For agencies and multi-site operators running content across dozens of domains, the math gets uncomfortable. Five-stage human-led production multiplied across sites does not behave like infrastructure — it behaves like a services line. BrandWell's help docs list useful operational features (Google Search Console integration, custom tone of voice, content calendar, post statuses, language support, API access) that reduce friction inside that workflow, but they do not change the fundamental shape of it.

What does it mean to say MentionWell was built for ChatGPT and answer engines?

MentionWell was built for ChatGPT and answer engines because every article is engineered as a citation-shaped publishing unit, not as a Google-ranking artifact that happens to be readable. MentionWell describes itself as the blog that gets cited by Google AI Overviews, Gemini, and the rest of the answer-engine surface: you drop in a domain, and MentionWell researches competitors, writes the post, generates the structure, and publishes it (Source: MentionWell).

The system is documented at the operational layer. Every site gets its own brand profile, taxonomy, image style, and reader API. Every article runs through an 11-stage pipeline, and every article ships with four optimization layers: AEO for answer engines, GEO for generative engines, LLMO for LLM crawlers, and classic SEO for blue-link search (Source: MentionWell Features).

The technical surface is built for AI ingestion, not just for human readers:

- `llms.txt` and `llms-full.txt`: the crawler files AI systems use to discover and parse site content

- Markdown-source endpoints: clean, parseable versions of every article

- ChatGPT-, Claude-, and Perplexity-friendly summaries: direct-answer extracts engineered for retrieval

- RSS, JSON Feed, sitemap, and FAQ schema: structured ingestion surfaces

- `mentionwell-reader` on npm: a public reader package for headless and multi-site delivery
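To make the first of those surfaces concrete, here is a minimal Python sketch of an `llms.txt` file, assuming the layout from the community proposal at llmstxt.org: an H1 site name, a blockquote summary, and H2 sections listing Markdown links with optional notes. The `Page` class, the `build_llms_txt` function, the site name, and the URLs are hypothetical illustrations; the source does not document how MentionWell generates its own files.

```python
from dataclasses import dataclass


@dataclass
class Page:
    title: str
    url: str        # hypothetical URL of a Markdown-source copy of the article
    note: str = ""  # optional one-line description shown after the link


def build_llms_txt(site_name: str, summary: str, sections: dict[str, list[Page]]) -> str:
    """Render an llms.txt body: H1 name, blockquote summary, H2 link sections."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, pages in sections.items():
        lines += [f"## {heading}", ""]
        for page in pages:
            entry = f"- [{page.title}]({page.url})"
            if page.note:
                entry += f": {page.note}"
            lines.append(entry)
        lines.append("")  # blank line closes each section
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical site and article paths, for illustration only.
    print(build_llms_txt(
        "Example Blog",
        "Long-form articles on answer-engine optimization.",
        {"Articles": [Page("What Is AEO?", "https://example.com/blog/what-is-aeo.md",
                           "Definition and workflow")]},
    ))
```

Linking each entry to a Markdown-source copy rather than the rendered HTML, as assumed here, is what pairs `llms.txt` with markdown endpoints.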

MentionWell's About page states the underlying premise: the search surface is fragmenting across Google AI Overviews, ChatGPT, Claude, Gemini, Perplexity, and Microsoft Copilot, and each system has its own way of choosing what to cite (Source: MentionWell). A platform built for one destination cannot serve six. MentionWell is built for the fragmented destination.

How do AEO, GEO, LLMO, and SEO change the publishing workflow?

AEO, GEO, LLMO, and SEO are four distinct optimization disciplines, and collapsing them into "AI SEO" hides the operational differences that determine whether a page actually gets cited. Each one shapes a different part of the publishing workflow.

| Layer | What it optimizes for | Workflow implication |
|---|---|---|
| SEO | Classic Google blue-link ranking | Keyword targeting, internal linking, on-page structure, backlinks |
| AEO | Answer engines like ChatGPT, Perplexity, Copilot citing your page | Direct-answer openings, FAQ schema, citable phrases, entity clarity |
| GEO | Generative engines like Google AI Overviews and Gemini including you in synthesized answers | Sourceable claims, attributed statistics, structured comparisons |
| LLMO | Large language models discovering and indexing your brand and content | llms.txt, markdown endpoints, entity consistency, archive refreshes |

A traditional SEO workflow ends at "publish the article and build links." An AEO/GEO/LLMO workflow continues: every claim needs an attributable source, every section needs a self-contained direct answer, every entity needs its full proper name on first mention, and the site itself needs crawlable surfaces that AI systems actually read. Refreshes matter more, because AI systems re-crawl and re-index content on a rolling cadence, and stale pages can drop out of the citation pool.
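The FAQ schema in the AEO row is a schema.org `FAQPage` object embedded in the page as JSON-LD. A minimal Python sketch of that shape follows, assuming one accepted answer per question; the function names and the sample question are hypothetical, and this shows the general schema.org structure, not any vendor's generator.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element used to embed it in a page's HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical Q&A pair reusing an article heading and its direct-answer opening.
    print(script_tag(faq_jsonld([
        ("What is AEO?",
         "AEO optimizes content so answer engines such as ChatGPT and Perplexity cite it."),
    ])))
```

Reusing the article's visible direct-answer text as the `Answer` keeps markup and copy in sync; Google's structured-data guidelines ask that FAQ markup match content shown on the page.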

For deeper coverage, see "AEO vs GEO vs LLMO: Which Workflow Fits Your Team?", "What Is AEO in 2026?", "What Is GEO in 2026?", and "What Is LLMO in 2026?".

Can I access BrandWell's API? How publishing architecture differs

BrandWell offers API access as a help-center FAQ item; MentionWell is built around a public reader API and headless delivery as a first-class architecture. That is the operational fork in the road for teams choosing between the two.

BrandWell's documented stack is centered on direct CMS publishing: Google Search Console integration, custom tone of voice, content calendar, post statuses, multi-language support, and API access listed alongside features like RankWell project setup (Source: BrandWell Help). The integrations of record are WordPress and Shopify. That is the right architecture for a single-site marketing team running an SEO blog.

MentionWell's architecture is shaped for a different problem — the same content needing to live in a CMS, a headless front-end, an AI crawler's index, and an answer engine's retrieval cache simultaneously:

- Reader API: every article addressable as structured data

- `mentionwell-reader` npm package: drop-in headless rendering

- RSS, JSON Feed, sitemap: multiple syndication surfaces

- FAQ schema: pre-built for AI extraction

- `llms.txt` and `llms-full.txt`: explicit crawler discovery

- Markdown-source endpoints: clean parseable copies for LLM ingestion
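Of the syndication surfaces listed, JSON Feed has a short public spec, so it makes a compact example. This Python sketch emits a JSON Feed 1.1 document from hypothetical article records with `url`, `title`, `summary`, and `published` fields; it is not the interface of MentionWell's reader API or of the `mentionwell-reader` package, neither of which the source documents.

```python
import json
from datetime import datetime, timezone


def json_feed(title: str, home_url: str, feed_url: str, articles: list[dict]) -> str:
    """Render a JSON Feed 1.1 document from hypothetical article records."""
    items = [
        {
            "id": a["url"],  # canonical URL doubles as a stable item ID
            "url": a["url"],
            "title": a["title"],
            "content_text": a["summary"],
            # isoformat() on a timezone-aware datetime yields an RFC 3339 timestamp
            "date_published": a["published"].isoformat(),
        }
        for a in articles
    ]
    feed = {
        "version": "https://jsonfeed.org/version/1.1",
        "title": title,
        "home_page_url": home_url,
        "feed_url": feed_url,
        "items": items,
    }
    return json.dumps(feed, indent=2)


if __name__ == "__main__":
    # Hypothetical article record, for illustration only.
    print(json_feed(
        "Example Blog",
        "https://example.com/",
        "https://example.com/feed.json",
        [{
            "url": "https://example.com/blog/what-is-geo",
            "title": "What Is GEO?",
            "summary": "A direct-answer summary of the article.",
            "published": datetime(2026, 1, 15, 9, 0, tzinfo=timezone.utc),
        }],
    ))
```

Using the canonical URL as each item's `id` follows the spec's suggestion that an ID stay unique and stable for the item over time.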

For background on the crawler surface, see What Is LLMs.txt in 2026? The AI Crawler File Explained.

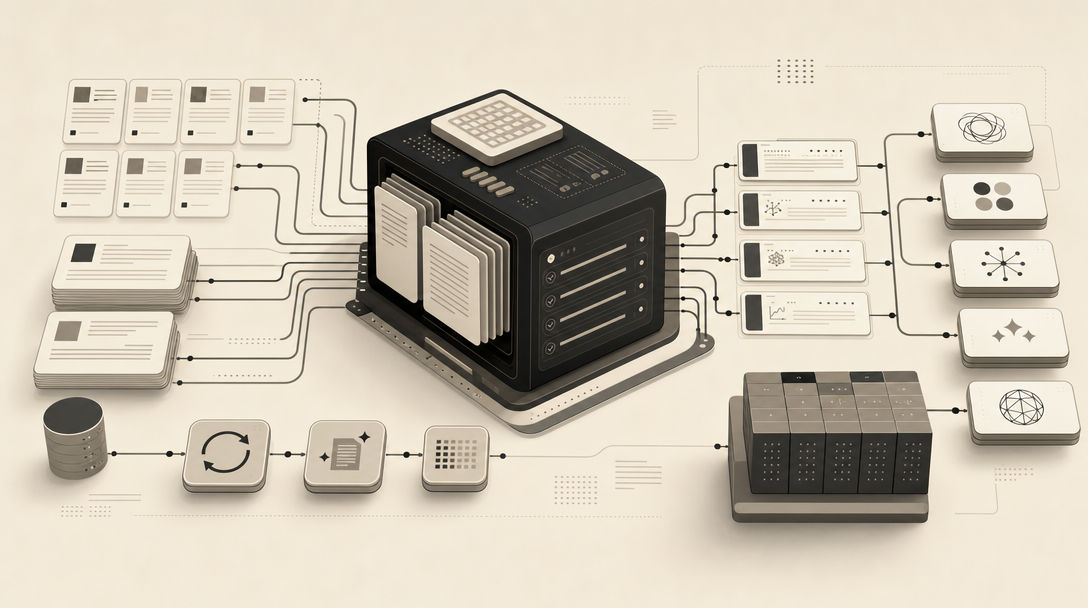

BrandWell's five-stage process vs MentionWell's 11-stage pipeline

MentionWell's 11-stage pipeline is engineered for citation-ready output across one site or hundreds, while BrandWell's five-stage human-led process is engineered for editorial quality on a single SEO blog. That is the operating-model difference, not a quality verdict.

| Dimension | BrandWell | MentionWell |
|---|---|---|
| Stages | 5 (ideation → publish) | 11 |
| Optimization layers | SEO + brand voice | AEO + GEO + LLMO + SEO |
| Operating mode | Human writer drives the tool | Pipeline runs end-to-end |
| Primary destination | Google rankings | AI answer-engine citations + classic SEO |
| Publishing surface | WordPress, Shopify | Reader API, headless, RSS, JSON Feed, llms.txt |
| Scale unit | Per-writer throughput | Per-domain pipeline throughput |
| Multi-site fit | Per-account workflow | Brand profile per domain, repeatable across sites |

This is not a quality argument — BrandWell-produced content is real long-form SEO content. It is an architecture argument. If your job is publishing one polished SEO article per week on one domain, the five-stage process is appropriate. If your job is publishing programmatic SEO clusters across a dozen client sites with AEO, GEO, LLMO, and SEO running on every page, the 11-stage pipeline is the operational fit.

Is there public evidence that either platform earns AI-generated citations?

No. Neither BrandWell nor MentionWell publishes third-party verified evidence that their content consistently earns citations inside ChatGPT, Perplexity, Claude, Gemini, Copilot, or Google AI Overviews. What the public corpus documents is positioning, workflows, and technical infrastructure, and buyers should evaluate both platforms with that limit in mind.

What you can verify directly, on any candidate platform:

- Crawl surfaces: does the platform expose `llms.txt`, `llms-full.txt`, and markdown endpoints?

- Indexability: does robots.txt leave pages open to AI crawlers (GPTBot, ClaudeBot, PerplexityBot) and to the Google-Extended token?

- Citation-ready structure: do articles open with direct answers, use FAQ schema, and attribute statistics?

- Published examples: can the vendor show live URLs and where those URLs are appearing in AI answers?

- Refresh cadence: does the system refresh archives on a documented schedule?

These are observable, not promised. A platform's citation architecture should be inspectable from the outside; if it isn't, treat citation claims as marketing.
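The indexability check is the easiest item to run yourself. This Python sketch uses the standard library's `urllib.robotparser` to test whether a robots.txt body permits each of the four tokens from the checklist to fetch a given URL; the sample robots.txt and URL are hypothetical. Google-Extended is a robots.txt control token rather than a separate fetcher, and a permissive robots.txt only shows that access is allowed, not that any engine actually crawls or cites the page.

```python
from urllib.robotparser import RobotFileParser

# Robots.txt user-agent tokens named in the checklist.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def ai_crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return {agent: allowed} for each AI token under the given robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() takes the file as a list of lines
    return {agent: parser.can_fetch(agent, url) for agent in AI_AGENTS}


if __name__ == "__main__":
    # Hypothetical robots.txt that blocks GPTBot and allows every other agent.
    sample = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nAllow: /\n"
    for agent, allowed in ai_crawler_access(sample, "https://example.com/blog/post").items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Against a live site you would call `set_url()` and `read()` on the parser instead of `parse()`, which fetches the real file over the network.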

When should an agency or multi-site operator choose a blog engine?

Choose a blog engine when the job is repeatable, citation-ready publishing across multiple domains — and choose a content platform when the job is editorial production on a single brand. The decision turns on operating model, not feature checklist.

A blog engine fits when your team needs:

- AEO, GEO, LLMO, and SEO running on every article without manual layering

- Brand-consistent output across many domains via per-site profiles and taxonomies

- Programmatic SEO clusters with editorial controls, not templated thin content

- Headless delivery, reader APIs, and crawler files for AI ingestion

- Archive refreshes on cadence, not as one-off projects

- Pipeline throughput that scales without linear writer headcount

A long-form SEO content platform like BrandWell fits when your team needs:

- Editorial long-form drafts with human-driven review on each piece

- Direct WordPress or Shopify publishing on a single brand

- Brand-growth and intent-led GTM features layered alongside content

- Workflow tools sized for a single-team marketing org

For agencies running content across client portfolios, and for B2B SaaS teams that need pages cited in AI answers rather than just ranked, the operating model that scales is a blog engine with AEO, GEO, LLMO, and SEO built into the pipeline. See related comparisons: "Scrunch Is an AI Visibility Command Center. MentionWell Is an AI Visibility Engine." and "Semrush Treats AI Visibility Like a Line Item."

If your content needs to show up in ChatGPT, Google AI Overviews, Gemini, Claude, Perplexity, and Copilot, not just rank in classic Google, drop in your domain and let MentionWell run the citation-shaped pipeline across it.

Sources

- BrandWell vs ChatGPT - Side by Side Comparison (brandwell.ai)

- 25x Your Brand Presence & DOMINATE Online With AI (LIVE w… (www.youtube.com)

- About BrandWell (brandwell.ai)