What does "close the loop" mean for HeyAmos and Mentionwell?

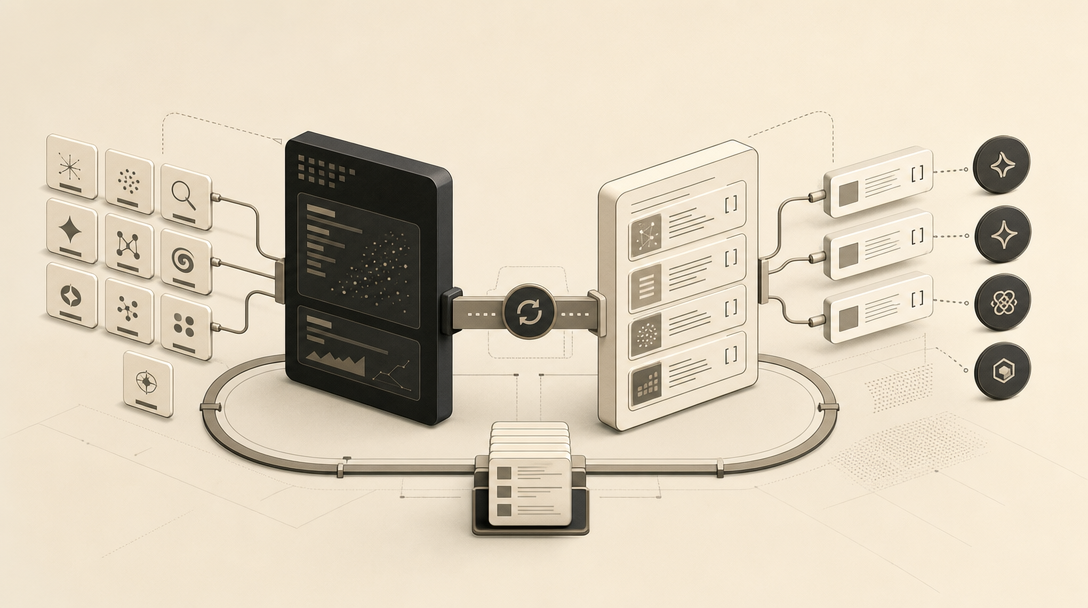

Closing the loop in AI search means moving from observed signal — prompts, citations, mentions, and gaps in ChatGPT, Gemini, Perplexity, and adjacent surfaces — to shipped, crawlable, refreshable pages, and then back to measurement. Without that round trip, you have a dashboard or a draft, not a system.

HeyAmos and Mentionwell both claim to close that loop, but at different layers of the workflow. HeyAmos closes the loop on the visibility side: it monitors buyer prompts, surfaces the answers AI engines give, identifies competitor citations and blockers, and turns those gaps into prioritized recommendations and a content piece you can copy into a CMS. The next tracking cycle then measures impact. Mentionwell closes the loop on the publishing side: onboard a domain, generate a site profile, run every article through an 11-stage pipeline that ships AEO, GEO, LLMO, and classic SEO together, and deliver into existing CMS or headless stacks via four delivery modes and a reader API.

The "engine count" framing buyers reach for first — 10 versus 4 — is the wrong axis. The real comparison is workflow depth: where the loop starts, where it ends, and what it produces in between. One product is built to tell you what to write. The other is built to publish it in a citation-shaped form across one site or hundreds. Most serious operators end up needing both layers, not one or the other.

Is the "10 engines vs 4" comparison actually documented?

No. The "10 engines vs 4" shortcut is not supported by the public source material from either company, and treating it as a feature comparison conflates several different kinds of engine support. Coverage means something different at each layer of the stack.

The HeyAmos homepage explicitly names ChatGPT, Gemini, and Perplexity for monitoring and action workflows; broader coverage is described only as "and more." The Mentionwell homepage explicitly names ChatGPT, Claude, Gemini, Grok, and Perplexity as the citation targets for its blog engine. Several other engines and crawlers appear in Mentionwell's AEO and LLMO definitions — Google AI Overviews, Bing Copilot, Microsoft Copilot, GPTBot, ClaudeBot, PerplexityBot — but as optimization surfaces, not as monitored prompts.

The honest comparison is a coverage matrix that separates support type:

| Engine / Crawler | HeyAmos (monitoring named) | Mentionwell (citation target named) | Mentionwell (AEO/LLMO surface) |
|---|---|---|---|
| ChatGPT / ChatGPT Search | Yes | Yes | Yes |
| Claude | Not explicitly named | Yes | Yes (ClaudeBot) |
| Gemini | Yes | Yes | Yes |
| Grok | Not explicitly named | Yes | Yes |
| Perplexity | Yes | Yes | Yes (PerplexityBot) |
| Google AI Overviews | Not explicitly named | Implied via GEO | Yes |
| Bing Copilot | Not explicitly named | Not citation target | Yes |
| Microsoft Copilot | Not explicitly named | Not citation target | Yes |
| GPTBot | N/A (not a monitoring surface) | N/A | Yes |

The defensible reading of the public material is that HeyAmos names three engines for monitoring and Mentionwell names five for citation, with broader crawler and answer-engine coverage on the optimization side. Anyone selling a clean 10-versus-4 number is filling in details the source pages do not provide.
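One way to keep the support types from collapsing into a single headline number is to encode the matrix as data and count each column separately. A minimal TypeScript sketch, transcribing the table above; the type and helper names (`Support`, `CoverageRow`, `entry`, `countNamed`) are hypothetical, and "named" means only that a public page names the engine, not that anyone has verified the support.

```typescript
// "named": a public page names the engine for this kind of support.
// "not-named": the page does not name it, which is not the same as "unsupported".
type Support = "named" | "not-named" | "implied" | "n/a";

interface CoverageRow {
  engine: string;
  heyamosMonitoring: Support;
  mentionwellCitationTarget: Support;
  mentionwellOptimizationSurface: Support;
}

type SupportColumn = Exclude<keyof CoverageRow, "engine">;

const entry = (
  engine: string,
  heyamosMonitoring: Support,
  mentionwellCitationTarget: Support,
  mentionwellOptimizationSurface: Support,
): CoverageRow => ({ engine, heyamosMonitoring, mentionwellCitationTarget, mentionwellOptimizationSurface });

// "Not explicitly named" and "Not citation target" both map to "not-named".
const matrix: CoverageRow[] = [
  entry("ChatGPT / ChatGPT Search", "named", "named", "named"),
  entry("Claude", "not-named", "named", "named"),
  entry("Gemini", "named", "named", "named"),
  entry("Grok", "not-named", "named", "named"),
  entry("Perplexity", "named", "named", "named"),
  entry("Google AI Overviews", "not-named", "implied", "named"),
  entry("Bing Copilot", "not-named", "not-named", "named"),
  entry("Microsoft Copilot", "not-named", "not-named", "named"),
  entry("GPTBot", "n/a", "n/a", "named"),
];

// Counts one column at a time, so each kind of support keeps its own number.
function countNamed(rows: CoverageRow[], column: SupportColumn): number {
  return rows.filter((row) => row[column] === "named").length;
}

console.log(countNamed(matrix, "heyamosMonitoring")); // 3
console.log(countNamed(matrix, "mentionwellCitationTarget")); // 5
console.log(countNamed(matrix, "mentionwellOptimizationSurface")); // 9
```

None of the three counts is 10 or 4; each answers a different question about a different layer.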

What does HeyAmos track across ChatGPT, Gemini, and Perplexity?

HeyAmos is the visibility and action layer. According to the HeyAmos homepage, it monitors the prompts buyers are asking, shows the answers AI engines return, tracks brand appearance over time, and identifies what is driving or blocking visibility — then analyzes competitor citations and turns gaps into prioritized actions.

The most useful conceptual contribution from HeyAmos is the distinction between three outcome types:

- Citation — the AI engine links to your source and a user can click through. This is the closest analog to a traditional search result.

- Mention — your brand is referenced in the answer text without a link.

- No-link mention — same as a mention, with no obvious path back to your site.

These are not interchangeable. A citation creates a measurable click path; a mention creates only brand surface area. Optimizing for one without measuring the other produces misleading reports.
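The citation/mention split can be checked mechanically if you have the answer text and the source URLs an engine attached to it. A minimal TypeScript sketch under stated assumptions: `AiAnswer`, `Brand`, and `classify` are hypothetical, a citation is any attached URL on a brand-owned domain (or a subdomain of one), and any other answer that names the brand counts as a mention. HeyAmos does not publish how it separates a mention from a no-link mention, so this sketch does not attempt that split.

```typescript
interface AiAnswer {
  text: string;
  citedUrls: string[]; // source URLs the engine attached to the answer
}

interface Brand {
  name: string;
  domains: string[]; // brand-owned domains; subdomains count as owned too
}

type Outcome = "citation" | "mention" | "absent";

// Lower-cased hostname, or null when the string is not a parseable absolute URL.
function hostnameOf(url: string): string | null {
  try {
    return new URL(url).hostname.toLowerCase();
  } catch {
    return null;
  }
}

// True when the URL is on one of the brand's domains or a subdomain of one.
function isOwnedUrl(url: string, domains: string[]): boolean {
  const host = hostnameOf(url);
  if (host === null) return false;
  return domains.some((d) => {
    const domain = d.toLowerCase();
    return host === domain || host.endsWith("." + domain);
  });
}

function classify(answer: AiAnswer, brand: Brand): Outcome {
  if (answer.citedUrls.some((u) => isOwnedUrl(u, brand.domains))) {
    return "citation"; // a linked, clickable source on a brand-owned domain
  }
  // Naive case-insensitive substring match; a real check would need entity matching.
  if (answer.text.toLowerCase().indexOf(brand.name.toLowerCase()) !== -1) {
    return "mention"; // named in the answer text, but no owned link
  }
  return "absent";
}

const acme: Brand = { name: "Acme", domains: ["acme.com"] };
console.log(classify({ text: "Acme and Globex both offer this.", citedUrls: ["https://docs.acme.com/guide"] }, acme)); // citation
console.log(classify({ text: "Many teams pick Acme.", citedUrls: ["https://reviews.example/crm"] }, acme)); // mention
```

Reporting the two outcomes separately is the point: a rising mention count with a flat citation count means more brand surface area but no new click paths.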

The evidence gap to flag honestly: the public HeyAmos sources do not expose the scoring methodology, prompt sampling logic, prompt weighting, geographic segmentation, model versioning, or tracking-cycle cadence. That is a real question for buyers running monthly reporting against AI-search investment, and one the public pages do not yet answer.

How does HeyAmos move from visibility data to content drafts?

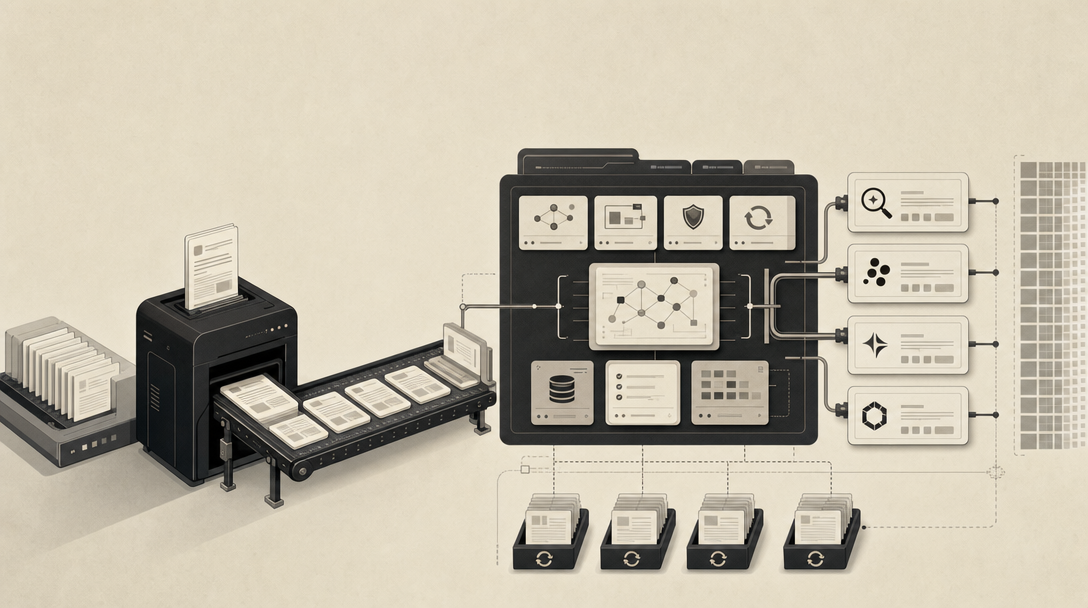

HeyAmos describes a six-step loop on its homepage that runs from monitoring to draft and back to measurement:

- Monitor the prompts buyers are asking and the answers AI engines give.

- Identify visibility gaps — where the brand is missing, weak, or beaten by a competitor.

- Compare competitor citations to surface what is already working in the category.

- Produce prioritized recommendations from those gaps.

- Generate a full content piece from brand inputs, facts, and figures, optimized for AI citation, and copy it into a CMS.

- Review impact in the next tracking cycle, with each round refining recommendations.
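Step six only works if each cycle's results are comparable to the last. A minimal TypeScript sketch of that comparison, assuming each tracking cycle yields one outcome label per monitored prompt; the `CycleResult` shape and the choice to report citation rate and mention rate separately are illustrative, not HeyAmos's scoring, which its public pages do not document.

```typescript
type Outcome = "citation" | "mention" | "absent";

// Hypothetical shape: one tracking cycle maps each monitored prompt to the brand's outcome.
type CycleResult = Record<string, Outcome>;

interface CycleDelta {
  promptsCompared: number;
  citationRateBefore: number;
  citationRateAfter: number;
  mentionRateBefore: number;
  mentionRateAfter: number;
}

// Share of the given prompts whose outcome matches.
function rate(cycle: CycleResult, prompts: string[], outcome: Outcome): number {
  if (prompts.length === 0) return 0;
  return prompts.filter((p) => cycle[p] === outcome).length / prompts.length;
}

// Only prompts tracked in both cycles are compared, so adding or dropping prompts cannot fake a lift.
function compareCycles(before: CycleResult, after: CycleResult): CycleDelta {
  const shared = Object.keys(before).filter((p) => Object.prototype.hasOwnProperty.call(after, p));
  return {
    promptsCompared: shared.length,
    citationRateBefore: rate(before, shared, "citation"),
    citationRateAfter: rate(after, shared, "citation"),
    mentionRateBefore: rate(before, shared, "mention"),
    mentionRateAfter: rate(after, shared, "mention"),
  };
}

const lastCycle: CycleResult = {
  "best crm for startups": "mention",
  "crm pricing comparison": "absent",
  "ai crm tools": "citation",
  "crm migration checklist": "absent",
};
const thisCycle: CycleResult = {
  "best crm for startups": "citation",
  "crm pricing comparison": "mention",
  "ai crm tools": "citation",
  "crm migration checklist": "absent",
};
const delta = compareCycles(lastCycle, thisCycle);
console.log(delta.citationRateBefore, delta.citationRateAfter); // 0.25 0.5
```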

This is a visibility-to-draft workflow, not a headless publishing system. The output is a document you paste into your existing CMS — which is fine if you have a small number of sites and a human in the loop, and harder if you are an agency running content across dozens of client domains or a team operating on a headless stack.

If your loop currently ends at a recommendation document, the next gap is the publishing side: turning approved recommendations into citation-shaped pages that ChatGPT, Claude, Gemini, Grok, and Perplexity can actually pull from. That is where Mentionwell runs the other half of the loop. Onboard your domain to start shipping articles your monitoring tool can finally measure against.

The other honest gap: the public HeyAmos material does not document citation lift after publication, editorial acceptance criteria, fact-check handling, or how the Content Agent decides what makes the cut. For commercial buyers, those are the questions that separate "interesting recommendation engine" from "trustworthy publishing input."

How do you set up your site in Mentionwell?

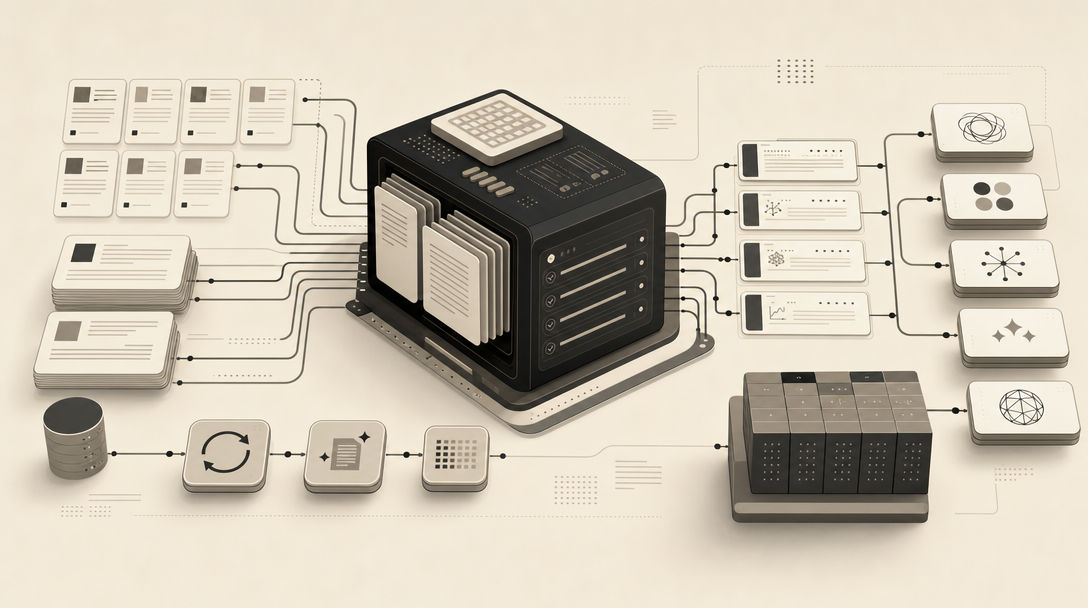

Setup in Mentionwell is operational, not conversational. According to the Mentionwell setup docs, onboarding a domain runs in four phases:

- Onboard the domain. Mentionwell crawls the homepage and sitemap and detects the framework, blog path, and CMS signals.

- Generate a starter site profile. This covers audience, voice, pitch rules, taxonomy, image style, CTA, and brand rules — the things every article writer on the platform reads before drafting.

- Run the article workflow. Articles move through statuses: proposed → approved → drafted → published. The docs say an approved draft typically lands in 60–90 seconds.

- Wire delivery. Choose one of four delivery modes (pull, push, commit, or shove) and connect the destination — WordPress, Webflow, a Next.js front-end, a Cloudflare Workers edge, Cloudflare R2-backed assets, or anywhere else you already publish.
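The four article statuses behave like a simple forward-only state machine. A minimal TypeScript sketch, assuming transitions only ever move one step forward; Mentionwell's docs list the statuses but not whether rejection, rollback, or skipping steps is allowed, so `canTransition` and `advance` are hypothetical helpers.

```typescript
// Statuses in the order the setup docs list them.
const STATUSES = ["proposed", "approved", "drafted", "published"] as const;
type ArticleStatus = (typeof STATUSES)[number];

// Assumption: a status may only move one step forward (no skips, no rollbacks).
function canTransition(from: ArticleStatus, to: ArticleStatus): boolean {
  return STATUSES.indexOf(to) === STATUSES.indexOf(from) + 1;
}

// Returns the next status, or throws once the article is already published.
function advance(current: ArticleStatus): ArticleStatus {
  const next: ArticleStatus | undefined = STATUSES[STATUSES.indexOf(current) + 1];
  if (next === undefined) {
    throw new Error(`"${current}" is the final status`);
  }
  return next;
}

let articleStatus: ArticleStatus = "proposed";
while (articleStatus !== "published") {
  articleStatus = advance(articleStatus);
  console.log(articleStatus); // approved, then drafted, then published
}
```

Keeping the order in one constant means a stricter or looser policy (for example, allowing a drafted article to return to approved) only changes `canTransition`.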

The implementation surface is intentionally narrow. According to Mentionwell's docs, every destination integration reads the same 3 environment variables and calls the same 2 endpoints — typically a markdown-source endpoint such as /post.md and an article list endpoint — exposed through the bearer-token-scoped reader API or the mentionwell-reader npm package.
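Because every integration reads the same three environment variables and calls the same two endpoints, a destination adapter can stay very small. The sketch below is hypothetical throughout: the source does not name the variables or give exact URL shapes, so `MENTIONWELL_API_BASE`, `MENTIONWELL_SITE_ID`, `MENTIONWELL_TOKEN`, and the `/sites/{id}/posts` and `/posts/{slug}/post.md` paths are placeholders, and it does not use the `mentionwell-reader` package's API. It only builds bearer-authenticated request descriptions.

```typescript
// Placeholder config: the real variable names are not given in the source.
interface ReaderConfig {
  apiBase: string;
  siteId: string;
  token: string;
}

interface ReaderRequest {
  url: string;
  headers: Record<string, string>;
}

// Reads the three (hypothetical) variables and fails fast if any is missing.
function readConfig(env: Record<string, string | undefined>): ReaderConfig {
  const apiBase = env["MENTIONWELL_API_BASE"];
  const siteId = env["MENTIONWELL_SITE_ID"];
  const token = env["MENTIONWELL_TOKEN"];
  if (!apiBase || !siteId || !token) {
    throw new Error("Missing MENTIONWELL_API_BASE, MENTIONWELL_SITE_ID, or MENTIONWELL_TOKEN");
  }
  return { apiBase: apiBase.replace(/\/+$/, ""), siteId, token }; // strip trailing slashes
}

// The token travels in a header, never in the URL, so it stays out of logs and caches keyed on URLs.
function authHeaders(config: ReaderConfig, accept: string): Record<string, string> {
  return { Authorization: `Bearer ${config.token}`, Accept: accept };
}

// Endpoint 1 (hypothetical path): the article list for one site.
function listRequest(config: ReaderConfig): ReaderRequest {
  return {
    url: `${config.apiBase}/sites/${encodeURIComponent(config.siteId)}/posts`,
    headers: authHeaders(config, "application/json"),
  };
}

// Endpoint 2 (hypothetical path): the markdown source of a single article.
function postMarkdownRequest(config: ReaderConfig, slug: string): ReaderRequest {
  const site = encodeURIComponent(config.siteId);
  return {
    url: `${config.apiBase}/sites/${site}/posts/${encodeURIComponent(slug)}/post.md`,
    headers: authHeaders(config, "text/markdown"),
  };
}
```

In a runtime with a global `fetch` (Node 18+, Cloudflare Workers, browsers), a call might look like `const req = postMarkdownRequest(readConfig(process.env), "my-post"); const markdown = await (await fetch(req.url, { headers: req.headers })).text();`.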

| Phase | What runs | What you get |
|---|---|---|
| Onboarding | Homepage + sitemap crawl | Detected framework, CMS signals, blog path |
| Profile | Brand profile generation | Audience, voice, pitch rules, taxonomy, CTA |
| Pipeline | 11-stage article run | Draft in ~60–90 seconds after approval |
| Delivery | One of 4 modes + reader API | Published article in your CMS or headless stack |

The point of the setup is not "write one article fast" — it is to make the same brand profile, taxonomy, and citation-shaped structure repeatable across every article and every site you operate. That is the part that matters for agencies, multi-tenant operators, and programmatic SEO teams.

What do GEO and AEO mean in practice?

AEO, GEO, LLMO, and SEO are four optimization surfaces, not synonyms. The decision-useful definitions:

- AEO (Answer Engine Optimization) targets the direct-answer surface: Google AI Overviews, Bing Copilot, Perplexity, voice assistants, and featured snippets. The job is to be the answer in the box at the top of the result.

- GEO (Generative Engine Optimization) targets the synthesized-answer surface: ChatGPT Search, Gemini, Perplexity, Grok, with Microsoft Copilot and Google AI Overviews relying heavily on the same signals. The term was coined in a 2023 Princeton paper. The job is to be one of the cited sources inside the model's written answer.

- LLMO (LLM Optimization) targets the underlying models and crawlers — GPTBot, ClaudeBot, PerplexityBot — at both training time (large-scale crawls) and retrieval time (RAG pipelines, browsing tools, agents). The job is to be reachable, parseable, and trustworthy enough to ingest.

- SEO remains the blue-link foundation. It is still the largest single source of traffic for most sites, and the structural signals — topical authority, fast load, semantic HTML, internal linking, schema — are also what make pages citable in AI surfaces.
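The LLMO requirement to be "reachable" is the easiest to check: if robots.txt blocks GPTBot, ClaudeBot, or PerplexityBot, nothing downstream matters. A minimal TypeScript sketch of a simplified robots.txt evaluator, loosely following RFC 9309's matching rules (a group naming the exact user-agent beats the `*` group, rules from matching groups are combined, the longest matching path prefix wins, and `Allow` wins a tie). It deliberately skips `*` and `$` wildcards inside paths and percent-encoding normalization, so treat it as a first-pass check, not a compliant parser.

```typescript
interface Rule {
  allow: boolean;
  path: string; // literal path prefix; wildcards are not supported in this sketch
}

interface Group {
  agents: string[]; // lower-cased user-agent tokens
  rules: Rule[];
}

// Splits robots.txt into groups: consecutive User-agent lines share the rules that follow them.
function parseRobots(text: string): Group[] {
  const groups: Group[] = [];
  let current: Group | null = null;
  let lastWasAgent = false;
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.replace(/#.*$/, "").trim(); // drop comments
    const colon = line.indexOf(":");
    if (colon === -1) continue;
    const key = line.slice(0, colon).trim().toLowerCase();
    const value = line.slice(colon + 1).trim();
    if (key === "user-agent") {
      const group: Group = current !== null && lastWasAgent ? current : { agents: [], rules: [] };
      if (group !== current) groups.push(group);
      group.agents.push(value.toLowerCase());
      current = group;
      lastWasAgent = true;
    } else {
      lastWasAgent = false;
      // An empty Disallow value means "no restriction", so it adds no rule.
      if ((key === "allow" || key === "disallow") && current !== null && value !== "") {
        current.rules.push({ allow: key === "allow", path: value });
      }
    }
  }
  return groups;
}

// Is `path` crawlable for `userAgent` (a product token such as "GPTBot")?
function isAllowed(robotsTxt: string, userAgent: string, path: string): boolean {
  const groups = parseRobots(robotsTxt);
  const token = userAgent.toLowerCase();
  // Groups naming the agent take precedence; otherwise fall back to "*".
  let matching = groups.filter((g) => g.agents.indexOf(token) !== -1);
  if (matching.length === 0) {
    matching = groups.filter((g) => g.agents.indexOf("*") !== -1);
  }
  let best: Rule | null = null;
  for (const group of matching) {
    for (const rule of group.rules) {
      if (!path.startsWith(rule.path)) continue;
      const longer = best === null || rule.path.length > best.path.length;
      const tieGoesToAllow = best !== null && rule.path.length === best.path.length && rule.allow;
      if (longer || tieGoesToAllow) best = rule;
    }
  }
  return best === null ? true : best.allow; // no matching rule means allowed
}

const exampleRobots = [
  "User-agent: *",
  "Disallow: /admin/",
  "",
  "User-agent: GPTBot",
  "Disallow: /",
  "Allow: /blog/",
].join("\n");

for (const bot of ["GPTBot", "ClaudeBot", "PerplexityBot"]) {
  console.log(bot, isAllowed(exampleRobots, bot, "/pricing")); // GPTBot false, ClaudeBot true, PerplexityBot true
}
```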

According to Mentionwell, AIO (AI Optimization) is not a separate channel; it is the umbrella over AEO + GEO + LLMO. If you are shipping for all three, you are already doing AIO.

How do AI answers get built: citations, mentions, and no-link mentions?

A citation links to the source, a mention names the brand without a link, and a no-link mention provides visibility with no obvious path back to the site. Those three outcome types are not interchangeable, and any AI-search tool that reports a single "visibility" number is collapsing them.

Three outcome types exist, as defined in the HeyAmos guide:

| Outcome type | Linked? | Click path? | Why it matters |
|---|---|---|---|
| Citation | Yes | Yes | Closest analog to a classic search result |
| Mention | No | No | Brand surface area without traffic |
| No-link mention | No | No | Visibility with no obvious path back |
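Keeping the three outcome types apart in reporting is mostly a matter of not summing them. A minimal TypeScript sketch, assuming each tracked answer has already been labeled by some tool or reviewer; `OutcomeType` and `outcomeShares` are hypothetical names. Each type gets its own share, and answers where the brand does not appear are counted as `absent` instead of being dropped from the denominator.

```typescript
type OutcomeType = "citation" | "mention" | "no-link mention" | "absent";

// Share of tracked answers per outcome type; shares are reported side by side, never summed.
function outcomeShares(outcomes: OutcomeType[]): Record<OutcomeType, number> {
  const counts: Record<OutcomeType, number> = {
    citation: 0,
    mention: 0,
    "no-link mention": 0,
    absent: 0,
  };
  for (const o of outcomes) counts[o] += 1;
  const total = outcomes.length;
  const shares = { ...counts };
  for (const key of Object.keys(counts) as OutcomeType[]) {
    shares[key] = total === 0 ? 0 : counts[key] / total;
  }
  return shares;
}

const weekly = outcomeShares([
  "citation", "mention", "mention", "no-link mention",
  "absent", "absent", "absent", "citation",
]);
console.log(weekly.citation, weekly.mention, weekly["no-link mention"], weekly.absent); // 0.25 0.25 0.125 0.375
```

A single "visibility" number built from the same data (0.625, the three non-absent shares added together) would hide that only 0.25 of answers carry a click path.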

The most consequential data point in the public AEO literature comes from a Yext analysis cited by HeyAmos. According to HeyAmos, the October 2025 Yext analysis examined 6.8 million citations across 1.6 million responses and found that 86% of AI citations came from sources brands own or manage: first-party sites and listings. Gemini's citations leaned especially heavily on brand websites, with 52.15% coming directly from them.
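A back-of-envelope pass over the figures quoted above makes the scale concrete. This is plain arithmetic on the reported totals, assuming the 86% applies to the full 6.8 million citations examined; it adds no data beyond what the analysis reported.

```latex
\begin{aligned}
0.86 \times 6.8\times10^{6} &\approx 5.85\times10^{6} \quad \text{citations from sources brands own or manage} \\
\frac{6.8\times10^{6}\ \text{citations}}{1.6\times10^{6}\ \text{responses}} &= 4.25 \quad \text{citations per response, on average}
\end{aligned}
```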

The operational implication is sharp: GEO is not primarily a link-building exercise. It is a first-party publishing problem. The pages on your own domain (how they are structured, how clearly they answer, how cleanly they are exposed to crawlers) are doing most of the work. That is the boundary where a monitoring tool stops and a content engine starts.

Is GEO built on top of SEO?

Yes. According to Mentionwell, GEO is built on top of SEO, not a replacement for it. The same signals that make a page rank in classic search — topical authority, fast load, semantic HTML, internal linking, schema, canonical URLs, sitemap.xml, RSS / JSON Feed, and Open Graph tags — also make it easier for AI systems to extract, attribute, and cite.
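Several of the signals in that list are per-page markup that is easy to emit consistently. A minimal TypeScript sketch that renders a canonical link and the core Open Graph tags for one article; the `ArticleMeta` shape and `renderHeadTags` are hypothetical, and the property names (`og:type`, `og:title`, `og:url`, and so on) come from the Open Graph protocol, not from either product.

```typescript
interface ArticleMeta {
  title: string;
  description: string;
  canonicalUrl: string; // absolute URL of the one preferred version of the page
  imageUrl?: string;
}

// Escapes the characters that would break out of a double-quoted HTML attribute.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

function renderHeadTags(meta: ArticleMeta): string {
  const tags = [
    `<link rel="canonical" href="${escapeAttr(meta.canonicalUrl)}">`,
    `<meta property="og:type" content="article">`,
    `<meta property="og:title" content="${escapeAttr(meta.title)}">`,
    `<meta property="og:description" content="${escapeAttr(meta.description)}">`,
    // og:url reuses the canonical URL so both signals point at the same page.
    `<meta property="og:url" content="${escapeAttr(meta.canonicalUrl)}">`,
  ];
  if (meta.imageUrl) {
    tags.push(`<meta property="og:image" content="${escapeAttr(meta.imageUrl)}">`);
  }
  return tags.join("\n");
}

console.log(
  renderHeadTags({
    title: "Is GEO built on top of SEO?",
    description: "Why the classic-SEO foundation is the substrate for AI citation.",
    canonicalUrl: "https://example.com/blog/geo-on-seo",
  }),
);
```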

That alignment is what makes the comparison between HeyAmos and Mentionwell meaningful rather than zero-sum. HeyAmos frames GEO and AEO as analytics disciplines: what to measure, what to fix, what to draft next. Mentionwell frames the same surfaces as a publishing discipline: every article ships with four layers — AEO, GEO, LLMO, and classic SEO — built into the pipeline, not bolted on.

Treating GEO as separate from SEO produces fragile content; treating them as a single ship-list produces pages that work in both surfaces. The classic-SEO foundation is not optional; it is the substrate.

Where does publishing happen: CMS copy-paste, API delivery, or crawler-readable endpoints?

This is the operational boundary between the two products, and it is the part most buyer comparisons skip. According to its homepage, HeyAmos generates a content piece you can copy and paste into a CMS. According to its features page, Mentionwell publishes into existing workflows through four delivery modes, a bearer-token-scoped reader API, the mentionwell-reader npm package, and markdown-source endpoints such as /post.md, plus llms.txt and llms-full.txt files and FAQPage, BlogPosting, and BreadcrumbList schema.
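For the schema items in that list, here is a sketch of what "schema on every article" can look like: JSON-LD for `BlogPosting` and `BreadcrumbList`, using property names from schema.org. The `PostInput` shape, the `buildJsonLd` helper, and the choice to emit both objects in one `@graph` are assumptions for illustration; this is not Mentionwell's generated output.

```typescript
interface PostInput {
  headline: string;
  url: string;
  datePublished: string; // ISO 8601, e.g. "2025-01-15"
  dateModified?: string;
  authorName: string;
  breadcrumbs: { name: string; url: string }[]; // ordered from site root to this post
}

function buildJsonLd(post: PostInput): string {
  const blogPosting = {
    "@type": "BlogPosting",
    headline: post.headline,
    url: post.url,
    mainEntityOfPage: post.url,
    datePublished: post.datePublished,
    // Without an explicit modification date, reuse the publish date.
    dateModified: post.dateModified ?? post.datePublished,
    author: { "@type": "Person", name: post.authorName },
  };
  const breadcrumbList = {
    "@type": "BreadcrumbList",
    itemListElement: post.breadcrumbs.map((crumb, i) => ({
      "@type": "ListItem",
      position: i + 1, // schema.org list positions are 1-based
      name: crumb.name,
      item: crumb.url,
    })),
  };
  const doc = { "@context": "https://schema.org", "@graph": [blogPosting, breadcrumbList] };
  // Escape "<" so text inside the JSON can never close the surrounding <script> tag early.
  const json = JSON.stringify(doc).replace(/</g, "\\u003c");
  return `<script type="application/ld+json">${json}</script>`;
}

console.log(
  buildJsonLd({
    headline: "Is GEO built on top of SEO?",
    url: "https://example.com/blog/geo-on-seo",
    datePublished: "2025-01-15",
    authorName: "Jane Doe",
    breadcrumbs: [
      { name: "Blog", url: "https://example.com/blog" },
      { name: "Is GEO built on top of SEO?", url: "https://example.com/blog/geo-on-seo" },
    ],
  }),
);
```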

| Capability | HeyAmos | Mentionwell |
|---|---|---|
| Generate a draft | Yes (Content Agent) | Yes (11-stage pipeline) |
| Copy into CMS | Yes (documented) | Yes (one of four modes) |
| API delivery | Not documented in source | Yes (reader API) |
| npm reader package | Not documented in source | Yes (mentionwell-reader) |
| Markdown-source endpoint (/post.md) | Not documented in source | Yes |
| llms.txt / llms-full.txt | Not documented in source | Yes |
| BlogPosting / BreadcrumbList / FAQPage schema | Not documented in source | Yes |
| Multi-site / multi-tenant brand profiles | Not documented in source | Yes |

For a single brand running a small WordPress blog, copy-paste is fine. For an agency operating brand-consistent content across many client domains, a headless team running Webflow plus a Next.js front-end on Cloudflare Workers, or a programmatic SEO operation refreshing thousands of archive pages, copy-paste is the bottleneck. Crawler-readable endpoints, schema-on-every-article, and a reader API are not luxuries at scale — they are the difference between a draft pile and a publishing engine.
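llms.txt is one of the crawler-readable endpoints named earlier, and it is simple to generate from an article index. A minimal TypeScript sketch following the format proposed at llmstxt.org (an H1 title, a blockquote summary, then H2 sections of markdown links with optional notes); the `IndexedArticle` shape and `buildLlmsTxt` are hypothetical, and this is not Mentionwell's generator.

```typescript
interface IndexedArticle {
  title: string;
  url: string; // ideally the markdown-source URL of the article
  summary?: string;
  section: string; // H2 heading to group under, e.g. "Guides"
}

function buildLlmsTxt(siteName: string, siteSummary: string, articles: IndexedArticle[]): string {
  const lines: string[] = [`# ${siteName}`, "", `> ${siteSummary}`];
  // Sections appear in the order they are first seen in the index.
  const sections: string[] = [];
  for (const a of articles) {
    if (sections.indexOf(a.section) === -1) sections.push(a.section);
  }
  for (const section of sections) {
    lines.push("", `## ${section}`, "");
    for (const a of articles.filter((x) => x.section === section)) {
      lines.push(`- [${a.title}](${a.url})${a.summary ? `: ${a.summary}` : ""}`);
    }
  }
  return lines.join("\n") + "\n";
}

console.log(
  buildLlmsTxt("Example Co", "Guides to AI search visibility.", [
    { title: "GEO basics", url: "https://example.com/blog/geo-basics.md", summary: "What GEO is", section: "Guides" },
    { title: "Glossary", url: "https://example.com/glossary.md", section: "Reference" },
  ]),
);
```

Regenerating the file from the same index that drives publishing keeps it in step with the archive as pages are refreshed, instead of drifting like a hand-maintained list.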

How should teams choose between HeyAmos and Mentionwell?

The choice is not "which product wins." It is "which loop are you trying to close right now?"

Choose HeyAmos when the immediate job is:

- Monitoring buyer prompts in ChatGPT, Gemini, and Perplexity.

- Reporting AI visibility — citations, mentions, no-link mentions — to leadership.

- Identifying competitor citation gaps and category-leader patterns.

- Producing prioritized recommendations from those gaps.

- Measuring change in the next tracking cycle after content ships.

Choose Mentionwell when the immediate job is:

- Producing citation-ready content with AEO, GEO, LLMO, and classic SEO in every article.

- Publishing into an existing CMS, headless stack, or multi-site operation through API delivery.

- Refreshing archives so older pages stay parseable to crawlers.

- Running programmatic SEO and glossary-style coverage with brand-consistent guardrails.

- Operating across many domains with site-specific profiles, taxonomies, and CTAs.

Use both layers together when measurement needs to feed a publishing engine — HeyAmos surfaces what to write, Mentionwell ships it in a form ChatGPT, Claude, Gemini, Grok, and Perplexity can cite.

If you are still mapping the broader category, several adjacent comparisons sharpen the trade-offs further:

- Peec AI tracks the score, Mentionwell changes it: prompt monitoring versus governed publishing.

- Ahrefs Brand Radar at $828/month: the cost of a monitoring-only stack.

- The Semrush AI Visibility Toolkit comparison: the line-item-versus-engine framing.

- Scrunch as a command center.

- Profound's billion-dollar valuation against the publishing gap.

- AthenaHQ's recommendation boundary.

- The foundational AEO vs GEO vs LLMO workflow guide.

If your loop currently ends at a recommendation document, the next step is shipping the pages those recommendations point to. Mentionwell runs the publishing side of that loop across one site or hundreds. Onboard your domain to start producing citation-shaped articles your monitoring tools can finally measure.

Sources

- HeyAmos – Track & Improve Visibility on AI Search, www.heyamos.com