What Is OtterlyAI, and How Does It Work?

OtterlyAI is an AI search monitoring and optimization platform that tracks how a brand appears — or fails to appear — when users ask questions in ChatGPT, Google AI Overviews, Google AI Mode, Perplexity, Gemini, and Microsoft Copilot. It is not Otter.ai, the meeting transcription tool. The two products share a similar name and nothing else.

The platform works by sending prompts to those engines daily as a neutral, non-personalized user, then aggregating which brands get cited, mentioned, or linked. During setup, OtterlyAI builds a Brand Report that connects prompts to your brand and competitors and tracks visibility over time (Source: Otterly help docs). It also runs a GEO Audit that checks whether AI engines can crawl and read your site, returning a checklist of fixes.
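To make the aggregation step concrete, here is a minimal sketch that turns per-answer records into a per-brand visibility rate. It assumes each engine's answer to a tracked prompt is stored as a record listing the brands it mentions. `AnswerRecord`, `visibility_share`, and the example brands are hypothetical. This is not Otterly's documented scoring method; it only illustrates the general idea.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One engine's answer to one tracked prompt (hypothetical schema)."""
    prompt: str
    engine: str
    brands_mentioned: set[str] = field(default_factory=set)


def visibility_share(records: list[AnswerRecord]) -> dict[str, float]:
    """Share of answers that mention each brand at least once."""
    if not records:
        return {}
    counts: Counter[str] = Counter()
    for record in records:
        # brands_mentioned is a set, so a brand counts once per answer.
        counts.update(record.brands_mentioned)
    # Sort by brand name so the output order is deterministic.
    return {brand: counts[brand] / len(records) for brand in sorted(counts)}


records = [
    AnswerRecord("best crm for startups", "chatgpt", {"AcmeCRM", "RivalCRM"}),
    AnswerRecord("best crm for startups", "perplexity", {"RivalCRM"}),
    AnswerRecord("acmecrm vs rivalcrm", "gemini", {"AcmeCRM", "RivalCRM"}),
    AnswerRecord("crm with email sync", "copilot", set()),
]
print(visibility_share(records))  # {'AcmeCRM': 0.5, 'RivalCRM': 0.75}
```

Counting each brand once per answer keeps a single verbose response from inflating a brand's share.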

OtterlyAI tells you where your brand shows up in AI answers; it does not produce the pages those answers cite. That distinction defines the entire buyer decision around it. According to AI Search Tools, the platform launched in 2024 and now reports 20,000+ marketing professionals using it, with a Gartner Cool Vendor 2025 mention.

The implication is straightforward. Monitoring is the diagnostic layer. Citation outcomes — the actual goal of AEO, GEO, LLMO, and SEO work — require an execution workflow that takes Otterly's signals and converts them into published, citation-shaped pages. Everything that follows in this article evaluates Otterly through that lens: a measurement tool that benefits from being paired with a publishing engine.

Is Otterly.AI the Best $29 Entry-Level AI Visibility Tool?

For teams running their first GEO monitoring program on a tight budget, Otterly.AI's $29/month Lite plan is one of the strongest entry points on the market today. Setup is fast, daily tracking is included on every tier, and the interface is built for non-specialists. Gauge calls it "a solid entry point for AI visibility monitoring" specifically because of those three properties.

According to AI Search Tools, Otterly is used by 20,000+ marketing professionals and received a Gartner Cool Vendor 2025 mention — signals that the entry-level positioning has produced real adoption inside its second year on the market.

But "best $29 tool" is not the same as "best tool." Qualify the claim:

- Best for: small SaaS teams, founders, and consultants validating whether their brand appears in AI answers at all.

- Not best for: teams tracking dozens of buying-stage prompts, agencies running multiple client domains, or operators who need content briefs and publishing alongside measurement.

The "best" framing only holds inside the monitoring category, and only at the entry tier. Once you start asking the platform to drive content decisions, the limits show up quickly — which is what the next three sections work through.

How Much Does Otterly.AI Cost, and Where Do Prompt Caps Start to Bite?

Otterly.AI offers three plans, and the prompt allowance — not the price — is the decision variable.

| Plan | Monthly price | Prompts tracked | Cost per prompt (per month) |
|---|---|---|---|
| Lite | $29 | 15 | ~$1.93 |
| Standard | $189 | 100 | ~$1.89 |
| Premium | $489 | 400 | ~$1.22 |

Source: Gauge's Otterly alternatives breakdown.
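The cost-per-prompt column and the Lite-to-Standard multiple are simple division. This sketch recomputes them from the table's figures. These are Gauge's numbers, which may have changed since publication, and the function names are illustrative.

```python
# Plan figures from the pricing table above (source: Gauge).
# Re-check current vendor pricing before budgeting with them.
PLANS = {
    "Lite": (29, 15),  # (monthly price in USD, prompts tracked)
    "Standard": (189, 100),
    "Premium": (489, 400),
}


def cost_per_prompt(plan: str) -> float:
    """Monthly plan price divided by the number of tracked prompts."""
    price, prompts = PLANS[plan]
    return price / prompts


def price_multiple(from_plan: str, to_plan: str) -> float:
    """Ratio of the target plan's monthly price to the starting plan's."""
    return PLANS[to_plan][0] / PLANS[from_plan][0]


for name in PLANS:
    print(f"{name}: ${cost_per_prompt(name):.2f} per prompt per month")
print(f"Lite -> Standard: {price_multiple('Lite', 'Standard'):.1f}x")
```

It prints $1.93, $1.89, and $1.22 per prompt and a 6.5x step from Lite to Standard, matching the figures in this section.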

Fifteen prompts is enough to test brand visibility, not enough to run a real GEO program. A typical B2B SaaS team needs prompt coverage across at least four dimensions: branded queries, category queries, competitor comparisons, and buying-stage questions. Even a focused vertical SaaS with three personas blows past 15 prompts the moment you add one comparison set per competitor.
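A rough way to size the prompt list before choosing a plan is to count prompts per dimension and per persona. The function names and every input number below are hypothetical; only the caps come from the pricing table. The example inputs are deliberately small: three branded prompts, four category prompts, one comparison for each of three competitors, and two buying-stage questions for each of three personas.

```python
from typing import Optional

# Prompt caps from the pricing table above (source: Gauge), cheapest first.
PLAN_CAPS = [("Lite", 15), ("Standard", 100), ("Premium", 400)]


def estimate_prompts(branded: int, category: int, competitors: int,
                     comparisons_per_competitor: int, personas: int,
                     buying_stage_per_persona: int) -> int:
    """Rough prompt count across the four coverage dimensions (illustrative)."""
    return (branded
            + category
            + competitors * comparisons_per_competitor
            + personas * buying_stage_per_persona)


def smallest_plan(prompt_count: int) -> Optional[str]:
    """Cheapest plan whose cap covers the count, or None if none does."""
    for name, cap in PLAN_CAPS:
        if prompt_count <= cap:
            return name
    return None


# Hypothetical inputs for a small vertical SaaS.
count = estimate_prompts(branded=3, category=4, competitors=3,
                         comparisons_per_competitor=1, personas=3,
                         buying_stage_per_persona=2)
print(count, smallest_plan(count))  # 16 Standard
```

Even these modest inputs produce 16 prompts, one over the Lite cap. That is the pattern described above.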

The jump from Lite to Standard is the operational pinch point. GEOly identifies the $29 to $189 step as one of the most cited Otterly pain points: there is no middle tier for teams that need 30–60 prompts. You either stay constrained on Lite or pay 6.5x for capacity you may not yet need.

The practical impact: budget Lite for diagnosis (4–8 weeks), Standard for a single brand running a maturing GEO program, and Premium for teams that have already decided AI visibility is a permanent line item. Anything beyond Premium-scale tracking, especially across multiple clients or domains, is where Otterly stops being the right tool.

Which AI Engines Does OtterlyAI Track?

OtterlyAI's documentation states it queries six engines daily: ChatGPT, Google AI Overviews, Google AI Mode, Perplexity, Gemini, and Microsoft Copilot. That is the public answer. The buyer answer is more complicated.

According to Gauge, Google AI Mode and Gemini coverage may require add-on purchases on Otterly's standard plans, creating extra cost and friction for teams that need full multi-engine visibility out of the box. The vendor doc and the third-party review do not fully agree, which means engine coverage is a verification item, not an assumption.

Three buyer questions to resolve before purchase:

- Which engines actually drive traffic and influence in your market — ChatGPT and Perplexity for technical buyers, Google AI Overviews for high-volume informational queries, Copilot for enterprise Microsoft accounts?

- Which of those engines are included by default on the plan you are considering?

- What is the add-on cost per engine, and does it scale with prompt volume?

If your priority engines fall outside the default coverage, the effective monthly cost is higher than the headline price. Build the real number before comparing Otterly to alternatives.
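A sketch of that calculation, assuming a flat monthly fee for each priority engine that the plan does not include. The add-on prices and inclusion sets below are placeholders, not Otterly's rates or plan contents, which should come from a sales quote. The engine identifiers are hypothetical labels. If add-ons scale with prompt volume, the fee would need to be multiplied accordingly.

```python
from collections.abc import Mapping


def effective_monthly_cost(base_price: float,
                           priority_engines: set[str],
                           included_engines: set[str],
                           addon_price: Mapping[str, float]) -> float:
    """Base plan price plus a flat add-on fee for each priority engine not included."""
    missing = priority_engines - included_engines
    unpriced = sorted(e for e in missing if e not in addon_price)
    if unpriced:
        # Fail loudly instead of silently under-counting the real cost.
        raise ValueError(f"No add-on price recorded for: {unpriced}")
    return base_price + sum(addon_price[e] for e in missing)


# Placeholder figures: replace with the plan's actual inclusions and quoted prices.
included = {"chatgpt", "google_ai_overviews", "perplexity", "copilot"}
priorities = {"chatgpt", "perplexity", "gemini", "google_ai_mode"}
addons = {"gemini": 20.0, "google_ai_mode": 20.0}  # hypothetical, not Otterly's rates

print(effective_monthly_cost(189, priorities, included, addons))  # 229.0
```

Raising an error for an unpriced engine is a deliberate choice. A silent zero would reproduce the exact mistake this section warns about.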

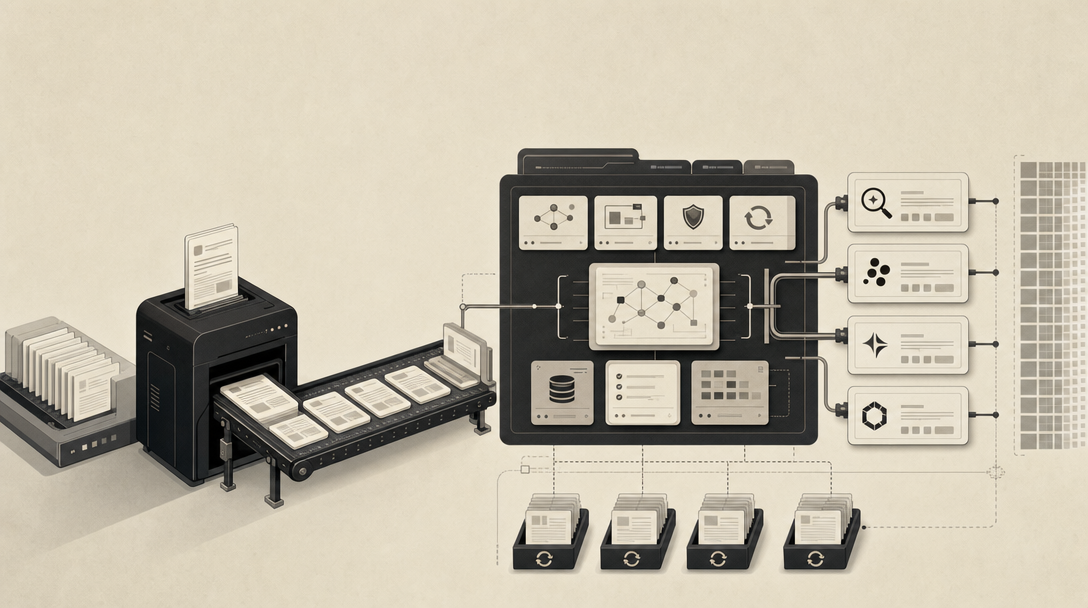

What Does Otterly's GEO Audit Check — and What Doesn't It Execute?

Otterly's GEO Audit is a crawlability and AI-readiness checklist. It checks whether AI engines can access your site, parse your content, and extract usable answers. The output is a list of technical and structural improvements: robots access, schema, content structure, llms.txt presence, and similar signals.

That is genuinely useful — and genuinely incomplete.

Otterly shows you the problem without giving you the tools to fix it. It is a rearview mirror, not a GPS. (Source: AI Search Tools)

The GEO Audit can tell you a page is poorly structured for AI extraction. It cannot rewrite the page, generate a citation-shaped brief, or publish a replacement to your CMS. According to Gauge, Otterly's monitoring-first workflow surfaces reports and trends but offers little guidance on why visibility changed, where competitors are winning specific prompts, or what content to create next. AI Search Tools reaches a similar conclusion: Otterly delivers visibility tracking, competitive benchmarking, automated weekly reports, and sentiment analysis, but leaves users to determine how to fix visibility gaps themselves.

What the GEO Audit does well:

- Flags crawl access problems for OAI-SearchBot, PerplexityBot, GoogleOther, and similar agents.

- Identifies missing or weak structured data.

- Highlights pages that lack the direct-answer structure AI engines extract from.
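The crawl-access check in the first bullet can be approximated locally with Python's standard-library `urllib.robotparser`. This sketch assumes robots.txt is the only barrier. It does not see CDN, firewall, or bot-management blocks. Also, `robotparser` applies the first matching rule in file order rather than the longest-match precedence RFC 9309 specifies. Treat the result as a first pass, not a replacement for the audit. The robots.txt content and URL are made-up examples.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the audit list above.
AI_AGENTS = ("OAI-SearchBot", "PerplexityBot", "GoogleOther")


def crawl_access(robots_txt: str, url: str,
                 agents: tuple[str, ...] = AI_AGENTS) -> dict[str, bool]:
    """Map each agent to whether this robots.txt lets it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the file as read
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Example file: blocks OAI-SearchBot entirely, PerplexityBot only from /drafts/.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Disallow: /

User-agent: PerplexityBot
Disallow: /drafts/

User-agent: *
Disallow:
"""

print(crawl_access(ROBOTS_TXT, "https://example.com/blog/what-is-geo"))
# {'OAI-SearchBot': False, 'PerplexityBot': True, 'GoogleOther': True}
```

GoogleOther matches no named group, so it falls through to the `*` group, whose empty `Disallow:` allows everything.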

What it does not do:

- Diagnose why a competitor wins a specific prompt.

- Generate AEO, GEO, or LLMO content briefs.

- Publish to your CMS or refresh stale archive pages.

- Govern multi-site editorial workflows.

The GEO Audit is the start of an execution plan, not a substitute for one. The gap between "here is what's broken" and "here is the published page that earns the citation" is where most teams stall.

I Can See Whether I'm Mentioned, So How Do I Improve It?

To improve AI citations after Otterly flags a gap, you have to publish the page the engine would actually cite — which means converting visibility reports into a research-backed brief, shipping it to your CMS, validating crawl access, and re-testing the prompt. Monitoring tools do not do this; they tell you the gap exists.

The procedural bridge from Otterly's reports to citation outcomes looks like this:

- Group prompts by buying intent. Separate top-of-funnel category questions, mid-funnel comparison queries, and bottom-funnel branded or evaluation prompts. Citation strategies differ by stage.

- Identify competitor citation patterns. For each prompt where a competitor is cited and you are not, capture which page the engine cited and why — direct-answer structure, schema, freshness, or domain authority.

- Map missing source pages. Many citation gaps are not optimization problems; they are coverage problems. The page the AI engine would cite simply does not exist on your site.

- Write citation-shaped briefs. Each brief should specify direct-answer openings, named entities, attributed statistics, numbered process steps, and the schema the page needs. This is the AEO, GEO, LLMO, and SEO layer working together — not separately.

- Publish to your CMS or headless stack. A brief that sits in a Google Doc does not earn citations. Publishing velocity is the rate-limiter for most teams.

- Validate crawl access. Confirm OAI-SearchBot, PerplexityBot, and GoogleOther can reach the new page. Re-run the GEO Audit if needed.

- Re-test AI answers. Wait 7–30 days, then re-query Otterly's tracked prompts to see whether the new page is cited.

- Refresh archive pages. Existing content with weak AI signals often outranks new pages once updated for direct-answer structure and entity clarity.
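The first three steps (group by intent, find competitor citations, map missing pages) reduce to a filter over tracked results. Here is a minimal sketch. It assumes you can export each tracked prompt with a funnel-stage label and the set of domains the AI answer cited. The `PromptResult` shape, the stage labels, and all domains are hypothetical, not an Otterly export format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptResult:
    """One tracked prompt and the domains its AI answer cited (hypothetical export)."""
    prompt: str
    stage: str                     # funnel stage label: "top", "mid", or "bottom"
    cited_domains: frozenset[str]


def citation_gaps(results: list[PromptResult], own_domain: str,
                  competitor_domains: set[str]) -> dict[str, list[tuple[str, list[str]]]]:
    """Prompts where a competitor is cited and we are not, grouped by stage."""
    gaps: dict[str, list[tuple[str, list[str]]]] = defaultdict(list)
    for result in results:
        competitor_hits = result.cited_domains & competitor_domains
        if competitor_hits and own_domain not in result.cited_domains:
            # Keep the winning competitor domains so their cited pages can be studied.
            gaps[result.stage].append((result.prompt, sorted(competitor_hits)))
    return dict(gaps)


results = [
    PromptResult("what is generative engine optimization", "top",
                 frozenset({"rival-a.example", "encyclopedia.example"})),
    PromptResult("ourbrand vs rival-a", "mid",
                 frozenset({"ourbrand.example", "rival-a.example"})),
    PromptResult("best geo platform for agencies", "mid",
                 frozenset({"rival-b.example"})),
    PromptResult("ourbrand pricing", "bottom", frozenset({"ourbrand.example"})),
]
gaps = citation_gaps(results, "ourbrand.example", {"rival-a.example", "rival-b.example"})
for stage, items in gaps.items():
    print(stage, items)
```

In this example the head-to-head comparison is not a gap because ourbrand.example is already cited. The top-funnel definition prompt and the agency comparison are gaps. Each gap is a candidate for a new or refreshed page.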

This is where Mentionwell fits. Otterly tells you the prompt where you are missing. Mentionwell, working from your site profile, ships the citation-shaped page that closes the gap — across one site or hundreds — and refreshes the archive pages that need to be updated to earn or hold citations. The tools are complementary: monitoring on one side, publishing on the other.

For teams building this loop from scratch, three internal references will help calibrate the workflow: "AEO vs GEO vs LLMO: Which Workflow Fits Your Team?", "How to Show Up in ChatGPT in 2026", and "How to Show Up in Google AI Overviews in 2026".

When Do Teams Outgrow Otterly.AI, and Is 90 Days Evidence-Based?

The 90-day line is an operator heuristic, not a measured benchmark: none of the available sources establish a specific timeline. What the sources do document is a clear set of graduation signals that, in practice, most growing teams hit within roughly a quarter.

You have outgrown Otterly when monitoring data starts arriving faster than you can act on it. That is the real graduation signal, and it shows up differently for each operator type.

| Team type | Graduation signal | What's needed next |
|---|---|---|
| B2B SaaS, single product | Prompt count exceeds 100; competitor wins need explanation, not just detection | Content briefs, citation-gap diagnosis, CMS publishing |
| Agency / multi-client | Managing 3+ client domains; brand-voice governance per site | Multi-site editorial pipeline, programmatic templates |
| Enterprise / multi-brand | Tracking 200+ prompts across categories and personas | Archive refreshes, headless delivery, source-of-truth governance |
| Programmatic SEO operator | Glossary or template-driven publishing across hundreds of pages | Editorial controls, citation-shaped templates, bulk QA |

According to AI Search Tools, the specific outgrowth conditions include exceeding prompt caps, needing to manage multiple clients, requiring competitor citation explanations, and needing content briefs alongside measurement. None of those map to a calendar — they map to operational maturity.

A practical reframe: 90 days is roughly how long it takes a competent team to move from "we should monitor AI visibility" to "we have a list of 40+ prompts where we're losing citations and no clear publishing workflow." That is the moment monitoring alone stops paying for itself.

What Is the Best Otterly.AI Alternative If You Need Publishing, Not More Monitoring?

The right framing is not "which monitoring tool replaces Otterly" but "what kind of system do I need next?" Three paths:

- Stay with Otterly. You are at Lite or early Standard, your prompt list is stable, and your team has bandwidth to translate reports into content manually. Otterly is the right tool.

- Pair Otterly with an execution layer. You like Otterly's measurement but need a publishing engine that converts citation gaps into shipped pages. Keep Otterly. Add a content engine.

- Replace Otterly with a tool that handles both. You want a single workflow covering AEO, GEO, LLMO, and SEO end-to-end. ZipTie frames this clearly: a monitoring tool plus an optimization tool gives full coverage, while a single tool is sufficient mainly when starting out or budget-constrained.

Mentionwell sits in the execution layer. It is a blog engine, not a monitoring dashboard. Onboarding captures your site profile — audience, tone, pitch rules, blocked competitors, CTA placement — and the pipeline runs research-grounded drafts through AEO, GEO, LLMO, and SEO checks before publishing into your existing CMS or headless stack. It supports programmatic templates, archive refreshes, and multi-site governance for agencies running content across many domains.

The decision is operational, not feature-based. If your bottleneck is "we don't know where we're invisible," monitoring tools solve that. If your bottleneck is "we know where we're invisible and can't publish fast enough to fix it," the answer is a content engine.

How Should Buyers Choose After the First 90 Days?

Use this checklist to decide whether to stay, add, or switch after a quarter with Otterly:

Capacity and coverage:

- Are you within your prompt cap, or are you actively pruning the list to fit?

- Do your priority AI engines (ChatGPT, Google AI Overviews, Gemini, Perplexity, Copilot) come included, or are you paying for add-ons?

- Can the tool segment prompts by buying stage, persona, and geography?

Diagnosis and execution:

- When a competitor wins a citation, can the tool tell you why?

- Does the GEO Audit produce briefs, or only a checklist?

- Is there a path from "missing citation" to "published page" inside the workflow?

Operations:

- Does the tool publish into your CMS or headless stack, or stop at reporting?

- Can it refresh existing archive pages to recover lost citations?

- Does it govern multi-site or multi-client work without sacrificing brand voice?

The decision model:

- Stay if you are on Lite or early Standard, single-brand, and your team has the editorial capacity to translate Otterly reports into shipped content manually.

- Add an execution layer if Otterly's measurement is working but your publishing velocity cannot keep up with the gap list — this is where most teams land at the 90-day mark.

- Switch to a content engine if you need AEO, GEO, LLMO, and SEO unified across one site or many, with publishing, refreshes, and governance built in.
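The stay / add / switch model can be written as a small rule function to make the precedence explicit. This is one possible encoding, and the ordering is an assumption. A need for a unified pipeline is checked first. "Stay" requires every stay condition. Everything else falls through to "add". The parameter names are hypothetical, and "early Standard" is simplified to the plan name.

```python
def next_step(plan: str, single_brand: bool, publishing_keeps_up: bool,
              needs_unified_pipeline: bool) -> str:
    """Return "stay", "add", or "switch" (one illustrative encoding of the model)."""
    # Switch: AEO, GEO, LLMO, and SEO must run in one pipeline with publishing built in.
    if needs_unified_pipeline:
        return "switch"
    # Stay: entry or early-Standard tier, one brand, and the team ships fixes manually.
    if plan in {"Lite", "Standard"} and single_brand and publishing_keeps_up:
        return "stay"
    # Add: keep Otterly for measurement and add an execution layer.
    return "add"


print(next_step("Lite", single_brand=True, publishing_keeps_up=True,
                needs_unified_pipeline=False))      # stay
print(next_step("Standard", single_brand=True, publishing_keeps_up=False,
                needs_unified_pipeline=False))      # add
print(next_step("Premium", single_brand=False, publishing_keeps_up=False,
                needs_unified_pipeline=True))       # switch
```

Making "add" the fallback mirrors the observation that this is where most teams land at the 90-day mark.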

If your team falls into "add" or "switch," Mentionwell is built specifically for the execution side of that decision: a blog engine that turns citation gaps into citation-shaped pages, across one domain or a portfolio. Choose "Get My Site GEO Optimized" to see how the publishing pipeline maps to the prompts you are already tracking.

Sources

- 7 Best AI Optimization Tools in 2026 (Reviewed). www.erlin.ai

- Best AI Visibility Tools in 2026 (Ranked & Reviewed). Connor Gillivan, connorgillivan.com

- 7 Best Scrunch AI Alternatives to Try in 2026. Rankshift, www.rankshift.ai

- Otterly.AI: Affordable AI visibility monitoring. AI Search Tools, ai-search-tools.com

- 7 Best Otterly Alternatives in 2026: Ranked & Compared. Gauge, www.withgauge.com